This site is being phased out.

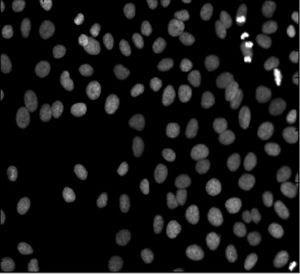

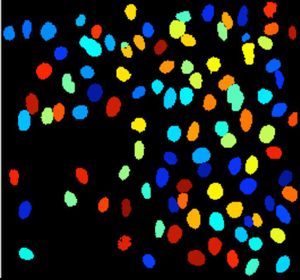

Image segmentation

“Image segmentation is partitioning a digital image into multiple regions”.

This is the first sentence in the Wikipedia article. It suffers from a few very serious flaws.

First, what does “partitioning” mean? A partition is a representation of something as the union of non-overlapping pieces. Then partitioning is a way of obtaining a partition. The part about the regions not overlapping each other is missing elsewhere in the article: “The result of image segmentation is a set of regions that collectively cover the entire image” (second paragraph).

Then, is image segmentation a process (partitioning) or the output of that process? The description clearly suggests the former. This approach emphasizes the “how” over the “what”, the process over the end result (see also Overview). That suggests human involvement in the process that is supposed to be objective and reproducible.

Of course, the regions don't have to be “multiple”. The image may be blank or contain a single object.

And the image does not have to be “digital”. Segmentation of analogue images makes perfect sense. (In fact, we can assume that the world is "analog", see Continuous vs discrete.)

Next, a segmentation is a result of partitioning but not every partitioning results in a segmentation. A segmentation is supposed to have something to do with the content of the image. Then, a slightly better “definition” I could suggest is this:

A segmentation of an image is a partition of the image that reveals some of its content.

Keep in mind that the background is a very special element of the partition. It shouldn’t count as an object…

Another issue is with the output of the analysis. The third sentence in the article is “Image segmentation is typically used to locate objects and boundaries (lines, curves, etc.) in images.” It is clear that “boundaries” should be read “their boundaries” here - boundaries of the objects. The image does not contain boundaries – it contains objects... and objects have boundaries. (A boundary without an object is like Cheshire Cat’s grin.) This is not an academic issue, see below.

Once the object is found, finding its boundary is an easy exercise. This does not work the other way around. The article says: “The result of image segmentation [may be] a set of contours extracted from the image.” But contours are simply level curves of some function. They don’t have to be closed (like a circle). If a curve isn’t closed, it does not enclose anything – it’s a boundary without an object!

More generally, searching for boundaries instead of objects is called edge detection. In the presence of noise, one ends up with just a bunch of pixels – not even curves...

Compare to section “Boundary Lines” in Image Processing Handbook by Russ: "One of the shortcomings of thresholding is that the pixels are selected primarily by brightness, and only secondarily by location. This means that there is no requirement for regions to be continuous... Automatic edge following suffers from several problems... [A] line of pixels may be broken or incomplete... or may branch... [T]he inability of the fully automatic ridge-following method to track the boundaries has been supplemented by a manually assisted technique."

Another reason to avoid the language of “contours”, “edges”, etc is that it limits you to 2D images.

See also Algebraic topology and digital image analysis.

Segmentation methods:

- Binary watershed

- Labeling

- Gray scale watershed

- Edge detection

- Blob detection

- Level sets method

- Graph representation of gray scale images

See also Books on computer vision.

Pixcavator carries out a special kind of image segmentation. You can see numerous examples here. To experiment with the concepts, download the free Pixcavator Student Edition.