Functions in higher dimensions

Contents

- 1 Multiple variables, multiple dimensions

- 2 Euclidean spaces and Cartesian systems of dimensions $1$, $2$, $3$,...

- 3 Geometry of distances

- 4 Sequences and topology in ${\bf R}^n$

- 5 The coordinate-wise treatment of sequences

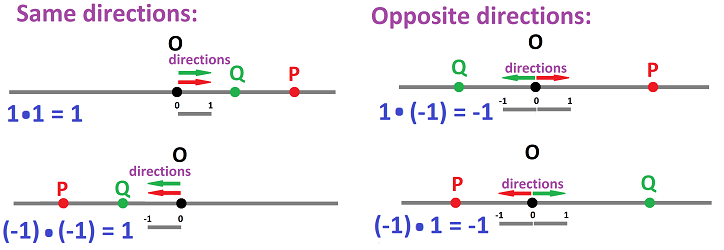

- 6 Where vectors come from

- 7 Algebra of vectors

- 8 Components of vectors

- 9 Lengths of vectors

- 10 Parametric curves

- 11 Partitions of the Euclidean space

- 12 Discrete forms

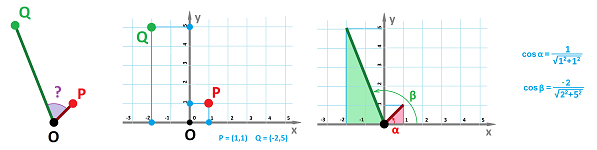

- 13 Angles between vectors and the dot product

- 14 Projections and decompositions of vectors

- 15 Sequences of vectors and their limits

Multiple variables, multiple dimensions

Why do we need to study multidimensional spaces?

These are the three main sources of multiple dimensions:

- the physical space, dimension $3$,

- abstract spaces designed to accommodate multiple variables (such as the stock and the commodity prices), and

- multiple spaces of single dimension interconnected via functional relations.

We will start with the last item. Even though in the early calculus (Chapters 7-13) we deal only with numbers, the graph of a function of one variable lies in the $xy$-plane, a space of dimension $2$.

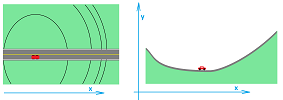

Let's furthermore recall the following problem from Chapter 8. A car is driven through a mountain terrain. Its location and its speed, as seen on the map, are known. The grade of the road is also known. How fast is the car climbing?

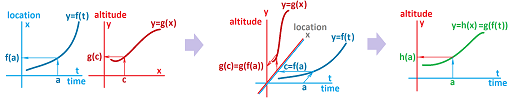

The first variable is time, $t$. We also have two spatial variables: the horizontal location $x$ and the elevation (the vertical location) $z$. Then $z$ depends on $x$ and $x$ depends on $t$. Therefore, $z$ depends on $t$ via the composition: $$t\ \longrightarrow\ x\ \longrightarrow\ z.$$

As we can see, we have to consider the Cartesian space of dimension $3$ in order to understand what is going on.

Furthermore, these are the two observations that we made:

- 1. your vertical speed, $\frac{\Delta z}{\Delta t}$ or $\frac{dz}{dt}$, is proportional to your horizontal speed, $\frac{\Delta x}{\Delta t}$ or $\frac{dx}{dt}$;

- 2. your vertical speed, $\frac{\Delta z}{\Delta t}$ or $\frac{dz}{dt}$, is proportional to the slope of the road, $\frac{\Delta z}{\Delta x}$ or $\frac{dz}{dx}$.

This led us to discover the Chain Rule: $$\frac{\Delta z}{\Delta t}=\frac{\Delta z}{\Delta x}\frac{\Delta x}{\Delta t} \text{ or } \frac{dz}{dt}=\frac{dz}{dx}\frac{dx}{dt}.$$ These aren't locations anymore but vectors.

Now, the road in this example is straight! More realistically, it should have turns and curves...

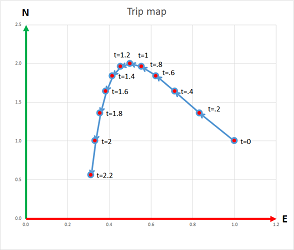

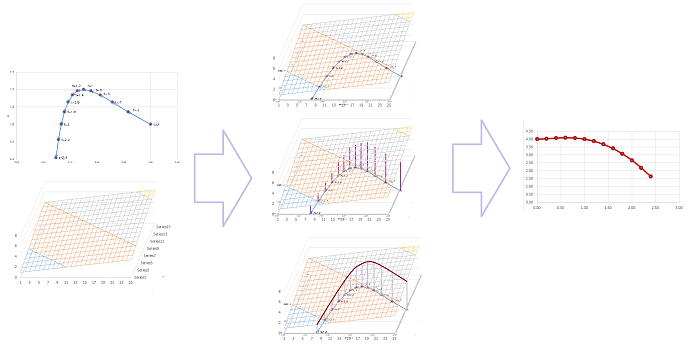

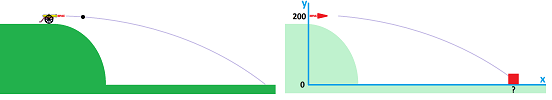

Planning a road trip, we create a trip plan: the times and the places put on a simple automotive map:

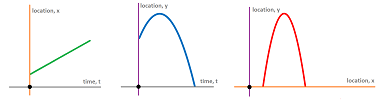

This is a parametric curve: $$x=f(t),\ y=g(t),$$ with $x$ and $y$ providing the coordinates of your location. Conversely, motion in time is a go-to metaphor for parametric curves!

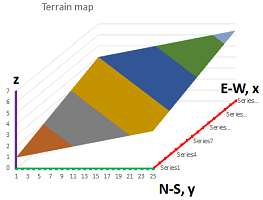

We then bring the terrain map of the area:

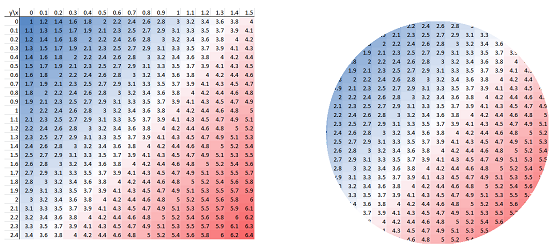

Such a topographic map has the colors indicating the elevation:

This is a function of two variables: $$z=f(x,y).$$ Conversely, a terrain map is a go-to metaphor for functions of two variables!

Now, back to the same question: how fast will we be climbing?

We realize that we face new kinds of functions and a new kind of composition: $$\begin{array}{|ccccc|} \hline &\text{trip map} & & \bigg|\\ \hline t&\longrightarrow & (x,y) &\longrightarrow & z\\ \hline &\bigg| & &\text{terrain map}\\ \hline \end{array}$$ Both functions deal with $3$ variables at the same time with a total of $4$! Furthermore, planning a flight of a plane would require $3$ spatial variables in addition to time. The number increases to $6$ if we are to consider the orientation of the plane in the air: the roll, the pitch, and the yaw. In the meantime, there are many functions of the $3$ variables of location: the temperature and the pressure of the air or water, the humidity, the concentration of a particular chemical, etc.

The observations about the rates of change are still applicable:

- If we double our horizontal speed (with the same terrain), the climb will be twice as fast.

- If we double the steepness of the terrain (with the same horizontal speed), the climb will be twice as fast.

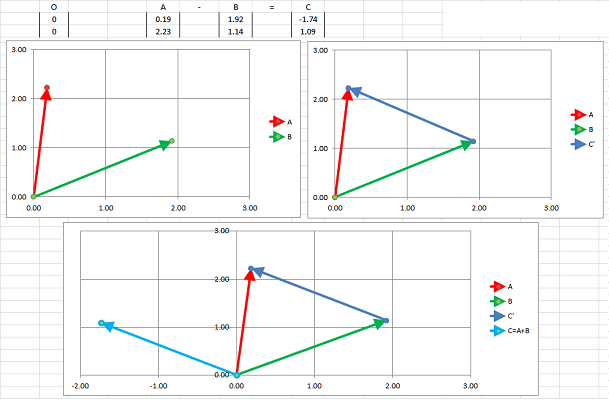

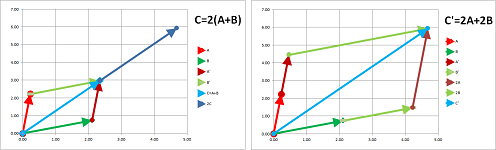

It follows that the speed of the climb is proportional to both our horizontal speed and the steepness of the terrain. That's the Chain Rule. What is it in this new setting? The rates of change of $z$ with respect to both $x$ and $y$ will have to be involved. We will show that it is the sum of those: $$\frac{\Delta z}{\Delta t}=\frac{\Delta z}{\Delta x}\frac{\Delta x}{\Delta t} + \frac{\Delta z}{\Delta y}\frac{\Delta y}{\Delta t} \text{ or }\frac{dz}{dt}=\frac{\partial z}{\partial x}\frac{dx}{dt} + \frac{\partial z}{\partial y}\frac{dy}{dt}.$$ This number is then computed from these two vectors:

- the rate of change of the parametric curve of the trip, i.e., the horizontal velocity, $\left< \frac{\Delta x}{\Delta t}, \frac{\Delta y}{\Delta t} \right>$ or $\left< \frac{dx}{dt}, \frac{dy}{dt} \right>$, and

- the rates of change of the terrain function in the two directions, $\left< \frac{\Delta z}{\Delta x}, \frac{\Delta z}{\Delta y} \right> $ or $\left< \frac{\partial z}{\partial x}, \frac{\partial z}{\partial y} \right> $.
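The formula can be checked numerically. Below is a minimal sketch in Python; the terrain function `terrain` and the trip plan `trip` are hypothetical examples chosen only for illustration. It compares the rate of climb computed directly from the composition with the one computed from the two vectors listed above, with all rates of change approximated by difference quotients.

```python
import math

def terrain(x, y):
    # Hypothetical terrain map: the elevation z at the location (x, y).
    return math.sin(x) + x * y**2

def trip(t):
    # Hypothetical trip plan: the location (x, y) at time t.
    return (math.cos(t), t**2)

def climb_rate_direct(t, h=1e-6):
    # Difference quotient of z with respect to t, via the composition t -> (x, y) -> z.
    x0, y0 = trip(t - h)
    x1, y1 = trip(t + h)
    return (terrain(x1, y1) - terrain(x0, y0)) / (2 * h)

def climb_rate_chain_rule(t, h=1e-6):
    # The horizontal velocity <dx/dt, dy/dt>, approximated by difference quotients.
    x0, y0 = trip(t - h)
    x1, y1 = trip(t + h)
    dxdt = (x1 - x0) / (2 * h)
    dydt = (y1 - y0) / (2 * h)
    # The rates of change of the terrain <dz/dx, dz/dy> at the current location.
    x, y = trip(t)
    dzdx = (terrain(x + h, y) - terrain(x - h, y)) / (2 * h)
    dzdy = (terrain(x, y + h) - terrain(x, y - h)) / (2 * h)
    # The sum of the products, as in the Chain Rule.
    return dzdx * dxdt + dzdy * dydt

print(climb_rate_direct(0.7), climb_rate_chain_rule(0.7))
```

The two printed numbers should agree up to the error of the approximation; this is a numerical illustration, not a proof.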

We saw in Chapter 14 an example of an abstract space: the space of prices. The space's dimension was $2$ with only two prices of two ingredients of bread. The dependence is as follows: $$t\ \longrightarrow\ (x,y) \ \longrightarrow \ z.$$ Multiple variables lead to high-dimensional abstract spaces such as in the case of the price of a car dependent on the prices of $1000$ of its parts: $$t\ \longrightarrow\ (x_1,x_2,...,x_{1000}) \ \longrightarrow \ z.$$ We will be developing algebra, geometry, and calculus that will be applicable to a space of any dimension. We replace multiple single variables with a single variable in a space of multiple dimensions. For example, $P$ below is such a variable, i.e., a location in a $1000$-dimensional space: $$t\ \longrightarrow\ P \ \longrightarrow \ z.$$ Initially, however, we will limit ourselves to dimensions that we can visualize!

Convention. We will use upper case letters for the entities that are (or may be) multidimensional, such as points and vectors: $$A,\ B,\ C,\ ...P,\ Q,\ ...,$$ and lower case letters for numbers: $$a,\ b,\ c,\ ..., x,\ y,\ z,\ ...$$

Euclidean spaces and Cartesian systems of dimensions $1$, $2$, $3$,...

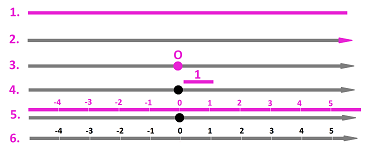

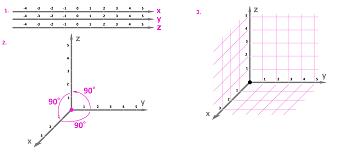

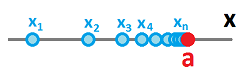

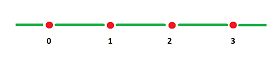

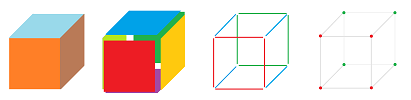

We start with the Cartesian system for dimension $1$. It is a line with a certain collection of features -- the origin, the positive direction, and the unit -- added:

The main idea is this correspondence (i.e., a function that is one-to-one and onto):

- a location $P\ \longleftrightarrow\ $ a real number $x$.

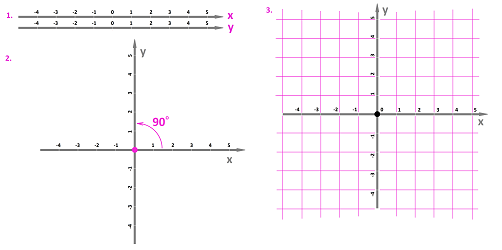

We can have as many such “Cartesian lines” as we like and we can arrange them in any way we like. Then the Cartesian system for dimension $2$ is made of two copies of the Cartesian system of dimension $1$ aligned at $90$ degrees (of rotation) from positive $x$ to positive $y$:

Warning: the units of the two systems don't have to match. The most common $xy$-plane that we have seen has $x$ as time measured in seconds and $y$ as distance measured in feet. Sometimes even the spatial coordinates use different units such as in the case of a location of an aircraft: altitude in feet and distance in miles.

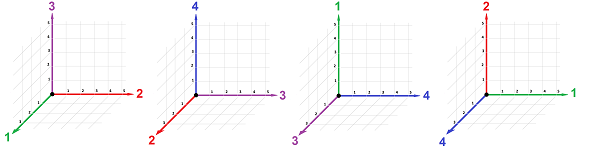

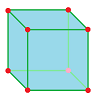

The Cartesian system for dimension $3$ is made of three copies of the Cartesian system of dimension $1$ as follows:

- 1. three coordinate axes are chosen, the $x$-axis, the $y$-axis, and the $z$-axis;

- 2. the three axes are put together at their origins so that it is a $90$-degree turn from the positive direction of one axis to the positive direction of the next -- from $x$ to $y$ to $z$ to $x$;

- 3. the marks on the axes are used to draw a grid.

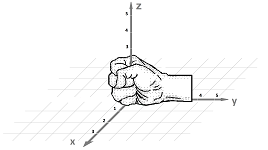

The second requirement is called the right-hand rule. Indeed, if we curl our fingers from the $x$-axis to the $y$-axis, our thumb will point in the direction of the $z$-axis.

The main idea is this correspondence:

- a location $P\ \longleftrightarrow\ $ a triple of numbers $(x,y,z)$.

Remember, the three variables or quantities represented by the three axes may be unrelated. Then our visualization will remain valid with rectangles instead of the squares and boxes instead of cubes.

What about dimension $4$ and higher?

We cannot use our physical space as a reference anymore! We can't use it for visualization either. The space is abstract.

The idea of the $n$-dimensional space remains the same; it is the correspondence:

- a location $P\ \longleftrightarrow\ $ a string of $n$ real numbers $(x_1,x_2,x_3,...,x_n)$.

Using the same letter with subscripts is preferable even for dimension $3$ as the symmetries between the axes and variables are easier to detect and utilize. However, using just $P$ is often even better!

Because of the difficulty or even impossibility of visualization of these “locations” in dimension $4$ and higher, this correspondence becomes much more than just a way to go back and forth whenever convenient. This time we just say: “it's the same thing”.

Example. This may be the data continuously collected by a weather center: $$\begin{array}{llllll} 1&2&3&4&5&...\\ \hline \text{temperature}&\text{pressure}&\text{precipitation}&\text{humidity}&\text{sunlight}&...\\ \end{array}$$ They are all measured in different units and cannot be seen as an analog of our physical space. $\square$

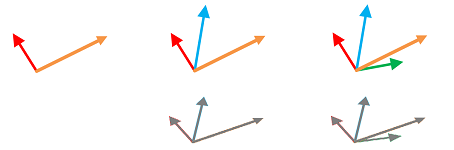

How do we visualize an $n$-dimensional space? Let's first realize that, in a sense, we have failed even with the three-dimensional space! We had to squeeze these three dimensions on a two-dimensional piece of paper.

At best, we can see the shadows of the axes...

These are the spaces we will study and the notation for them:

- ${\bf R}$, all real numbers;

- ${\bf R}^2$, all pairs of real numbers (plane);

- ${\bf R}^3$, all triples of real numbers (space);

- ${\bf R}^4$, all quadruples of real numbers;

- ...

- ${\bf R}^n$, all strings of $n$ real numbers;

- ...

Each of them is supplied with its own algebra and geometry.

We can build these consecutively, adding one dimension at a time:

- if ${\bf R}$ is given, we treat it as the $x$-axis and then add another axis, the $y$-axis, perpendicular to the first;

- the result is ${\bf R}^2$, which we treat as the $xy$-plane and then add another axis, the $z$-axis, perpendicular to the first two;

- the result is ${\bf R}^3$, which we treat as the $xyz$-space and then add another axis perpendicular to the first three; and so on.

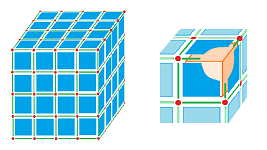

Let's consider ${\bf R}^4$. This space is abstract but still constructed on the frame of copies of ${\bf R}$: the four coordinate axes. We just don't have a place to put them in so that we could see them... It is also seen to be using copies of ${\bf R}^2$: the six coordinate planes (each spanned by two of those coordinate axes). There are also four copies of ${\bf R}^3$ it is built upon (each constructed on the frame of those coordinate planes):

Exercise. How many coordinate planes are there in ${\bf R}^5$? ${\bf R}^n$? Hint: Chapter 1.

So, these spaces aren't unrelated!

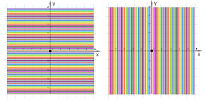

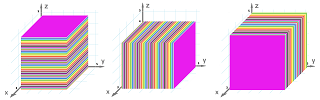

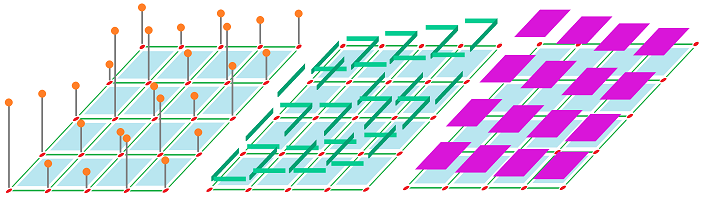

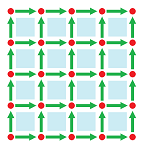

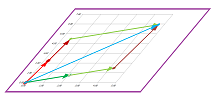

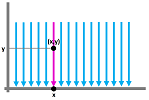

In fact, we can see many copies of ${\bf R}^m$ in ${\bf R}^n$ with $n>m$. For example, the plane can be seen as a row of lines parallel to either of the coordinate axes:

These lines are given by the equations: $$x=a,\ y=b,$$ over all real $a,b$. They are copies of ${\bf R}$ and will have the same algebra and geometry. Now, ${\bf R}^3$ is a stack of planes parallel to one of the coordinate planes:

They are given by the equations: $$x=a,\ y=b,\ z=c,$$ respectively, over all real $a,b,c$. These are copies of ${\bf R}^2$. Now, ${\bf R}^4$ is a “stack” of ${\bf R}^3$s... How they fit together is hard to visualize but they are still copies of ${\bf R}^3$ given by equations: $x_1=a_1$, $x_2=a_2$, etc.

Geometry of distances

The axes of the Cartesian system ${\bf R}^3$ for our physical space refer to the same thing: distances to the coordinate planes. They are (or should be) measured in the same unit. Even though, in general, the axes of ${\bf R}^n$ refer to unrelated quantities, they may be measured in the same unit, such as the prices of $n$ commodities being traded. When this is the case, doing geometry in ${\bf R}^n$ based entirely on the coordinates of points is possible.

A Cartesian system has everything in the space pre-measured.

In particular, we compute (rather than measure) the distances between locations because the distance can be expressed in terms of the coordinates of the locations.

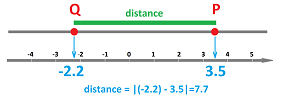

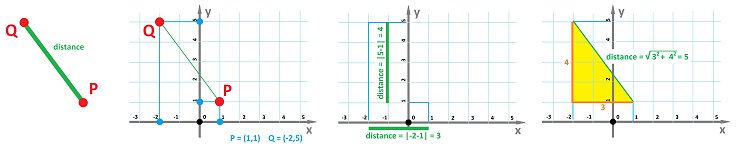

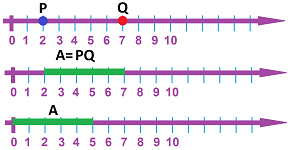

Theorem (Distance Formula for dimension $1$). The distance from point $P$ to point $Q$ in ${\bf R}$ given by real numbers $x$ and $x'$ respectively is $$d(P,Q)=|x-x'|.$$

Here, the geometry problem of finding distances relies on the algebra of real numbers (the subtraction).

The idea of how to compute how close two locations are to each other comes up very early in calculus. Recall the definition of the limit of a sequence:

- $a_n\to a$ if for any $\varepsilon >0$ there is $N$ such that $|a_n-a|<\varepsilon$ whenever $n>N$.

Moreover, in the definition of the limit of a function this expression appears twice, for $x$- and $y$-axes:

- $\lim_{x\to a}f(x)=L$ if for any $\varepsilon >0$ there is $\delta >0$ such that $|f(x)-L|<\varepsilon$ whenever $0<|x-a|<\delta$.

Both sequences (and their limits) and functions (and their limits) appear in multidimensional spaces and will be treated in this manner.

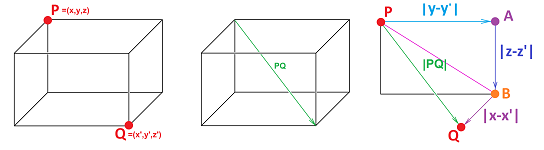

Now the coordinate system for dimension $2$. The formula for the distance between locations $P$ and $Q$ in terms of their coordinates $(x,y)$ and $(x',y')$ is found by using the distance formula from the $1$-dimensional case for each of the two axes in order to find

- the distance between $x$ and $x'$, which is $|x-x'|=|x'-x|$, and

- the distance between $y$ and $y'$, which is $|y-y'|=|y'-y|$,

respectively.

Then the two numbers are put together by the Pythagorean Theorem taking into account this simplification: $$|x-x'|^2=(x-x')^2,\ |y-y'|^2=(y-y')^2.$$

Theorem (Distance Formula for dimension $2$). The distance between points $P$ and $Q$ in ${\bf R}^2$ with coordinates $(x,y)$ and $(x',y')$ respectively is $$d(P,Q)=\sqrt{(x-x')^2+(y-y')^2}.$$

The two exceptional cases when $P$ and $Q$ lie on the same vertical or the same horizontal line (and the triangle “degenerates” into a segment) are treated separately.

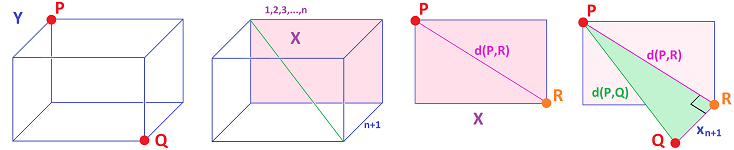

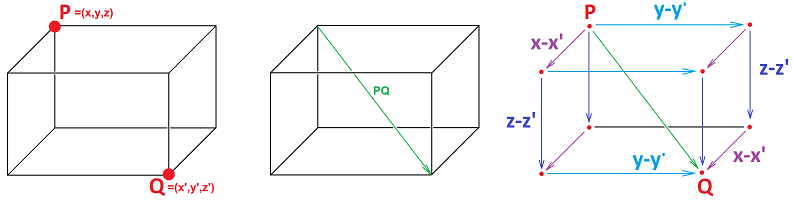

Now the coordinate system for dimension $3$. We can guess that there will be another term in the sum of the Distance Formula (the proof was provided in Chapter 14).

Theorem (Distance Formula for dimension $3$). The distance between points $P$ and $Q$ in ${\bf R}^3$ with coordinates $(x,y,z)$ and $(x',y',z')$ respectively is $$d(P,Q)=\sqrt{(x-x')^2+(y-y')^2+(z-z')^2}.$$

A pattern starts to appear: the square of the distance is the sum of the squares of the distances along each of the coordinates. Thinking by analogy, we continue on to include the case of dimension $4$: $$\begin{array}{l|ll|ll} \text{dimension}&\text{points}&\text{coordinates}&\text{distance}\\ \hline 1&P&x&\\ &Q&x'&d(P,Q)^2=(x-x')^2\\ \hline 2&P&(x,y)&\\ &Q&(x',y')&d(P,Q)^2=(x-x')^2+(y-y')^2\\ \hline 3&P&(x,y,z)&\\ &Q&(x',y',z')&d(P,Q)^2=(x-x')^2+(y-y')^2+(z-z')^2\\ \hline 4&P&(x_1,x_2,x_3,x_4)&\\ &Q&(x'_1,x'_2,x'_3,x'_4)&d(P,Q)^2=(x_1-x'_1)^2+(x_2-x'_2)^2+(x_3-x'_3)^2+(x_4-x'_4)^2\\ \hline ...&... \end{array}$$

There are $n$ terms in dimension $n$: $$\begin{array}{l|ll|lll} \text{dimension}&\text{points}&\text{coordinates}&\text{distance}\\ \hline n&P&(x_1,x_2,...,x_n)&\\ &Q&(x'_1,x'_2,...,x'_n)&d(P,Q)^2=(x_1-x'_1)^2+(x_2-x'_2)^2+...+(x_n-x'_n)^2\\ \hline \end{array}$$ So, the distance is the square root of the sum of the squares of the distances along each of the coordinates: $$\begin{array}{l|ll|lll} \text{dimension}&\text{points}&\text{coordinates}&\text{distance}\\ \hline n&P&(x_1,x_2,...,x_n)&\\ &Q&(x'_1,x'_2,...,x'_n)&d(P,Q)=\sqrt{(x_1-x'_1)^2+(x_2-x'_2)^2+...+(x_n-x'_n)^2}\\ \hline \end{array}$$

The formula for $n=1,2,3$ is justified by what we know about the physical space. What about $n=4$ and above? Let's take a look at the copies of ${\bf R}^3$ that make up ${\bf R}^4$. One of them is given by $x_4=a_4$ for some real number $a_4$. If we take any two points $P,Q$ within it, the formula becomes: $$\begin{array}{ll} d(P,Q)&=\sqrt{(x_1-x'_1)^2+(x_2-x'_2)^2+(x_3-x'_3)^2+(a_4-a_4)^2}\\ &=\sqrt{(x_1-x'_1)^2+(x_2-x'_2)^2+(x_3-x'_3)^2}. \end{array}$$ In other words, the distance is the same as the one for dimension $3$. We conclude that the geometry of such a copy of ${\bf R}^3$ is the same as the “original”!
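The computation above can be illustrated with a short Python sketch. The function name `distance` and the sample points are our own choices for illustration; the points lie in the copy of ${\bf R}^3$ given by $x_4=7$.

```python
import math

def distance(p, q):
    # Euclidean distance between two points given as equal-length coordinate sequences.
    if len(p) != len(q):
        raise ValueError("points must have the same dimension")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(p, q)))

# Two points of R^4 within the copy of R^3 given by x_4 = 7.
P = (1.0, 2.0, 3.0, 7.0)
Q = (4.0, 6.0, 3.0, 7.0)

# The same two points seen in R^3, with the last coordinate dropped.
P3, Q3 = P[:3], Q[:3]

print(distance(P, Q), distance(P3, Q3))  # both are 5.0
```

The same function works for any dimension because it only uses the coordinates, one pair at a time.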

Exercise. Show that the geometry of any plane in ${\bf R}^3$ and ${\bf R}^4$ is the same as that of ${\bf R}^2$.

In general, we progress from understanding the geometry of $X={\bf R}^n$ to that of $Y={\bf R}^{n+1}$ by adding an extra axis -- perpendicular to the rest -- to ${\bf R}^n$. Then, the Pythagorean Theorem is applied:
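In terms of the coordinates, this step can be written as follows. Here $P'$ and $Q'$ are notation introduced only for this illustration: they are the points in ${\bf R}^n$ obtained from $P$ and $Q$ in ${\bf R}^{n+1}$ by dropping the last coordinate. If $$P=(x_1,...,x_n,x_{n+1}),\ Q=(x'_1,...,x'_n,x'_{n+1}),$$ then $$d(P,Q)^2=d(P',Q')^2+(x_{n+1}-x'_{n+1})^2.$$ The first term is the square of the distance within ${\bf R}^n$ and the second is the square of the distance along the extra axis.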

We thus replace the study of the complex geometry of locations in a multi-dimensional space with a study of distances, i.e., numbers.

Exercise. State the definition of the limits of (a) a parametric curve, (b) a function of two variables.

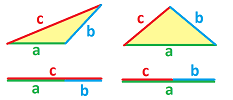

Next, we would like to formulate three very simple properties of the distances that apply equally to all dimensions, without reference to the formulas. First, the distances can't be negative and, moreover, for the distance to be zero, the two points have to be the same. Second, the distance from $P$ to $Q$ is the same as the distance from $Q$ to $P$. And so on.

Theorem (Axioms of Metric Space). Suppose $P,Q,S$ are points in ${\bf R}^3$. Then the following properties are satisfied:

- Positivity: $d(P,Q)\ge 0$; and $d(P,Q)=0$ if and only if $P=Q$;

- Symmetry: $d(P,Q)=d(Q,P)$;

- Triangle Inequality: $d(P,Q)+d(Q,S)\ge d(P,S)$.

Proof. $\blacksquare$

These geometric properties have been justified following the familiar geometry of the “physical space” ${\bf R}^3$. However, they also serve as a starting point for further development of linear algebra. Below, we will define the new geometry of the abstract space ${\bf R}^n$ and demonstrate that these “axioms” are still satisfied.
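The three properties can be spot-checked numerically for the distance in ${\bf R}^3$. The following Python sketch is only an illustration under our own assumptions (randomly chosen points, a small tolerance `tol` for rounding errors); such a check is, of course, not a proof.

```python
import math
import random

def distance(p, q):
    # Euclidean distance between two points of the same dimension.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(p, q)))

def random_point(rng, dim=3):
    # A point with coordinates drawn uniformly from [-10, 10].
    return tuple(rng.uniform(-10, 10) for _ in range(dim))

def check_axioms(trials=1000, seed=0, tol=1e-9):
    # Spot-check the three properties on randomly chosen triples of points.
    rng = random.Random(seed)
    for _ in range(trials):
        p, q, s = (random_point(rng) for _ in range(3))
        assert distance(p, q) >= 0                # Positivity
        assert distance(p, p) == 0                # the distance from a point to itself
        assert distance(p, q) == distance(q, p)   # Symmetry
        # Triangle Inequality, with a tolerance for rounding errors.
        assert distance(p, q) + distance(q, s) >= distance(p, s) - tol
    return True

print(check_axioms())
```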

Exercise. The distance is a function. Explain.

We know the last property from Euclidean geometry. We can justify it for dimension $n\ge 4$ by referring to the following fact: any three points lie within a single plane. This fact brings us back to Euclidean geometry... if that's what we want.

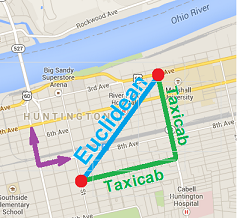

Example (city blocks). The Distance Formula for the plane gives us the distance measured along a straight line as if we are walking through a field. But what if we are walking through a city? We then cannot go diagonally and have to follow the streets. This fact dictates how we measure distances. To find the distance between two locations $P=(x,y)$ and $Q=(u,v)$, we go along the grid: $$d_T(P,Q)= |x-u|+|y-v|.$$

It is called the taxicab metric. $\square$
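To compare the two ways of measuring distances, here is a small Python sketch; the function names and the sample locations are ours, chosen for illustration.

```python
import math

def euclidean(p, q):
    # Straight-line distance, as if walking through a field.
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def taxicab(p, q):
    # Distance along the grid of streets: the sum of the coordinate-wise distances.
    return sum(abs(a - b) for a, b in zip(p, q))

P = (0, 0)
Q = (3, 4)
print(euclidean(P, Q))  # 5.0
print(taxicab(P, Q))    # 7
```

For these two locations, the walk along the streets is longer than the walk along the diagonal.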

Exercise. Prove that the taxicab metric satisfies the three properties in the theorem.

The example helps us realize that the Distance Formula is in fact a definition. In other words, for a given space of locations -- one of ${\bf R}^n$s -- we choose a specific way to compute distances from coordinates.

Definition. The Euclidean distance between points $P$ and $Q$ in ${\bf R}^n$ with coordinates $(x_1,x_2,...,x_n)$ and $(x'_1,x'_2,...,x'_n)$ respectively (or simply the distance) is defined to be $$d(P,Q)=\sqrt{\sum_{k=1}^{n}(x_k-x_k')^2}.$$ We refer to the formula as the Euclidean metric. The space ${\bf R}^n$ equipped with the Euclidean metric is called the $n$-dimensional Euclidean space.

Exercise. Prove the Axioms of Metric Space for this formula.

When we deal with the “physical space” ($n=1,2,3$) as in the above theorems, the Euclidean metric is implied. For the “abstract spaces” ($n=1,2,...$), the Euclidean metric is the default choice; however, there are many examples when the Euclidean geometry and, therefore, the Euclidean metric (aka the Distance Formula) don't apply.

Example (prices). Let's consider the prices of wheat and sugar again. The space of prices is the same, ${\bf R}^2$. However, measuring the distance between two combinations of prices with the Euclidean metric leads to undesirable effects. For example, such a trivial step as changing the unit of the latter from “per ton” to “per kilogram” will change the geometry of the whole space. It is as if the space is stretched vertically. As a result, in particular, point $P$ that used to be closer to point $A$ than to $B$ might now satisfy the opposite condition. $\square$
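The effect described in the example can be seen on concrete numbers. In the Python sketch below, the prices of the three combinations $P$, $A$, $B$ are hypothetical, made up for illustration; only the unit of the price of sugar is changed.

```python
import math

def distance(p, q):
    # Euclidean distance between two combinations of prices.
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def sugar_per_kilogram(point):
    # Convert the second price from "per ton" to "per kilogram" (1 ton = 1000 kg).
    wheat, sugar = point
    return (wheat, sugar / 1000)

# Hypothetical combinations of prices: (wheat per ton, sugar per ton).
P, A, B = (100, 500), (200, 520), (110, 900)

closer_to_A_before = distance(P, A) < distance(P, B)
closer_to_A_after = distance(sugar_per_kilogram(P), sugar_per_kilogram(A)) < distance(
    sugar_per_kilogram(P), sugar_per_kilogram(B)
)
print(closer_to_A_before, closer_to_A_after)  # True False
```

With these numbers, $P$ is closer to $A$ before the change of the unit and closer to $B$ after it.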

Example (attributes). The problem is that the two (or more) measurements or other attributes might have nothing to do with each other. In some obvious cases, they will even have different units! For example, we might compare the builds of two persons based on the two main measurements: weight and height. Unfortunately, if we substitute such numbers into our formula, we will be adding pounds to feet! $\square$

Compare:

- a circle on the plane is defined to be the set of all points a given distance away from its center;

- a sphere in the space is defined to be the set of all points a given distance away from its center.

What about higher dimensions? The pattern is clear:

- a hypersphere in ${\bf R}^n$ is defined to be the set of all points a given distance away from its center.

In other words, each point $P$ on the hypersphere satisfies: $$d(P,Q)=R,$$ where $Q$ is its center and $R$ is its radius.

Example (Newton's Law of Gravity). According to the law, the force of gravity between two objects is

- 1. proportional to each of their masses,

- 2. inversely proportional to the square of the distance between their centers.

In other words, the force is given by the formula: $$F = G \frac{mM}{r^2},$$ where:

- $F$ is the force between the objects;

- $G$ is the gravitational constant;

- $m$ is the mass of the first object;

- $M$ is the mass of the second object;

- $r$ is the distance between the centers of mass of the two.

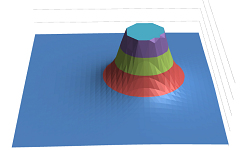

We can treat this formula as a function. This may be seen as a function of the three variables listed above, like this: $$F(m,M,r) = G \frac{mM}{r^2}.$$ However, the dependence of $F$ on $m$ and $M$ is very simple and, furthermore, we can assume that the masses of planets remain the same. The third variable is more interesting especially because it depends on the location $P$ of the second object in the $3$-dimensional space: $$r=d(O,P),$$ if, for simplicity, we assume that the first object is located at the origin. Note that this force is constant along any of the spheres centered at $O$. Now, we can re-write the law as a function of three variables: $$F(x,y,z) =\frac{G mM}{d(O,P)^2}=\frac{G mM}{\left(\sqrt{x^2+y^2+z^2}\right)^2}=\frac{G mM}{x^2+y^2+z^2},$$ where the three spatial variables $x,y,z$ are the coordinates of $P$. If we ignore the third variable ($z=0$), we can plot the graph of the resulting function of two variables:

The information about the asymptotic behavior of the original function, $$F(r)\to +\infty\ \text{ as }\ r\to 0\ \text{ and }\ F(r)\to 0\ \text{ as }\ r\to +\infty,$$ can now be restated: $$F(P)\to +\infty\ \text{ as }\ d(O,P)\to 0\ \text{ and }\ F(P)\to 0\ \text{ as }\ d(O,P)\to +\infty.$$ This idea is developed in the next section. $\square$
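The function of three variables in the example can be sketched in Python as follows. The default masses of $1$ and the sample points are our assumptions for illustration, and the value of the constant is approximate.

```python
G = 6.674e-11  # the gravitational constant in N*m^2/kg^2 (approximate value)

def gravity(x, y, z, m=1.0, M=1.0):
    # The force between a mass m at the origin O and a mass M at P = (x, y, z).
    # The default masses are a hypothetical choice for illustration.
    r_squared = x**2 + y**2 + z**2
    if r_squared == 0:
        raise ValueError("the force is undefined when P coincides with O")
    return G * m * M / r_squared

# Three points on the sphere of radius 3 centered at O: the force is the same.
print(gravity(3, 0, 0), gravity(1, 2, 2), gravity(0, 0, -3))

# The force grows as P approaches O and decreases as P moves away from O.
print(gravity(1e-3, 0, 0), gravity(1, 0, 0), gravity(1e3, 0, 0))
```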

Sequences and topology in ${\bf R}^n$

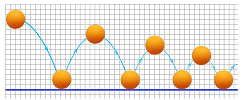

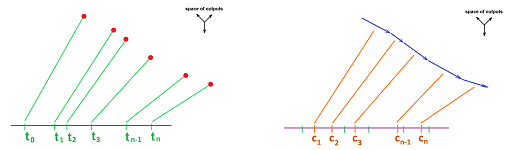

Recall from Chapter 1 the image of a ping-pong ball bouncing off the floor. Recording its height every time gives us a sequence:

It is a sequence of numbers!

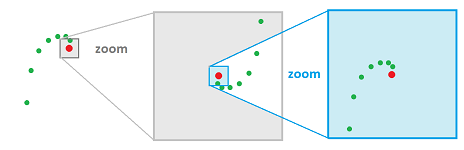

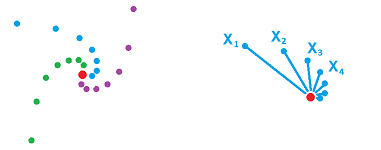

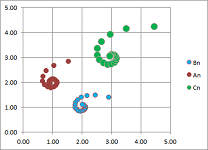

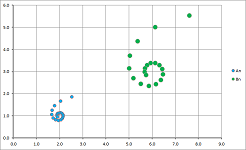

Imagine now watching a ball bouncing on an uneven surface with its locations recorded at equal periods of time. The result is a sequence of locations on the plane:

We will study infinite sequences of locations and especially their trends. An infinite sequence will be sometimes “accumulating” around a single location. The gap between the ball and the drain becomes invisible!

Definition. A function defined on a ray in the set of integers, $\{p,p+1,...\}$, is called an infinite sequence, or simply sequence with the notation: $$A_k:\ k=p,p+1,p+2,...,$$ or, abbreviated, $$A_k.$$

Every function $X=f(t)$ with an appropriate domain creates a sequence: $$A_k=f(k).$$

We visualize numerical sequences as sequences of points on the $xy$-plane via their graphs.

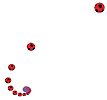

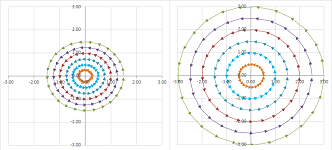

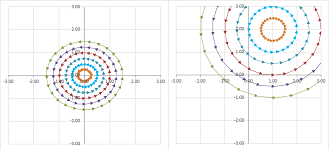

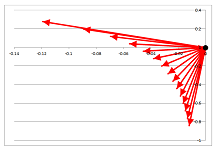

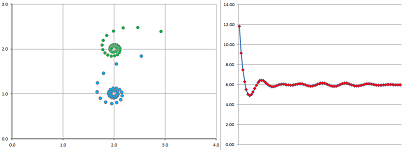

Example. The go-to example is that of the sequence made of the reciprocals: $$A_k=\left( \frac{\cos k}{k},\ \frac{\sin k}{k} \right).$$ It tends to $0$ while spiraling around it.

This fact is easily confirmed numerically. $\square$
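A numerical confirmation may look like the following Python sketch (the function names are ours). It prints several terms of the sequence along with their distances to the origin.

```python
import math

def A(k):
    # The k-th term of the sequence (cos k / k, sin k / k), k = 1, 2, 3, ...
    return (math.cos(k) / k, math.sin(k) / k)

def distance_to_origin(point):
    # Euclidean distance from a point of the plane to the origin.
    return math.hypot(point[0], point[1])

for k in (1, 10, 100, 1000):
    print(k, A(k), distance_to_origin(A(k)))
```

Since $\cos^2 k+\sin^2 k=1$, the distance from $A_k$ to the origin is $1/k$, and the printed distances agree with this up to rounding.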

Unfortunately, not all sequences are as simple as that. They may approach their respective limits in an infinite variety of ways. And then there are sequences with no limits. We need a more general approach.

Let's recall from Chapter 5 the idea of the convergence of (infinite) sequences of real numbers:

The idea is that the distance from the $k$th point to the limit is getting smaller and smaller: $$|x_k-a|\to 0 \text{ as }k\to \infty.$$ In the $n$-dimensional case, the idea remains the same: a sequence of points in ${\bf R}^n$ is getting closer and closer to its limit, which is also a point in ${\bf R}^n$:

We re-write what we want to say about the meaning of the limits in progressively more and more precise terms. $$\begin{array}{l|ll} k&X=A_k\\ \hline \text{As } k\to \infty, & \text{we have } X\to A.\\ \text{As } k\text{ approaches } \infty, & X\text{ approaches } A. \\ \text{As } k \text{ is getting larger and larger}, & \text{the distance from }X \text{ to } A \text{ approaches } 0. \\ \text{By making } k \text{ larger and larger},& \text{we make } d(X,A) \text{ as small as needed}.\\ \text{By making } k \text{ larger than some } N>0 ,& \text{we make } d(X,A) \text{ smaller than any given } \varepsilon>0. \end{array}$$ Then, the following condition holds:

- for each real number $\varepsilon > 0$, there exists a number $N$ such that, for every natural number $k > N$, we have

$$d(A_k , A) < \varepsilon .$$

Our study becomes much easier once we realize that distances are numbers and $d(A_k , A)$ is just a sequence of numbers! Understanding distances in ${\bf R}^n$ and numerical sequences allows us to sort this out easily.

Definition. Suppose $A_k:\ k=1,2,3...$ is a sequence of points in ${\bf R}^n$. We say that the sequence converges to another point $A$ in ${\bf R}^n$, called the limit of the sequence, if: $$d(A_k,A)\to 0\text{ as }k\to \infty,$$ denoted by: $$A_k\to A \text{ as }k\to \infty,$$ or $$A=\lim_{k\to \infty}A_k.$$ If a sequence has a limit, then we call the sequence convergent and say that it converges; otherwise it is divergent and we say it diverges.

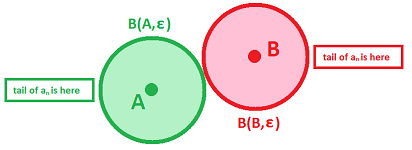

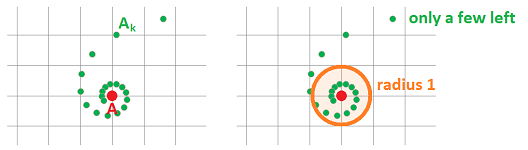

In other words, the points start to accumulate in smaller and smaller circles around $A$. A way to visualize a trend in a convergent sequence is to enclose the tail of the sequence in a disk:

It should be, in fact, a smaller and smaller disk; its radius is $\varepsilon$. Meanwhile, the tail starts later and later in the sequence; that's $N$.

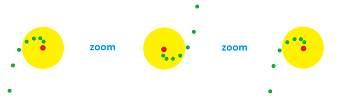

Examples of divergence are below.

Example. A sequence may tend to infinity, such as $A_k=(k,k)$ at the simplest:

Then no disk -- no matter how large -- will contain the sequence's tail. $\square$

This behavior however has a meaningful pattern.

Definition. We say that a sequence $A_k$ tends to infinity if the following condition holds:

- for each real number $R$, there exists a natural number $N$ such that, for every natural number $k > N$, we have

$$d(0,A_k) >R.$$

We describe such a behavior with the following notation: $$A_k\to \infty \text{ as } k\to \infty .$$
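For the sequence $A_k=(k,k)$ from the example above, the number $N$ in the definition can be found explicitly. The Python sketch below is an illustration under that assumption; the function name `threshold` is ours.

```python
import math

def A(k):
    # The k-th term of the sequence A_k = (k, k).
    return (k, k)

def threshold(R):
    # A natural number N such that d(0, A_k) > R for every k > N.
    # Since d(0, A_k) = k * sqrt(2), any natural number N >= R / sqrt(2) works.
    return max(1, math.ceil(R / math.sqrt(2)))

for R in (0.5, 10, 1000):
    N = threshold(R)
    k = N + 1
    print(R, N, math.hypot(*A(k)))  # the distance for k = N + 1 exceeds R
```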

We need to justify “the” in “the limit”.

Theorem (Uniqueness). A sequence can have only one limit (finite or infinite); i.e., if $A$ and $B$ are limits of the same sequence, then $A=B$.

Proof. The geometry of the proof is the following: we want to separate these two points by two non-overlapping disks. Then the tail of the sequence would have to fit one or the other, but not both. In order for them to be disjoint, their radii (that's $\varepsilon$!) should be less than half the distance between the two points.

The proof is by contradiction. Suppose $A$ and $B$ are two distinct limits, i.e., each satisfies the definition. Let $$\varepsilon = \frac{d(A,B)}{2}.$$ Since $A\ne B$, we have $\varepsilon>0$. Now, we write the definition twice:

- there exists a number $L$ such that, for every natural number $k > L$, we have

$$d(A_k, A) < \varepsilon ,$$ and

- there exists a number $M$ such that, for every natural number $k > M$, we have

$$d(A_k, B) < \varepsilon .$$ In order to combine the two statements we need them to be satisfied for the same values of $k$. Let $$N=\max\{ L,M\}.$$ Then,

- for every number $k > N$, we have

$$d(A_k , A) < \varepsilon \text{ and }d(A_k, B) < \varepsilon .$$ In particular, for every $k > N$, we have by the Triangle Inequality: $$d(A,B)\le d(A , A_k)+d(A_k , B) < \varepsilon+\varepsilon=2\varepsilon=d(A,B).$$ A contradiction. $\blacksquare$

Exercise. Follow the proof and demonstrate that it is impossible for a sequence to have both a point and infinity as its limits.

Thus, there can be no two limits and we are justified to speak of the limit.

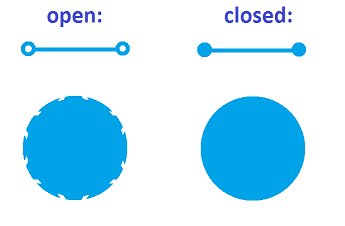

What is the $n$-dimensional analog of a closed interval?

Compare the disk and the disk with its boundary (the circle) removed: $$\{(x,y):\ x^2+y^2\le 1\}\ \text{ vs. }\ \{(x,y):\ x^2+y^2< 1\}.$$ Or think of the ball and the ball with its boundary (the sphere) removed. The difference is that in the latter one can reach the boundary -- and the outside of the set -- by following a sequence that lies entirely inside the set!

Definition. A set in ${\bf R}^n$ is called closed if it contains the limits of all of its convergent sequences.
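The difference between the two disks can be illustrated with a Python sketch. The sequence $A_k=(1-1/k,0)$ is our own example, chosen for illustration: it lies inside the disk with the boundary removed, while its limit, $(1,0)$, lies on the circle.

```python
def in_open_disk(point):
    # Membership in the disk with its boundary removed: x^2 + y^2 < 1.
    x, y = point
    return x**2 + y**2 < 1

def in_closed_disk(point):
    # Membership in the disk with its boundary included: x^2 + y^2 <= 1.
    x, y = point
    return x**2 + y**2 <= 1

def A(k):
    # A hypothetical sequence that approaches the boundary point (1, 0) from inside.
    return (1 - 1 / k, 0.0)

limit = (1.0, 0.0)

print(all(in_open_disk(A(k)) for k in range(1, 1000)))  # True
print(in_open_disk(limit), in_closed_disk(limit))        # False True
```

The limit escapes the first set but not the second; a finite check like this only suggests, and does not prove, which of the two sets is closed.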

Definition. A set $S$ in ${\bf R}^n$ is bounded if it fits in a sphere (or a box) of a large enough size; i.e., there is a real number $Q$ such that $$d(X,0) < Q \text{ for all } X \text{ in } S.$$

The coordinate-wise treatment of sequences

Here we go back from the treatment of the space to the spread-out coordinate-wise approach.

Suppose space ${\bf R}^n$ is supplied with a Cartesian system.

Let's first look at the definition of the limit of a sequence $X_k$ in ${\bf R}^n$. The limit is defined to be such a point $A$ in ${\bf R}^n$ that $$d(X_k,A)\to 0\text{ as } k\to \infty.$$

Suppose we use the Euclidean metric in our space ${\bf R}^3$. What does the above condition mean? Suppose $$X_k=\big( x_k,y_k,z_k \big)\text{ and }A=(a,b,c).$$ Then we have: $$\sqrt{(x_k-a)^2+(y_k-b)^2+(z_k-c)^2}\to 0.$$

This limit is equivalent to the following: $$(x_k-a)^2+(y_k-b)^2+(z_k-c)^2\to 0,$$ because the function $u^2$ is continuous at $0$. Since these three terms are all non-negative, all three have to approach $0$! Then we have: $$(x_k-a)^2\to 0,\ (y_k-b)^2\to 0,\ (z_k-c)^2\to 0.$$ These limits are equivalent to the following: $$|x_k-a|\to 0,\ |y_k-b|\to 0,\ |z_k-c|\to 0,$$ because the function $\sqrt{u}$ is continuous from the right at $0$. Finally, we have: $$x_k\to a,\ y_k\to b,\ z_k\to c.$$ All coordinate sequences converge!

For the $n$-dimensional case, build a table: $$\begin{array}{c|ccccc} &1&2&...&n\\ \hline X_k&x_k^1&x_k^2& ...&x_k^n\\ \downarrow&\downarrow&\downarrow&...&\downarrow&\text{ as } k\to\infty\\ A&a^1&a^2& ...&a^n \end{array}$$ The following is a summary.

Theorem (Coordinate-wise convergence). A sequence of points $X_k$ in ${\bf R}^n$ converges to a point $A$ if and only if every coordinate of $X_k$ converges to the corresponding coordinate of $A$; i.e., $$X_k\to A \text{ as } k\to \infty\ \Longleftrightarrow\ x_k^i\to a^i \text{ as } k\to \infty \text{ for all } i=1,2,...,n,$$ where $$X_k=(x_k^1,\ x_k^2,\ ...,\ x_k^n) \text{ and } A=(a^1,\ a^2,\ ...,\ a^n).$$

Exercise. Prove the “if” part of the theorem.

Example. We compute using the Continuity Rule for numerical sequences: $$\begin{array}{lll} \lim_{k\to \infty}\left( \cos \frac{1}{k},\ \sin \frac{1}{k} \right)&=\left( \lim_{k\to \infty}\cos \frac{1}{k},\ \lim_{k\to \infty} \sin \frac{1}{k} \right)\\ &=(\cos 0,\ \sin 0)\\ &=(1,0), \end{array}$$ because $\sin t$ and $\cos t$ are continuous. $\square$
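The coordinate-wise convergence in this example can also be observed numerically. Below is a minimal sketch in Python, assuming that points are stored as tuples of coordinates; the names `X`, `dist`, and `A` are chosen here only for illustration.

```python
import math

def X(k):
    """The k-th point of the sequence (cos(1/k), sin(1/k))."""
    return (math.cos(1 / k), math.sin(1 / k))

def dist(P, Q):
    """Euclidean distance between two points given as tuples."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(P, Q)))

A = (1.0, 0.0)  # the limit found in the example

for k in (1, 10, 100, 1000):
    x, y = X(k)
    # the gap in each coordinate and the distance to A shrink together
    print(k, abs(x - A[0]), abs(y - A[1]), dist(X(k), A))
```

Each coordinate gap never exceeds the distance $d(X_k,A)$, which is why the distance going to $0$ forces every coordinate to converge.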

This theorem also makes it easy to prove the algebraic properties of limits from those for numerical sequences.

Where vectors come from

We introduced vectors in Chapter 4 to handle properly the geometric issue of direction and angles between directions. However, vectors appear frequently in our study of the natural world.

If $P$ and $Q$ are two locations then we can say that $PQ$ is the displacement (from $P$ to $Q$). The idea applies to any space ${\bf R}^n$ but we will start with the physical space devoid of a Cartesian system.

From this point of view, a vector is a pair, $PQ$, of locations $P$ and $Q$.

We saw vectors in action in Chapter 4, but the goal was limited to using vectors to understand directions and angles between them. Our interest here is the algebraic operations on vectors.

A vector is an ordered pair, so that $$PQ\ne QP.$$

The locations and displacements and, therefore, points and vectors are subject to algebraic operations that connect them: $$P+PQ=Q.$$ As you can see, we add a vector to a point that is its initial point and the result is its terminal point. Furthermore, $$PQ=Q-P.$$ As you can see, the vector is the difference of its terminal and its initial points. It follows that $$QP=-PQ.$$

First dimension $1$.

Even though the algebra of vectors is the algebra of real numbers, we can still, even without a Cartesian system, think of the algebra of directed segments.

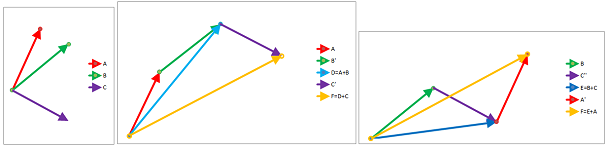

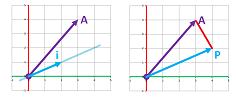

The addition of two vectors is executed by attaching the tail of the second vector to the head of the first, as illustrated below:

The negative number (red) is a segment directed backwards so that its head is on its left.

Now dimension $2$.

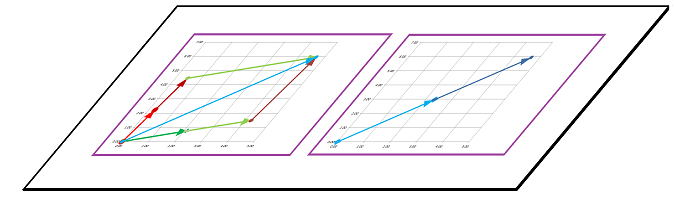

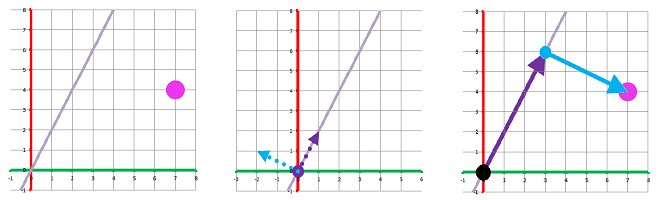

Example. We move point to point through the plane:

This is how we can understand addition of vectors as displacements: $$\begin{array}{ccll} \text{initial location}&\text{displacement}&\text{terminal location}\\ \hline P&PQ&Q=P+PQ\\ Q&QR&R=Q+QR&=P+PQ+QR\\ R&RS&S=R+RS&=P+PQ+QR+RS\\ S&ST&T=S+ST&=P+PQ+QR+RS+ST \end{array}$$ For the general case of $m$ steps, we have: $$\sum_{k=0}^{m-1} X_kX_{k+1}=X_m-X_0,$$ which, with the velocities denoted by $V_k,\ k=1,2,...,m$, respectively, takes the form: $$\sum_{k=1}^m V_k\Delta t=X_m-X_0.$$ The formula resembles -- and not by coincidence -- the Fundamental Theorem of Calculus! $\square$
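The telescoping of displacements can be checked on a concrete path. The following is a minimal sketch in Python, assuming that the locations are given by their coordinates; the path itself is made up for illustration, and the helper names `displacement` and `add` are our own.

```python
def displacement(P, Q):
    """The vector PQ = Q - P, stored as a tuple of components."""
    return tuple(q - p for p, q in zip(P, Q))

def add(A, B):
    """Sum of two vectors, computed one component at a time."""
    return tuple(a + b for a, b in zip(A, B))

# hypothetical locations X_0, X_1, ..., X_4 on the plane
path = [(0, 0), (2, 1), (3, 4), (1, 5), (4, 6)]

total = (0, 0)
for P, Q in zip(path, path[1:]):
    # add the displacement of the current step to the running total
    total = add(total, displacement(P, Q))

print(total)                            # (4, 6)
print(displacement(path[0], path[-1]))  # (4, 6)
```

The intermediate locations cancel out: only the first and the last location affect the total displacement.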

Since moving from $P$ to $Q$ and then from $Q$ to $R$ amounts to moving from $P$ to $R$, the construction is, again, a “head-to-tail” alignment of vectors: $$PR=PQ+QR.$$

However, in the physical world, there are “metaphors” for vectors in addition to displacements.

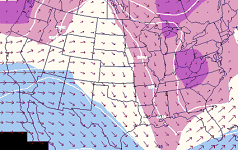

Example (velocities). First, velocities are vectors. We can look at the velocity as the difference (or the rate of change of the location), or independently. Velocities appear as the wind speed at different locations:

If we look at the velocities of particles in a stream, they may also be combined with the velocity of the rowing of the boat:

We need to add these two vectors but, in contrast to displacements, they start at the same point! $\square$

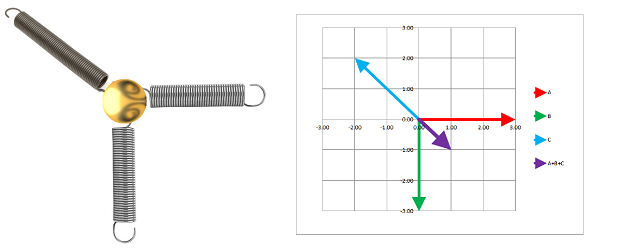

Example (forces). Let's also look at forces as vectors. For example, springs attached to an object will pull it in their respective directions:

We add these vectors to find the combined force as if produced by a single spring. The forces are vectors that start at the same location. $\square$

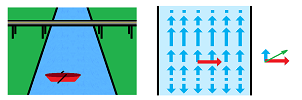

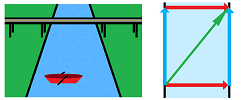

Example (displacements). We can interpret the displacements, too, as vectors aligned to their starting points. Imagine we are crossing a river $3$ miles wide and we know that the current takes us $2$ miles downstream. We can think of what happens in three different ways:

- a trip $3$ miles north followed by a trip $2$ miles east; or

- a trip $2$ miles east followed by a trip $3$ miles north; but also

- a trip along the diagonal of a rectangle with one side going $3$ miles north and another $2$ miles east.

They are the same. $\square$

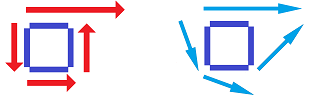

So, to add two vectors, we follow either

- the head-to-tail construction, or

- the tail-with-tail construction.

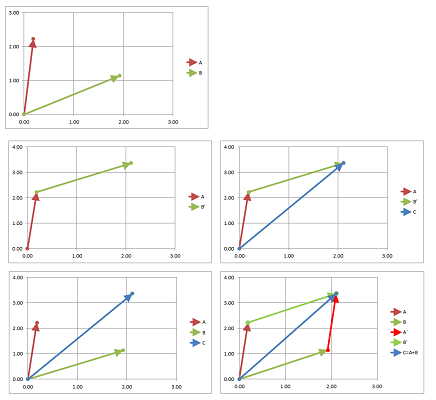

They have to produce the same result! They do, as illustrated below.

For the former, we make a copy $B'$ of $B$, attach it to the end of $A$, and then create a new vector whose initial point is that of $A$ and whose terminal point is that of $B'$. For the latter, we make a copy $B'$ of $B$ and attach it to the end of $A$, and we also make a copy $A'$ of $A$ and attach it to the end of $B$. Then the sum $A+B$ of two vectors $A$ and $B$ with the same initial point is the vector with the same initial point that is the diagonal of the parallelogram with sides $A$ and $B$.

Exercise. Prove that the result is the same according to what we know from Euclidean geometry.

The latter is called the parallelogram construction and it follows the rule that says:

- two vectors can only be added when they have the same initial point.

We think about vectors as directed line segments in a Euclidean space. As such, a vector has a direction and a length. It is possible for the length to be $0$; that's the zero vector. Its direction is undefined.

Example (rowing). We may want to choose the exact way to row the boat in order to overcome the current and arrive at the desired destination on the other side of the river.

$\square$

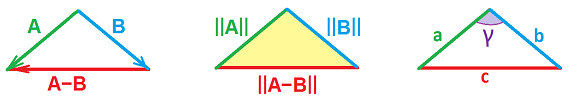

Once we know addition, subtraction is its inverse operation. Indeed, given vectors $A$ and $B$, finding the vector $C$ such that $B+C=A$ amounts to solving an equation, just as with numbers:

So, $A-B$ is found by:

- constructing the vector, $C$, from the end of $B$ to the end of $A$ and then

- making a copy of $C$ with the same starting point as $A$.

Example (stretching vectors). If we want to go faster, we row twice as hard; the vector has to be stretched! Or, one can attach two springs in a consecutive manner to double the force or cut any portion of the spring to reduce the force proportionally. A force might keep its direction but change its magnitude! It might also change the direction to the opposite. $\square$

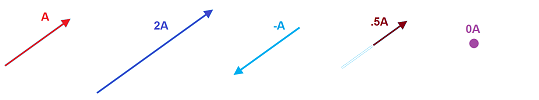

There is then another algebraic operation on vectors. The actual construction is nothing but stretching or shrinking of the vector. This is dimension $1$:

This is dimension $2$:

Thus the scalar product $cA$ of a vector $A$ and a real number $c$ is the vector with the same initial point as $A$, with the direction which is

- same as that of $A$ when $c>0$,

- opposite to that of $A$ when $c<0$, and

- undefined when $c=0$ (the result is the zero vector);

and the length equal to the length of $A$ multiplied by $|c|$.

What is the dimension of the space? As we know from Euclidean geometry, two lines and, therefore, two vectors, determine a plane. This is why the dimension doesn't matter because the situation is always $2$-dimensional.

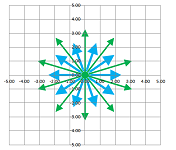

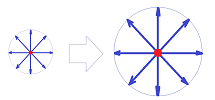

So, both vector operations, even repeated many times, will always produce vectors with the same initial point! Therefore, a single choice of initial point will be sufficient for our study of vector algebra. This point will be assumed to be $O$, the origin, unless otherwise indicated. This is what this collection of vectors looks like, for dimension $2$:

Such a “space of vectors” is called a vector space. It is equipped with two operations, the vector addition: $$\text{ vector }+\text{ vector }=\text{ vector },$$ and the scalar multiplication: $$\text{ number }\cdot\text{ vector }=\text{ vector }.$$ One can never get a vector with a different initial point in this manner.

Example (units). Out of caution, we should look at the units of the scalar. Yes, the force is a multiple of the acceleration: $F=ma$. However, these two have different units and, therefore, cannot be added together! Also, the displacement is the time multiplied by the velocity: $\Delta X=\Delta t \cdot V$. But these two have different units and, therefore, cannot be added! They live in two different spaces... $\square$

We will continue, throughout the chapter, to use capitalization to help to tell vectors from numbers.

Warning: many sources use:

- an arrow above the letter, $\vec{v}$, or

- the bold face, ${\bf v}$,

to indicate vectors.

In this section, we introduced to ${\bf R}^3$, a space of points, a new entity -- a vector. It is a pair of points, $P$ and $Q$, linked together by this algebra: $$PQ=Q-P.$$

Theorem (Axioms of Affine Space). The points and vectors in ${\bf R}^3$ satisfy the following properties:

- Right identity: for every point $P$, we have $P+0=P$;

- Associativity: for every point $P$ and any vectors $V$ and $W$, we have $(P + V) + W = P + (V + W)$;

- Free and transitive action: for every point $P$ and every point $Q$, there is exactly one vector $V$ such that $P+V=Q$.

These algebraic properties have been derived from the familiar algebra and geometry of the “physical space” ${\bf R}^3$. However, they also serve as a starting point for further development of linear algebra. In the following sections, we will define the new algebra and geometry of the abstract space ${\bf R}^n$ and demonstrate that these “axioms” are still satisfied.

Algebra of vectors

We will look for similarities with the algebra of numbers. This link is established via the laws of algebra...

First dimension $1$, the algebra of directed segments.

Let's consider how we can apply two (or more) scalar multiplications in a row. Given a vector $A$ and real numbers $a$ and $b$, we can create several new vectors:

- $B=aA$ from $A$ and then $C=bB$ from $B$; or

- $D=bA$ from $A$ and then $C=aD$ from $D$; or

- $C=(ab)A$ directly from $A$.

The results are the same:

We have the Associativity Property of Scalar Multiplication: $$b(aA)=(ba)A.$$

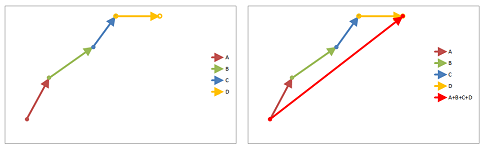

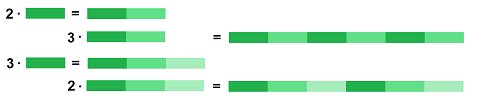

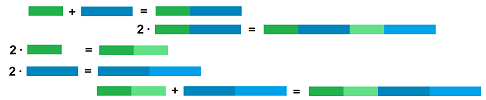

The following simple property, $$A+A=2A,$$ connects vector addition to scalar multiplication. Its generalization is the First Distributivity Property of Vector Algebra: $$(a+b)A=aA+bA.$$ It is illustrated below:

In other words, we distribute scalar multiplication over addition of real numbers.

Next, we distribute scalar multiplication over addition of vectors. The Second Distributivity Property of Vector Algebra is: $$a(A+B)=aA+aB.$$ It is illustrated below:

Also, dimension $2$:

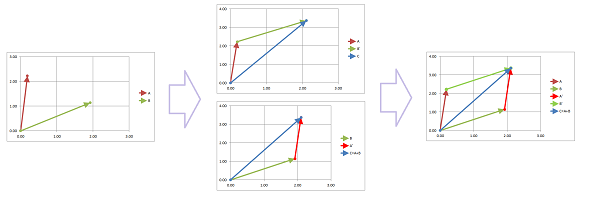

Recall that to find $A+B$ we make a copy $B'$ of $B$, attach to the end of $A$, and then create a new vector with the initial point that of $A$ and terminal point that of $B'$. Now, to find $B+A$ we make a copy $A'$ of $A$, attach to the end of $B$, and then create a new vector with the initial point that of $B$ and terminal point that of $A'$.

From what we know from Euclidean geometry -- two triangles with two identical sides and identical angle between them are identical -- these two triangles form a parallelogram. It has sides $A,B,A',B'$. Therefore, we have the Commutativity Property of Vector Addition: $$A+B=B+A.$$

We have the Associativity Property of Vector Addition: $$A+(B+C)=(A+B)+C.$$ This is the property of dimension $1$:

Also, dimension $2$:

There are some special numbers and there are special vectors...

There is $0$, the real number, and then there is $0$, the vector. The latter is called the zero vector and can mean no displacement, no motion (zero velocity), forces that cancel each other, etc. The two are related: $$0\cdot A=0.$$ We have here: $$\text{number }\cdot \text{ vector }=\text{ vector }.$$ Similarly, $$1\cdot A=A.$$ Of course, we have (all vectors): $$A+0=A.$$ Also, since $PQ=-QP$, we have the negative $-A$ of a vector $A$, as the vector that goes in reverse of $A$. From the algebra, we also discover that $$-A=(-1)\cdot A.$$

Taken together, these properties of vectors match the properties of numbers perfectly! This means that all manipulations of algebraic expressions that we have done with numbers are now allowed with vectors -- as long as the expression itself makes sense. In other words, we just need to avoid operations that haven't been defined: no multiplication (for now) of vectors, no division of vectors, no adding numbers to vectors, etc.

As a summary, this is the complete list of rules one needs to carry out algebra with vectors.

Theorem (Axioms of Vector Space). The two operations -- addition of two vectors and multiplication of a vector by a scalar -- in ${\bf R}^3$ satisfy the following properties:

- $X+Y=Y+X$ for all $X$ and $Y$;

- $X+(Y+Z)=(X+Y)+Z$ for all $X$, $Y$, and $Z$;

- $X+0=X=0+X$ for some vector $0$ and all $X$;

- $X+(-X)=0$ for any $X$ and some vector $-X$;

- $a(bX)=(ab)X$ for all $X$ and all scalars $a, b$;

- $1X=X$ for all $X$;

- $a(X+Y)=aX+aY$ for all $X$ and $Y$ and all scalars $a$;

- $(a+b)X=aX+bX$ for all $X$ and all scalars $a, b$.

These algebraic rules have been justified following the familiar algebra and geometry of the “physical space” ${\bf R}^3$. However, they also serve as a starting point for further development of linear algebra. In the following sections, we will define the new algebra and geometry of the abstract space ${\bf R}^n$ and demonstrate that these “axioms” are still satisfied.

Components of vectors

We now understand vectors -- at least in the lower-dimensional setting. Now we add a Cartesian system to these spaces and also include the abstract spaces of arbitrary dimensions, ${\bf R}^n$.

A vector is a pair $PQ$ of points in ${\bf R}^n$, each of which corresponds to a string of $n$ numbers called coordinates.

On the line ${\bf R}^1$, points are numbers and the vectors are simply differences of these numbers: $$PQ=Q-P.$$

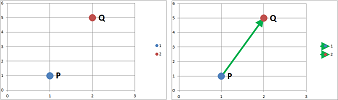

On the plane ${\bf R}^2$, we might have: $$P=(1,2) \text{ and } Q=(2,5).$$ How can we express vector $PQ$ in terms of these four numbers?

We look at the change from $P$ to $Q$:

- the change with respect to $x$, which is $2-1=1$, and

- the change with respect to $y$, which is $5-2=3$.

We combine these into a new pair of numbers (with triangular brackets to distinguish these from points): $$PQ=<1,3>.$$ Technically, however, for complete information on a vector we have to mention its initial point $P=(1,2)$.

Now in ${\bf R}^3$, we might have: $$P=(x,y,z) \text{ and } Q=(x',y',z').$$ How can we express vector $PQ$ in terms of these six numbers?

There are three changes (differences) along the three axes, i.e., a triple: $$PQ=<x'-x,y'-y,z'-z>.$$

Definition. A vector $PQ$ in ${\bf R}^n$ with its initial point $$P=(x_1,x_2,...,x_n)$$ and its terminal point $$Q=(x_1',x_2',...,x_n')$$ is given by the string of $n$ numbers called the components of the vector: $$x_1'-x_1,\ x_2'-x_2,\ ...,\ x_n'-x_n.$$
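The definition translates directly into a computation. Here is a minimal sketch in Python, assuming that points and vectors are both stored as tuples; the function names `components` and `translate` are hypothetical, introduced only for this illustration. It uses the points $P=(1,2)$ and $Q=(2,5)$ from the example above.

```python
def components(P, Q):
    """Components of the vector PQ: terminal minus initial, one coordinate at a time."""
    if len(P) != len(Q):
        raise ValueError("points must lie in the same space")
    return tuple(q - p for p, q in zip(P, Q))

def translate(P, V):
    """The point P + V reached from P along the vector V."""
    return tuple(p + v for p, v in zip(P, V))

P, Q = (1, 2), (2, 5)
PQ = components(P, Q)

print(PQ)                     # (1, 3)
print(translate(P, PQ) == Q)  # True: P + PQ = Q
print(components(Q, P))       # (-1, -3), i.e., QP = -PQ
```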

The definition matches the one that relies on directed segments.

A vector may emerge from its initial and terminal points or independently. In either case, we assemble the components according to the following row notation: $$A=<a_1,a_2,...,a_n>,$$ or the column notation: $$A=\left[\begin{array}{ccc} a_1\\ a_2\\ ...\\ a_n\end{array}\right].$$

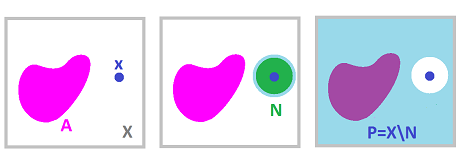

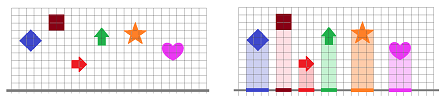

Once again, we can only carry out vector addition on vectors with the same initial point. What happens if we change the initial point while leaving the components of the vectors intact? Not only is each vector “copied” but so are the results of the algebraic operations. They are the same, just shifted to a new location:

It is then sufficient to provide results for the vectors that start at the origin $O$ only! Only these are allowed:

In that case, the components of a vector are simply the coordinates of its end: $$\begin{array}{rlccccccc} P&=(x_1,&x_2,&...,&x_n)& \Longrightarrow\\ OP&=<x_1,&x_2,&...,&x_n>. \end{array}$$

Next we consider the familiar algebraic operations but this time the vectors are represented by their components.

We carry out operations component-wise.

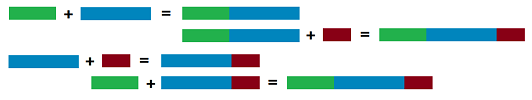

We demonstrate these operations for dimension $n=3$ and for both row and column style of notation. The vector addition is defined by: $$\begin{array}{rcccccc} A&=<&x,&y,&z&>\\ +\\ B&=<&u,&v,&w&>\\ \hline A+B&=<&x+u,&y+v,&z+w&> \end{array},\qquad \left[\begin{array}{c}x\\y\\z\end{array}\right]+ \left[\begin{array}{c}u\\v\\w\end{array}\right]= \left[\begin{array}{c}x+u\\y+v\\z+w\end{array}\right].$$ The scalar multiplication is defined by: $$\begin{array}{rcccccc} A&=<&x,&y,&z&>\\ \times\\ c&\\ \hline cA&=<&cx,&cy,&cz&> \end{array},\qquad c\cdot\left[\begin{array}{c}x\\y\\z\end{array}\right]= \left[\begin{array}{c}cx\\cy\\cz\end{array}\right].$$ In either case the components are aligned. Even though both seem equally convenient, the former will be seen as an abbreviation of the latter.

Definition. For two vectors in ${\bf R}^n$, $$A=<a_1,...,a_n>\ \text{ and }\ B=<b_1,...,b_n>,$$ their sum is defined to be: $$A+B=<a_1+b_1,...,a_n+b_n>.$$

Definition. For a number $c$ and a vector in ${\bf R}^n$, $$A=<a_1,...,a_n>,$$ their scalar product is defined to be: $$cA=<ca_1,...,ca_n>.$$

We thus have the algebra of vectors for a space of any dimension! These operations can be proven to satisfy the same properties as those of the vectors in ${\bf R}^3$ discussed in the last section. The proof is straightforward and relies on the corresponding property of real numbers. For example, to prove the commutativity of vector addition we use the commutativity of addition of numbers as follows: $$A+B=\left[\begin{array}{c}x\\y\\z\end{array}\right]+ \left[\begin{array}{c}u\\v\\w\end{array}\right]= \left[\begin{array}{c}x+u\\y+v\\z+w\end{array}\right]= \left[\begin{array}{c}u+x\\v+y\\w+z\end{array}\right]= \left[\begin{array}{c}u\\v\\w\end{array}\right]+ \left[\begin{array}{c}x\\y\\z\end{array}\right]=B+A.$$
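The component-wise operations are easy to try out for any dimension. Below is a minimal sketch in Python, assuming vectors are stored as tuples of their components; the names `add` and `scale` and the sample vectors in ${\bf R}^4$ are our own choices. The assertions only spot-check a few of the properties on one example; they are not a proof.

```python
def add(A, B):
    """Component-wise sum of two vectors of the same dimension."""
    if len(A) != len(B):
        raise ValueError("vectors must have the same dimension")
    return tuple(a + b for a, b in zip(A, B))

def scale(c, A):
    """The multiple cA: every component is multiplied by the number c."""
    return tuple(c * a for a in A)

A = (1, -2, 3, 0)
B = (4, 0, -1, 2)

print(add(A, B))    # (5, -2, 2, 2)
print(scale(3, A))  # (3, -6, 9, 0)

# spot checks of commutativity and the two distributivity properties
assert add(A, B) == add(B, A)
assert scale(2, add(A, B)) == add(scale(2, A), scale(2, B))
assert add(scale(2, A), scale(3, A)) == scale(5, A)
```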

Furthermore, the special vectors deserve special attention.

Definition. The zero vector in ${\bf R}^n$ has all zero components: $$0=<0,...,0>.$$

Definition. The negative vector of a vector $A$ in ${\bf R}^n$ has its components the negatives of those of $A$: $$-A=<-a_1,...,-a_n>.$$

Exercise. Prove the eight axioms of vector space for the algebraic operations defined this way for (a) ${\bf R}^3$, (b) ${\bf R}^n$.

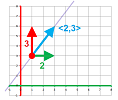

The reason for the word “component” becomes clear: a vector $A$ is decomposed into the sum of vectors each of which is aligned with one of the axes; for example, $$A=<3,2>=<3,0>+<0,2>.$$ They are called the component vectors of $A$. We can take this analysis one step further with scalar multiplication: $$A=<3,2>=<3,0>+<0,2>=3<1,0>+2<0,1>.$$ Then, similarly, any vector can be represented in such a way: $$<a,b>=a<1,0>+b<0,1>.$$

We use the following notation for these vectors in ${\bf R}^2$: $$i=<1,0>,\ j=<0,1>.$$ Then, $$<a,b>=ai+bj.$$

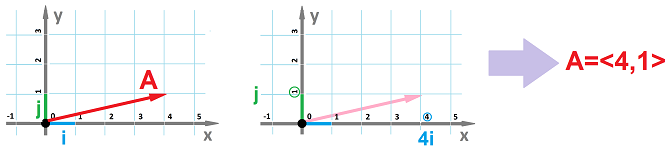

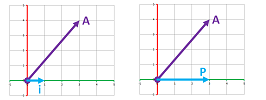

Example. Below we have: $$4i+j=<4,1>.$$

$\square$

Thus, representing a vector in terms of its components is just a way (a single way in fact) to represent it in terms of a pair of specified unit vectors aligned with the axes.

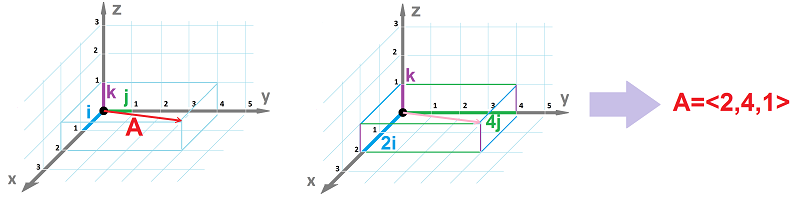

We, furthermore, use the following notation for these vectors in ${\bf R}^3$: $$i=<1,0,0>,\ j=<0,1,0>,\ k=<0,0,1>.$$ They are called the basis vectors. For every vector, we have the following: $$<a,b,c>=ai+bj+ck.$$

Example. Below we have: $$2i+4j+k=<2,4,1>.$$

$\square$

Definition. The basis vectors in ${\bf R}^n$ are defined and denoted by $$\begin{array}{ll} e_1=<1,0,0,...,0>,\\ e_2=<0,1,0,...,0>,\\ ...\\ e_n=<0,0,0,...,1>.\\ \end{array}$$
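The decomposition $<a,b,c>=ai+bj+ck$ extends to any dimension: a vector is the sum of its components multiplied by the corresponding basis vectors. The following is a minimal sketch in Python, assuming vectors are stored as tuples; the function names `basis_vector` and `from_components` are hypothetical, introduced only for this illustration.

```python
def basis_vector(i, n):
    """The basis vector e_i in R^n (i counts from 1, as in the text)."""
    return tuple(1 if j == i else 0 for j in range(1, n + 1))

def add(A, B):
    """Component-wise sum of two vectors."""
    return tuple(a + b for a, b in zip(A, B))

def scale(c, A):
    """Component-wise multiple of a vector."""
    return tuple(c * a for a in A)

def from_components(A):
    """Rebuild A as the sum a_1 e_1 + ... + a_n e_n."""
    n = len(A)
    total = (0,) * n
    for i, a in enumerate(A, start=1):
        total = add(total, scale(a, basis_vector(i, n)))
    return total

print(basis_vector(2, 4))          # (0, 1, 0, 0)
print(from_components((2, 4, 1)))  # (2, 4, 1), i.e., 2i + 4j + k
```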

Of course, choosing a different Cartesian system will produce a new set of basis vectors! We can now understand the Cartesian system as the origin and the basis vectors instead of the origin and the axes. The choice of the basis vectors is dictated by the problem to be solved.

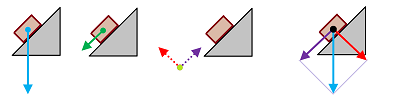

Example (compound motion). Suppose we are to study the motion of an object sliding down a slope. Even though the gravitation is pulling it vertically down, the motion is restricted to the surface of the slope and, therefore, is linear. It is then beneficial to choose the first basis vector $i$ to be parallel to the surface and the second $j$ perpendicular.

The gravity force is then decomposed into the sum of two vectors. It is the first one that affects the object and is to be analyzed in order to find the acceleration (Newton's Second Law). The second is perpendicular and is cancelled by the resistance of the surface (Newton's Third Law). $\square$

Example (investing). Even when we deal with the abstract spaces ${\bf R}^n$, such decompositions may be useful. For example, an investment advice might be to hold the proportion of stocks and bonds $1$-to-$2$. We plot each possible portfolio as a point on the $xy$-plane, where $x$ is the amount of stocks and $y$ is the amount of bonds in it. Then the “ideal” portfolios lie on the line $y=2x$. Furthermore, how do we evaluate how well portfolios follow this advice? We choose the first basis vector to be $i=<1,2>$, which lies on this line, and the second perpendicular to it, $j=<-2,1>$.

Then the first coordinate -- with respect to this new coordinate system -- of your portfolio reflects how far you have followed the advice and the second how much you've deviated from it. Now we just need to learn how to compute angles in such a space... $\square$

Lengths of vectors

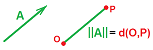

A vector is a directed segment and one of its attributes is its length. The meaning of this number is clear if we look at the vector $PQ$ as two points $P$ and $Q$.

Definition. The length of a vector is defined to be the distance between its initial and terminal points: $$||PQ|| = d(P,Q).$$ This number is also called the magnitude or the norm of the vector.

The notation resembles the absolute value and not by accident: it is the same thing in the $1$-dimensional case, ${\bf R}$.

The properties of distance -- between points $P$ and $Q$ -- will give us the properties of the magnitude -- of the vector $PQ$.

First, the Positivity:

- $d(P,Q)\ge 0$; and $d(P,Q)=0$ if and only if $P=Q$.

It follows that

- $||PQ||\ge 0$; and $||PQ||=0$ if and only if $P=Q$,

or

- $||A||\ge 0$; and $||A||=0$ if and only if $A=0$.

Second, the Symmetry:

- $d(P,Q)=d(Q,P)$.

It follows that

- $||PQ||=||QP||$,

or

- $||A||=||-A||$.

Third, the Triangle Inequality:

- $d(P,Q)+d(Q,S)\ge d(P,S)$.

It follows that

- $||PQ||+||QS||\ge ||PS||$,

or

- $||A||+||B||\ge ||A+B||$.

That is how the magnitude interacts with vector addition. What about scalar multiplication?

There is another convenient property, the Homogeneity:

- $||c\cdot A||=|c|\cdot ||A||$.

In other words, as far as the length is concerned, the sign of the stretching factor doesn't matter: we just multiply the length by the absolute value of this factor. Note that the Symmetry property above is incorporated into this new property because $-A=(-1)A$.

These properties are applicable to all dimensions and are used to manipulate vector expressions.

Whenever only the directions of the vectors matter, the following is a convenient concept.

Definition. For any vector $X\ne 0$, its unit vector is given by: $$\frac{X}{||X||}.$$ Also any vector of length $1$ is called a unit vector.

Theorem. Two vectors with equal, or opposite, unit vectors: $$\frac{V}{||V||}=\pm\frac{W}{||W||},$$ are multiples of each other: $$V=cW.$$

Exercise. Prove the theorem.
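The unit vector is straightforward to compute once the length is available. Below is a minimal sketch in Python, assuming the Euclidean length and vectors stored as tuples of components; the names `norm` and `unit` and the sample vectors are our own. The vectors $V$ and $W=-2V$ are chosen so that their unit vectors are opposite.

```python
import math

def norm(A):
    """Euclidean length of a vector given by its components."""
    return math.sqrt(sum(a * a for a in A))

def unit(A):
    """The unit vector A/||A||; undefined for the zero vector."""
    n = norm(A)
    if n == 0:
        raise ValueError("the zero vector has no direction")
    return tuple(a / n for a in A)

V = (3.0, 4.0)
W = (-6.0, -8.0)  # W = -2V

print(unit(V), norm(unit(V)))
print(unit(W), norm(unit(W)))
```

Both results have length $1$, and they differ only by sign, in agreement with $V=cW$ for $c=-1/2$.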

Example (Newton's Law of Gravity). Recall that the force of gravity between two objects of masses $M$ and $m$ located at points $O$ and $P$ is given by: $$f(P)= G \frac{mM}{d(O,P)^2}.$$ It is a function of three variables, or function of points in this space with real values. However, the law adds to the formula the statement that the force is directed from $P$ to $O$. This is an implicit language of vectors: the direction of the force depends on the direction of the location vector. Let's sort this out.

We have two vectors:

- The gravity is a force and, therefore, a vector.

- The location of the second object can also be represented as a vector (with the initial point at the first object).

If $X=OP$, we can re-write the formula as follows: $$||F(X)|| = G \frac{mM}{||X||^2}.$$ But what about the directions?

Let's derive the vector form of the law. The law states that the force of gravity affecting either of the two objects is

- directed towards the other object.

In other words, $F$ points in the direction opposite to that of $X$, i.e., in the direction of $-X$. Therefore, the unit vectors of $F$ and $-X$ are equal: $$\frac{F}{||F||}=-\frac{X}{||X||}.$$ Therefore, they are multiples of each other: $$F(X)=c(-X),$$ with $c>0$. That's all we need except for the coefficient. We now use Homogeneity to find it: $$G \frac{mM}{||X||^2}=||F||=|c|\cdot ||(-X)||=|c|\cdot||X||,$$ so that $c=G\frac{mM}{||X||^3}$. The final form is the following: $$F(X)=-G\frac{mM}{||X||^3}X.$$

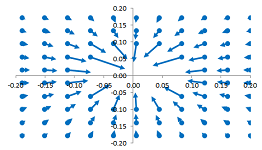

Now both the input and the output are $3$-dimensional vectors! How do we illustrate this new kind of function? First, we think of the input as a point and the output as a vector and then we attach the latter to the former. Below, we plot vector $F(X)$ starting at location $X$ on the plane ($z=0$):

The limit description of the asymptotic behavior takes the following form: $$||F(X)||\to +\infty \text{ as } ||X||\to 0\text{ and }||F(X)||\to 0 \text{ as } ||X||\to +\infty.$$ $\square$
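The vector form of the law can be compared with the scalar form numerically. The following is a minimal sketch in Python, assuming SI units and an approximate value of the constant $G$; the masses and the location are made-up numbers, and the function names `norm` and `gravity` are our own.

```python
import math

G = 6.674e-11  # gravitational constant in SI units (approximate value)

def norm(X):
    """Euclidean length of a vector given by its components."""
    return math.sqrt(sum(x * x for x in X))

def gravity(X, m, M):
    """F(X) = -G m M X / ||X||^3, where X = OP is the location vector."""
    r = norm(X)
    if r == 0:
        raise ValueError("the force is undefined at the origin")
    c = G * m * M / r ** 3
    return tuple(-c * x for x in X)

X = (3.0, 4.0, 0.0)  # hypothetical location, ||X|| = 5
m, M = 2.0, 5.0      # hypothetical masses

F = gravity(X, m, M)
# the magnitude of the vector agrees with the scalar form of the law
print(norm(F), G * m * M / norm(X) ** 2)
```

Doubling the distance, say to $(6,8,0)$, divides the magnitude by $4$, while the direction of $F$ remains opposite to that of $X$.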

When a Cartesian system is provided, we have the Euclidean metric, i.e., the distance between points $P$ and $Q$ in ${\bf R}^3$ with coordinates $(x,y,z)$ and $(x',y',z')$ respectively is $$d(P,Q)=\sqrt{(x-x')^2+(y-y')^2+(z-z')^2}.$$

Theorem. The length of vector $A$ in ${\bf R}^3$ with components $a,b,c$ is equal to $$||A||=\sqrt{a^2+b^2+c^2}.$$

As a summary, these are the properties of the magnitudes of vectors.

Theorem (Axioms of Normed Space). For any vectors $A,B$ in ${\bf R}^3$ and any real $c$, the following properties are satisfied:

- 1. $||A||\ge 0$, and $||A||=0$ if and only if $A=0$;

- 2. $||A||+||B||\ge ||A+B||$;

- 3. $||c\cdot A||=|c|\cdot ||A||$.

These geometric properties have been justified following the familiar geometry of the “physical space” ${\bf R}^3$. However, they also serve as a starting point for further development of linear algebra. We define the new geometry of the abstract space ${\bf R}^n$ of vectors as follows.

Definition. The norm of a vector $$A=<a_1,...,a_n>$$ in ${\bf R}^n$ is defined to be: $$||A||=\sqrt{\sum_{k=1}^n a_k^2}.$$

We can now demonstrate that these “axioms” are still satisfied.

Exercise. Demonstrate independently that our formula for the norm satisfies these three properties.
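Before attempting a proof, one can test the three properties on sample vectors. Here is a minimal sketch in Python, assuming vectors are stored as tuples; the vectors in ${\bf R}^4$ are made up for illustration, and a few passing checks are, of course, not a proof.

```python
import math

def norm(A):
    """The norm in R^n: the square root of the sum of squared components."""
    return math.sqrt(sum(a * a for a in A))

def add(A, B):
    """Component-wise sum of two vectors."""
    return tuple(a + b for a, b in zip(A, B))

def scale(c, A):
    """Component-wise multiple of a vector."""
    return tuple(c * a for a in A)

A = (1, 2, 2, 4)   # ||A|| = sqrt(25) = 5
B = (0, 3, 0, -4)  # ||B|| = sqrt(25) = 5
c = -3

# 1. Positivity
assert norm(A) >= 0 and norm((0, 0, 0, 0)) == 0
# 2. Triangle Inequality
assert norm(A) + norm(B) >= norm(add(A, B))
# 3. Homogeneity
assert math.isclose(norm(scale(c, A)), abs(c) * norm(A))

print(norm(A), norm(B), norm(add(A, B)))
```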

Parametric curves

Functions process an input of any nature and produce an output of any nature.

In general, we represent a function diagrammatically as a black box that processes the input and produces the output: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccccc} \text{input} & & \text{function} & & \text{output} \\ x & \mapsto & \begin{array}{|c|}\hline\quad f \quad \\ \hline\end{array} & \mapsto & y \end{array}$$

Convention. We will use upper case letters for the functions the outputs of which are (or may be) multidimensional, such as points and vectors: $$F,\ G,\ P,\ Q,\ ...,$$ and lower case letters for the functions with numerical outputs: $$f,\ g,\ h,\ ...$$

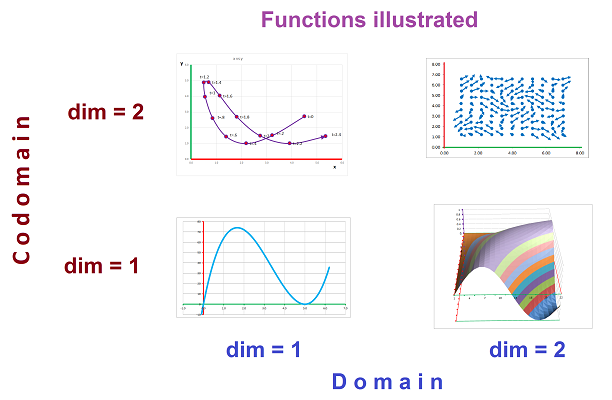

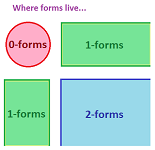

Functions in multidimensional spaces take points or vectors as the input and produce points or vectors of various dimensions as the output. We can say that the input $X$ is in ${\bf R}^n$ and the output $U=F(X)$ is in ${\bf R}^m$: $$\begin{array}{llll} F:&X&\mapsto&U\\ &\text{in }{\bf R}^n&&\text{in }{\bf R}^m \end{array}$$ Then, the domain of such a function is in ${\bf R}^n$ and the range (image) is in ${\bf R}^m$. The domain can be less than the whole space. In fact, the function can be defined on the nodes of a partition of a subset of ${\bf R}^n$. Below we illustrate the four possibilities for $n=1,2$ and $m=1,2$:

In addition to the usual functions, we see

- a parametric curve $P:t\mapsto (x,y)$ or $P:t\mapsto <x,y>$,

- a function of two variables $F:(x,y)\mapsto z$ or $F:<x,y>\mapsto z$, and

- a vector field on the plane $V:(x,y)\mapsto <u,v>$.

We will review these three items starting with parametric curves. We need to learn how, instead of treating them one axis at a time, to study them as functions with multidimensional inputs and outputs. Vector algebra will be especially useful.

By a parametric curve we will mean

- any function of the real variable, i.e., the domain lies inside ${\bf R}$, and

- with its values in ${\bf R}^m$ for some $m=1,2,3...$.

In this section we will limit ourselves to the interpretation of these functions via motion. The independent variable is then time and the value is the location.

A point is the simplest curve. Such a curve with no motion is provided by a constant function.

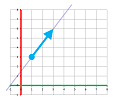

A straight line is the second simplest curve.

We start with lines in ${\bf R}^2$. We already know how to represent straight lines on the plane; the first method is the slope-intercept form: $$y=mx+b.$$ This method does not include vertical lines: the slope is infinite! In our study of curves (specifically to represent motion) on the plane, there are no preferred directions and then it is unacceptable to exclude any straight lines. The second method is implicit: $$px+qy=r.$$ The case of $p\ne 0,\ q=0$ gives us a vertical line. The third method is parametric. It has a dynamic interpretation.

Example (straight motion). Suppose we would like to trace the line that starts at the point $(1,3)$ and proceeds in the direction of the vector $<2,3>$.

We use motion as a starting point as well as a metaphor for parametric curves, as follows. We start moving ($t=0$)

- from the point $P_0=(1,3)$,

- under a constant velocity of $V=<2,3>$.

We move

- $2$ feet per second horizontally, and

- $3$ feet per second vertically.

In terms of vectors, if we are at point $P$ now, we will be at point $P+V$ after one second. For example, we are at $P_1=P_0+V=(1,3)+<2,3>=(3,6)$ at time $t=1$. We then have already two points on our parametric curve $P$: $$P(0)=P_0=(1,3)\ \text{ and }\ P(1)=P_1=(3,6).$$ Of course, these are also the values of our function.

Let's find the formulas for this function. Early on, it's OK to do this component-wise. Then $$P(t)=(x(t),y(t)),$$ and $$x(0)=1,\ x(1)=3\ \text{ and }\ y(0)=3,\ y(1)=6.$$ These functions must be linear; therefore, we have: $$x(t)=1+2t\ \text{ and }\ y(t)=3+3t.$$ This is a parametric curve... but not an acceptable answer if we are to learn how to use vectors!

The four coefficients, of course, come from the specific numbers that give us $P_0$ and $V$. Let's assemble the two coordinate functions into one parametric curve: $$P(t)=(x(t),y(t))=(1+2t,\ 3+3t).$$ This is still not good enough; we still can't see the $P_0$ and $V$ directly! We continue by using vector algebra: $$\begin{array}{lll} P(t)&=(1+2t,\ 3+3t)&\text{...we use vector addition...}\\ &=(1,3)+<2t,\ 3t>&\text{...then scalar multiplication...}\\ &=(1,3)+t<2,\ 3>&\text{...and finally...}\\ &=P_0+tV. \end{array}$$ We have discovered a vector representation of straight motion: $$P(t)=P_0+tV,$$ where $P_0$ is the initial location and $V$ is the (constant) velocity. $\square$

Warning: One can, of course, move along a straight line at a variable velocity.

So,

- position at time $t$ = initial position $\ +\ t\cdot\ $ velocity.

We stated this conclusion before! This time only the context has changed. The pattern is clear: the line starting at the point $(a,b)$ in the direction of the vector $<u,v>$ is represented parametrically as: $$P(t)=(a,b)+t<u,v>.$$ Similarly for dimension $3$: the line starting at the point $(a,b,c)$ in the direction of the vector $<u,v,w>$ is represented as: $$P(t)=(a,b,c)+t<u,v,w>.$$ And so on.

At the next level, we'd rather have no references to either the dimension of the space or the specific coordinates.

Definition. Suppose $P_0$ is a point in ${\bf R}^m$ and $V$ is a vector. Then the parametric curve of the uniform motion through $P_0$ with the initial velocity of $V$ is the following: $$P(t)=P_0+tV.$$ Then, the line through $P_0$ in the direction of $V$ is the path (image) of this parametric curve.

Stated for $m=1$, the definition produces the familiar point-slope form! The rate of change is a single number (the slope) because the change is entirely within the $y$-axis. What has changed is the context: there are infinitely many directions in ${\bf R}^2$ for change. That is why the change and the rate of change are vectors. But the equation looks exactly the same... even though each letter may contain an unlimited amount of information!

Example (prices). The definition applies to the abstract spaces. If ${\bf R}^m$ is the space of prices (of stocks or commodities), we might have $m=10,000$. The prices recorded continuously will produce a parametric curve and this curve might be a straight line. This happens when the prices are growing (or declining) proportionally but, possibly, at different rates. Also, in the short term this curve is likely to look like a straight line: the most recent change of each price is recorded and then the same change is predicted for the next time period. In each column we use the same recursive formula for the $k$th price: $$x_k(t+\Delta t)=x_k(t)+v_k\Delta t,$$ where $v_k$ is the $k$th rate of change.

The table is our $10,000$-dimensional curve! Can we visualize such a curve in any way? We pick two columns at a time and plot that curve on the plane. Since these columns correspond to the axes, we are plotting a “shadow” of our curve cast on the corresponding coordinate plane. They are all straight lines. $\square$
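The recursive formula and the "shadows" can be sketched in a few lines of Python. Everything specific here is an assumption made for illustration: four prices instead of $10,000$, the starting prices, the rates, the time step, and the helper names `step`, `shadow`, and `collinear`.

```python
def step(prices, rates, dt):
    """One time step: x_k(t + dt) = x_k(t) + v_k * dt for every price k."""
    return [x + v * dt for x, v in zip(prices, rates)]

prices = [10.0, 20.0, 5.0, 8.0]   # made-up initial prices
rates = [1.0, -2.0, 0.5, 0.0]     # made-up rates of change v_k
dt = 0.5                          # made-up time step

# Each row of the table is one point of the curve in R^4.
table = [prices]
for _ in range(4):
    table.append(step(table[-1], rates, dt))

def shadow(table, i, j):
    """Project the curve onto the coordinate plane of columns i and j."""
    return [(row[i], row[j]) for row in table]

def collinear(points, tol=1e-9):
    """Check that all points lie on the line through the first two."""
    (x0, y0), (x1, y1) = points[0], points[1]
    return all(abs((x1 - x0) * (y - y0) - (y1 - y0) * (x - x0)) <= tol
               for x, y in points)

print(shadow(table, 0, 1))
print(collinear(shadow(table, 0, 1)))
```

Picking any other pair of columns gives another shadow, and with constant rates each of them passes the same collinearity check.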

Exercise. Find a parametric representation of the line through two distinct points $P$ and $Q$.

In the physical space, a straight line is followed by an object when there are no forces at play. Even a constant force leads to acceleration which may change the direction of the motion.

Example (constant velocity). Recall these recursive formulas that give the location as a function of time when the velocity is constant ($k=0,1,...$): $$\begin{array}{llll} x:&p_{k+1}&=p_k&+v\Delta t;\\ y:&q_{k+1}&=q_k&+u\Delta t. \end{array}$$

These quantities are now combined into points on the plane: $$P_k=(p_k,q_k),$$ and, with the constant velocity $V=<v,u>$, the equations take a vector form too: $$P_{k+1}=P_k+V\Delta t.$$ $\square$
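The recursion can be run directly. The sketch below reuses $P_0=(1,3)$ and $V=<2,3>$ from the straight motion example; the time step $\Delta t=0.5$ and the function name `constant_velocity_path` are assumptions made for illustration.

```python
def constant_velocity_path(p0, v, dt, steps):
    """Apply P_{k+1} = P_k + V * dt repeatedly and collect the points P_0, P_1, ..."""
    path = [p0]
    for _ in range(steps):
        last = path[-1]
        path.append(tuple(c + vc * dt for c, vc in zip(last, v)))
    return path

path = constant_velocity_path((1.0, 3.0), (2.0, 3.0), 0.5, 4)
for k, point in enumerate(path):
    print(k, point)
```

After $k$ steps the recursion lands on $P_0+k\Delta t\cdot V$, which is the value of the parametric curve $P(t)=P_0+tV$ at $t=k\Delta t$.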

Example (thrown ball). Let's review the dynamics of a thrown ball. A constant force causes the velocity to change linearly, just as the location in the last example. How does the location change this time?

In the horizontal direction, as there is no force changing the velocity, the latter remains constant. Meanwhile, the vertical velocity is constantly changed by gravity. The dependence of the height on the time is quadratic. The path of the ball will appear to an observer -- from the right angle -- as a curve:

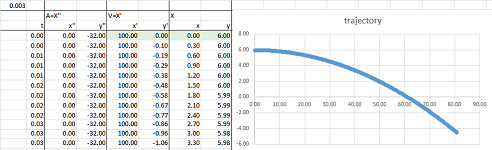

A falling ball is subject to these accelerations, horizontal and vertical: $$x:\ a_{k+1}=0;\quad y: a_{k+1}=-g.$$ Now recall the setup considered previously: from a $200$ feet elevation, a cannon is fired horizontally at $200$ feet per second.

The initial conditions are:

- the initial location, $x:\ p_0=0$ and $y:\ q_0=200$;

- the initial velocity, $x:\ v_0=200$ and $y:\ u_0=0$.

Then we have two pairs of recursive equations -- for the location in terms of the velocity and the velocity in terms of acceleration -- independent of each other: $$\begin{array}{lllllll} x:& v_{k+1}&=v_0, & &p_{k+1}&=p_k&+v_{k+1}\Delta t;\\ y:& u_{k+1}&=u_k&-g\Delta t, &q_{k+1}&=q_k&+u_{k+1}\Delta t. \end{array}$$ These are the formulas in the vector notation: $$\begin{array}{llll} V_{k+1}&=V_{k}&+A&\cdot\Delta t,\\ P_{k+1}&=P_{k}&+V_{k+1}&\cdot\Delta t. \end{array}$$ $\square$
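The vector recursion can be sketched in Python for the cannon setup. The code follows the vector formulas as written, with the updated velocity $V_{k+1}$ used in the location step. The values $g=32$ feet per second squared and $\Delta t=0.5$, as well as the function name `simulate`, are assumptions made for illustration.

```python
def simulate(p0, v0, a, dt, steps):
    """Iterate V_{k+1} = V_k + A*dt, then P_{k+1} = P_k + V_{k+1}*dt."""
    p, v = p0, v0
    path = [p]
    for _ in range(steps):
        v = tuple(vi + ai * dt for vi, ai in zip(v, a))   # velocity from acceleration
        p = tuple(pi + vi * dt for pi, vi in zip(p, v))   # location from velocity
        path.append(p)
    return path

g = 32.0                 # assumed gravitational acceleration, ft/s^2
P0 = (0.0, 200.0)        # fired from a 200 feet elevation
V0 = (200.0, 0.0)        # fired horizontally at 200 feet per second
A = (0.0, -g)

path = simulate(P0, V0, A, 0.5, 4)
for k, point in enumerate(path):
    print(k, point)
```

Nothing in `simulate` refers to the $x$- or $y$-axis by name; the same function runs unchanged with three-component tuples.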

The advantage of the vector approach is that the choice of the coordinate system is no longer a concern!

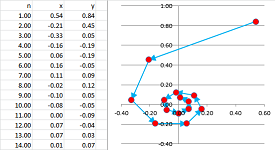

Example (recursive formulas). In dimension $2$, for example, we don't have to align the $x$-axis with the direction of the throw and in dimension $3$ we don't have to align the $z$-axis with the vertical direction. Nonetheless, let's start with the former case. A $6$-foot man throws -- straight forward -- a ball with the speed of $100$ feet per second. If the throw is along the $x$-axis and the $y$-axis is vertical, we have $$A=<0,-32>,\ V_0=<100,0>,\ P_0=(0,6).$$ This data goes into the first row of our table for the columns marked $x' ', y' '$, $x',y'$, and $x,y$ respectively.

We apply the recursive formulas given above. In the spreadsheet,

- the velocity is computed from the acceleration, and

- the location is computed from the velocity.

How does our vector approach differ from our previous treatment of the flight of a ball? Instead of three columns for $x' ', x', x$ and then three columns for $y' ', y', y$, one can see how the two components of acceleration, velocity, and location are combined into vectors contained in two columns each: $x' ',y' '$, then $x',y'$, then $x,y$. The formula is almost the same as before: $$\texttt{=R[-1]C+(RC[-2]-R[-1]C[-2])*R2C1}$$

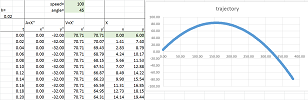

Next, an angled throw... The only change is the vector of initial velocity: $$A=<0,-32>,\ V_0=<100\cos \alpha,\ 100\sin \alpha>,\ P_0=(0,6),$$ where $\alpha$ is the angle of the throw.

$\square$
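The initial velocity of the angled throw is easy to compute. Here is a small sketch; the function name `initial_velocity` and the sample angle of $30$ degrees are assumptions made for illustration.

```python
import math

def initial_velocity(speed, alpha):
    """Velocity vector <speed*cos(alpha), speed*sin(alpha)>, alpha in radians."""
    return (speed * math.cos(alpha), speed * math.sin(alpha))

V0_flat = initial_velocity(100.0, 0.0)                  # the straight-forward throw
V0_angled = initial_velocity(100.0, math.radians(30))   # a throw at 30 degrees

print(V0_flat)
print(V0_angled)
print(math.hypot(*V0_angled))   # the speed, i.e., the length of the vector
```

Whatever the angle, the length of the vector stays equal to the speed of the throw; only its direction changes.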

Example (continuous motion). Now the continuous case... Starting with the physics, $$\begin{cases} x' '&=&0,\\ y' '&=&-g, \end{cases}$$ we integrate -- coordinate-wise -- once: $$\begin{cases} x'&=&&&v_x, & & x'(0)=v_x&\text{is the initial horizontal velocity},\\ y'&=&-gt&+&v_y,& & y'(0)=v_y&\text{is the initial vertical velocity}; \end{cases}$$ and twice: $$\begin{cases} x&=&&&v_xt&+&p_x, & & x(0)=p_x&\text{is the initial horizontal position},\\ y&=&-\tfrac{1}{2}gt^2&+&v_yt&+&p_y,& & y(0)=p_y&\text{is the initial vertical position}. \end{cases}$$ Thus, we have: $$\begin{cases} \text{depth }&=&\text{ initial depth }&+&\text{ initial horizontal velocity }&\cdot\text{ time },\\ \text{height}&=&\text{ initial height }&+&\text{ initial vertical velocity }&\cdot\text{ time }&-\tfrac{1}{2}g\cdot\text{ time }^2. \end{cases}$$ We take this solution to the next level by assembling these components into vectors just as in the last example. $$\begin{array}{ccccccc} \text{location}&=&\text{ initial location}&+&\text{ initial velocity }&\cdot\text{ time }&+&<0,-\tfrac{1}{2}g\cdot\text{ time }^2>. \end{array}$$ The last term needs work. The zero represents the zero horizontal acceleration while $-g$ is the vertical acceleration. Then the last term is the acceleration times $\frac{t^2}{2}$. Algebraically, we have: $$\begin{array}{ccccccc} \left[\begin{array}{l}x\\y\end{array}\right]&=&\left[\begin{array}{l}p_x\\p_y\end{array}\right]&+&\left[\begin{array}{l}v_x\\v_y\end{array}\right]&\cdot t&+&\left[\begin{array}{r}0\\-g\end{array}\right] &\cdot\frac{t^2}{2}. \end{array}$$ $\square$

The nature of the acceleration is irrelevant; we only need it to be constant.

Definition. Suppose $P_0$ is a point in ${\bf R}^m$ and $V_0,A$ are vectors. Then the parametric curve of uniformly accelerated motion through $P_0$ with the initial velocity of $V_0$ and acceleration $A$ is: $$P(t)=P_0+V_0\cdot t+A\cdot \frac{t^2}{2}.$$
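The formula in the definition can be evaluated coordinate by coordinate. The sketch below applies it to the cannon setup from the thrown ball example; the value $g=32$ and the function name `accelerated_motion` are assumptions made for illustration.

```python
def accelerated_motion(p0, v0, a, t):
    """Location at time t: P(t) = P0 + V0*t + A*t^2/2, in any dimension."""
    return tuple(p + v * t + acc * t * t / 2 for p, v, acc in zip(p0, v0, a))

P0 = (0.0, 200.0)    # 200 feet elevation
V0 = (200.0, 0.0)    # fired horizontally at 200 feet per second
A = (0.0, -32.0)     # assumed g = 32 ft/s^2, pointing down

for t in (0.0, 1.0, 2.0):
    print(t, accelerated_motion(P0, V0, A, t))
```

Setting `A` to the zero vector reduces the function to the uniform motion $P(t)=P_0+tV_0$.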

Compared to the uniform motion, we have an extra term, which disappears when $A=0$. Just as in the $1$-dimensional case, a constant acceleration produces a quadratic motion!

Exercise. Show that the path of this parametric curve is a parabola.

The values of a function represented by a parametric curve lie in ${\bf R}^m$ as points but can also be seen as vectors. For example, we can re-write the familiar parametric curve of points: $$P(t)=P_0+V_0\cdot t+A\cdot \frac{t^2}{2},$$ as one of vectors: $$R(t)=R_0+V_0\cdot t+A\cdot \frac{t^2}{2}.$$ Instead of passing through point $P_0$ it passes through the end point of vector $R_0=OP_0$, which is the same thing. And, of course, the end of vector $R(t)$ is the point $P(t)$. The advantage of the latter approach is that it allows us to apply vector operations to the curves.

A more general approach to parametric curves, as well as their calculus, is presented in Chapter 17.

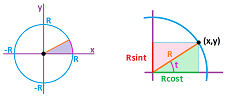

Example (circle transformed). Recall how we parametrized the unit circle using the angle as the parameter. Here, the $x$- and $y$-coordinates of a point at angle $t$ are $\cos t$ and $\sin t$ respectively: $$x=\cos t,\ y=\sin t.$$ The values of $t$ may be the nodes of a partition of an interval such as $[0,2\pi]$ or run through the whole interval.

We can also look at this formula as a parametrization with respect to time. Then this is a record of motion with a constant speed or, in other words, a constant angular velocity. Now, this is the vector representation of this curve: $$R(t)=<\cos t,\sin t>.$$ So, applying vector operations to this curve will give us new curves, just as in the $1$-dimensional case (Chapter 4). For example, using scalar multiplication by $2$ on all vectors means stretching radially the whole space. We then discover that the curve in the plane given by: $$Q(t)=2R(t)=2<\cos t,\sin t>,$$ is a parametric curve of the circle of radius $2$.

Similarly, using vector addition with $W=<1,2>$ on all vectors means shifting the whole space by this vector. We then discover that the curve in the plane given by: $$S(t)=W+2R(t)=(1,2)+2<\cos t,\sin t>,$$ is a parametric curve of the circle of radius $2$ centered at $(1,2)$.

And so on with other transformations of the plane. $\square$
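A quick numerical check of the last claim: every point of the curve $(1,2)+2<\cos t,\sin t>$ should be at distance $2$ from $(1,2)$. The sketch below samples the parameter at the nodes of a partition of $[0,2\pi]$; the function names and the number of nodes are assumptions made for illustration.

```python
import math

def unit_circle(t):
    """R(t) = <cos t, sin t>."""
    return (math.cos(t), math.sin(t))

def stretched_shifted(t, center=(1.0, 2.0), radius=2.0):
    """S(t) = center + radius * R(t)."""
    x, y = unit_circle(t)
    return (center[0] + radius * x, center[1] + radius * y)

n = 12   # number of intervals in the partition of [0, 2*pi]
nodes = [2 * math.pi * i / n for i in range(n + 1)]

distances = [math.dist(stretched_shifted(t), (1.0, 2.0)) for t in nodes]
print(distances)
```

Each printed distance agrees with $2$ up to rounding, as expected for a circle of radius $2$ centered at $(1,2)$.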

Partitions of the Euclidean space

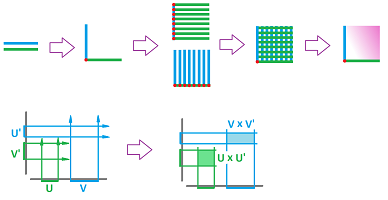

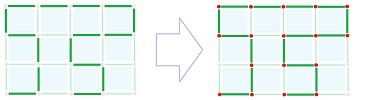

In Parts I and II, we used partitions of intervals, as well as the whole real line, in order to study incremental change. This time, we need partitions of the $n$-dimensional Euclidean space. The building blocks will come from partitions of the axes.

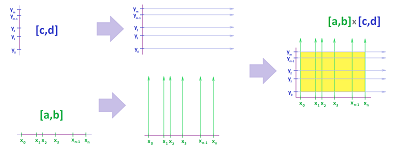

For dimension $2$, these are rectangles. An interval in the $x$-axis: $$[a,b]=\{x:\ a\le x\le b\},$$ and an interval in the $y$-axis: $$[c,d]=\{y:\ c\le y\le d\},$$ make a rectangle in the $xy$-plane: $$[a,b]\times [c,d]=\{(x,y):\ a\le x\le b,\ c\le y\le d\}.$$

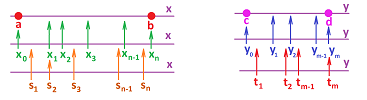

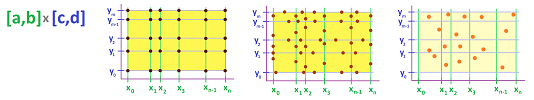

A partition of the rectangle $[a,b]\times [c,d]$ is made of smaller rectangles constructed in the same way as above, from partitions of the intervals $[a,b]$ in the $x$-axis and $[c,d]$ in the $y$-axis.

We start with a partition of an interval $[a,b]$ in the $x$-axis into $n$ intervals: $$ [x_{0},x_{1}],\ [x_{1},x_{2}],\ ... ,\ [x_{n-1},x_{n}],$$ with $x_0=a,\ x_n=b$. Then we do the same for $y$. We partition an interval $[c,d]$ in the $y$-axis into $m$ intervals: $$ [y_{0},y_{1}],\ [y_{1},y_{2}],\ ... ,\ [y_{m-1},y_{m}],$$ with $y_0=c,\ y_m=d$.

The lines $y=y_j$ and $x=x_i$ create a partition of the rectangle $[a,b]\times [c,d]$ into smaller rectangles $[x_{i},x_{i+1}]\times [y_{j},y_{j+1}]$. The points of intersection of these lines, $$X_{ij}=(x_i,y_{j}),\ i=0,1,...,n,\ j=0,1,...,m,$$ will be called the nodes of the partition. So, there are nodes and there are rectangles (tiles); is that it?
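The construction can be sketched in Python. For simplicity, the code assumes equally spaced nodes (the text does not require this) and counts the nodes on the boundary of the rectangle, those with $x_0=a$ or $y_0=c$, along with the rest; the rectangle $[0,3]\times[0,2]$ and the name `uniform_partition` are also assumptions made for illustration.

```python
def uniform_partition(a, b, n):
    """Nodes x_0 = a, x_1, ..., x_n = b of a partition into n equal intervals."""
    return [a + (b - a) * i / n for i in range(n + 1)]

n, m = 3, 2
xs = uniform_partition(0, 3, n)   # partition of [a, b] = [0, 3]
ys = uniform_partition(0, 2, m)   # partition of [c, d] = [0, 2]

# Nodes: intersections of the lines x = x_i and y = y_j.
nodes = [(x, y) for x in xs for y in ys]

# Tiles: rectangles [x_i, x_{i+1}] x [y_j, y_{j+1}], stored as pairs of intervals.
tiles = [((xs[i], xs[i + 1]), (ys[j], ys[j + 1]))
         for i in range(n) for j in range(m)]

print(len(nodes), "nodes")
print(len(tiles), "tiles")
```

With $n$ intervals along $x$ and $m$ along $y$, this produces $(n+1)(m+1)$ nodes and $nm$ tiles.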

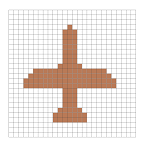

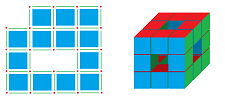

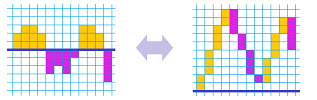

This is how an object can be represented with tiles, or pixels:

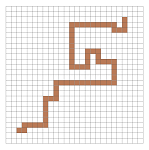

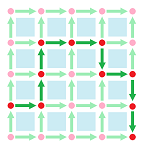

Now, are curves also made of tiles? Such a curve would look like this:

If we look closer, however, this “curve” isn't a curve in the usual sense; it's thick! The correct answer is: curves are made of edges of the grid:

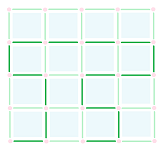

We have discovered that we need to include, in addition to the squares, the “thinner” cells as additional building blocks. The complete decomposition of the pixel is shown below; the edges and vertices are shared with adjacent pixels:

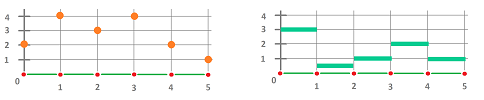

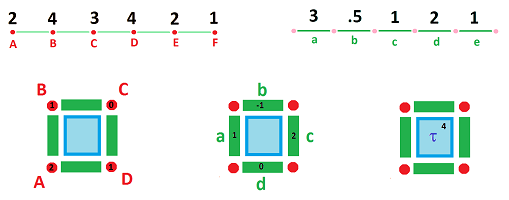

Example (dimension $1$). We start with dimension $n=1$:

In this simplest of partitions, the cells are:

- a node, or a $0$-cell, is $\{k\}$ with $k=...-2,-1,0,1,2,3, ...$;

- an edge, or a $1$-cell, is $[k, k + 1]$ with $k=...-2,-1,0,1,2,3, ...$.

And,

- $1$-cells are attached to each other along $0$-cells.

$\square$

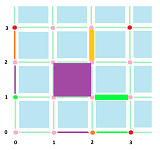

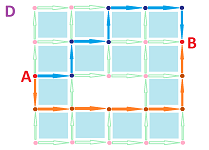

Example (dimension $2$). For the dimension $n=2$ grid, we define cells for all integers $k,m$ as products:

- a node, or a $0$-cell, is $\{k\} \times \{m\}$;

- an edge, or a $1$-cell, is $\{k \} \times [m, m + 1]$ or $[k, k + 1] \times \{m \}$;

- a square, or a $2$-cell, is $[k, k + 1] \times [m, m + 1]$.

And,

- $2$-cells are attached to each other along $1$-cells and, still,

- $1$-cells are attached to each other along $0$-cells.

Cells shown above are:

- $0$-cell $\{3\}\times \{3\}$;

- $1$-cells $[2,3]\times\{1\}$ and $\{2\}\times [2,3]$;

- $2$-cell $[1,2]\times [1,2]$.

$\square$

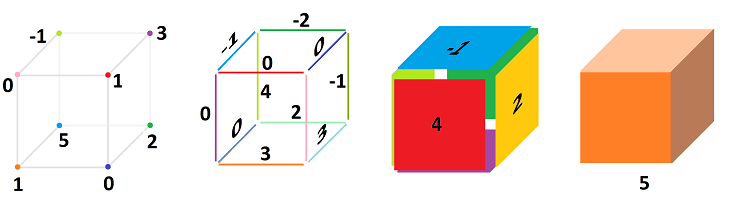

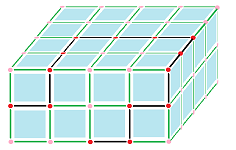

Similarly for dimension $3$, we have boxes. Intervals in the $x$-, $y$-, and $z$-axes: $$[a,b]=\{x:\ a\le x\le b\},\ [c,d]=\{y:\ c\le y\le d\},\ [p,q]=\{z:\ p\le z\le q\},$$ make a box in the $xyz$-space: $$[a,b]\times [c,d]\times [p,q]=\{(x,y,z):\ a\le x\le b,\ c\le y\le d,\ p\le z\le q\}.$$

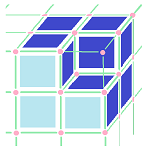

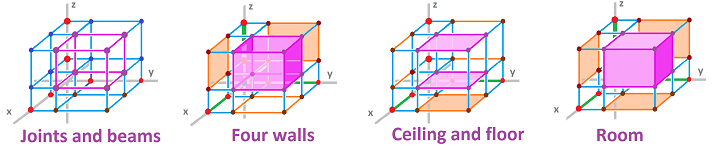

In dimension $3$, surfaces are made of faces of our boxes; i.e., these are tiles:

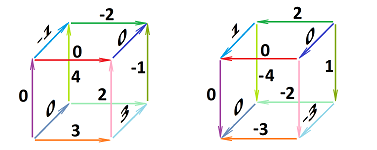

The cell decomposition of the box follows and here, once again, the faces, edges, and vertices are shared:

Example (dimension $3$). For all integers $i,m,k$, we have:

- a node, or a $0$-cell, is $\{i\} \times \{m\}\times \{k\}$;

- an edge, or a $1$-cell, is $\{i \} \times [m, m + 1]\times \{k\}$ etc.;

- a square, or a $2$-cell, is $[i, i + 1] \times [m, m + 1]\times \{k\}$ etc.;

- a cube, or a $3$-cell, is $[i, i + 1] \times [m, m + 1]\times [k, k + 1]$.

$\square$
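The product description makes the cells easy to enumerate. The sketch below lists all cells of a single unit cube $[0,1]\times[0,1]\times[0,1]$ and counts them by dimension; the encoding of a factor as a tuple (one entry for a point, two for an interval) and the function names are assumptions made for illustration.

```python
from collections import Counter
from itertools import product

def cells_of_unit_box(n):
    """All cells of [0,1]^n: each factor is {0}, {1}, or the interval [0,1]."""
    factors = [(0,), (1,), (0, 1)]   # point {0}, point {1}, interval [0,1]
    return list(product(factors, repeat=n))

def dimension(cell):
    """The dimension of a cell is the number of interval factors."""
    return sum(1 for factor in cell if len(factor) == 2)

counts = Counter(dimension(cell) for cell in cells_of_unit_box(3))
for d in sorted(counts):
    print(d, "-cells:", counts[d])
```

The counts match the familiar picture of a cube: $8$ vertices, $12$ edges, $6$ faces, and the cube itself.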

Thus, our approach to decomposition of space, in any dimension, boils down to the following:

- The $n$-dimensional space is composed of cells in such a way that $k$-cells are attached to each other along $(k-1)$-cells, $k=1,2, ...,n$.

The examples show how the $n$-dimensional Euclidean space is decomposed into $0$-, $1$-, ..., $n$-cells in such a way that

- $n$-cells are attached to each other along $(n-1)$-cells,

- $(n-1)$-cells are attached to each other along $(n-2)$-cells,

- ...

- $1$-cells are attached to each other along $0$-cells.

What are those cells exactly?

Definition. In the $n$-dimensional space, ${\bf R}^n$, a cell is the subset given by the product with $n$ components: $$P=I_1\times ... \times I_n,$$ where its $k$th component is either

- a closed interval $I_k=[x_k,x_{k+1}],$ or

- a point $I_k=\{x_k\}.$

The cell's dimension is equal to $m$, and it is also called an $m$-cell, when there are $m$ edges and $n-m$ vertices on this list. Replacing one of the edges in the product with one of its end-points creates an $(m-1)$-cell called a boundary cell of $P$.
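The definition translates into a short computation. In the sketch below, a cell is a tuple of factors, where a one-entry tuple stands for a point and a two-entry tuple for a closed interval; this encoding and the names `dimension` and `boundary_cells` are assumptions made for illustration.

```python
def dimension(cell):
    """Number of interval factors (edges) in the product."""
    return sum(1 for factor in cell if len(factor) == 2)

def boundary_cells(cell):
    """Replace one interval factor with one of its end-points, in every possible way."""
    result = []
    for k, factor in enumerate(cell):
        if len(factor) == 2:
            for endpoint in factor:
                result.append(cell[:k] + ((endpoint,),) + cell[k + 1:])
    return result

square = ((1, 2), (1, 2))        # the 2-cell [1,2] x [1,2]
edges = boundary_cells(square)   # its boundary 1-cells

print(dimension(square))
for edge in edges:
    print(edge, dimension(edge))
```

Each interval factor contributes two boundary cells, one per end-point, so an $m$-cell has $2m$ boundary cells under this definition.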