This site is being phased out.

Discrete forms and cochains

Contents

Calculus and differential forms

Suppose $I$ is a closed interval. In the analysis context, the definite integral over $I$ is often thought of as a function the input of which is any integrable function $f$ while the output is a real number. This idea is revealed by the usual notation for functions as follows: $$G\big( f \big):=\int_I f(x) dx.$$ This point of view is understandable: after all, the Riemann integral is introduced in calculus as the limit of the Riemann sums of $f$. The student then discovers that this function is linear: $$G \big( sf+tg \big)=sG\big( f \big) +tG \big( g \big) ,$$ where $s,t\in {\bf R}$. However, this notation might obscure another important property of integral, the additivity: $$\int_{[a,b]\cup [c,d]} f(x) dx = \int_{[a,b]} f(x) dx+ \int_{[c,d]} f(x) dx,$$ for $a<b<c<d$. We then realize that we should also look at the integral as a function the input of which is an interval, as follows: $$H \big( I \big) := \int_{I} f(x)dx.$$

In higher dimensions, the intervals are replaced with surfaces and solids while the expression $f(x)dx$ is replaced with $f(x,y)dxdy$ and $f(x,y,z)dxdyz$, etc. These “expressions” are called differential forms and they are the ones that determine this new function. That's why we modify the notation as follows: $$\omega \big( I \big) =\int_I \omega.$$ This is an indirect definition of a differential form of dimension $1$ -- it is a function of intervals or, moreover, of $1$-chains. We can see this idea in the new form of the additivity property: $$\omega \big( sI+tJ \big) = s\omega \big( I \big) + t \omega \big( J \big).$$ This function is linear and we recognize it as a $1$-cochain!

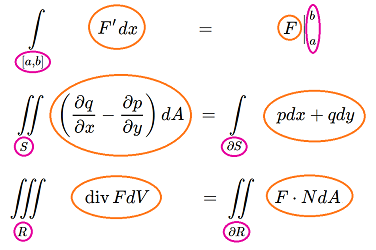

In light of this approach, let's take a look at the integral theorems of vector calculus. There are many of them and, with one for each dimension, maybe too many...

Let's proceed from dimension $3$, look at the formulas, and see what they have in common.

Green's Theorem: $$\iint_{S} \left( \frac{\partial q}{\partial x} - \frac{\partial p}{\partial y} \right) dA = \int_{\partial S} p dx + q dy. $$

Here, the integrals' domains are a solid and its boundary surface respectively.

Gauss' Theorem: $$\iiint_{R} \operatorname{div}F dV = \iint_{\partial R} F \cdot N dA.$$

The domains of integration are a plane region and its boundary curve.

Fundamental Theorem of Calculus: $$\int_{[a,b]} F' dx = F \Big|_{a}^b.$$

In the left-hand side, the integrand is $F' dx$. We think of the right-hand side as an integral too: the integrand is $F$. Then, the domains of integration are a segment and its two end-points: $$[a,b] \text{ and } \{a, b\}= \partial [a,b].$$

What do these three have in common?

Setting aside possible connections between the integrands, the pattern of the domains of integration is clear. The relation is the same in all these formulas:

Now, there must be some kind of a relation for the integrands too. The Fundamental Theorem of Calculus suggests a possible answer:

Of course, for the other two theorems, this simple relation can't apply. We can, however, make sense of this relation if we treat those four integrands as differential forms. The form on the left is what we call the exterior derivative of the form on the right.

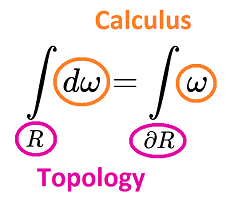

With this approach, we have just one general theorem which includes all three (and many more):

Stokes Theorem: $$\int_R d \omega = \int_{\partial R} \omega.$$

The relation between $R$ and $\partial R$ is a matter of topology. The relation between $d \omega$ and $\omega$ is a matter of calculus, the calculus of differential forms:

Furthermore, as we shall see, the transition from topology to calculus is just algebra!

How do we approximate continuous processes?

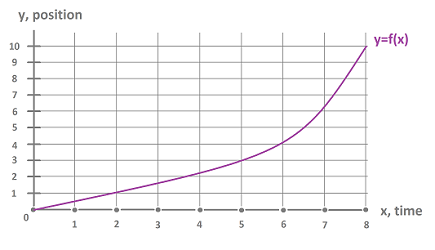

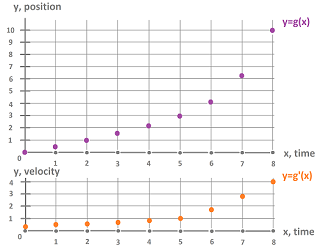

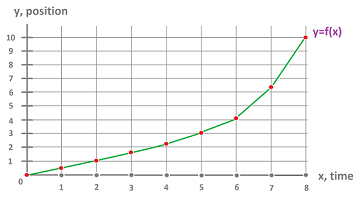

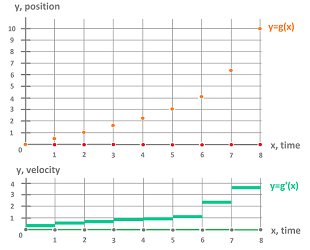

Suppose we have a function, $f$, representing the position, $y$, of your car as a function of time, $x$:

Let's suppose, however, that we know only a “sampled” version of this function, i.e., we only know its values at, say, $0, 1, 2, 4, 5, ...$. These numbers may come from looking occasionally at your odometer. Suppose also that the derivative, $f'$, of this function -- representing the velocity of your car -- is also sampled:

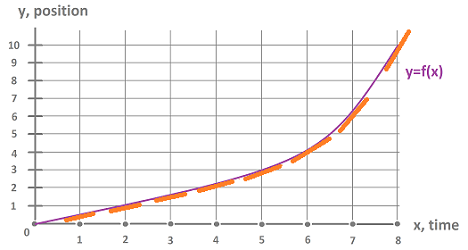

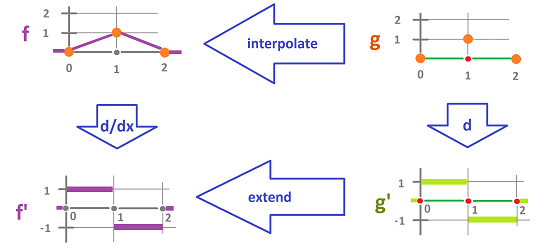

You get those numbers from looking at your speedometer once in a while. Thus, while $f$ and $f'$ are unknown, we have instead two new functions: $g$ and $g'$, defined only at predetermined points of time:

We may call them discrete functions. Now, it would make sense if $g'$ was the derivative of $g$ in some sense. But since the functions are discrete, there is no differentiation or the derivative in the conventional sense. Can we establish such a relation between two discrete functions?

Integral provides another relation between a function and its derivative. The Fundamental Theorem of Calculus states: $$f(8)-f(0) = \displaystyle\int_0^8 f'(x) dx.$$

Now, we would like see such a formula to link the two new functions together: $$g(8)-g(0) \stackrel{?}{=} \displaystyle\int_0^8 g'(x) dx.$$

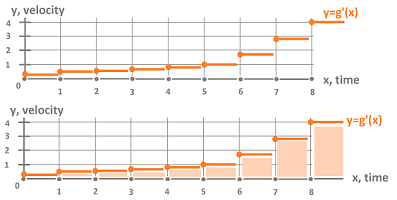

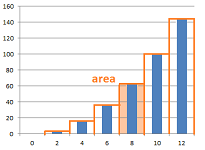

We rely on the conventional understanding of the Riemann integral as the area under the graph and that's why we turn $g'$ into a step-function:

The result is as follows. The right-hand side is: $$g(8)-g(0) =10,$$ but the left-hand side is: $$\displaystyle\int_0^1 g'(x)dx \approx 0.2+0.4+0.5+0.7+0.8+1+1.7+2.9+4 =12.2.$$ Then, the Fundamental Theorem of Calculus fails and, with it, one of the laws of physics!

Of course, we can improve the sampling by taking more sample values (i.e., we look at the dials more often), then the approximation improves too and, mathematically, the Riemann sums above converge to the Riemann integral, the actual displacement!

But what about the convergence of the approximations of the velocity? Suppose $f_n \to f$, does it mean that $f'_n \to f'$? We know that the answer is No. Just consider the famous Weierstrass function: $$f(x) := \displaystyle\sum_{n=0}^{\infty} a^n cos(b^n \pi x),\ 0 < a < 1.$$ This series converges uniformly, so $f$ is continuous. But for $b > 1 + \frac{a}{2} \pi$, the limit function $f$ is nowhere-differentiable.

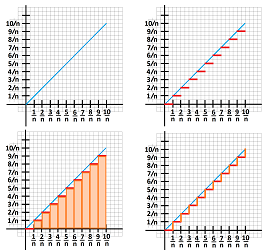

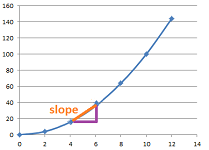

A related example is as follows. From calculus, we know how to use step-functions to approximate the definite integral of a function.

For example, for $f(x)=x,\ x\in [0,1]$, the (left-end) Riemann sum is: $$L_n:=\sum_{i=0}^{n-1} \frac{i}{n}\frac{1}{n}=\frac{1}{n^2}\sum_{i=0}^{n-1} i=\frac{1}{n^2}\frac{n(n-1)}{2} \to \frac{1}{2},$$ as expected. In other words, the areas under the graphs of the approximating step-functions converge to the area under the graph of $f$. However, an attempt to use the graphs of these functions to approximate the length of the graph fails: $$L:=\sum_{i=0}^{n-1} \left( \frac{1}{n}+\frac{1}{n} \right)=\frac{2}{n}\sum_{i=0}^{n-1} 1 =\frac{2}{n}n = 2,$$ completely!

Exercise. Use other step-functions to approximate the length of the diagonal segment.

Exercise. Use step-functions to approximate the area and the length of the circle.

To summarize:

- Any given approximation violates the laws of calculus (and the laws of physics).

- Improving the approximation won't necessarily bring us closer to the process being approximated.

Cochains as building blocks of discrete calculus

We will introduce discrete structures that don't approximate but rather mimic the behavior of the continuous structures of calculus.

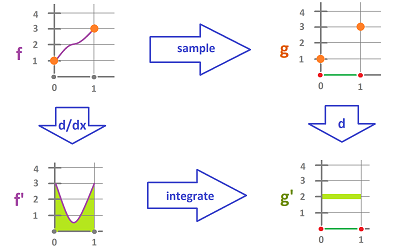

We already have a substitute for a function $f$ -- a discrete function $g$ defined at predetermined points of the real line. What is its derivative?

We consider, instead of the tangent lines of $f$, the secant lines of $f$ and, therefore, of $g$:

Then the values of $g'$ are the slopes on the secant lines over these intervals. This step may be understood as “differentiation” of $g$. To confirm that this makes sense, we would like to “integrate” $g'$ and recover $g$.

The key question is: where should $g'$ be defined?

The first idea is to define $g'$, just as $g$, at the sample points: $\{0, 1, 2,...\}$. Which, however, of the two end-points of the interval to choose? Another issue is that we would like to think of the integral in the usual sense -- as the area under the graph. That's why, instead, we prefer to assign $g'$ to the intervals ta make it a step-function:

- $g'(x)=.3$ on $(0,1)$,

- $g'(x)=.4$ on $(1,2)$, etc.

We can integrate it now. Consider the first interval: $$\begin{array}{lll} g(1)-g(0) &= \displaystyle\int_0^1 g'(x) dx \\ 0.3 &= 0.3 \end{array}$$ They match! Then the second interval: $$\begin{array}{lll} g(2)-g(1) &= \displaystyle\int_1^2 g'(x) dx \\ .4 &= .4 \end{array}$$ They match again! And then we match $g(2)-g(0)$ with the integral, etc. In other words, the Fundamental Theorem of Calculus holds.

Exercise. Prove the last statement.

We can simplify things even further. The domain of $g'$ has been understood as the set $$(0,1) \cup (1,2) \cup (2,3) \cup \ ...,$$ and we have been able to ignore the missing values of $g'$: $$g'(0)=?,\ g'(1)=?,\ ...,$$ because they don't affect the integrals. It is more convenient to think of the domain of this function as the collection of intervals (closed or open) rather than their union. For example, $$g'\Big|_{(0,1)}= .3$$ is replaced with $$g'([0,1])= .3.$$ The bonus is that the inputs of $g$ and $g'$ are revealed to be of the same nature: they both are cells, just of different dimensions: $$\begin{array}{r|c|c} & g & g'\\ \hline \text{domain:} & \{...,0, 1, 2, 3, ...\}& \{...,[0,1],[1,2],[2,3], ...\} \\ \text{inputs:} & \text{points} & \text{closed intervals} \\ \text{dimension:} & 0 & 1 \end{array}$$ Thus, these two functions are defined on $0$- and $1$-cells respectively. Consequently, both can be extended linearly to functions of $0$- and $1$-chains. We recognize them as a $0$-cochain and a $1$-cochain! In the calculus context, we will call them discrete differential forms of degree $0$ and $1$, or simply $0$- and $1$-forms.

Thus, we acquire $g$ by sampling $f$ and then acquire $g'$ by taking the differences of the values of $g$.

Alternatively, we find $g'$ independent from $g$ by integrating $f'$ on these intervals: $$g([a,b]):=\int_{[a,b]}f'dx.$$

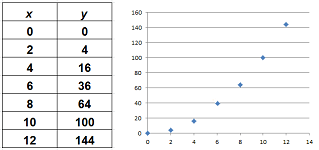

Now, let's approach the issue from the opposite direction. What if we start with discrete data?

In real-life, a function is given simply by a series of numbers. For example, this is what the “graph” of a function looks like in Excel:

Can we do calculus with such functions?

The two primary components of calculus are the following two questions:

1) What is the function's rate of change?

The derivative tells us the rate of change of data that continuously varies. Its discrete analog is the difference, the difference of values at two consecutive points. More precisely, if the function is represented as a collection of points, then its “derivative” -- evaluated at one of the intervals rather than a point -- is the slope of the line that connects the end-points of the interval.

2) What is the area under the graph of the function?

The definite integral gives us the area under a continuous curve. Its discrete analog is the sum, the sum of the values of the function for all points within the interval. However, if this is a step-function, the “integral” is the sum of the areas of the rectangles determined by these steps, just as before.

Even though the graph above is made of dots, with a couple of clicks, we can plot the same function with bars:

So, the same discrete function has been interpreted in two different ways:

- for differentiation -- with dots, and

- for integration -- with rectangles.

We, again, arrive to the idea of $0$-forms and $1$-forms.

At this point, we can construct continuous counterparts of these discrete forms by interpolation:

To summarize, an incremental motion is captured by a $0$-form. Its values provide the locations at every instant of the chosen sequence of moments of time while we think of the intermediate locations as unknown or unimportant. Meanwhile, a $1$-form gives the incremental changes of locations.

Exercise. Show that, given an initial location, all locations can be found from a given $1$-form.

Exercise. Given a $0$- and a $1$-form, what kind of continuous motion is captured exactly by these forms?

The spaces of cochains

Below we start to develop cohomology theory as the dual of that of homology, for the case when:

- $K$ is an oriented simplicial complex, and

- the ring of coefficients $R$ is the reals.

Definition. A $k$-cochain on $K$ is any linear function from the vector space of $k$-chains to $R$: $$s:C_k(K)\to R.$$

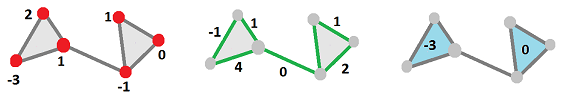

If we consider one cell at a time, it is simple: every such function is the multiplication by a number in $R$. This number is the only thing that we need to record. We visualize $0$-, $1$-, and $2$-cochains accordingly:

Thus,

- a $k$-cochain assigns a number, $r\in R$, to each $k$-chain.

Proposition. The $k$-cochains on complex $K$ form a vector space denoted by $C^k=C^k(K)$.

There is a special correspondence between chains and cochains. For every $k$-cell $c$ in $K$, there is a corresponding “elementary” cochain. We define the dual cochain $c^*:C_k\to R$ of $c$ by $$c^*(t)=\begin{cases} 1 & \text{ if } t=c,\\ 0 & \text{ if } t\ne c. \end{cases}$$

Proposition. If the vector space of all $k$-chains $C_k$ has as its standard basis the $k$-cells: $$\gamma := \{c_1,...,c_n\},$$ then the space of all $k$-cochains $C^k$ has as its standard basis the duals of these cells: $$\gamma^* := \{c^*_1,...,c^*_n\}.$$

Proof. These functions are defined, following the duality idea, on the basis elements of $\gamma$ by $$c^*_i(c_j):=\delta_{ij}.$$ $\blacksquare$

Exercise. Finish the proof.

Proposition. These two spaces are isomorphic in every dimension: $$C_k \cong C^k,$$ with the isomorphism given by $$\Phi(c_i):=c^i.$$

Then the dual of any chain makes sense: $$\left( \sum_i r_ic_i \right)^* := \sum_i r_ic^i,$$ and it satisfies the duality property.

Theorem (Duality). For any non-zero chain $a$, we have $$a^*(a)=1.$$

Exercise. Prove the theorem.

Proposition. The function $x\mapsto x^*$ generates an isomorphism between the vector spaces of $k$-chains and $k$-cochains.

Exercise. Prove the proposition.

Suppose now we are to evaluate an arbitrary cochain at an arbitrary chain. Consider: $$s(a)=?,$$ where $$s=\sum_i r_i c_i^*,\ a=\sum_i t_i c_i, \ r_i,t_i\in R,$$ and $\{c_i\}$ are the cells. Then, the linearity and the duality properties produce the following: $$\begin{array}{lll} s(a)&=\left( \sum_i r_i c_i^* \right) \left( \sum_j t_j c_j \right)\\ &=\sum_i \sum_j r_i t_j c_i^*(c_j)\\ &=\sum_i \sum_j r_i t_j \delta_{ij}\\ &=\sum_i r_i t_i. \end{array}$$

This coordinate-wise multiplication suggests a convenient notation.

Notation. The value of a cochain $s$ at a given chain $a$ is denoted by: $$s(a)= \langle s,a \rangle ,$$ when they are written as coordinate vectors in terms of their respective bases.

This isn't, however, the familiar dot product we use to compute angles between two vectors located in the same space. This time, the two inputs have very different nature; indeed, we have illustrated motion with the following:

- $1$-chain $a$ represents the time (measured in seconds) and

- $1$-cochain $s$ represents the velocity $s$ (measured in feet per second).

Certainly, the angle between $s$ and $a$ would be meaningless, but the scalar product makes sense as

- a number $D$ representing the displacement (measured in feet).

The coboundary operator

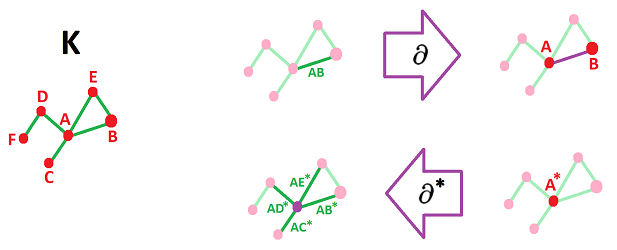

Recall that the boundary operator relates $(k+1)$-chains and $k$-chains and, therefore, $(k+1)$-cochains and $k$-cochains. The dependence is, of course, reversed.

What happens is seen in the following commutative diagram: $$\newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \begin{array}{rcccrr} a\in & C_{k+1}(K) & \ra{s} & {\bf R} & \ni r\\ & \da{\partial_{k+1}} & & || & || \\ b\in & C_{k}(K) & \ra{t} & {\bf R} & \ni r &. \end{array}$$ If $a$ is any $(k+1)$-chain (in the first row), then $b:=\partial_{k+1}a$ is its boundary (in the second row). Now, if $t$ is a $k$-cochain $t$ (in the second row), we can define its coboundary (in the first row) as a $(k+1)$-cochain $s$ given by its values: $$s(a):=r=t(b)=t\partial_{k+1} (a),$$ for all $a$; or simply: $$s:=t\partial_{k+1}.$$ This observation justifies the following.

Definition. The $k$th coboundary operator of $K$ is the linear operator: $$\partial^k:C^k\to C^{k+1},$$ defined by: $$\partial ^k(t):=t\partial_{k+1} ,$$ for any $k$-cochain $t$ in $K$.

We use $\partial ^*$ (or simply $d$) to denote the coboundary operator when the degree of the cochain is clear.

The dot product notation reveals the duality of the formula: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccc} \begin{array}{|c|}\hline\quad \langle \partial ^*t,a \rangle = \langle t,\partial a \rangle \quad \\ \hline\end{array} \end{array} $$

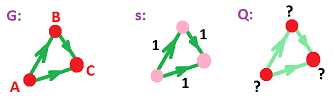

Let's apply this concept in the simplest context first: the graphs. The only boundary operator that matters is: $$\partial_1: C_1(K) \to C_0(K)$$ Therefore the only coboundary operator that matters is: $$\partial^0: C^0(K) \to C^1(K)$$

Example (circle). Let's compute the coboundary operator of the circle. Suppose the circle is given by the complex with three vertices $A,B,C$ and their edges $a=AB,b=BC,c=CA$. Then the bases of $C_0$ and $C_1$ are respectively: $$\{A,B,C\} \text{ and } \{a,b,c\}.$$ Then the bases of $C^0$ and $C^1$ are respectively: $$\{A^*,B^*,C^*\} \text{ and } \{a^*,b^*,c^*\}.$$ Let's compute the coboundary operator: $$\begin{array}{rrrr} \partial ^0(A^*)(a):=A^*(\partial_1 a)&=A^*(B-A)=&-1, \\ \partial ^0(A^*)(b):=A^*(\partial_1 b)&=A^*(C-B)=&0, \\ \partial ^0(A^*)(c):=A^*(\partial_1 c)&=A^*(A-C)=&1,& \quad \leadsto \partial ^0(A^*)=-a^*+c^*;\\ \partial ^0(B^*)(a):=B^*(\partial_1 a)&=B^*(B-A)=&1,\\ \partial ^0(B^*)(b):=B^*(\partial_1 b)&=B^*(C-B)=&-1,\\ \partial ^0(B^*)(c):=B^*(\partial_1 c)&=B^*(A-C)=&0,& \quad \leadsto \partial ^0(B^*)=a^*-b^*;\\ \partial ^0(C^*)(a):=C^*(\partial_1 a)&=C^*(B-A)=&0,\\ \partial ^0(C^*)(b):=C^*(\partial_1 b)&=C^*(C-B)=&1,\\ \partial ^0(C^*)(c):=C^*(\partial_1 c)&=C^*(A-C)=&-1,& \quad \leadsto \partial ^0(C^*)=b^*-c^*. \end{array}$$ These numbers form the matrix of $\partial ^0$, which is, as we see, the transpose of $\partial _1$: $$\partial _1=\left[ \begin{array}{ccccc} -1&0&1\\ 1&-1&0\\ 0&1&-1 \end{array} \right],\quad \partial ^0=\left[ \begin{array}{ccccc} -1&1&0\\ 0&-1&1\\ 1&0&-1 \end{array} \right].$$ $\square$

Exercise. Compute the coboundary operator of the figure eight.

The pattern of lowering vs. raising the dimension can be seen below:

Theorem. For any vertex $A$ in $K$, we have $$\partial ^*A^*=\sum_i AB_i^*,$$ where the summation is over all vertices $B_i$ adjacent to $A$.

Exercise. Prove the theorem.

Exercise. Provide an illustration, similar to the one above, for $\partial ^1$.

The cochain complex and cohomology

The duality between chains and cochains is used to define the isomorphism $\Phi:C_k\to C^k$ by $\Phi(c_{i}):=c^i.$ We sum up, algebraically, what we have:

- both chains and cochains are formal linear combinations of some geometric objects;

- the algebra is exactly the same: addition and scalar multiplication;

- they form vector spaces of the same dimension.

The difference becomes visible as we add the (co)boundary operators to the picture. What is happening is seen in the following, non-commutative diagram: $$\newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \begin{array}{ccccccccc} C_{k+1} &\ra{\partial_{k+1}} & C_k \\ \da{\Phi} & \ne & \da{\Phi} \\ C^{k+1} &\la{\partial^k} & C^k . \end{array}$$

Exercise. Give an example to show that the diagram is indeed non-commutative.

Combined, the chain groups $C_k,\ k=0,1,...$, form the chain complex $\{C_*,\partial\}$: $$\newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \begin{array}{rrrrrrrrrrr} 0& \ra{\partial_{N+1}=0} & C_N & \ra{\partial_{N}}& ... &\ra{\partial_{1}} & C_0 &\ra{\partial_{0}=0} & 0 . \end{array} $$ Here, $N=\dim K$. Meanwhile, the cochain groups $C^k,\ k=0,1,...$, form the cochain complex $\{C^*,\partial ^*\}$: $$\newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \begin{array}{rrrrrrrrrrr} 0& \la{\partial^{N}=0} & C^N & \la{\partial^{N-1}} & ... & \la{\partial^{0}} & C^0 &\la{\partial^{-1}=0} &0 . \end{array} $$ The “grading operators” here go in the opposite direction!

The result below shows that these two sequences of operators behave in a similar way. The formula comes from and looks identical to the familiar “the boundary of the boundary is zero” formula.

Theorem. For $k=0,1,...$, we have $$\partial^{k+1}\partial^k=0.$$

Proof. For any chain $a$, we use the definition of the coboundary operator: $$ \langle \partial^*\partial^*q,a \rangle = \langle \partial^*q,\partial a \rangle = \langle q,\partial\partial a \rangle = \langle q,0 \rangle =0.$$ $\blacksquare$

The short version of the formula is below: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccccccc} \begin{array}{|c|}\hline\quad \partial^*\partial^*=0 \quad \\ \hline\end{array} \end{array} $$

So, the cochain complex is a chain complex after all! The difference is only in the way we index its elements...

Therefore, we can simply repeat the definitions of homology theory, adding “co-” and raising the indices, as follows:

Definition.

- The elements of $Z^k:=\ker \partial ^k$ are called the cocycles, and

- the elements of $B^k:=\operatorname{Im}\partial ^{k-1}$ are called the coboundaries.

Suppose $s$ is a coboundary, $s=\partial^*q$, then $\partial^*s=\partial^*\partial^*q=0$. By the argument identical to the one for chain complexes, we have proven the following.

Corollary. Every coboundary is a cocycle, or $$B^k\subset Z^k, \ \forall k=0,1,....$$

The complete collections of these vector spaces can be connected to each other, as in this diagram: $$\newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \begin{array}{rrrrrrr} & \la{\partial^{k+1}} & 0\in Z^{k+1}=\ker \partial^{k+1} & \la{\partial^{k}} & 0\in Z^k =\ker \partial^{k} & \la{\partial^{k-1}} & \\ & & \cap \qquad & & \cap \quad & & \\ & \la{\partial^{k+1}}& \operatorname{Im} \partial^{k+1}=B^{k+1}\subset C^{k+1} & \la{\partial^{k}} & \operatorname{Im} \partial^{k-1}=B^k \subset C^{k} & \la{\partial^{k-1}} \end{array}$$

Definition. The $k$th cohomology group of $K$ is the quotient of the cocycles over the coboundaries: $$H^k=H^k(K):=Z^k / B^k = \frac {\ker \partial^{k+1}} {\operatorname{Im}\partial^k}.$$

Notation. When the dimension $k$ is clear, we use $H^*$.

Example. Let's compute the cohomology of the circle given, as in the last subsection, by the complex with three vertices $A,B,C$ and there edges $a=AB,B=BC,c=CA$. The coboundary operator satisfies: $$\begin{array}{lll} \partial^0(A^*)&=-a^*+c^*;\\ \partial^0(B^*)&=a^*-b^*;\\ \partial^0(C^*)&=b^*-c^*. \end{array}$$ Then the kernel is $$Z^0:=\ker \partial^0=<A^*+B^*+C^*>.$$ Therefore, $$H^0:=Z^0/B^0=<A^*+B^*+C^*>.$$ Next, the image is $$B^1:=\operatorname{Im}\partial^0=<-a^*+c^*,a^*-b^*,b^*-c^*>.$$ Therefore, $$H^1:=Z^1/B^1=<a^*>=<b^*>=<c^*>.$$ It is isomorphic to the homology!

$\square$

Exercise. Compute the cohomology of the figure eight.

The example suggests that not just the chain and cochain groups are isomorphic but so are the homology and cohomology groups -- under $\Phi$ -- as vector spaces.

Theorem. For graphs, we have $$H^*\cong H.$$

Exercise. Prove the theorem. Hint: follow the example.

Corollary. In an acyclic graph, every $1$-cocycle is a $1$-coboundary.

Observe that in the last example $Z^0$ is generated by $A^*+B^*+C^*$. This means that the cocycles are multiples of this cochain, such as $$s_r=r(A^*+B^*+C^*).$$ But $$s_r(A)=r,\ s_r(B)=r,\ s_r(C)=r.$$ In other words, these functions are constant.

Theorem. A $0$-cochain is a cocycle in $K$ if and only if it is constant on each path-component of $K$.

Proof. If $\partial^0Q=0$ then $ \langle \partial^0Q,a \rangle =0$ for any $0$-chain $a=AB$. Therefore, $ \langle Q,B-A \rangle =0$, or $Q(A)=Q(B)$. $\blacksquare$

Exercise. Provide the rest of the proof.

Example (Kirchhoff's voltage law). We, again, consider an electrical circuit understood as a graph.

As the edges are oriented, we can think of the voltages as $1$-cochains (over ${\bf R}$). Moreover, the voltage $V$ over any edge is the drop of the potential $U$ over this edge. The potential is then a $0$-cochain and $V=dU$. Therefore, $dU(c)=U(\partial c)=U(0)=0$ for any $1$-cycle $c$. So, the following conservation principle is satisfied: the sum of all the voltages around a loop is equal to zero.

$\square$

Calculus I, the discrete version

Below, we review these new concepts in the context of discrete calculus. In this context, the cochains are called differential forms or simply 'forms', while the coboundary operator is called the exterior derivative denoted by $$d:=\partial ^*.$$ The reason for this terminology is revealed below.

The setting of calculus of one variable requires only $0$- and $1$-cochains. Therefore, the only complexes we need to consider are graphs.

Substituting $a=AB$ into the definition of the exterior derivative, we acquire the following:

Theorem (Fundamental Theorem of Calculus for Graphs). Suppose we have a sequence of adjacent nodes in the graph: $$A=A_0,...,A_N=B,$$ and let $a$ be the $1$-form represented by the sum of the edges of the sequence: $$a:=A_0A_1+...+A_{N-1}A_N.$$ Then, for any $0$-form $Q$, we have $$ \langle dQ,a \rangle = \langle Q,B-A \rangle .$$

Exercise. State and prove the theorem for the case $a$ an arbitrary $1$-form.

In the integral notation, the result looks familiar: $$\int_{A}^B dQ=Q(B)-Q(A).$$

Even though we introduce forms (cochains) as definite integrals evaluated on intervals, we can now think of $Q$ as an antiderivative of $dQ$.

There are often two issues to be considered about a new concept: existence and uniqueness.

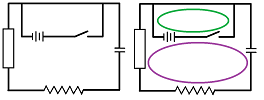

First, given a $1$-form $s$ on graph $G$, does a $0$-form $Q$ with $dQ=s$ always exist? The picture below shows why the answer is “No”:

Here, if we take the upper path, we have $$Q(B)-Q(A)=1,\ Q(C)-Q(B)=1;$$ but if we take the lower path, we have $$Q(C)-Q(A)=1.$$ This is a contradiction! We are facing a path-dependent integral.

The idea from calculus is that path-dependence of line integrals can be recast as the non-triviality of integrals along closed loops, i.e., $1$-cycles. Indeed, we can restate the above as: $$\int_{[A,B]+[B,C]+[C,A]} dQ=\int_{[A,B]}dQ+\int_{[B,C]}dQ+\int_{[C,A]} dQ=1\ne 0.$$ The result should remind us of a similar situation with chains:

- not every chain is a boundary.

This time we are speaking of cochains:

- not every cochain is a coboundary.

There is more than just similarity here: if $a$ is a non-zero $1$-cycle, then $a^*$ isn't a coboundary. Indeed, we have otherwise: $$1= \langle a^*,a \rangle = \langle \partial ^*Q,a \rangle = \langle Q,\partial a \rangle = \langle Q,0 \rangle =0.$$ In other words, for all integrals over closed loops to be always $0$, there should be no closed loops to begin with.

In the calculus context, coboundaries are also called exact forms.

Theorem. Every $1$-form is exact on a graph if and only if the graph is a tree.

Exercise. Provide the rest of the proof.

Second, given a $1$-form $s$ on graph $G$, is a $0$-form $Q$ with $dQ=s$ unique? The recognizable result below shows that the answer is “Yes, in a sense”.

Theorem. If $Q,Q'$ are two $0$-forms with $dQ=dQ'$, then they differ by a constant on every path-component of the graph.

Exercise. Prove the theorem. Hint: consider $Q-Q'$.

A common interpretation of this result is, if two runners run with an identical but possibly variable velocity, the distance between them remains unchanged.

Social choice: ratings and comparisons

We will consider the foundation of a basic voting system.

Examples of how votes are used for evaluation are numerous. Typically, the customer/voter assigns a number $\{1,2,...,N\}$ to a product or service as its perceived quality, such as:

- books,

- movies,

- hotels,

- various products,

- service providers,

- etc.

The choice is commonly visualized by $N=5$ stars: $$\star\quad \star\star\quad \star\star\star\quad \star\star\star\star\quad \star\star\star\star\star\ .$$ In political elections, we often have $N=2$: $$Yes: \underline{ \checkmark} \quad No: \underline{ }$$

Suppose there are $n$ alternatives/candidates: $$A:=\{0,1,2,...,n-1\}=\{A_0,A_1, A_2, ... ,A_{n-1}\}.$$ They are ordered. We also suppose that these candidates are the vertices of a simplicial complex $K$. All candidates are subject to evaluation but the presence of edge $AB$ in $K$ reflects the fact that $A$ and $B$ are comparable.

At first, we will only consider what a single voter does; however, the single vote may come from aggregated votes.

In a typical political election, the voter ranks the candidates with no numbers involved. We will initially concentrate on simpler, algebraic, voting methods that are meant to evaluate rather than rank. These methods require us to use our ring $R$ again.

A rating vote is a number $X\in R$ assigned to each candidate, as well as a combination of all these numbers. Therefore, this is a $0$-cochain on $K$. The totality is $C^0=C^0(K)$.

A comparison vote is a number $x\in R$ assigned to each pair of candidates (i.e., the edge between them), as well as a combination of all these numbers. Therefore, this is a $1$-cochain on $K$. The totality is $C^1=C^1(K)$.

That's all. We initially assume that $K$ is simply a graph.

The differences, according to the order of vertices, over the rating vote for each pair of candidates produces a comparison vote: $$x(12)=\partial^0 X(12):=X(2)-X(1).$$ Combined, this is the $0$-coboundary operator $\partial^0:C^0\to C^1$.

A rating comparison vote is one that comes from some rating vote of the candidates, as described. Therefore, this is a $1$-coboundary. The totality is $B^1=\operatorname{Im}\partial^0$.

A non-circular comparison vote is one that satisfies the condition: as one takes a circular route through the candidates/vertices of $K$, the preferences always add up to zero. Therefore, this is a $1$-cocycle. The totality is $Z^1=\ker \partial^1$.

We now use this terminology to restate what we learned in the last subsection.

“Every coboundary is a cocycle” is restated as follows.

Proposition. Every rating comparison vote is non-circular.

“Every $k$-cocycle is a $k$-coboundary if $H^1(K)=0$” is restated as follows.

Proposition. If $K$ is a tree, every comparison vote is rating.

The cochain complex and these subspaces are shown below: $$\newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \begin{array}{ccccccc} 0 & \la{\partial^{2}=0} & C^2 & \la{\partial^{1}} & C^1 & \la{\partial^{0}} & C^0 \\ & & \cup & & \cup & & || \\ & & 0 & \la{0} & Z^1 & \la{} & C^0 \\ & & || & & \cup & & || \\ & & 0 & \la{0} & B^1 &\la{onto} & C^0. \end{array}$$

Even in the case of a single voter, who wins?

In the simplest case, there is only a single rating. Provided $R$ is equipped with an order relation, we choose the winner to be the one (possibly tied) with the largest rating.

What if the voter casts a comparison vote $b$ instead? Even though what we really need is a rating vote, we don't have to discard $b$. The reason is that $b$ may be a rating comparison vote: $b=\partial^0(c)$ for some rating $c$ (i.e., $b$ is a coboundary). Then the winner should be the one with the largest value of $c$. Based on the last proposition, we conclude that we can guarantee that there is such a $c$ if and only if $K$ is a tree, which is certainly atypical. However, if this is the case, the choice of the winner(s) is unambiguous.

Exercise. Prove the last statement.

Exercise. What if, in order to guarantee a winner, we simply disallow circular comparison votes? Hint: what is the new chain complex?

Exercise. What to do if the voter casts both a rating vote and a comparison vote?

Exercise. What if there are two voters?

Observe that while the votes are always limited to the numbers up to $N$, the algebra they follow isn't ${\bf Z}_N$. Now, once the votes of many voters are added together, we can think of the total as a single rating vote over ${\bf Z}$ or ${\bf R}$. Aggregation methods are discussed later.

Exercise. Use cochains to represent exchange rates between world currencies. What about barter?