
The gradient

Contents

- 1 Overview of differentiation

- 2 Gradients vs. vector fields

- 3 The change of a function of several variables: the difference

- 4 The rate of change of a function of several variables: the gradient

- 5 Algebraic properties of the difference quotients and the gradients

- 6 Compositions and the Chain Rule

- 7 The gradient is perpendicular to the level curves

- 8 Monotonicity of functions of several variables

- 9 Differentiation and anti-differentiation

- 10 When is anti-differentiation possible?

- 11 When is a vector field a gradient?

Overview of differentiation

Where are we in our study of functions in high dimensions?

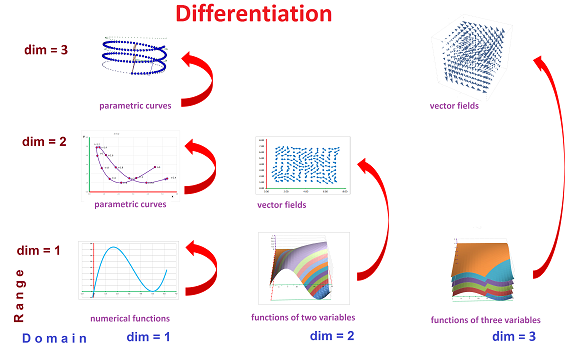

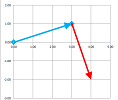

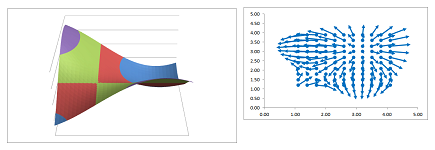

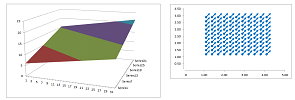

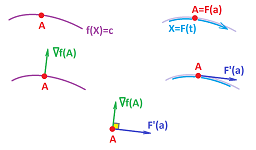

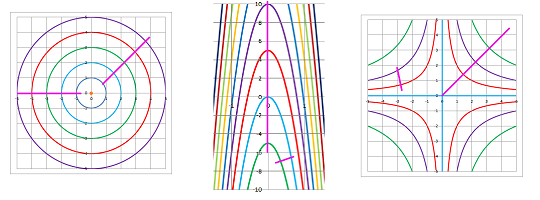

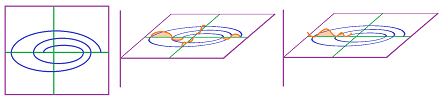

Once again, we provide a diagram that captures all types of functions we have seen so far as well as those we haven't seen yet. They are placed on the $xy$-plane with the $x$-axis and the $y$-axis representing the dimensions of the input space and the output space. The first column consists of all parametric curves and the first row of all functions of several variables. The two have one cell in common: the numerical functions.

This time we will see how everything is interconnected. For each type of function, the red arrows show what type of function its difference quotient or its derivative is.

In Chapter 7 and beyond, we faced only numerical functions and we implicitly used the fact that differentiation will not make us leave the confines of this environment. In particular, every function defined on the edges of a partition is the difference quotient of some function defined on the nodes of the partition. Furthermore, every continuous function is integrable and, therefore, is somebody's derivative. In this sense, the arrow can be reversed.

More recently, we defined the difference quotient and the derivative of a parametric curve in ${\bf R}^n$ and those are again parametric curves in ${\bf R}^n$ (think location vs. velocity). That's why we have arrows that come back to the same cell. Once again, every continuous parametric curve is somebody's derivative. In this sense, the arrow can be reversed.

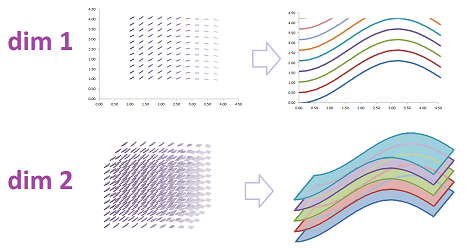

The study of functions of several variables would be incomplete without understanding their rates of change! What we know so far is that we can compute the rate of change of such a function of two variables in the two main directions. The result is given by a vector called its gradient.

For each point on the plane, we have a single vector but what if we carry out this computation over the whole plane? What if, in order to keep track of the correspondence, we attach this vector to the point it came from? The result is a vector field. It is a function from ${\bf R}^2$ to ${\bf R}^2$ and it is placed on the diagonal of our table. The same happens to functions of three variables and so on.

Every gradient is a vector field but not every vector field is a gradient. In this sense, the arrow cannot be reversed! The situation seems to mimic the one with numerical functions: there are non-integrable functions. However, the arrow in the first cell is reversible if we limit ourselves to smooth (i.e., infinitely many times differentiable) functions. The problem is more profound with vector fields as we shall see later.

Gradients vs. vector fields

Vector fields are just functions with:

- the input consisting of two numbers and

- the output consisting of two numbers.

We just choose to treat the former as a point on the plane and the latter as a vector attached to that point. This is just a clever way to visualize such a complex -- in comparison to the ones we have seen so far -- function. It's a location-dependent vector!

Let's plot some vector fields by hand and then analyze them.

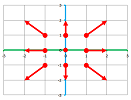

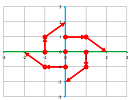

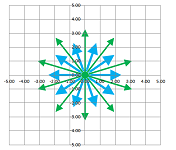

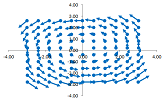

Example (accelerated outflow). Let's consider this simple vector field: $$V(x,y)=<x,y>.$$ A vector field is just two functions of two variables: $$V(x,y)=<p(x,y),\ q(x,y)> \text{ with } p(x,y)=x,\ q(x,y)=y.$$ Plotting those two functions, as before, does not produce a useful visualization. However, we will still follow the same pattern: we pick a few points on the plane, compute the output for each, and assign it to that point. The difference is that instead of a single number we have two and instead of a vertical bar that we erect at that point to visualize this number we draw an arrow. We carry this out for these nine points around the origin: $$\begin{array}{ccccc} (-1,1)&(0,1)&(1,1)\\ (-1,0)&(0,0)&(1,0)\\ (-1,-1)&(0,-1)&(1,-1) \end{array}\quad\leadsto\quad\begin{array}{ccccc} <-1,1>&<0,1>&<1,1>\\ <-1,0>&<0,0>&<1,0>\\ <-1,-1>&<0,-1>&<1,-1> \end{array}$$ Each vector on the right starts at the corresponding point on the left:

What about the rest? We can guess that the magnitudes increase as we move away from the origin while the directions follow the same rule: each vector points directly away from the origin.

For each point $(x,y)$ we copy the vector that ends there, i.e., $<x,y>$ and place it at this location.
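The table of arrows above can be reproduced with a few lines of code. The following is only a minimal sketch: the helper name `sample_field` and the choice of the grid are ours, not part of the text.

```python
# Sample a vector field at the nine grid points around the origin.
# `sample_field` is an illustrative helper, not a name from the text.

def V(x, y):
    """The accelerated-outflow field V(x, y) = <x, y>."""
    return (x, y)

def sample_field(field, coords=(-1, 0, 1)):
    """Return {point: vector} for every point of the square grid coords x coords."""
    return {(x, y): field(x, y) for y in coords for x in coords}

arrows = sample_field(V)

# Print the arrows row by row, top row (y = 1) first, as in the table.
for y in (1, 0, -1):
    print("  ".join(f"({x:2d},{y:2d}) -> <{arrows[(x, y)][0]:2d},{arrows[(x, y)][1]:2d}>"
                    for x in (-1, 0, 1)))
```

Passing a wider grid, such as `coords=(-2, 0, 2)`, shows the arrows growing longer away from the origin.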

Now, if the vectors represent velocities of particles, what kind of flow is this? This isn't a fountain or an explosion (the particles would go slower away from the source). The answer is: this is a flow on a surface -- under gravity -- that gets steeper and steeper away from the origin! This surface would look like this paraboloid:

Can we be more specific? Well, this surface looks like the graph of a function of two variables and the flow seems to follow the line of fastest descent; maybe our vector field is the gradient of this function? We will find out but first let's take a look at the vector field visualized as a system of pipes:

We recognize this as a discrete $1$-form. Now, the question above becomes: is it possible to produce this pattern of flow in the pipes by controlling the pressure at the joints?

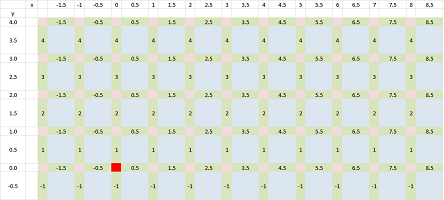

So, is this vector field -- when limited to the edges of a grid -- the difference of a function of two variables? Let's take the latter question: is there a function $z=f(x,y)$ such that $$\Delta f=<x,y>?$$ This equation is just an abbreviation; the actual differences are $x$ on the horizontal edges and $y$ on the vertical. Let's concentrate on just one cell: $$[x,x+\Delta x]\times [y,y+\Delta y].$$ We choose the secondary node of an edge to be the primary node at the beginning of the edge:

- horizontal: $(x,y)$ in $[x,x+\Delta x]\times \{y\}$, etc.;

- vertical: $(x,y)$ in $\{x\}\times [y,y+\Delta y]$, etc.

Then our equation develops as follows: $$\Longrightarrow\ \begin{cases} \Delta_x f&=x\\ \Delta_y f&=y \end{cases}\ \Longrightarrow\ \begin{cases} f(x+\Delta x,y)-f(x,y)&=x\\ f(x,y+\Delta y)-f(x,y)&=y \end{cases}\ \Longrightarrow\ \begin{cases} f(x+\Delta x,y)&=f(x,y)+x\\ f(x,y+\Delta y)&=f(x,y)+y \end{cases}$$ These recursive relations allow us to construct $f$ one node at a time. We use the first one to progress horizontally and the second to progress vertically: $$\begin{array}{cccc} \uparrow& &\uparrow\\ (x,y+\Delta y)&\to&(x+\Delta x,y+\Delta y)&\to\\ \uparrow& &\uparrow\\ (x,y)&\to&(x+\Delta x,y)&\to \end{array}$$ The problem is solved! However, there may be a conflict: what if we apply these two formulas consecutively but in a different order? Fortunately, going horizontally then vertically produces the same outcome as going vertically then horizontally: $$f(x+\Delta x,y+\Delta y)=f(x,y)+x+y.$$ This is the first instance of path-independence.
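The node-by-node construction can be carried out mechanically. Below is a small sketch under our own assumptions: an integer grid with $\Delta x=\Delta y=1$, the starting value $f(0,0)=0$, and the grid size `N`; none of these choices come from the text.

```python
# Build f on an integer grid (Δx = Δy = 1) from the recursive relations
#   f(x+1, y) = f(x, y) + x,   f(x, y+1) = f(x, y) + y,
# and check that the two routes around every cell agree.

N = 4                      # grid nodes are (i, j) with 0 <= i, j <= N (our choice)
p = lambda x, y: x         # difference on the horizontal edge starting at (x, y)
q = lambda x, y: y         # difference on the vertical edge starting at (x, y)

f = {(0, 0): 0}            # the starting value is arbitrary
for i in range(N):         # bottom row: move horizontally
    f[(i + 1, 0)] = f[(i, 0)] + p(i, 0)
for j in range(N):         # then move vertically in each column
    for i in range(N + 1):
        f[(i, j + 1)] = f[(i, j)] + q(i, j)

def cell_is_consistent(i, j):
    """Right-then-up equals up-then-right for the cell with corner (i, j)."""
    right_up = f[(i, j)] + p(i, j) + q(i + 1, j)
    up_right = f[(i, j)] + q(i, j) + p(i, j + 1)
    return right_up == up_right == f[(i + 1, j + 1)]

path_independent = all(cell_is_consistent(i, j) for i in range(N) for j in range(N))
print(path_independent)
```

Replacing `p` and `q` with the components of another vector field turns the same loop into a quick test of whether that field can be a difference.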

Now the continuous case. Suppose $V$ is the gradient of some differentiable function of two variables $z=f(x,y)$. The result of this assumption is a vector equation that breaks into two: $$V=\nabla f\ \Longrightarrow\ V(x,y)=<x,y>=<f_x(x,y),\, f_y(x,y)>\ \Longrightarrow\ \begin{cases} x&=f_x(x,y),\\y&=f_y(x,y).\end{cases}$$ We now integrate one variable at a time: $$\begin{array}{lll} x&=f_x(x,y)&\Longrightarrow &f(x,y)=\int x\, dx &=\frac{x^2}{2}+C &\quad =\frac{x^2}{2}+C(y);\\ y&=f_y(x,y)&\Longrightarrow &f(x,y)=\int y\, dy &=\frac{y^2}{2}+K &\quad =\frac{y^2}{2}+K(x). \end{array}$$ Note that in either case we add the familiar constants of integration “$+C$” and “$+K$” (different for the two different integrations)... however, these constants are only constant relative to $x$ and $y$ respectively. That makes them functions of $y$ and $x$ respectively! Putting the two together, we have the following restriction on the two unknown functions: $$f(x,y)=\frac{x^2}{2}+C(y)=\frac{y^2}{2}+K(x).$$ Can we find such functions $C$ and $K$? If we group the terms, the choice becomes obvious: $$\begin{array}{lll} \frac{x^2}{2}\\+C(y)\\ \end{array}=\begin{array}{lll} K(x)\\+\frac{y^2}{2}\\ \end{array}$$ If (or when) it does not, we could just plug some values into this equation and examine the results: $$\begin{array}{lll} x=0&\Longrightarrow & C(y)=\frac{y^2}{2}+K(0)&\Longrightarrow & C(y)=\frac{y^2}{2}+\text{ constant};\\ y=0&\Longrightarrow & C(0)+\frac{x^2}{2}=K(x)&\Longrightarrow & K(x)=\frac{x^2}{2}+\text{ constant}.\\ \end{array}$$ So, either of the functions $C(y)$ and $K(x)$ differs from the corresponding expression by a constant. Therefore, we have: $$f(x,y)=\frac{x^2}{2}+\frac{y^2}{2}+L,$$ for some constant $L$. The surface is indeed a paraboloid of revolution. $\square$
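As a sanity check, we can differentiate the function we have found numerically and compare the result with the vector field. This is only a sketch: the central-difference helper `numerical_gradient`, the step `h`, and the sample points are our own choices.

```python
def f(x, y, L=0.0):
    """Candidate potential f(x, y) = x^2/2 + y^2/2 + L."""
    return x * x / 2 + y * y / 2 + L

def numerical_gradient(func, x, y, h=1e-6):
    """Approximate <f_x, f_y> with central differences."""
    fx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    fy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return fx, fy

# At each sample point the gradient should be close to V(x, y) = <x, y>.
for (x, y) in [(1.0, 2.0), (-0.5, 3.0), (0.0, 0.0)]:
    fx, fy = numerical_gradient(f, x, y)
    print((x, y), "->", (round(fx, 6), round(fy, 6)))
```

The constant $L$ has no effect on the result, which is why it cannot be recovered from the vector field.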

When a vector field is the gradient of some function of two variables, this function is called a potential function, or simply a potential, of the vector field. Note that finding for a given vector field $V$ a function $f$ such that $\nabla f=V$ amounts to anti-differentiation. The following is an analog of several familiar results: any two potential functions of the same vector field defined on an open disk differ by a constant. So, you've found one -- you've found all, just like in Chapters 9 and 11. The proof is exactly the same as before but it relies, just as before, on the properties of the derivatives (i.e., gradients) discussed in the next section. The graphs of these functions then differ by a vertical shift.

It is as if the floor and the ceiling in a cave have the exact same slope in all directions at each location; then the height of the ceiling is the same throughout the cave.

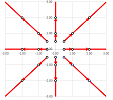

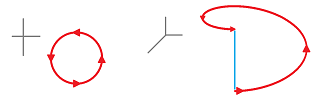

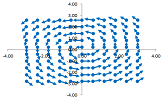

Example (rotational flow). We consider this vector field again: $$V(x,y)=<y,\, -x>.$$ Our two functions of two variables are: $$V(x,y)=<p(x,y),\, q(x,y)> \text{ with } p(x,y)=y,\ q(x,y)=-x.$$ We pick a few points on the plane, compute the output for each, and assign it to that point: $$\begin{array}{ccccc} (-1,1)&(0,1)&(1,1)\\ (-1,0)&(0,0)&(1,0)\\ (-1,-1)&(0,-1)&(1,-1) \end{array}\quad\leadsto\quad\begin{array}{ccccc} <1,1>&<1,0>&<1,-1>\\ <0,1>&<0,0>&<0,-1>\\ <-1,1>&<-1,0>&<-1,-1> \end{array}$$ Each vector on the right starts at the corresponding point on the left:

Now, if the vectors represent velocities of particles, what kind of flow is this? It looks like the water is flowing away from the center. Is it a whirl? Let's plot some more:

These lie on the axes and they are all perpendicular to those axes. We realize that there is a pattern: $V(x,y)$ is perpendicular to $<x,y>$. Indeed, $$<y,-x>\cdot <x,y>=yx-xy=0.$$

From what we know about parametric curves, to follow these arrows a curve would be rounding the origin never getting closer to or farther away from it; this must be a rotation. Now, is this a flow on a surface produced by gravity like last time? If we visualize the vector field as a system of pipes, the question above becomes: is it possible to produce this pattern of flow in the pipes by controlling the pressure at the joints?

Let's find out. We will try to solve this equation for $z=f(x,y)$: $$\Delta f=<y,\, -x>?$$ Just as in the last example, we choose the secondary node to be the primary node at the beginning of the edge. We have: $$\Longrightarrow\ \begin{cases} \Delta_x f&=y\\ \Delta_y f&=-x \end{cases}\ \Longrightarrow\ \begin{cases} f(x+\Delta x,y)-f(x,y)&=y\\ f(x,y+\Delta y)-f(x,y)&=-x \end{cases}\ \Longrightarrow\ \begin{cases} f(x+\Delta x,y)&=f(x,y)+y\\ f(x,y+\Delta y )&=f(x,y)-x \end{cases}$$ Can we use these recursive formulas to construct $f$? Is there a conflict: if we start at $(x,y)$ and then get to $(x+\Delta x,y+\Delta y)$ in the two different ways, will we have the same outcome? $$\begin{array}{cccc} \uparrow& &\uparrow\\ (x,y+\Delta y)&\to&(x+\Delta x,y+\Delta y)&\to\\ \uparrow& &\uparrow\\ (x,y)&\to&(x+\Delta x,y)&\to \end{array}$$ Unfortunately, the outcome is not the same: $$\begin{array}{ccc} & f(x+\Delta x,y+\Delta y)&=f(x,y)+y-(x+\Delta x)&=f(x,y)-x+y-\Delta x\\ \ne &f(x+\Delta x,y+\Delta y)&=f(x,y)-x+(y+\Delta y)&=f(x,y)-x+y+\Delta y. \end{array}$$ This is path-dependence!
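The conflict between the two routes is easy to reproduce with concrete numbers. The sketch below compares the two examples; the helper `corner_values` and the sample cell are our own choices.

```python
def corner_values(p, q, x, y, dx, dy, f0=0.0):
    """Value assigned to (x+dx, y+dy) along each of the two routes from (x, y).

    p and q are the differences on the horizontal and the vertical edges,
    taken at the node where the edge begins; f0 is the value of f at (x, y).
    """
    right_then_up = f0 + p(x, y) + q(x + dx, y)
    up_then_right = f0 + q(x, y) + p(x, y + dy)
    return right_then_up, up_then_right

# rotational flow: differences y on horizontal edges, -x on vertical edges
rot = corner_values(lambda x, y: y, lambda x, y: -x, x=2, y=3, dx=1, dy=1)
# accelerated outflow: differences x and y
out = corner_values(lambda x, y: x, lambda x, y: y, x=2, y=3, dx=1, dy=1)
print(rot, out)
```

For the rotational flow the two values differ by $\Delta x+\Delta y$, in agreement with the computation above, while for the outflow they coincide.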

Suppose $V$ is the gradient of some function of two variables $f$: $$V=\nabla f\ \Longrightarrow\ V(x,y)=<y,\, -x>=<f_x(x,y),\, f_y(x,y)>\ \Longrightarrow\ \begin{cases} y&=f_x(x,y),\\-x&=f_y(x,y).\end{cases}$$ What do we do with those? They are partial derivatives so let's solve these equations by partial integration, one variable at a time: $$\begin{array}{lll} y&=f_x(x,y)&\Longrightarrow &f(x,y)=\int y\, dx &=xy+C(y) ;\\ -x&=f_y(x,y)&\Longrightarrow &f(x,y)=\int -x\, dy &=-xy+K(x) . \end{array}$$ Putting the two together, we have $$f(x,y)=xy+C(y)=-xy+K(x).$$ Can we find such functions $C$ and $K$? If we try to group the terms, they don't group well: $$\begin{array}{lll} xy\\+C(y)\\ \end{array}=\begin{array}{lll} -xy\\+K(x)\\ \end{array}$$ To confirm that there is a problem, let's plug some values into this equation: $$\begin{array}{lll} x=0&\Longrightarrow & C(y)=K(0)&\Longrightarrow & C(y)=\text{ constant};\\ y=0&\Longrightarrow & C(0)=K(x)&\Longrightarrow & K(x)=\text{ constant}.\\ \end{array}$$ So, both $C$ and $K$ are constant functions, which is impossible! Indeed, on the left we have a non-constant function of two variables and a constant on the right: $$2xy=-C+K=\text{ constant}.$$ This contradiction proves that our assumption that $V$ has a potential function was wrong; there is no such $f$. We may even say that the vector field isn't “integrable”! Geometrically, there is no surface on which a flow of water would produce this pattern. $\square$

An insightful if informal argument to the same effect is as follows. Suppose we travel along the arrows of a vector field. Suppose that eventually we arrive at our original location. Is it possible that this vector field has a potential function? Is it the gradient of some function of two variables? If it is, we have followed the direction of the (fastest) increase of this function... but once we have come back, what is the elevation? After all this climbing, it can't be the same! This function therefore cannot be continuous at this location. Then it also cannot be differentiable, a contradiction!
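The round trip can be imitated numerically: we walk once around the unit circle in small straight steps and add up the estimated changes of elevation, $V\cdot \Delta X$, along the way. This is a rough sketch under our own assumptions (a polygon with `n` edges, the field sampled at the middle of each edge, a counterclockwise trip); the name `net_climb` is ours.

```python
import math

def net_climb(V, n=1000):
    """Sum of V(midpoint) . ΔX over the n edges of a closed polygon on the unit circle."""
    total = 0.0
    for k in range(n):
        a0, a1 = 2 * math.pi * k / n, 2 * math.pi * (k + 1) / n
        x0, y0, x1, y1 = math.cos(a0), math.sin(a0), math.cos(a1), math.sin(a1)
        p, q = V((x0 + x1) / 2, (y0 + y1) / 2)      # the field at the middle of the edge
        total += p * (x1 - x0) + q * (y1 - y0)      # estimated change of f along the edge
    return total

rotational = net_climb(lambda x, y: (y, -x))
outflow = net_climb(lambda x, y: (x, y))
print(rotational, outflow)
```

For the rotational flow the total is close to $-2\pi$ (the counterclockwise trip goes against the arrows, so it is a net descent), which is incompatible with returning to the same elevation. For the outflow the total is zero up to rounding.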

Our conclusion that some continuous vector fields on the plane aren't derivatives has no analog in the $1$-dimensional, numerical, case discussed in Parts I and II.

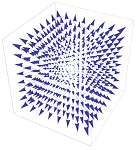

Example. Three-dimensional vector fields are more complex. The one below is similar to the first example above: $$V(x,y,z)=<x,y,z>.$$ The vectors point directly away from the origin.

Just as with its two-dimensional analog, there is a potential function: $$f(x,y,z)=\frac{x^2}{2}+\frac{y^2}{2}+\frac{z^2}{2}.$$ The level surfaces of this function are concentric spheres:

$\square$

The change of a function of several variables: the difference

We consider functions of $n$ variables again.

First let's look at the point-slope form of linear functions: $$l(x_1,...,x_n)=p+m_1(x_1-a_1)+...+m_n(x_n-a_n),$$ where $p$ is the value of the function at the chosen point, $m_1,...,m_n$ are the chosen slopes of the plane along the axes, and $a_1,...,a_n$ are the coordinates of the chosen point in ${\bf R}^n$. Let's recast this expression, as before, in terms of the dot product with the increment of the independent variable: $$l(X)=p+M\cdot (X-A),$$ where

- $M=<m_1,...,m_n>$ is the vector of slopes,

- $A=(a_1,...,a_n)$ is the point in ${\bf R}^n$, and

- $X=(x_1,...,x_n)$ is our variable point in ${\bf R}^n$, and

- $X-A$ is how far we step away from our point of interest $A$.

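Then we can say that the vector $N=<m_1,...,m_n,-1>$ is perpendicular to the graph of this function (a “plane” in ${\bf R}^{n+1}$). Indeed, the increment between any two points on the graph is $<X-X',\, M\cdot (X-X')>$ and its dot product with $N$ is $M\cdot (X-X')-M\cdot (X-X')=0$. The conclusion holds independently from any choice of a coordinate system!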

Suppose now we have an arbitrary function $z=f(X)$ of $n$ variables, i.e., $z$ is a real number and $X=(x_1,...,x_n)$ in ${\bf R}^n$.

We start with the discrete case. Suppose ${\bf R}^n$ is equipped with a rectangular grid with sides $\Delta x_k$ along the axis of each variable $x_k$. It serves as a partition with secondary nodes provided on the edges of the grid.

We only consider the increment of $X$ in one of those directions: $$\Delta X_k=<0,...,0,\Delta x_k,0,...,0>.$$

Definition. The partial difference of $z=f(X)=f(x_1,...,x_n)$ with respect to $x_k$ at $X$ is defined to be the change of $z$ with respect to $x_k$ denoted by: $$\Delta_k f\, (C)=f(X+\Delta X_k)-f(X),$$ where $C$ is a secondary node on the edge between the nodes $X$ and $X+\Delta X_k$.

We collect these changes into one function!

Definition. The difference of $z=f(X)=f(x_1,...,x_n)$ at $X$ is defined to be the change of $z$ with respect to the corresponding $x_k$ denoted by: $$\Delta f\, (C)=f(X+\Delta X_k)-f(X),$$ where $C$ is a secondary node on the edge between $X$ and $X+\Delta X_k$.

Definition. The partial difference quotient of $z=f(X)=f(x_1,...,x_n)$ with respect to $x_k$ at $X$ is defined to be the rate of change of $z$ with respect to $x_k$ denoted by: $$\frac{\Delta f}{\Delta x_k}(C)=\frac{f(X+\Delta X_k)-f(X)}{\Delta x_k},$$ where $C$ is a secondary node on the edge between $X$ and $X+\Delta X_k$.

We collect these rates of change into one function!

Definition. The gradient of $z=f(X)=f(x_1,...,x_n)$ at $X$ is the vector field the $k$th component of which is equal to the partial difference quotient of $z=f(X)$ with respect to $x_k$ at $X$, denoted by: $$\operatorname{grad}f\, (X)=\frac{f(X+\Delta X_k)-f(X)}{\Delta x_k}$$ on the edge between $X$ and $X+\Delta X_k$.
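The definitions above translate directly into code. In the sketch below, the function names, the sample function, and the grid steps are our own choices; each component is computed on the edge that starts at the node $X$.

```python
def partial_difference_quotient(f, X, k, dx):
    """(f(X + ΔX_k) - f(X)) / Δx_k for the edge that starts at node X."""
    shifted = list(X)
    shifted[k] += dx[k]            # step along the k-th axis only
    return (f(*shifted) - f(*X)) / dx[k]

def discrete_gradient(f, X, dx):
    """Vector whose k-th component is the k-th partial difference quotient."""
    return tuple(partial_difference_quotient(f, X, k, dx) for k in range(len(X)))

f = lambda x, y: x * x + 3 * y      # sample function (our choice)
print(discrete_gradient(f, X=(1.0, 2.0), dx=(0.5, 0.25)))   # prints (2.5, 3.0)
```

The second component is exactly $3$, the slope of $f$ in $y$, while the first, $2.5$, is the average slope of $x^2$ over $[1,1.5]$.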

Example (gradient ascent/descent).

The rate of change of a function of several variables: the gradient

For the continuous case, we focus on one point $A=(a_1,...,a_n)$ in ${\bf R}^n$.

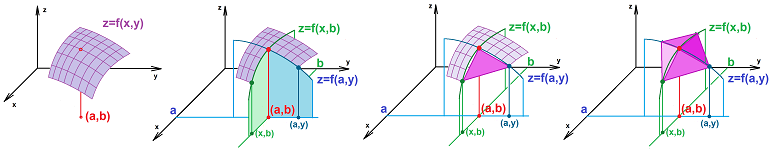

Definition. The partial derivative of $z=f(X)=f(x_1,...,x_n)$ with respect to $x_k$ at $X=A=(a_1,...,a_n)$ is defined to be the limit of the difference quotient with respect to $x_k$ at $x_k=a_k$, if it exists, denoted by: $$\frac{\partial f}{\partial x_k}(A) =\lim_{\Delta x_k\to 0}\frac{\Delta f}{\Delta x_k}(A),$$ or $f_k'(A)$.

The following is an obvious conclusion.

Theorem. The partial derivative of $z=f(X)$ with respect to $x_k$ at $X=A=(a_1,...,a_n)$ is found as the derivative of the numerical function $$g(x)=f(a_1,...,a_{k-1},x,a_{k+1},...,a_n),$$ evaluated at $x=a_k$; i.e., $$\frac{\partial f}{\partial x_k}(A) = \frac{d}{dx}f(a_1,...,a_{k-1},x,a_{k+1},...,a_n)\bigg|_{x=a_k}.$$
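The theorem suggests a recipe: freeze all the variables but one and differentiate the resulting numerical function. Here is a minimal numerical sketch of it; the central-difference step `h` and the sample function are our own choices.

```python
def partial_derivative(f, A, k, h=1e-6):
    """Derivative at x = a_k of g(x) = f(a_1, ..., x, ..., a_n), by central difference."""
    def g(x):
        point = list(A)
        point[k] = x          # only the k-th variable moves; the rest stay fixed
        return f(*point)
    return (g(A[k] + h) - g(A[k] - h)) / (2 * h)

f = lambda x, y, z: x * y * y + z   # sample function (our choice)
A = (2.0, 3.0, 1.0)
# By hand: f_x = y^2 = 9, f_y = 2xy = 12, f_z = 1 at A.
print([round(partial_derivative(f, A, k), 6) for k in range(3)])
```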

So, this is the derivative of $z=f(x_1,...,x_n)$ with respect to $x_k$ with the rest of the variables fixed. These are, of course, just the slopes of the graph in the directions of the axes.

Definition. Suppose $z=f(X)$ is defined at $X=A$ and $$l(X)=f(A)+M\cdot (X-A)$$ is any of its linear approximations at that point. Then, $z=l(X)$ is called the best linear approximation of $f$ at $X=A$ if the following is satisfied: $$\lim_{X\to A} \frac{ f(X) -l(X) }{||X-A||}=0.$$ In that case, the function $f$ is called differentiable at $X=A$. Then the vector $M$ is called the gradient or the derivative of $f$ at $A$.

The numerator in the formula is the error of the approximation and the denominator is the length of the “run”.
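The ratio of the error to the run can be watched numerically as $X$ approaches $A$. The sketch below uses two sample functions of our own choosing: a polynomial with its linear approximation computed by hand, and a cone, which has a corner at the origin, paired with the horizontal plane as one candidate approximation.

```python
import math

def error_ratio(f, l, A, X):
    """(f(X) - l(X)) / ||X - A||: approximation error relative to the run."""
    run = math.hypot(X[0] - A[0], X[1] - A[1])
    return (f(*X) - l(*X)) / run

A = (1.0, 2.0)
f = lambda x, y: x * x + x * y                              # sample function (our choice)
l = lambda x, y: 3.0 + 4.0 * (x - 1.0) + 1.0 * (y - 2.0)    # f(A) + M.(X - A), M = <4, 1>

cone = lambda x, y: math.hypot(x, y)                        # has a corner at the origin
flat = lambda x, y: 0.0                                     # one candidate approximation there

for t in (1e-1, 1e-2, 1e-3):
    X = (A[0] + t, A[1] + t)
    print(t, error_ratio(f, l, A, X), error_ratio(cone, flat, (0.0, 0.0), (t, t)))
```

The first ratio shrinks in proportion to the run, while the second stays at $1$. Tilting the plane does not help the cone: the ratio then fails to go to $0$ along some other direction.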

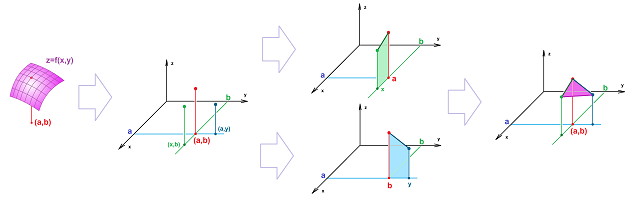

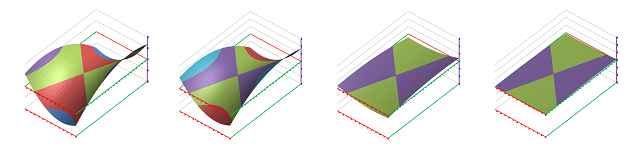

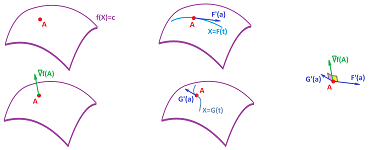

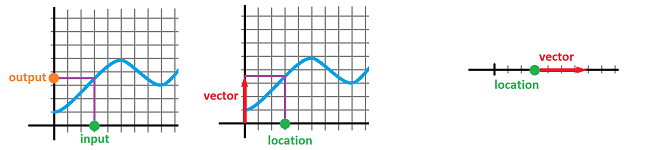

The result is the tangent line in dimension $1$ and the tangent plane in dimension $2$:

So, we stick with the functions of $n$ variables the graphs of which -- on a small scale -- look like lines in dimension $n=1$, like planes in dimension $n=2$, and generally like ${\bf R}^n$!

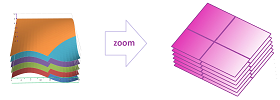

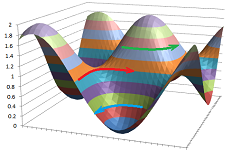

But there is more to it: what about the level curves? Below is a visualization of a differentiable function of two variables and its level curves:

Not only do these curves look like straight lines when we zoom in, they also progress at a uniform rate. For example, this function is not differentiable:

Notation. There are multiple ways to write the gradient. First is the Lagrange style: $$f'(A),$$ and the Leibniz style: $$\frac{df}{dX}(A) \text{ and }\frac{dz}{dX}(A).$$ The following is also very common in science and engineering: $$\nabla f(A) \text{ and } \operatorname{grad}f(A).$$

Note that the gradient notation is to be read as: $$\big(\nabla f\big)(A),\ \big(\operatorname{grad}f\big)(A),$$ i.e., the gradient is computed and then evaluated at $X=A$.

Theorem. For a function differentiable at $X=A$, there is only one best linear approximation at $A$.

Proof. By contradiction. Suppose we have two such functions $$l(X)=f(A)+M\cdot (X-A)\ \text{ with }\ \lim_{X\to A} \frac{ f(X) -l(X) }{||X-A||}=0,$$ and $$q(X)=f(A)+Q\cdot (X-A)\ \text{ with }\ \lim_{X\to A} \frac{ f(X) -q(X) }{||X-A||}=0.$$ Then we have $$\lim_{X\to A} \bigg( \frac{ f(X) -f(A) }{||X-A||} -\frac{ M\cdot (X-A)}{||X-A||} \bigg)=0,$$ and $$\lim_{X\to A} \bigg( \frac{ f(X) -f(A) }{||X-A||} -\frac{ Q\cdot (X-A)}{||X-A||} \bigg)=0.$$ Subtracting the first from the second, by the Sum Rule we have: $$\lim_{X\to A} \bigg( \frac{ M\cdot (X-A)}{||X-A||} -\frac{ Q\cdot (X-A)}{||X-A||} \bigg)=0,$$ or $$\lim_{X\to A} \frac{ M\cdot (X-A)- Q\cdot (X-A)}{||X-A||} =0,$$ or $$\lim_{X\to A} \frac{ (M-Q)\cdot (X-A)}{||X-A||} =0,$$ or $$\lim_{X\to A} (M-Q)\cdot\frac{ X-A}{||X-A||} =0.$$ The fraction is a unit vector that has no limit of its own: it can point in any direction as $X$ approaches $A$. In particular, if $M-Q\ne 0$, we can let $X$ approach $A$ along the direction of $M-Q$, i.e., $X=A+t(M-Q)$ with $t\to 0^+$; then the expression is equal to $||M-Q||$ for every such $X$ and the limit cannot be $0$. Therefore, $M-Q=0$. $\blacksquare$

Below is a visualization of a differentiable function of three variables given by its level surfaces:

Not only do these surfaces look like planes when we zoom in, they also progress at a uniform rate. For example, this function is not differentiable:

Theorem. If $$l(X)=f(A)+M\cdot (X-A)$$ is the best linear approximation of $z=f(X)$ at $X=A$, then $$M=\operatorname{grad}f(A)=\left<\frac{\partial f}{\partial x_1}(A),..., \frac{\partial f}{\partial x_n}(A)\right>.$$

Exercise. Prove the theorem.

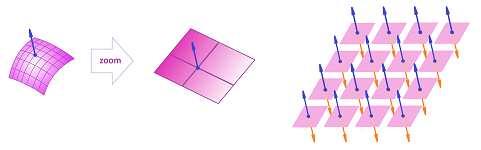

Now, suppose we carry out this procedure of linear approximation at each location throughout the domain of the function. We will have a vector at each location. This is a vector field!

The gradient serves as the derivative of a differentiable function.

Warning: When the function is not differentiable, combining its variables, say $x$ and $y$, into one, $X=(x,y)$, may be ill-advised even when the partial derivatives make sense.

Example (gradient ascent/descent).

Algebraic properties of the difference quotients and the gradients

Just as in dimension $1$, differentiation is a special kind of function too, a function of functions: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccccc} f & \mapsto & \begin{array}{|c|}\hline\quad \frac{d}{dX} \quad \\ \hline\end{array} & \mapsto & G=f' \end{array}$$ The main difference is that the domain and the range of this function are different. We need to understand how this function operates.

Warning: Even though the derivative of a parametric curve in ${\bf R}^n$ at a point and the derivative of a function of $n$ variables at a point are both vectors in ${\bf R}^n$, this doesn't make the two derivatives similar.

We start with linear functions. After all, they serve as good-enough substitutes for the functions around a fixed point. This is the “Linear Sum Rule”: $$\begin{array}{ll|l} &\text{linear function}&\text{its gradient}\\ \hline f(X)&=p+M\cdot (X-A)&M\\ +\\ g(X)&=q+N\cdot (X-A)&N\\ \hline f(X)+g(X)&=p+q+(M+N)\cdot (X-A)&M+N\\ \end{array}$$ We used the linearity of the dot product. The “Linear Constant Multiple Rule” relies on the same property: $$\begin{array}{ll|l} &\text{linear function}&\text{its gradient}\\ \hline f(X)&=p+M\cdot (X-A)&M\\ \cdot k\\ \hline k\cdot f(X)&=kp+(kM)\cdot (X-A)&kM\\ \end{array}$$

Now, the rules for the general case follow from these two via limits and the corresponding rules for limits.

Theorem (Sum Rule). (A) The difference and the difference quotient of the sum of two functions is the sum of their differences and difference quotients respectively; i.e., for any two functions of several variables $f,g$ defined at the nodes $X$ and $X+\Delta X$ of the partition, we have the differences and difference quotients defined at the corresponding secondary node $C$ satisfy: $$\Delta(f+g)\, (C)=\Delta f\, (C)+\Delta g\, (C),$$ and $$\frac{\Delta(f+g)}{\Delta X}(C)=\frac{\Delta f}{\Delta X}(C)+\frac{\Delta g}{\Delta X}(C).$$ (B) The sum of two functions differentiable at a point is differentiable at that point and its derivative is equal to the sum of their derivatives; i.e., for any two functions $f,g$ differentiable at $X=A$, we have: $$\frac{d(f+g)}{dX}(A)= \frac{df}{dX}(A) + \frac{dg}{dX}(A).$$

Proof. Suppose $$l(X)=f(A)+M\cdot (X-A)\text{ and } k(X)=g(A)+N\cdot (X-A)$$ are the best linear approximations at $A$ of $f$ and $g$ respectively. Then, the following is satisfied: $$\lim_{X\to A} \frac{ f(X)-l(X) }{||X-A||}=0\text{ and }\lim_{X\to A} \frac{ g(X)-k(X) }{||X-A||}=0.$$ We can add the two limits together, as allowed by the Sum Rule for Limits, and then manipulate the expression: $$\begin{array}{lll} 0&=\lim_{X\to A} \frac{ f(X)-l(X) }{||X-A||}+\lim_{X\to A} \frac{ g(X)-k(X) }{||X-A||}\\ &=\lim_{X\to A} \frac{ \big(f(X)+g(X)\big)-\big(l(X)+k(X)\big) }{||X-A||}.\\ \end{array}$$ According to the definition, $$l(X)+k(X)=f(A)+g(A)+(M+N)\cdot (X-A)$$ is the best linear approximation of $f+g$ at $A$ and, therefore, the gradient of $f+g$ at $A$ is $M+N$. $\blacksquare$

Theorem (Constant Multiple Rule). (A) The difference and the difference quotient of a multiple of a function is the multiple of the difference and the difference quotient respectively; i.e., for any real $k$ and any function $f$ defined at the nodes $X$ and $X+\Delta X$ of the partition, we have the differences and the difference quotients defined at the corresponding secondary node $C$ satisfy: $$\Delta(k\cdot f)\, (C)=k\cdot \Delta f\, (C),$$ and $$\frac{\Delta(k\cdot f)}{\Delta X}(C)=k\cdot \frac{\Delta f}{\Delta X}(C).$$ (B) A multiple of a function differentiable at a point is differentiable at that point and its derivative is equal to the multiple of the function's derivative; i.e., for any real $k$ and any function $f$ differentiable at $X=A$, we have: $$\frac{d(k\cdot f)}{dX}(A) = k\cdot \frac{df}{dX}(A).$$

Exercise. Prove the theorem.

Unfortunately, multiplication and division of linear functions do not produce linear functions...

Warning: Just as in the case of numerical functions, we face and reject the “naive” product rule: the derivative of the product is not the product of the derivatives! Not only do the units not match, it's worse this time: all three of the derivatives are vectors and the product of two can't give us the third...

Theorem (Product Rule). (A) The difference and the difference quotient of the product of two functions is found as a combination of these functions and their difference and difference quotients respectively; for any two functions $f,g$ defined at the nodes $X$ and $X+\Delta X$ of the partition, we have the differences and the difference quotients defined at the corresponding secondary node $C$ satisfy: $$\Delta (f\cdot g)\, (C)=f(X+\Delta X) \cdot \Delta g\, (C) + \Delta f\, (C) \cdot g(X),$$ and $$\frac{\Delta (f\cdot g)}{\Delta X}(C)=f(X+\Delta X) \cdot \frac{\Delta g}{\Delta X}(C) + \frac{\Delta f}{\Delta X}(C) \cdot g(X).$$ (B) The product of two functions differentiable at a point is differentiable at that point and its derivative is found as a combination of these functions and their derivatives; specifically, given two functions $f,g$ differentiable at $X=A$, we have: $$\frac{d(f\cdot g)}{dX}(A) = f(A)\cdot \frac{dg}{dX}(A) + \frac{df}{dX}(A)\cdot g(A).$$

The formula is identical to that for numerical functions but we have to examine it carefully; some things have changed! Indeed, in the right-hand side either term is the product of the value of one of the functions (a number) and the value of the gradient of the other (a vector). Furthermore we have a vector at the end of the computation: $$\begin{array}{ccccc} \text{scalar}&&\text{vector}&&\text{vector}&&\text{scalar}\\ f(A)& \cdot&\nabla g\, (A)& +& \nabla f\, (A)&\cdot &g(A)\\ &\text{vector}&&&&\text{vector}&\\ &&&\text{vector} \end{array}$$ It matches the left-hand side.

Moreover, when $A$ varies, the formulas take the form with the algebraic operations discussed in the last section: $$(f \cdot g)' = f\cdot g' + f'\cdot g.$$ Here, either term is the product of one of the functions, a scalar function, and the gradient of the other, a vector field. Such a product is again a vector field and so is their sum. It matches the left-hand side.
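Part (A) of the Product Rule is an exact algebraic identity, so it can be checked with plain arithmetic on a single edge. In the sketch below, the two functions and the edge are our own choices.

```python
def delta(h, X, X_next):
    """Difference of h along the edge from node X to node X_next."""
    return h(*X_next) - h(*X)

f = lambda x, y: x * x + y          # sample functions (our choice)
g = lambda x, y: 3 * x - y * y
fg = lambda x, y: f(x, y) * g(x, y)

X, X_next = (1, 2), (2, 2)          # a horizontal edge with Δx = 1

lhs = delta(fg, X, X_next)
# f is taken at the end of the edge and g at its beginning, as in the theorem
rhs = f(*X_next) * delta(g, X, X_next) + delta(f, X, X_next) * g(*X)
print(lhs, rhs)                     # prints 15 15
```

Note the asymmetry: $f$ is evaluated at $X+\Delta X$ while $g$ is evaluated at $X$. It disappears in the limit, which is how part (B) acquires its symmetric form.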

Proof. $\blacksquare$

Example. Let's differentiate this function:

$\square$

Theorem (Quotient Rule). (A) The difference and the difference quotient of the quotient of two functions is found as a combination of these functions and their differences and difference quotients; for any two functions $f,g$ defined at the nodes $X$ and $X+\Delta X$ of the partition, we have the differences and the difference quotients defined at the corresponding secondary node $C$ satisfy: $$\Delta (f/ g)\, (C)=\frac{\Delta f\, (C) \cdot g(X) - f(X) \cdot\Delta g\,(C)}{g(X)g(X+\Delta X)},$$ and $$\frac{\Delta (f/ g)}{\Delta X}(C)=\frac{\frac{\Delta f}{\Delta X}(C) \cdot g(X) - f(X) \cdot \frac{\Delta g}{\Delta X}(C)}{g(X)g(X+\Delta X)},$$ provided $g(X),g(X+\Delta X) \ne 0$. (B) The quotient of two functions differentiable at a point is differentiable at that point and its derivative is found as a combination of these functions and their derivatives; specifically, given two functions $f,g$ differentiable at $X=A$, we have: $$\frac{d(f/g)}{dX}(A) = \frac{\frac{df}{dX}(A)\cdot g(A) - f(A)\cdot \frac{dg}{dX}(A)}{g(A)^2},$$ provided $g(A) \ne 0$.

Proof. $\blacksquare$

Similar to the previous theorem, either term in the numerator is the product of a scalar function and a vector field. Their sum is a vector field and it's still a vector field when we divide by a scalar function.

This is the summary of the four properties re-stated in the gradient notation: $$\begin{array}{|ll|ll|} \hline \text{SR: }& \nabla(f+g)=\nabla f\, +\nabla g & \text{CMR: }& \nabla (kf)=k\, \nabla f& \text{ for any real }k\\ \hline \text{PR: }& \nabla(fg)=\nabla f\, g+f\, \nabla g& \text{QR: }& \nabla (f/g)=\frac{\nabla f\, g-f\, \nabla g}{g^2} &\text{ wherever }g\ne 0\\ \hline \end{array}$$
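The four rules in the table can be spot-checked numerically. The sketch below is not a proof: it compares central-difference gradients of both sides at a single point, and the functions, the point, the constant $k$, and the tolerance are all our own choices.

```python
import math

def grad(h, x, y, eps=1e-6):
    """Central-difference approximation of the gradient of h at (x, y)."""
    return ((h(x + eps, y) - h(x - eps, y)) / (2 * eps),
            (h(x, y + eps) - h(x, y - eps)) / (2 * eps))

def close(u, v, tol=1e-5):
    """True if the two vectors agree component by component within tol."""
    return all(abs(a - b) < tol for a, b in zip(u, v))

f = lambda x, y: x * x * y           # sample functions (our choice)
g = lambda x, y: math.sin(x) + y + 3
x, y, k = 0.7, 1.3, 2.5
fv, gv = f(x, y), g(x, y)            # scalars
gf, gg = grad(f, x, y), grad(g, x, y)  # vectors

checks = {
    "SR":  close(grad(lambda x, y: f(x, y) + g(x, y), x, y), [a + b for a, b in zip(gf, gg)]),
    "CMR": close(grad(lambda x, y: k * f(x, y), x, y), [k * a for a in gf]),
    "PR":  close(grad(lambda x, y: f(x, y) * g(x, y), x, y), [a * gv + fv * b for a, b in zip(gf, gg)]),
    "QR":  close(grad(lambda x, y: f(x, y) / g(x, y), x, y), [(a * gv - fv * b) / gv**2 for a, b in zip(gf, gg)]),
}
print(checks)
```

In every line a scalar (`fv`, `gv`, or `k`) multiplies a vector (`gf` or `gg`) component by component, matching the scalar-times-vector reading of the formulas.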

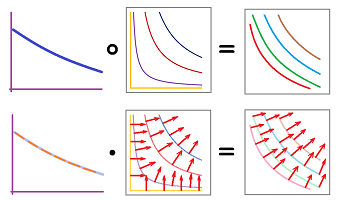

Compositions and the Chain Rule

How does one learn the terrain around him without the ability to fly? By taking hikes around the area! Mathematically, the former is a function of two variables and the latter is a parametric curve. Furthermore, we examine the surface of the graph of this function via its composition with these parametric curves.

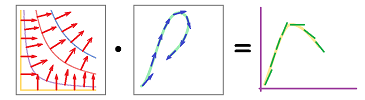

There are two functions with which to compose a function of several variables: a parametric curve before or a numerical function after. This is the former: $$\begin{array}{|ccccc|} \hline &\text{trip map} & & \bigg|\\ \hline t&\longrightarrow & (x,y) &\longrightarrow & z\\ \hline &\bigg| & &\text{terrain map}\\ \hline \end{array}$$ Recall how we interpret this composition. We imagine creating a trip plan as a parametric curve $X=F(t)$: the times and the places put on a simple automotive map, and then bring the terrain map of the area as a function of two variables $z=f(x,y)$:

The former gives us the location for every moment of time and the latter the elevation for every location. Their composition gives us the elevation for every moment of time.

To understand how the derivatives of these two functions are combined, we start with linear functions. In other words, what if we travel along a straight line on a flat, not necessarily horizontal, surface (maybe a roof)? After this simple substitution, the derivatives are found by direct examination: $$\begin{array}{lll|ll} &&\text{linear function}&\text{its derivative}\\ \hline \text{parametric curve:}&X=F(t)&=A+D(t-a)&D&\text{ in } {\bf R}^n\\ &\circ \\ \text{function of several variables: }&z=f(X)&=p+M\cdot (X-A)&M&\text{ in } {\bf R}^n\\ \hline \text{numerical function: }&f(F(t))&=p+M\cdot ( A+D(t-a) -A)\\ &&=p+(M\cdot D)(t-a)&M\cdot D&\text{ in } {\bf R} \end{array}$$ Thus, the derivative of the composition is the dot product of the two derivatives.

We use this result for the general case of arbitrary differentiable functions via their linear approximations. The result is understood in the same way as in dimension $1$:

- If we double our horizontal speed (with the same terrain), the climb will be twice as fast.

- If we double the steepness of the terrain (with the same horizontal speed), the climb will be twice as fast.

It follows that the speed of the climb is proportional to both our horizontal speed and the steepness of the terrain. This number is computed as the dot product of:

- the derivative of the parametric curve $F$ of the trip, i.e., the horizontal velocity $\left< \frac{dx}{dt}, \frac{dy}{dt} \right>$, and

- the gradient of the terrain function $f$, i.e., $\left< \frac{\partial z}{\partial x}, \frac{\partial z}{\partial y} \right> $.
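The dot product described above can be compared with a direct numerical derivative of the composition. This is a sketch under stated assumptions: the trip `F` and the terrain `f` are hypothetical examples, and their derivatives are written out by hand.

```python
import math

def F(t):
    """Hypothetical trip plan: position (x, y) at time t."""
    return (math.cos(t), 2.0 * t)

def F_prime(t):
    """Horizontal velocity <dx/dt, dy/dt> of the trip."""
    return (-math.sin(t), 2.0)

def f(x, y):
    """Hypothetical terrain: elevation at (x, y)."""
    return x * x + 3.0 * x * y

def grad_f(x, y):
    """Gradient of the terrain, <dz/dx, dz/dy>."""
    return (2.0 * x + 3.0 * y, 3.0 * x)

def climb_rate_chain_rule(t):
    """Dot product of the gradient at F(t) and the velocity F'(t)."""
    gx, gy = grad_f(*F(t))
    vx, vy = F_prime(t)
    return gx * vx + gy * vy

def climb_rate_numeric(t, h=1e-6):
    """Central difference quotient of the composition f(F(t))."""
    return (f(*F(t + h)) - f(*F(t - h))) / (2 * h)

t0 = 0.8
print(climb_rate_chain_rule(t0), climb_rate_numeric(t0))
```

The two printed numbers should agree up to the error of the numerical approximation.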

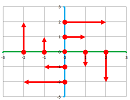

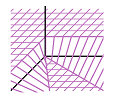

For the discrete case, we need the parametric curve $X=F(t)$ to map the partition for $t$ to the partition for $X$. In other words, it has to follow the grid:

Theorem (Chain Rule I). (A) The difference quotient of the composition of two functions is found as the product of the two difference quotients; i.e., for any parametric curve $X=F(t)$ defined at adjacent nodes $t$ and $t+\Delta t$ of a partition and any function of several variables $z=f(X)$ defined at the adjacent nodes $X=F(t)$ and $X+\Delta X=F(t+\Delta t)$ of a partition, the differences and the difference quotients (defined at the secondary nodes $a$ and $A=F(a)$ within these edges of the two partitions respectively) satisfy: $$\Delta (f\circ F)(a)= \Delta f\, (A),$$ and $$\frac{\Delta (f\circ F)}{\Delta t}(a)= \frac{\Delta f}{\Delta X}(A) \cdot \frac{\Delta F}{\Delta t}(a).$$ (B) The composition of a function differentiable at a point and a function differentiable at the image of that point is differentiable at that point and its derivative is found as the product of the two derivatives. In other words, if a parametric curve $X=F(t)$ is differentiable at $t=a$ and a function of several variables $z=f(X)$ is differentiable at $A=F(a)$, then we have: $$\frac{d (f\circ F)}{dt}(a)= \frac{df}{dX}(A) \cdot \frac{dF}{dt}(a).$$

Proof. $\blacksquare$

Note: While the right-hand side in part (B) involves a dot product, the one in part (A) is a scalar product.
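Part (A) can be illustrated with exact arithmetic. The sketch below assumes a hypothetical path that follows the grid (each step moves along a single edge) and a made-up function `f` on the nodes; for each step it computes the difference quotient of the composition and the product of the two difference quotients.

```python
from fractions import Fraction

def f(x, y):
    """Hypothetical function defined on the nodes of the grid."""
    return x * x * y + x + y

# A path that follows the grid: entries are (time, node).
# The times and the nodes are made up for illustration.
path = [
    (Fraction(0), (Fraction(0), Fraction(0))),
    (Fraction(1, 2), (Fraction(1), Fraction(0))),
    (Fraction(3, 2), (Fraction(1), Fraction(2))),
    (Fraction(2), (Fraction(3), Fraction(2))),
]

def check_step(step):
    """Return both sides of the discrete Chain Rule for one edge of the path."""
    (t0, X0), (t1, X1) = path[step], path[step + 1]
    dt = t1 - t0
    axis = 0 if X1[0] != X0[0] else 1    # the one coordinate that changes
    dX = X1[axis] - X0[axis]
    df = f(*X1) - f(*X0)                 # difference of f over the edge
    lhs = df / dt                        # difference quotient of the composition
    rhs = (df / dX) * (dX / dt)          # product of the two difference quotients
    return lhs, rhs

for k in range(len(path) - 1):
    print(check_step(k))
```

The two entries of each printed pair coincide exactly because the increment of the intermediate variable cancels.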

A function of several variables may appear in another context...

This is the meaning of the composition when our function of several variables is followed by a numerical function: $$\begin{array}{|ccccc|} \hline &\text{terrain map} & & \bigg|\\ \hline (x,y)&\longrightarrow & z &\longrightarrow & u\\ \hline &\bigg| & &\text{pressure}\\ \hline \end{array}$$ Recall how we interpret this composition. In addition to the terrain map of the area as a function of two variables $z=f(x,y)$, we have the atmospheric pressure dependent on the elevation (above the sea level) as a numerical function:

The former gives us the elevation for every location and the latter the pressure for every elevation. Their composition gives us the pressure for every location.

To understand how the derivatives of these two functions are combined, we start with linear functions. After this simple substitution, the derivatives are found by direct examination: $$\begin{array}{lll|ll} &&\text{linear function}&\text{its derivative}\\ \hline \text{function of several variables: }&z=f(X)&=p+M\cdot (X-A)&M&\text{ in } {\bf R}^n\\ &\circ \\ \text{numerical function:}&u=g(z)&=q+m(z-p)&m&\text{ in } {\bf R}\\ \hline \text{function of several variables: }&g(f(X))&=q+m(p+M\cdot (X-A)-p)\\ &&=q+(mM)\cdot (X-A)&mM&\text{ in } {\bf R}^n \end{array}$$ Thus, the derivative of the composition is the scalar product of the two derivatives.

Once again, the function $z=f(X)$ has to map the partition for $X$ to the partition for $z$.

Theorem (Chain Rule II). (A) The difference quotient of the composition of two functions is found as the product of the two difference quotients; i.e., for any function of several variables $z=f(X)$ defined at adjacent nodes $X$ and $X+\Delta X$ of a partition and any numerical function $u=g(z)$ defined at the adjacent nodes $z=f(X)$ and $z+\Delta z=f(X+\Delta X)$ of a partition, we have the differences and the difference quotients (defined at the secondary nodes $A$ and $a=f(A)$ within these edges of the two partitions respectively) satisfy: $$\Delta (g\circ f)(A)= \Delta g\, (a),$$ and $$\frac{\Delta (g\circ f)}{\Delta X}(A)= \frac{\Delta g}{\Delta z}(a) \cdot \frac{\Delta f}{\Delta X}(A).$$ (B) The composition of a function differentiable at a point and a function differentiable at the image of that point is differentiable at that point and its derivative is found as the product of the two derivatives. In other words, if a function of several variables $z=f(X)$ is differentiable at $X=A$ and a numerical function $u=g(z)$ is differentiable at $a=f(A)$, then we have: $$\frac{d (g\circ f)}{dX}(A)= \frac{dg}{dz}(a) \cdot \frac{df}{dX}(A).$$

Proof. $\blacksquare$

Note: While the right-hand side in part (B) involves a scalar product, the one in part (A) is a product of two numbers.
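Part (B) of this second form of the Chain Rule can also be checked numerically. The sketch below assumes a hypothetical terrain `f` and a hypothetical pressure function `g`; the gradient of the composition is computed as the number $g'(f(X))$ times the vector $\nabla f(X)$ and then compared with central difference quotients.

```python
import math

def f(x, y):
    """Hypothetical terrain: elevation at (x, y)."""
    return x * x + x * y

def grad_f(x, y):
    """Gradient of the terrain."""
    return (2.0 * x + y, x)

def g(z):
    """Hypothetical pressure as a function of elevation."""
    return math.exp(-0.1 * z)

def g_prime(z):
    """Derivative of the pressure with respect to elevation."""
    return -0.1 * math.exp(-0.1 * z)

def grad_composition(x, y):
    """Chain Rule II: the number g'(f(X)) times the vector grad f(X)."""
    m = g_prime(f(x, y))
    return tuple(m * c for c in grad_f(x, y))

def grad_numeric(x, y, h=1e-6):
    """Central difference quotients of u = g(f(x, y))."""
    def u(a, b):
        return g(f(a, b))
    return ((u(x + h, y) - u(x - h, y)) / (2 * h),
            (u(x, y + h) - u(x, y - h)) / (2 * h))

X0 = (1.0, 2.0)
print(grad_composition(*X0), grad_numeric(*X0))
```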

Notice how the intermediate variable is “cancelled” in the Leibniz notation in both of the two forms of the Chain Rule; first: $$\frac{dz}{\not{dX}}\cdot\frac{\not{dX}}{dt}=\frac{dz}{dt};$$ and second: $$\frac{du}{\not{dz}}\cdot\frac{\not{dz}}{dX}=\frac{du}{dX}.$$

Thus, in spite of the fact that these two compositions are very different, the Chain Rule has a somewhat informal -- but single -- verbal interpretation: the derivative of the composition of two functions is the product of the two derivatives. The word “product”, as we just saw, is also ambiguous. We saw the multiplication of two numbers in the beginning of the book, then the dot product of two vectors, and finally the product of a vector and a number: $$\begin{array}{lll} (f\circ g)'(x)&=f'(g(x))&\cdot g'(x),\\ (f\circ F)'(t)&=\nabla f(F(t))&\cdot F'(t),\\ \nabla (g\circ f)(X)&=g'(f(X))&\cdot \nabla f(X).\\ \end{array}$$ The context determines the meaning and this ambiguity serves a purpose: we will see later how this wording is, in a rigorous way, applicable to the composition of any two functions.

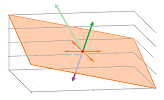

The gradient is perpendicular to the level curves

The result we have been alluding to is that the direction of the gradient is the direction of the fastest growth of the function. It is proven later but here we just consider the relation between the gradient and the level curves, i.e., the curves of constant value, of the function.

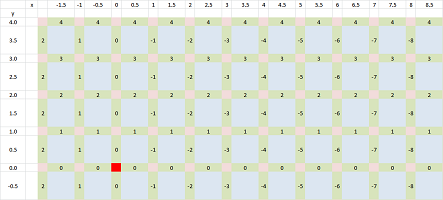

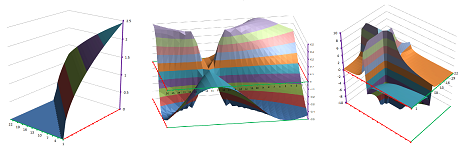

Example. A function defined at the nodes on the plane is shown in the first column with its level curve visualized:

In the second column, the difference quotient is computed and then below it is visualized. This curve and this vector are perpendicular. $\square$

Exercise. Consider other possible arrangements of the values of the function and confirm the conjecture.

Example. In the familiar example of a plane: $$f(x,y)=2x+3y,$$ the gradient is a constant vector field: $$\nabla f(x,y)=<2,3>.$$ Meanwhile, its level curves are parallel straight lines: $$2x+3y=c.$$

The slope is $-2/3$, which makes them perpendicular to the gradient vector $<2,3>$: a direction vector of these lines is $<3,-2>$, and $<3,-2>\cdot <2,3>=0$. $\square$

We then conjecture that the gradient and the level curves are perpendicular to each other.

Let's consider a general linear function of two variables: $$z=f(x,y)=c+m(x-a)+n(y-b),$$ and $M=\nabla f=<m,n>$ the gradient of $f$. Let's pick a simple vector $D=<-n,m>$ perpendicular to $M=<m,n>$. Consider this straight line with $D$ as a direction vector: $$F(t)=(a,b)+<-n,m>t.$$ We substitute it into $f$: $$f(F(t))=f(a-nt,b+mt)=c+m(-nt)+n(mt)=c.$$ The composition is constant and, therefore, the line stays within a level curve of $f$. The conjecture is confirmed.
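The substitution above can be replayed with exact rational arithmetic. This is a small sketch with hypothetical values of $a,b,c,m,n$; it evaluates $f$ along the line with direction $D=<-n,m>$ and collects the values.

```python
from fractions import Fraction

# Hypothetical coefficients of the linear function z = c + m(x-a) + n(y-b).
a, b, c = Fraction(1), Fraction(-2), Fraction(5)
m, n = Fraction(2), Fraction(3)

def f(x, y):
    """The linear function with gradient M = <m, n>."""
    return c + m * (x - a) + n * (y - b)

def F(t):
    """The line through (a, b) with direction D = <-n, m>."""
    return (a - n * t, b + m * t)

# Values of the composition f(F(t)) at several moments of time.
values = [f(*F(Fraction(k, 4))) for k in range(-8, 9)]
print(set(values))
```

The set contains a single value, $c$, so the line stays within one level curve of $f$.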

Example. In the familiar example of a circular paraboloid: $$f(x,y)=x^2+y^2,$$ the gradient consists of the radial vectors: $$\nabla f(x,y)=<2x,2y>.$$ Meanwhile, its level curves are circles: $$x^2+y^2=c \text{ for }c>0.$$

The radii of a circle are known (and are seen above) to be perpendicular to the circle! $\square$

We need to make our conjecture precise before proving it. First, the level curves of a function of two variables aren't necessarily curves. They are just sets in the plane. For example, when $f$ is constant, all the level sets are empty but one which is the whole plane: $$f(x,y)=c\ \Longrightarrow\ \{(x,y):f(x,y)=b\}=\emptyset \text{ when }b\ne c\text{ and } \{(x,y):f(x,y)=c\}={\bf R}^2.$$ Furthermore, even when a level curve is a curve, it's an implicit curve and isn't represented by a function.

Note: the question of when exactly level curves are curves will be discussed later.

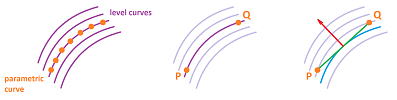

How do we sort this out? Just as before, we study the terrain by taking these hikes -- parametric curves -- and this time we choose an easy one: no climbing. We stay at the same elevation:

In other words, our function $z=f(X)$ does not change along this parametric curve $X=F(t)$, i.e., their composition is constant: $$f(F(t))=\text{ constant }.$$ As you can see, this doesn't mean that the path of $F$ is a level set but simply its subset. We can go slow or fast and we can go in either direction...

Second, what do we mean when we say a parametric curve and a vector are perpendicular to each other? The direction of a curve at a point is its tangent vector at that point, by definition!

We are then concerned with: $$\text{ the angle between } \nabla f(A) \text{ and } F'(a),\text{ where }A=F(a),$$ and, therefore, with their dot product: $$\nabla f(F(a))\cdot F'(a).$$ Is it zero? But we just saw this expression in the last section! It's the right-hand side of the Chain Rule: $$(f\circ F)'(a)=\nabla f(F(a))\cdot F'(a).$$ Why is the left-hand side zero? Because it's the derivative of a constant function! Indeed, the path of $F$ lies within a level curve of $f$. So, we have: $$0=\frac{d}{dt}f(F(t))\bigg|_{t=a}=(f\circ F)'(a)=\nabla f(F(a))\cdot F'(a).$$ So, we have demonstrated that level curves and the gradient vectors are perpendicular: $$\nabla f(A) \perp F'(a).$$

What remain are just some caveats. First, the functions have to be differentiable for the derivatives to make sense. Second, neither of these derivatives should be zero or the angle between them will be undefined ($\nabla f(A)\ne 0$ and $F'(a)\ne 0$).
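The argument can be tested on a level curve that isn't a circle. The sketch below assumes the hypothetical function $f(x,y)=x^2+4y^2$, whose level curve $f=4$ is an ellipse parametrized by $F(t)=(2\cos t,\sin t)$, and computes the dot product of the gradient and the tangent vector at several points.

```python
import math

def grad_f(x, y):
    """Gradient of the hypothetical function f(x, y) = x^2 + 4 y^2."""
    return (2.0 * x, 8.0 * y)

def F(t):
    """Parametrization of the level curve x^2 + 4 y^2 = 4 (an ellipse)."""
    return (2.0 * math.cos(t), math.sin(t))

def F_prime(t):
    """Tangent vector of the parametric curve."""
    return (-2.0 * math.sin(t), math.cos(t))

def dot_at(t):
    """Dot product of the gradient at F(t) and the tangent vector F'(t)."""
    gx, gy = grad_f(*F(t))
    vx, vy = F_prime(t)
    return gx * vx + gy * vy

dots = [dot_at(0.5 * k) for k in range(13)]
print(max(abs(d) for d in dots))
```

The largest dot product is zero up to rounding, even though the gradient here is not radial.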

Theorem. (A) Suppose a function $z=f(X)$ of several variables is defined at the adjacent nodes $X$ and $X+\Delta X\ne X$ of a partition. Then, if these two nodes lie within a level set of $z=f(X)$, i.e., $f(X)=f(X+\Delta X)$, then $$\frac{\Delta f}{\Delta X}(A)=0,$$ where $A$ is the secondary node of this edge. (B) Suppose a function of several variables $z=f(X)$ is differentiable at $X=A$ and a parametric curve $X=F(t)$ is differentiable on an open interval $I$ that contains $a$ with $F(a)=A$. Then, if the path of $X=F(t)$ lies within a level set of $z=f(X)$, then $$\frac{df}{dX}(A)\perp\frac{dF}{dt}(a),$$ provided both are non-zero.

Exercise. What about the converse?

We now demonstrate the following result that mixes the discrete and the continuous.

Corollary. Suppose a function of several variables $z=f(X)$ is differentiable on an open set $U$ in ${\bf R}^n$. Suppose a parametric curve $X=F(t)$ is defined at adjacent nodes $t$ and $t+\Delta t$ of a partition. Suppose the points $P=F(t)$ and $Q=F(t+\Delta t)$ are distinct and lie within a level set of $z=f(X)$, i.e., $f(P)=f(Q)$, and the segment $PQ$ between them lies entirely within $U$. Then, for some point $A$ on $PQ$ and a secondary node $a$ of $[t,t+\Delta t]$, we have $$\frac{df}{dX}(A)\perp\frac{\Delta F}{\Delta t}(a),$$ provided the gradient is non-zero in $U$.

Proof. Let $X=L(t)$ be the linear parametric curve with $L(t)=P$ and $L(t+\Delta t)=Q$. Then, $$\frac{dL}{dt}(a)=\frac{\Delta F}{\Delta t}(a),$$ for any choice of a secondary node $a$ of the interval $[t,t+\Delta t]$. We define a new numerical function on the interval $[t,t+\Delta t]$: $$h=f\circ L.$$ It is differentiable and it coincides with $f\circ F$ at the nodes $t$ and $t+\Delta t$. Then by the Mean Value Theorem, there is such a secondary node $a$ that: $$\frac{\Delta h}{\Delta t}(a)=\frac{dh}{dt}(a).$$ Since the former is zero by the assumption $f(P)=f(Q)$, we can apply the Chain Rule and conclude the following about the latter: $$0=\frac{dh}{dt}(a)= \frac{df}{dX}(A)\cdot\frac{dL}{dt}(a)=\frac{df}{dX}(A)\cdot\frac{\Delta F}{\Delta t}(a),$$ where $A=L(a)$. $\blacksquare$
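The point $A$ promised by the corollary can be located numerically. The sketch below is only an illustration under stated assumptions: the function $f(x,y)=x^2+y^3$ and the points $P=(1,0)$, $Q=(0,1)$ with $f(P)=f(Q)=1$ are hypothetical, and bisection is used in place of the Mean Value Theorem to find where the gradient is perpendicular to the segment $PQ$.

```python
def grad_f(x, y):
    """Gradient of the hypothetical function f(x, y) = x^2 + y^3."""
    return (2.0 * x, 3.0 * y * y)

P, Q = (1.0, 0.0), (0.0, 1.0)        # f(P) = f(Q) = 1
chord = (Q[0] - P[0], Q[1] - P[1])   # a multiple of the difference quotient of F

def L(s):
    """Point of the segment PQ for s in [0, 1]."""
    return (P[0] + s * chord[0], P[1] + s * chord[1])

def dot_with_chord(s):
    """Dot product of the gradient at L(s) and the chord vector."""
    gx, gy = grad_f(*L(s))
    return gx * chord[0] + gy * chord[1]

def find_perpendicular_point(tol=1e-12):
    """Bisection for a sign change of dot_with_chord on [0, 1]."""
    left, right = 0.0, 1.0
    assert dot_with_chord(left) < 0 < dot_with_chord(right)
    while right - left > tol:
        mid = 0.5 * (left + right)
        if dot_with_chord(mid) < 0:
            left = mid
        else:
            right = mid
    return 0.5 * (left + right)

s_star = find_perpendicular_point()
A = L(s_star)
print(A, dot_with_chord(s_star))
```

The printed dot product is zero up to the tolerance of the bisection, so the gradient at this point $A$ of the segment is perpendicular to $\frac{\Delta F}{\Delta t}$.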

The theorem remains valid no matter how a parametric curve traces the level curve as long as it doesn't stop. There are then only two main ways -- back and forth -- that a parametric curve can follow the level curve. But wait a minute, the theorem doesn't speak exclusively of functions of two variables, does it? It also applies to level surfaces of functions of three variables. The basic idea is the same: a parametric curve within the level surface is perpendicular to the gradient, even though there are infinitely many directions in which such a curve can pass through the point.

With all the variety of angles between their tangents, they all have the same angle with the gradient. In this exact sense we speak of the gradient being perpendicular to the level surface.

This result is a free gift courtesy of abstract thinking and the vector notation!

Example. The radial vector field, $$V(x,y,z)=<x,y,z>,$$ as well as the vector field of gravitation, $$W(x,y,z)=-\frac{c}{||<x,y,z>||^3}<x,y,z>,$$ are gradients of functions whose level surfaces are concentric spheres. The gradient vectors point away from the origin in the former case and towards it in the latter. One can imagine how, no matter what path you take on the surface of the Earth, your body will point away from the center. $\square$

With this theorem we can interpret the idea that the gradient points in the direction of the fastest growth of the function: this is the shortest path toward the “next” level curve.

This informal explanation isn't good enough anymore. We will make the terms in this statement fully precise next.

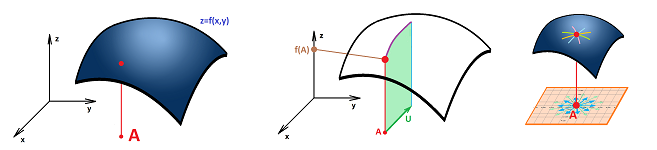

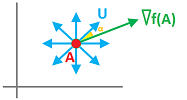

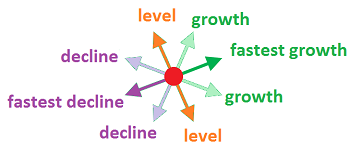

Monotonicity of functions of several variables

Suppose $z=f(X)$ is a function of $n$ variables. Suppose $A$ is a point in ${\bf R}^n$. Then $V=\nabla f(A)\ne 0$ is a vector. As such it has a direction. More precisely, the direction of $V$ is its normalization, the unit vector $V/||V||$. Thus, the first part of the statement is well understood. But what does “the direction of the fastest growth of the function” mean? First, the gradient will be chosen from all possible directions, i.e., all unit vectors.

Then, what does the “growth of the function in the direction of a unit vector” mean?

Let's first take a look at dimension $n=1$. There are only two unit vectors, $i$ and $-i$, along the $x$-axis. Therefore, if $f'(A)>0$, then $i$ is the direction of the fastest growth; meanwhile, if $f'(A)<0$, it's $-i$.

For higher dimensions, we certainly know what this statement means when the direction coincides with the direction of one of the axes: it's the partial derivative (vectors $i,\ -i$, $j,\ -j$ etc.). However, if we are exploring the terrain represented by a function of two variables, going only north-south or east-west is not enough. The idea comes from the earlier part of this section: we, again, take various trips around this terrain. This time we don't have to go far or follow any complex routes: we'll go along straight lines. Also, in order to compare the results, we will travel at the same speed, $1$, during all trips.

We will consider all parametric curves $X=F_U(t)$ that

- start at $X=A$, i.e., $F_U(0)=A$,

- are linear, i.e., $F_U(t)=A+tU$, and, furthermore,

- have a unit direction vector, i.e., $||U||=1$.

Warning: we are able to ignore non-linear parametric curves only under the assumption that $f$ is differentiable.

Now we compare the rate of growth of $f$ along these parametric curves by considering their composition with $f$: $$h_U(t)=f(F_U(t)).$$

So, the rate of growth we are after is this: $$h'_U(0)=\frac{d}{dt}f(F_U(t))\bigg|_{t=0}=\nabla f(F_U(t))\cdot F_U'(t)\bigg|_{t=0}=\nabla f(A)\cdot U,$$ according to the Chain Rule. There is a convenient term for this quantity. The directional derivative of a function $z=f(X)$ at point $X=A$ in the direction of a unit vector $U$ is defined to be $$D_U(f,A)=\nabla f(A)\cdot U.$$

We continue: $$D_U(f,A)=||\nabla f(A)||\cdot ||U||\cos \alpha=||\nabla f(A)||\cos \alpha,$$ where $\alpha$ is the angle between $\nabla f(A)$ and $U$. As the gradient is known and fixed, the directional derivative in a particular direction depends on its angle with the gradient, as expected.

Now, this expression is easy to maximize over all $U$. What direction, i.e., a unit vector $U$, provides the highest value of $D_U(f,A)$? Only $\cos \alpha$ matters and it reaches its maximum value, which is $1$, at $\alpha =0$. In other words, the maximum is reached when the direction coincides with that of the gradient!

Theorem (Monotonicity Theorem). Suppose $z=f(X)$ is a function of $n$ variables differentiable at a point $A$ in ${\bf R}^n$ with $\nabla f(A)\ne 0$. Then the directional derivative $D_U(f,A)$ reaches its maximum in the direction $U$ of the gradient $\nabla f(A)$ of $f$ at $A$; this maximum value is $||\nabla f(A)||$.
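The theorem can be illustrated by brute force in dimension $2$. The sketch below assumes a hypothetical function $f(x,y)=x^2y+y$ and a point $A=(1,2)$; it samples many unit vectors $U$, picks the one with the largest directional derivative, and compares it with the normalized gradient.

```python
import math

def grad_f(x, y):
    """Gradient of the hypothetical function f(x, y) = x^2 y + y."""
    return (2.0 * x * y, x * x + 1.0)

def directional_derivative(A, U):
    """D_U(f, A) computed as the dot product of the gradient and U."""
    gx, gy = grad_f(*A)
    return gx * U[0] + gy * U[1]

A = (1.0, 2.0)
N = 3600  # number of sampled directions
angles = [2.0 * math.pi * k / N for k in range(N)]
best = max(angles, key=lambda th: directional_derivative(A, (math.cos(th), math.sin(th))))
best_U = (math.cos(best), math.sin(best))
best_value = directional_derivative(A, best_U)

gx, gy = grad_f(*A)
norm = math.hypot(gx, gy)
print(best_U, (gx / norm, gy / norm))   # best sampled direction vs. gradient direction
print(best_value, norm)                 # best sampled value vs. magnitude of gradient
```

The agreement is only approximate because the directions are sampled with a finite step.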

This is the summary of the theorem and the rest of the analysis:

Exercise. Explain the diagram.

Theorem. The directional derivative of a function $z=f(X)$ at point $X=A$ in the direction of a unit vector $U$ is also found as the following limit: $$D_U(f,A)=\lim_{h\to 0}\frac{f(A+hU)-f(A)}{h}.$$
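The limit in this theorem can be watched numerically. In the sketch below, the function, the point, and the unit vector are hypothetical choices; the difference quotient is computed for shrinking values of $h$ and compared with $\nabla f(A)\cdot U$.

```python
def f(x, y):
    """Hypothetical function of two variables."""
    return x * x * y + y

def grad_f(x, y):
    """Its gradient, computed by hand."""
    return (2.0 * x * y, x * x + 1.0)

def quotient(A, U, h):
    """The difference quotient (f(A + hU) - f(A)) / h."""
    return (f(A[0] + h * U[0], A[1] + h * U[1]) - f(*A)) / h

A = (1.0, 2.0)
U = (3.0 / 5.0, 4.0 / 5.0)   # a unit vector
exact = grad_f(*A)[0] * U[0] + grad_f(*A)[1] * U[1]

steps = (1e-1, 1e-2, 1e-3, 1e-4)
errors = [abs(quotient(A, U, h) - exact) for h in steps]
for h, err in zip(steps, errors):
    print(h, quotient(A, U, h), err)
```

The errors shrink roughly in proportion to $h$, as one would expect from a one-sided difference quotient.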

Exercise. Prove the theorem.

Exercise. Represent each partial derivative as a directional derivative.

This is what happens with functions of $3$ variables:

All vectors on one side of the level surface are the directions of increasing values of the function and all on the other side decreasing.

Differentiation and anti-differentiation

Let's review the algebraic properties of differentiation of functions of several variables. The properties are the same as before!

Theorem (Algebra of Derivatives). For any differentiable functions, we have in the gradient notation: $$\begin{array}{|ll|ll|} \hline \text{SR: }& \nabla(f+g)=\nabla f+\nabla g & \text{CMR: }& \nabla (cf)=c\nabla f& \text{ for any real }c\\ \text{PR: }& \nabla(fg)=\nabla f\, g+f\nabla g& \text{QR: }& \nabla (f/g)=\frac{\nabla f\, g-f \nabla g}{g^2} &\text{ wherever }g\ne 0\\ \text{CR1: }& (f\circ F)'=\nabla f\cdot F'& \text{CR2: }& \nabla (g\circ f)=g'\,\nabla f\\ \hline \end{array}$$

Example. $\square$

The Mean Value Theorem (Chapter 9) will help us to derive facts about the function from the facts about its gradient. For example: $$\begin{array}{l|l|ll} \text{info about }f &&\text{ info about }\nabla f\\ \hline f\text{ is constant }&\Longrightarrow &\nabla f \text{ is zero}\\ &\overset{?}{\Longleftarrow}&\\ \hline f\text{ is linear}&\Longrightarrow &\nabla f \text{ is constant}\\ &\overset{?}{\Longleftarrow}&\\ \hline \end{array}$$ Are these arrows reversible? If the derivative of the function is zero, does it mean that the function is constant? At this time, we have a tool to prove this fact.

Consider this simple statement about terrains:

- “if there is no sloping anywhere in the terrain, it's flat”.

If $z=f(X)$ represents the elevation, we can restate this mathematically.

Theorem (Constant). (A) If a function defined at the nodes of a partition of a cell in ${\bf R}^n$ has a zero difference throughout the partition, then this function is constant over the nodes; i.e., $$\Delta f\,(C) = 0 \ \Longrightarrow\ f=\text{ constant }.$$ (B) If a function differentiable on an open path-connected set $I$ in ${\bf R}^n$ has a zero gradient for all $X$ in $I$, then this function is constant on $I$; i.e., $$\frac{df}{dX}=0 \ \Longrightarrow\ f=\text{ constant }.$$

Proof. (A) If $X$ and $Y$ are two nodes connected by an edge with a secondary node $C$, then we have: $$\Delta f\,(C) = 0 \ \Longrightarrow\ f(X)-f(Y)=0\ \Longrightarrow\ f(X)=f(Y).$$ In a cell, any two nodes can be connected by a sequence of adjacent nodes, with no change in the value of $f$. (B) Suppose two points $A,B$ inside $I$ are given. Then there is a differentiable parametric curve $X=P(t)$ with its path that goes from $A$ to $B$ and lies entirely in $I$: $$P(a)=A,\ P(b)=B,\ P(t)\text{ in }I.$$ Define a new numerical function: $$h(t)=f(P(t)).$$ Then, by the Chain Rule we have: $$\frac{dh}{dt}(t)=\frac{d}{dt}\big( f(P(t)) \big)= \nabla f\, (P(t))\cdot P'(t)=0\cdot P'(t)=0.$$ Then, by the corollary to the Mean Value Theorem in Chapter 9, $h$ is a constant function. In particular, we have $$f(A) = f(B).$$ We will see later that the differentiability requirement is unnecessary. $\blacksquare$

Exercise. What if $\nabla f=0$ on a set that isn't path-connected? Is it still true that $$\nabla f=0 \ \Longrightarrow\ f=\text{ constant }?$$

Just as in dimension $1$, the openness of the domain is crucial.

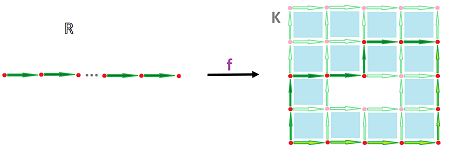

The problem then becomes one of recovering the function $f$ from its derivative (i.e., gradient) $\nabla f$, the process we have called anti-differentiation. In other words, we reconstruct the function from a “field of tangent lines or planes”:

Now, even if we can recover the function $f$ from its derivative $\nabla f$, there are many others with the same derivative, such as $g=f+C$ for any constant number $C$. Are there others? No.

Theorem (Anti-differentiation). (A) If two functions defined at the nodes of a partition of a cell in ${\bf R}^n$ have the same difference, they differ by a constant; i.e., $$\Delta f\,(C) = \Delta g\,(C) \ \Longrightarrow\ f(X) - g(X)=\text{ constant }.$$ (B) If two functions differentiable on an open path-connected set $I$ in ${\bf R}^n$ have the same gradient, they differ by a constant; i.e., $$\frac{df}{dX} =\frac{dg}{dX} \ \Longrightarrow\ f - g=\text{ constant }.$$

Proof. (B) Define $$h(X) = f(X) - g(X).$$ Then, by SR and CMR, we have: $$\nabla h\, (X) = \nabla \left( f(X)-g(X) \right)=\nabla f\, (X)-\nabla g\, (X) =0,$$ for all $X$. Then $h$ is constant, by the Constant Theorem. $\blacksquare$

Geometrically, $$\nabla f =\nabla g \ \Longrightarrow\ f - g=\text{ constant },$$ means that the graph of $f$ shifted vertically gives us the graph of $g$.
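Part (A) of the theorem suggests how a function on the nodes can be rebuilt from its differences and a single known value. The sketch below assumes a hypothetical function `f` on an integer grid; it records the differences over the edges, rebuilds a function `g` from a different starting value, and collects the values of $g-f$.

```python
def differences(func, nx, ny):
    """Differences of func over the horizontal (dx) and vertical (dy) edges."""
    dx = {(i, j): func(i + 1, j) - func(i, j) for i in range(nx) for j in range(ny + 1)}
    dy = {(i, j): func(i, j + 1) - func(i, j) for i in range(nx + 1) for j in range(ny)}
    return dx, dy

def reconstruct(dx, dy, nx, ny, start_value):
    """Rebuild a function on the nodes from its differences and one value."""
    g = {(0, 0): start_value}
    for i in range(nx):                      # walk along the bottom row
        g[(i + 1, 0)] = g[(i, 0)] + dx[(i, 0)]
    for i in range(nx + 1):                  # then walk up each column
        for j in range(ny):
            g[(i, j + 1)] = g[(i, j)] + dy[(i, j)]
    return g

def f(i, j):
    """Hypothetical function on the integer nodes."""
    return i * i - 3 * i * j + 2 * j

nx, ny = 4, 3
dx, dy = differences(f, nx, ny)
g = reconstruct(dx, dy, nx, ny, start_value=10)
gaps = {g[(i, j)] - f(i, j) for i in range(nx + 1) for j in range(ny + 1)}
print(gaps)
```

The set of gaps has a single element: the two functions share their differences and differ by a constant.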

We can cut the list of algebraic rules down to the most important ones: $$\begin{array}{|ll|ll|} \hline \text{Linearity Rule: }& \nabla(\lambda f+\mu g)=\lambda\nabla f+\mu\nabla g \text{ for all real }\lambda, \mu\\ \text{Chain Rule: }& (f\circ F)'=\nabla f\cdot F'\\ \hline \end{array}$$

When is anti-differentiation possible?

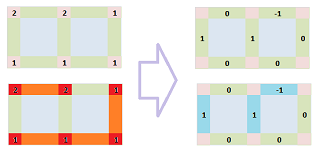

Recall the diagram of partial differentiation of a function of two variables. It produces the difference and the difference quotient (aka the gradient) both of which are functions defined at the secondary nodes of a partition: $$\begin{array}{cccccccccccc} &&&& &f\\ &&&&\swarrow_x&&_y\searrow\\ &&\Delta f=<&\Delta_x f& &,& &\Delta_y f&>\\ \end{array}$$

Definition. A function $G$ defined on the secondary nodes of a partition is called exact if $\Delta f=G$, for some function $f$ defined on the nodes of the partition.

When the secondary nodes aren't specified, we speak of an exact $1$-form.

Definition. A vector field $F$ defined on the secondary nodes of a partition is called gradient if $F(N)\cdot N=G(N)$ for some exact function $G$ and any secondary node $N$.

Not all vector fields are gradient; the example we saw was $F(x,y)=<y,-x>$.

Note that the Anti-differentiation Theorem is an analog of the familiar result from Chapters 8 and 9: $$\Delta f= \Delta g \ \Longrightarrow\ f-g=\text{constant}.$$

How do we know that a given function defined at the secondary nodes is exact? In other words, is it the difference of some function? We have previously solved this problem by finding, or trying and failing to find, such a function. The examples required producing the recursive formulas for $x$ and $y$ and then matching their applications in reverse order. The methods only work when the functions are simple enough. The familiar theorem below gives us a better tool. Surprisingly, this tool is further partial differentiation. We continue the above diagram: $$\begin{array}{ccc} &&&& &f\\ &&&&\swarrow_x&&_y\searrow\\ &&\Delta f=<&\Delta_x f& &,& &\Delta_y f&>\\ &&\swarrow_x &&_y\searrow&&\swarrow_x &&_y\searrow\\ &\Delta^2_{xx}f&&&&\Delta^2_{yx} f=\Delta^2_{xy} f &&&&\Delta^2_{yy} f\\ \end{array}$$

Recall the following result from Chapter 18.

Theorem (Discrete Clairaut's Theorem). Over a partition in ${\bf R}^n$, first, the mixed second differences with respect to any two variables are equal to each other: $$\Delta_{yx}^2 f=\Delta_{xy}^2 f;$$ and, second, the mixed second difference quotients are equal to each other: $$\frac{\Delta^2 f}{\Delta y \Delta x}=\frac{\Delta^2 f}{\Delta x \Delta y}.$$

Thanks to this theorem we can draw conclusions from the assumption that we face a difference. So, the plan is, instead of trying to reverse the arrows in the first row of the diagram, we continue down and see whether we have a match: $$\Delta_y p=\Delta_x q.$$

As a summary, consider an arbitrary function on secondary nodes. It has arbitrary component functions, with no relations between them whatsoever! Everything changes once we make the assumption that it is exact. The theorem ensures that the diagram of the differences of the component functions of some $V=<p,q>$, on left, turns -- under this assumption -- into something rigid, on right: $$\begin{array}{cccccccccccc} &&& &..\\ &&&..&&..\\ &V=<&p& &,& &q&>\\ &&&_y\searrow&&\swarrow_x&&\\ &&&&\Delta_{y} p\, ...\, \Delta_x q \end{array}\leadsto\begin{array}{cccccccccccc} && &f\\ &&\swarrow_x&&_y\searrow\\ &p=\Delta_x f& && &q=\Delta_y f\\ &&_y\searrow&&\swarrow_x&&\\ &&&\Delta_y p=\Delta^2_{yx}f=\Delta^2_{xy}f=\Delta_x q \end{array}$$ This rigidity of the diagram means that the two trips from the top to the bottom produce the same result. We have described this property as commutativity. Indeed, it's about interchanging the order of the two operations of partial differentiation: $$\Delta_x\Delta_y=\Delta_y\Delta_x.$$

Theorem (Exactness Test dimension $2$). If $G$ is exact on a rectangle on the $xy$-plane with component functions $p$ and $q$, then $$\Delta_y p=\Delta_x q.$$

Corollary (Gradient Test dimension $2$). Suppose a vector field $V$ is defined on the secondary nodes of a partition of a rectangle in the $xy$-plane with component functions $p$ and $q$. If $V$ is gradient, then $$\frac{\Delta p}{\Delta y}=\frac{\Delta q}{\Delta x}.$$

Example.

$\square$

The quantity that vanishes when the function is gradient is called its rotor. It is a real-valued function defined on the faces of the partition, $$\Delta_y p-\Delta_x q.$$
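The rotor is easy to compute on a grid. The following sketch is an illustration under stated assumptions: the nodes are integer points, a horizontal or vertical edge is labeled by its lower-left node, and the function `f` is hypothetical. The rotor is computed for the difference of `f` and for a discrete analog of $<y,-x>$.

```python
def differences(func, nx, ny):
    """Components p = Delta_x func and q = Delta_y func on the edges."""
    p = {(i, j): func(i + 1, j) - func(i, j) for i in range(nx) for j in range(ny + 1)}
    q = {(i, j): func(i, j + 1) - func(i, j) for i in range(nx + 1) for j in range(ny)}
    return p, q

def rotor(p, q, nx, ny):
    """Delta_y p - Delta_x q on each face (i, j) of the grid."""
    return {
        (i, j): (p[(i, j + 1)] - p[(i, j)]) - (q[(i + 1, j)] - q[(i, j)])
        for i in range(nx) for j in range(ny)
    }

def f(i, j):
    """Hypothetical function on the integer nodes."""
    return i * i * j - 3 * j + i

nx, ny = 4, 3
p_exact, q_exact = differences(f, nx, ny)
rotor_exact = rotor(p_exact, q_exact, nx, ny)

# Discrete analog of <y, -x>: p = j on horizontal edges, q = -i on vertical edges.
p_rot = {(i, j): j for i in range(nx) for j in range(ny + 1)}
q_rot = {(i, j): -i for i in range(nx + 1) for j in range(ny)}
rotor_rot = rotor(p_rot, q_rot, nx, ny)

print(set(rotor_exact.values()), set(rotor_rot.values()))
```

The rotor vanishes on every face in the first case and is non-zero in the second, so the second function cannot be exact.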

For three variables, we just consider two at a time with the third kept fixed.

Below is the diagram of the partial differences for three variables with only the mixed partial differences shown: $$\newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} % \begin{array}{ccc} &&&&&\la{}&\la{}& \la{} &f& \ra{} & \ra{}& \ra{}\\ &&&&\swarrow_x&&&&\downarrow_y&&&&_z\searrow\\ &&&\Delta_x f & &&& &\Delta _y f & &&& &\Delta_z f \\ &&&\downarrow_y&_z\searrow&&&\swarrow_x&&_z\searrow&&&\swarrow_x&\downarrow_y&\\ &&&\Delta^2_{yx} f &&\Delta^2_{zx}f & \Delta^2_{xy}f &&&&\Delta^2_{zy}f& \Delta^2_{xz}f &&\Delta^2_{yz}f &&\\ &&& &\searrow&\swarrow&\searrow &\to&=&\leftarrow&\swarrow&\searrow&\swarrow&\\ \end{array}$$ The six that are left are paired up according to Discrete Clairaut's Theorem above. If $$V=<p,q,r> =<\Delta_x f,\Delta_y f,\Delta_z f>=\Delta f,$$ we can trace the differences of the component functions in the diagram. $$\newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} % \begin{array}{ccc} &&&p& &&& &q& &&& &r\\ &&&\downarrow_y&_z\searrow&&&\swarrow_x&&_z\searrow&&&\swarrow_x&\downarrow_y&\\ &&&\Delta_{y}p&&\Delta_{z}p& \Delta_{x}q&&&&\Delta_{z}q& \Delta_{x}r&&\Delta_{y}r&&\\ &&& &\searrow&\swarrow&\searrow &\to&=&\leftarrow&\swarrow&\searrow&\swarrow&\\ \end{array}$$ We write down the results below.

Theorem (Exactness Test dimension $3$). If $G$ is exact on a partition of a box in the $xyz$-space with component functions $p$, $q$, and $r$, then $$\Delta_y p=\Delta_x q,\ \Delta_z q=\Delta_y r,\ \Delta_x r=\Delta_z p.$$

Corollary (Gradient Test dimension $3$). Suppose a vector field $V$ is defined on the secondary nodes of a partition of a box in the $xyz$-space with component functions $p$, $q$, and $r$. If $V$ is gradient, then $$\frac{\Delta p}{\Delta y}=\frac{\Delta q}{\Delta x},\ \frac{\Delta q}{\Delta z}=\frac{\Delta r}{\Delta y} ,\ \frac{\Delta r}{\Delta x}=\frac{\Delta p}{\Delta z}.$$
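The three conditions can be checked mechanically. The sketch below makes a simplifying assumption: a function on the edges is represented by a function of the node at which the edge starts, with unit steps along each axis. The function `f` and the non-exact example are hypothetical.

```python
from itertools import product

def f(i, j, k):
    """Hypothetical function on the integer nodes."""
    return i * i * j - 2 * j * k + k * k * i

def d(func, axis):
    """Forward difference of func along one axis (0 = x, 1 = y, 2 = z)."""
    def diff(i, j, k):
        step = [0, 0, 0]
        step[axis] = 1
        return func(i + step[0], j + step[1], k + step[2]) - func(i, j, k)
    return diff

def passes_test(p, q, r, size=3):
    """Check the three equalities of the Exactness Test at every node of a small box."""
    return all(
        d(p, 1)(*n) == d(q, 0)(*n)
        and d(q, 2)(*n) == d(r, 1)(*n)
        and d(r, 0)(*n) == d(p, 2)(*n)
        for n in product(range(size), repeat=3)
    )

# Components of the difference of f.
p, q, r = d(f, 0), d(f, 1), d(f, 2)
print(passes_test(p, q, r))

# A discrete analog of <y, -x, 0>, which is not exact.
print(passes_test(lambda i, j, k: j, lambda i, j, k: -i, lambda i, j, k: 0))
```

As with the dimension $2$ test, a failure proves that the function is not exact.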

Notice the following simple pattern. First the variables and the components are arranged around a triangle: $$\begin{array}{cccc} && & &x& & &&\\ && &\nearrow& &\searrow& &&\\ &&z& &\leftarrow& &y&&\\ \end{array}\quad \begin{array}{ccc} && & &p& & &&\\ && &\nearrow& &\searrow& &&\\ &&r& &\leftarrow& &q&&\\ \end{array}$$ Then one of the variables is omitted and the difference over the other two is set to $0$: $$\begin{array}{ccc} && & &\cdot& & &&\\ && && && &&\\ &&\bullet& &\Delta_z q=\Delta_y r& &\bullet&&\\ \end{array}\quad \begin{array}{ccc} && & &\bullet& & &&\\ && && &\Delta_y p=\Delta_x q& &&\\ &&\cdot& && &\bullet&&\\ \end{array}\quad \begin{array}{ccc} && & &\bullet& & &&\\ && &\Delta_x r=\Delta_z p& && &&\\ &&\bullet& && &\cdot&&\\ \end{array}\quad$$

The conditions of these two theorems, and their analogs in higher dimensions, put severe limitations on what functions can be exact.

When is a vector field a gradient?

Recall the diagram of partial differentiation of a function of two variables that produces the vector field of the gradient: $$\begin{array}{ccc} &&&& &f\\ &&&&\swarrow_x&&_y\searrow\\ &&\nabla f=<&f_x& &,& &f_y&>\\ \end{array}$$

Definition. A vector field that is the gradient of some function of several variables is called a gradient vector field. This function is then called a potential function of the vector field.

Note that finding for a given vector field $V$ a function $f$ such that $\nabla f=V$ amounts to anti-differentiation as we try to reverse the arrows in the above diagram. The Anti-differentiation Theorem is an analog of several familiar results from Chapters 8 and 9: $$\nabla f= \nabla g\ \Longrightarrow\ f-g=\text{constant}.$$

Corollary. Any two potential functions of the same vector field defined on an open path-connected set differ by a constant within this set.

Not all vector fields are gradient. The example we saw was $V(x,y)=<y,-x>$. There are many more...

Example. Consider the spiral below. Can it be a level curve of a function of two variables?

$\square$

How do we know that a given vector field is gradient? We have previously solved this problem by finding, or trying and failing to find, a potential function for the vector field in dimension $2$. The examples required integration with respect to both variables and then matching the results. The methods only work when the functions are simple enough. The familiar theorem below gives us a better tool. Surprisingly, this tool is further partial differentiation. We continue the above diagram: $$\begin{array}{cccccccccccc} &&&& &f\\ &&&&\swarrow_x&&_y\searrow\\ &&\nabla f=<&f_x& &,& &f_y&>\\ &&\swarrow_x &&_y\searrow&&\swarrow_x &&_y\searrow\\ &f_{xx}&&&&f_{yx}=f_{xy}&&&&f_{yy}\\ \end{array}$$ Note that the last row is the derivative of the gradient, a vector field, somehow...

Recall the following result from Chapter 18 that gives us the equality of the mixed second derivatives.

Theorem (Clairaut's Theorem). The mixed second derivatives of a function $f$ of two variables with continuous second partial derivatives at a point $(a,b)$ are equal to each other; i.e., $$f_{xy}(a,b) = f_{yx}(a,b).$$

Thanks to this theorem, we can draw conclusions from the assumption that a given vector field $V=<p,q>$ is gradient, as long as its component functions $p$ and $q$ are continuously differentiable. So, the plan is, instead of trying to reverse the arrows in the first row of the diagram and find $f$ with $\nabla f=V$, we continue down and see whether we have a match: $$p_y=q_x.$$

Example. It's easy. For $V=<x,y>$, we have $$\begin{array}{lll} p=x&\Longrightarrow &p_y=0\\ q=y&\Longrightarrow &q_x=0 \end{array}\ \Longrightarrow\ \text{ match!}$$ The test is passed! So what? What do we conclude from that? Nothing.

On the other hand, for $V=<y,-x>$, we have $$\begin{array}{lll} p=y&\Longrightarrow &p_y=1\\ q=-x&\Longrightarrow &q_x=-1 \end{array}\ \Longrightarrow\ \text{ no match!}$$ The test is failed. It's not gradient! $\square$
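The comparison of $p_y$ and $q_x$ can also be carried out numerically. Below is a minimal sketch, assuming central difference quotients are an acceptable stand-in for the partial derivatives. The names `partial`, `mixed_difference`, and `passes_gradient_test`, the step `h`, the tolerance `tol`, and the sample points are our choices for illustration. A check at a few points is evidence, not a proof.

```python
def partial(f, point, index, h=1e-5):
    """Central difference quotient of f at `point` with respect to
    the variable number `index` (0 for x, 1 for y)."""
    forward = list(point)
    backward = list(point)
    forward[index] += h
    backward[index] -= h
    return (f(*forward) - f(*backward)) / (2 * h)


def mixed_difference(p, q, x, y):
    """Approximate p_y - q_x at the point (x, y)."""
    return partial(p, (x, y), 1) - partial(q, (x, y), 0)


def passes_gradient_test(p, q, sample_points, tol=1e-6):
    """True if p_y and q_x agree, within tol, at every sample point."""
    return all(abs(mixed_difference(p, q, x, y)) < tol
               for x, y in sample_points)


# Sample points chosen arbitrarily for illustration.
points = [(0.5, 1.0), (-1.0, 2.0), (2.0, -0.5)]

# V = <x, y>: the test is passed.
radial = passes_gradient_test(lambda x, y: x, lambda x, y: y, points)

# V = <y, -x>: the test is failed.
rotational = passes_gradient_test(lambda x, y: y, lambda x, y: -x, points)

print(radial, rotational)
```

For $V=<y,-x>$, the computed difference $p_y-q_x$ is close to $2$ at every point, in agreement with $p_y=1$ and $q_x=-1$.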

We draw no conclusion when the test is passed: we would still have to integrate to find out whether the vector field is gradient and, at the same time, try to find its potential function. Meanwhile, the failure to satisfy the test proves that the vector field is not gradient.
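To illustrate the integration that remains, here is a sketch for $V=<x,y>$; the symbol $C$ for an unknown function of $y$ is our notation. We need $f$ with $f_x=x$ and $f_y=y$. Integrating the first equation with respect to $x$ gives $$f(x,y)=\frac{x^2}{2}+C(y).$$ Differentiating this with respect to $y$ and matching the result with the second equation gives $$f_y=C'(y)=y\ \Longrightarrow\ C(y)=\frac{y^2}{2}+\text{constant}.$$ Therefore, $$f(x,y)=\frac{x^2}{2}+\frac{y^2}{2}+\text{constant},$$ and $V$ is indeed gradient.

The same attempt for $V=<y,-x>$ breaks down at the matching step: $$f_x=y\ \Longrightarrow\ f(x,y)=xy+C(y)\ \Longrightarrow\ f_y=x+C'(y)=-x\ \Longrightarrow\ C'(y)=-2x,$$ which is impossible because $C$ does not depend on $x$.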

As a summary, consider an arbitrary vector field. It has arbitrary component functions, with no relations between them whatsoever! Everything changes once we make the assumption that this is a gradient vector field. The theorem ensures that the diagram of the derivatives of the component functions of an arbitrary vector field $V=<p,q>$ on the left turns, under the assumption that it's gradient, into something rigid on the right: $$\begin{array}{cccccccccccc} &&& &..\\ &&&..&&..\\ &V=<&p& &,& &q&>\\ &&&_y\searrow&&\swarrow_x&&\\ &&&&p_{y}\ ...\ q_x&&&& \end{array}\leadsto\begin{array}{cccccccccccc} && &f\\ &&\swarrow_x&&_y\searrow\\ &p=f_x& && &q=f_y\\ &&_y\searrow&&\swarrow_x&&\\ &&&p_y=f_{yx}=f_{xy}=q_x&&&& \end{array}$$ This rigidity of the diagram means that the two trips from the top to the bottom produce the same result. We have described this property as commutativity. Indeed, it's about interchanging the order of the two operations of partial differentiation: $$\frac{\partial}{\partial x}\frac{\partial}{\partial y}=\frac{\partial}{\partial y}\frac{\partial}{\partial x}.$$

Theorem (Gradient Test dimension $2$). Suppose $V=<p,q>$ is a vector field whose component functions $p$ and $q$ are continuously differentiable on an open disk in ${\bf R}^2$. If $V$ is gradient, then $$p_y = q_x.$$

Example.

$\square$

The quantity that vanishes when the vector field is gradient is called the rotor of the vector field. It is a function of two variables: $$p_y - q_x.$$ We will see later how the rotor is used to measure how close the vector field is to being gradient.

For three variables, we just consider two at a time with the third kept fixed.

Below is the diagram of the partial derivatives for three variables with only the mixed derivatives shown: $$\newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} % \begin{array}{cccccccccccc} &&&&&\la{}&\la{}& \la{} &f& \ra{} & \ra{}& \ra{}\\ &&&&\swarrow_x&&&&\downarrow_y&&&&_z\searrow\\ &&&f_x& &&& &f_y& &&& &f_z\\ &&&\downarrow_y&_z\searrow&&&\swarrow_x&&_z\searrow&&&\swarrow_x&\downarrow_y&\\ &&&f_{yx}&&f_{zx}& f_{xy}&&&&f_{zy}& f_{xz}&&f_{yz}&&\\ &&& &\searrow&\swarrow&\searrow &\to&=&\leftarrow&\swarrow&\searrow&\swarrow&\\ \end{array}$$ The six that are left are paired up according to Clairaut's theorem. If $$V=<p,q,r> =<f_x,f_y,f_z>=\nabla f,$$ we can trace the derivatives of the component functions in the diagram. $$\newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} % \begin{array}{cccccccccccc} &&&p& &&& &q& &&& &r\\ &&&\downarrow_y&_z\searrow&&&\swarrow_x&&_z\searrow&&&\swarrow_x&\downarrow_y&\\ &&&p_{y}&&p_{z}& q_{x}&&&&q_{z}& r_{x}&&r_{y}&&\\ &&& &\searrow&\swarrow&\searrow &\to&=&\leftarrow&\swarrow&\searrow&\swarrow&\\ \end{array}$$ We write down the results below.

Theorem (Gradient Test dimension $3$). Suppose $V=<p,q,r>$ is a vector field whose component functions $p$, $q$, and $r$ are continuously differentiable on an open ball in ${\bf R}^3$. If $V$ is gradient, then $$p_y=q_x,\ q_z=r_y,\ r_x=p_z.$$
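The three conditions can be checked numerically in the same spirit. The following is a minimal sketch under the assumption that central difference quotients approximate the partial derivatives well enough. The names `partial` and `mixed_differences`, the step `h`, the sample point, and the two sample vector fields are ours, chosen for illustration only.

```python
def partial(f, point, index, h=1e-5):
    """Central difference quotient of f at `point` with respect to
    the variable number `index` (0 for x, 1 for y, 2 for z)."""
    forward = list(point)
    backward = list(point)
    forward[index] += h
    backward[index] -= h
    return (f(*forward) - f(*backward)) / (2 * h)


def mixed_differences(p, q, r, point):
    """Approximate (p_y - q_x, q_z - r_y, r_x - p_z) at point = (x, y, z)."""
    return (
        partial(p, point, 1) - partial(q, point, 0),
        partial(q, point, 2) - partial(r, point, 1),
        partial(r, point, 0) - partial(p, point, 2),
    )


# Illustrative gradient field: V = <yz, xz, xy> is the gradient of f = xyz.
gradient_field = (
    lambda x, y, z: y * z,
    lambda x, y, z: x * z,
    lambda x, y, z: x * y,
)

# Illustrative non-gradient field: rotation about the z-axis, V = <y, -x, 0>.
rotation_field = (
    lambda x, y, z: y,
    lambda x, y, z: -x,
    lambda x, y, z: 0.0,
)

point = (1.0, 2.0, 3.0)  # arbitrary sample point
gradient_result = mixed_differences(*gradient_field, point)
rotation_result = mixed_differences(*rotation_field, point)
print(gradient_result)
print(rotation_result)
```

All three differences come out close to $0$ for the first field, while the first difference for the second field is close to $2$, so the second field fails the test.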

Notice the following simple pattern. First the variables and the components are arranged around a triangle: $$\begin{array}{cccc} && & &x& & &&\\ && &\nearrow& &\searrow& &&\\ &&z& &\leftarrow& &y&&\\ \end{array}\quad \begin{array}{cccc} && & &p& & &&\\ && &\nearrow& &\searrow& &&\\ &&r& &\leftarrow& &q&&\\ \end{array}$$ Then one of the variables is omitted and the rotor over the other two is set to $0$: $$\begin{array}{cccc} && & &\cdot& & &&\\ && && && &&\\ &&\bullet& &q_z=r_y& &\bullet&&\\ \end{array}\quad \begin{array}{cccc} && & &\bullet& & &&\\ && && &p_y=q_x& &&\\ &&\cdot& && &\bullet&&\\ \end{array}\quad \begin{array}{cccc} && & &\bullet& & &&\\ && &r_x=p_z& && &&\\ &&\bullet& && &\cdot&&\\ \end{array}\quad$$

All three of these quantities, $p_y-q_x,\ q_z-r_y,\ r_x-p_z$, vanish when the vector field is gradient. In order to have a single object rather than three, we will use them as components to form a new vector field, called the curl of the vector field.

The conditions of these two theorems, and their analogs in higher dimensions, put severe limitations on what vector fields can be gradient. The source of these limitations is the topology of the Euclidean spaces of dimension $2$ and higher. They are to be discussed later.

Back to dimension $2$.

Example. The rotational vector field, $$V=<y,-x>,$$ is not gradient as it fails the Gradient Test.

Let's consider its normalization: $$U=\frac{V}{||V||}=\frac{1}{\sqrt{x^2+y^2}}<y,\, -x>=\left< \frac{y}{\sqrt{x^2+y^2}},\ -\frac{x}{\sqrt{x^2+y^2}}\right>=<p,q>.$$ All of its vectors are unit vectors with the same directions as those of $V$.

Let's test the condition of the Gradient Test: $$\begin{array}{lll} p_y=\frac{\partial}{\partial y}\frac{y}{\sqrt{x^2+y^2}}=\frac{1\cdot \sqrt{x^2+y^2}-y\frac{y}{\sqrt{x^2+y^2}}}{x^2+y^2}\\ q_x=\frac{\partial}{\partial x}\frac{-x}{\sqrt{x^2+y^2}}=-\frac{1\cdot \sqrt{x^2+y^2}-x\frac{x}{\sqrt{x^2+y^2}}}{x^2+y^2}\\ \end{array}\quad\text{ ...no match!}$$ This vector field also fails the test and, therefore, isn't gradient.

Let's take this one step further: $$W=\frac{V}{||V||^2}=\frac{1}{x^2+y^2}<y,\ -x>=\left< \frac{y}{x^2+y^2},\ -\frac{x}{x^2+y^2}\right>=<p,q>.$$ The new vector field has the same directions but its magnitude varies: it approaches $0$ as we move farther away from the origin and infinity as we approach the origin; i.e., we have: $$W(X)\to 0\text{ as } ||X||\to \infty \text{ and } ||W(X)||\to \infty \text{ as } X\to 0.$$

Let's test the condition: $$\begin{array}{lll} p_y=\frac{\partial}{\partial y}\frac{y}{x^2+y^2}=\frac{1\cdot (x^2+y^2)-y\cdot 2y}{(x^2+y^2)^2}=\frac{x^2-y^2}{(x^2+y^2)^2}\\ q_x=\frac{\partial}{\partial x}\frac{-x}{x^2+y^2}=-\frac{1\cdot (x^2+y^2)-x\cdot 2x}{(x^2+y^2)^2}=-\frac{y^2-x^2}{(x^2+y^2)^2}\\ \end{array}\quad\text{ ...match!}$$ The vector field passes the test! Does it mean that it is gradient then? No, it doesn't, and we will demonstrate that it is not! The idea is the same one that we started with at the beginning of the chapter: a round trip along the gradients is impossible as it leads to a net increase of the value of the function according to the Monotonicity Theorem. It is crucial that the vector field is undefined at the origin. $\square$
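One way to carry out the round-trip argument is sketched below; the parametrization $X(t)$ of the unit circle is our choice. Let $$X(t)=<\cos t,\ \sin t>,\ 0\le t\le 2\pi,\quad\text{so that}\quad X'(t)=<-\sin t,\ \cos t>.$$ On the unit circle $x^2+y^2=1$, so $W(X(t))=<\sin t,\ -\cos t>$ and $$W(X(t))\cdot X'(t)=-\sin^2 t-\cos^2 t=-1.$$ Suppose $W=\nabla f$ for some function $f$. Then, by the Chain Rule, $$\frac{d}{dt}f(X(t))=\nabla f(X(t))\cdot X'(t)=-1,$$ and, therefore, $$f(X(2\pi))-f(X(0))=\int_0^{2\pi}(-1)\,dt=-2\pi\ne 0.$$ But $X(2\pi)=X(0)$, so the left-hand side is $0$, a contradiction. Traversing the circle in the opposite direction, along the vectors of $W$, gives the net increase of $2\pi$ instead. Hence $W$ has no potential function on its domain.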

We will show later that the converse of the Gradient Test for dimension $2$ isn't true: $$p_y=q_x\ \not\Longrightarrow\ <p,q>=\nabla f,$$ unless a certain further restriction is imposed. This restriction is topological: there can be no holes in the domain. Furthermore, integrating $p_y-q_x$ will be used to measure how close the vector field is to being gradient.