
Multivectors

Introduction

Given a vector space (or a module) $V$ over a field (or ring) $R$, we think of multivectors over $V$ as ordered "combinations" of vectors in $V$:

- $1$-vector $v_1 \in V$;

- $2$-vector $v_1 \wedge v_2$ with $v_1,v_2 \in V$;

- $3$-vector $v_1 \wedge v_2 \wedge v_3$ with $v_1,v_2,v_3 \in V$; etc.

Here the wedge symbol $\wedge$ is just to string the vectors together. The meaning of these combinations is revealed by their algebraic properties.

But first, let's consider some geometry: the magnitudes of these entities.

The magnitude of $1$-vector $v_1$ is the length of this vector.

The magnitude of $2$-vector $v_1 \wedge v_2$ can be interpreted as the area of the parallelogram with sides $v_1,v_2 \in V$. Note: in three dimensions it can also be computed as the magnitude of the cross product of these two vectors.

The magnitude of $v_1 \wedge v_2 \wedge v_3$ gives the volume of the parallelepiped spanned by those vectors.
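The three magnitudes above can be checked numerically in $\mathbb{R}^3$. The following is a minimal sketch, assuming vectors are given as 3-tuples of numbers; the helper names (`length`, `cross`, `parallelogram_area`, `parallelepiped_volume`) are illustrative and not part of the original text.

```python
import math

def length(v):
    """Magnitude of a 1-vector: the Euclidean length."""
    return math.sqrt(sum(x * x for x in v))

def cross(a, b):
    """Cross product of two vectors in R^3."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def parallelogram_area(a, b):
    """Magnitude of a ^ b in R^3, computed as |a x b|."""
    return length(cross(a, b))

def parallelepiped_volume(a, b, c):
    """Magnitude of a ^ b ^ c in R^3, computed as |a . (b x c)|."""
    bc = cross(b, c)
    return abs(sum(x * y for x, y in zip(a, bc)))

print(length((3, 4, 0)))                                    # length of a vector
print(parallelogram_area((1, 0, 0), (0, 2, 0)))             # area of a 1-by-2 rectangle
print(parallelepiped_volume((1, 0, 0), (0, 2, 0), (0, 0, 3)))  # volume of a 1-by-2-by-3 box
```

Note that all three functions return non-negative numbers; they discard exactly the sign information discussed next.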

Our interest, however, is in the multivectors themselves. The reason the magnitude alone is insufficient is clear in the case of $1$-vectors: if we move by the vector $v_1$ and then by $-v_1$, the result is $0$. They cancel!

The idea of cancelling isn't as immediate for areas. In geometry we are used to thinking of areas as always positive. Not until calculus do we realize that areas are algebraic in nature: they can be negative, they can cancel, and the sign may depend on how the region is "oriented" with respect to the coordinate axes.

For example, the area under the graph of the velocity function is the displacement, which can be negative. Or this area could be the amount of water gained versus the amount lost, etc. The same idea applies to volumes, in any dimension.

Also, recall from calc 1: $$\int_a^bf(x)dx=-\int_b^af(x)dx.$$ This suggests that the length of $[a,b]$, $b>a$, is $b-a$ or $a-b$ depending on the "orientation" of this segment. In fact, the segment should be treated algebraically to help explain the above formula: $$\int_a^bf(x)dx=\int_{[a,b]}f(x)dx=\int_{-[b,a]}f(x)dx=-\int_{[b,a]}f(x)dx=-\int_b^af(x)dx.$$ Here, the whole integral is thought of as a linear function defined on the segments (see chains vs cochains).
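The sign change under reversal of the segment can be seen numerically. This is a rough sketch, assuming a midpoint Riemann sum with a signed step; the function name `midpoint_integral` and the default `n` are illustrative choices.

```python
def midpoint_integral(f, a, b, n=1000):
    """Midpoint Riemann sum of f from a to b.

    The step h = (b - a) / n is signed: it is negative when b < a,
    which is what makes the oriented segment [b, a] count negatively.
    """
    h = (b - a) / n
    return sum(f(a + (i + 0.5) * h) for i in range(n)) * h

f = lambda x: x * x
forward = midpoint_integral(f, 0.0, 1.0)   # integral over [0, 1]
backward = midpoint_integral(f, 1.0, 0.0)  # integral over the reversed segment
print(forward, backward)
```

The two sums use the same sample points; only the sign of the step differs, so the results are negatives of each other up to rounding.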

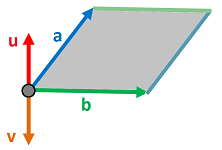

The idea of how the sign depends on the orientation also applies to the area of a region spanned by two vectors. It can be computed as $v = a \times b$ or $u = b \times a$:

Which one to choose?

Note that $v$ has the opposite direction of $u$. Now, $2$-vectors give us surface areas and, in particular, the volume of a flow through a given surface per unit of time. This volume will change its sign if we switch the surface from the one spanned by $a,b$ to the one spanned by $b,a$.
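In the plane the same effect shows up as a signed area. The sketch below assumes vectors are pairs of numbers and uses the $2 \times 2$ determinant as the signed area of the parallelogram; the name `signed_area` is illustrative.

```python
def signed_area(a, b):
    """Signed area of the parallelogram spanned by a, b in R^2.

    This is the determinant of the 2x2 matrix with rows a and b;
    its sign records the orientation of the ordered pair (a, b).
    """
    return a[0] * b[1] - a[1] * b[0]

a, b = (1, 0), (0, 1)
print(signed_area(a, b))  # counterclockwise pair
print(signed_area(b, a))  # same parallelogram, opposite orientation
```

Swapping the two vectors keeps the parallelogram but negates the result, and a degenerate parallelogram (`a` paired with itself) has area zero.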

To summarize, we look at algebraic lengths, areas, volumes, etc., as opposed to geometric ones.

For completeness' sake, we also include $0$-vectors; they are simply scalars. So, for $k = 0, 1, 2$ and $3$, $k$-vectors are often called, respectively, scalars, vectors, bivectors and trivectors. Further, if $\dim V = n$, then $(n - 1)$-vectors are called pseudovectors and $n$-vectors are called pseudoscalars.

Wedge product

Let's consider how $2$-vectors are formed from $1$-vectors: $$\varphi = a \wedge b.$$ Is this operation linear?

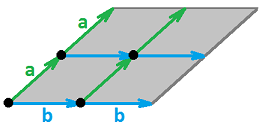

No, as the picture above illustrates:

Indeed, the area of a region like this depends linearly on each of its dimensions, $a$ and $b$, and as a result it is quadratic, not linear, as a function of the pair $(a,b)$.

We call such a dependence multilinear, i.e., it is linear with respect to each variable when all others are fixed.

What about symmetry? That is, is it similar to the dot product: $$\langle x,y \rangle=\langle y,x \rangle?$$

As discussed above, multivectors are not symmetric but anti-symmetric. That is, they are more like the cross product: $$a \wedge b = -b \wedge a.$$

From this rule it follows, for example, $$u \wedge v \wedge w = -v \wedge u \wedge w = -(-v \wedge w \wedge u) = v \wedge w \wedge u.$$

We also have that $$u \wedge u = -u \wedge u$$ and, from linearity, $$2u \wedge u = 0,$$ implying (provided we can divide by $2$ in $R$, as in any field of characteristic other than $2$)

Theorem: $$u \wedge u=0.$$
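The converse direction needs no division: the cancellation rule alone yields anti-commutativity. A short derivation, assuming only linearity in each factor and $x \wedge x = 0$ for every $x \in V$:

$$0=(u+v) \wedge (u+v) = u \wedge u + u \wedge v + v \wedge u + v \wedge v = u \wedge v + v \wedge u,$$

so that $u \wedge v = -v \wedge u$.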

Spaces of multivectors

The set of all $k$-vectors in $V$ is denoted by $$\Lambda _k = \Lambda _k (V),\quad k=0,1,2,\ldots$$ It's a vector space with respect to the usual operations of addition and scalar multiplication (Exercise).

However, there is an extra operation, the wedge product. We use this operation to inductively define these spaces starting with these: $$\Lambda _0 (V)= R,$$ $$\Lambda _1 (V) = V.$$ Let's consider $\Lambda _2(V)$. It is defined via the wedge product: $$\wedge :V \times V \rightarrow \Lambda _{2}.$$ We define $\Lambda _{2}$ as a space that consists of these formal sums: $$\sum s_i (v_i \wedge w_i),$$ where $s_i \in R, v_i,w_i \in V$, subject to these algebraic rules: $$\begin{align} (s_1v_1 + s_2v_2) \wedge w = s_1(v_1 \wedge w) + s_2(v_2 \wedge w),\\ v \wedge (t_1w_1 + t_2w_2) = t_1(v \wedge w_1) + t_2(v \wedge w_2),\\ v \wedge v =0,\\ v \wedge w = - w \wedge v. \end{align}$$ where $$v, v_1,v_2,w, w_1,w_2\in V, s_1,s_2, t_1,t_2\in R.$$ These are the linearity, cancellation, and anti-commutativity as discussed above (compare to Tensor product).

Suppose $\{v_1,...,v_n \}$ is a basis of $V$. Then any two vectors $a,b \in V$ can be represented as linear combinations of these vectors: $$a=\sum _ia_iv_i,\quad b=\sum _ib_iv_i,\quad a_i,b_i \in R.$$ Then $$a \wedge b = \big( \sum _ia_iv_i\big) \wedge \big( \sum _jb_jv_j \big)$$ $$=\sum _{i,j} a_ib_j (v_i \wedge v_j)$$ $$=\sum _{i<j} (a_ib_j -a_jb_i)(v_i \wedge v_j),$$ using the above properties. Since every element of $\Lambda _2(V)$ is a linear combination of such wedge products, the set $$\{v_i \wedge v_j |1 \le i < j \le n \}$$ spans this space; it is also linearly independent (Exercise), hence a basis. Therefore $$\dim \Lambda _2(V) =\frac{n(n-1)}{2}=\binom{n}{2}.$$
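The coordinate formula for $a \wedge b$ translates directly into code. The following is a minimal sketch, assuming $a$ and $b$ are given by their coefficient tuples in the basis $\{v_1,...,v_n\}$; the function name `wedge2` and the dictionary representation (keys are 0-based index pairs $i<j$) are illustrative choices.

```python
from itertools import combinations

def wedge2(a, b):
    """Coefficients of a ^ b on the basis {v_i ^ v_j : i < j}.

    a, b: coefficient tuples of equal length n.
    Returns a dict mapping (i, j) with i < j (0-based) to a_i*b_j - a_j*b_i.
    """
    n = len(a)
    return {(i, j): a[i] * b[j] - a[j] * b[i]
            for i, j in combinations(range(n), 2)}

a, b = (1, 2, 3), (4, 5, 6)
print(wedge2(a, b))       # three coefficients, since n(n-1)/2 = 3 for n = 3
print(wedge2(b, a))       # every coefficient changes sign
print(wedge2(a, a))       # all coefficients vanish
```

For $n=3$ the three coefficients are, up to order and sign, the components of the cross product, in agreement with the note on three dimensions in the introduction.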

To provide a proper mathematical treatment for this construction one needs to treat the above identities/rules as equivalences of elements in $V \times V$: $$\begin{align} (s_1v_1 + s_2v_2) \times w \sim s_1(v_1 \times w) + s_2(v_2 \times w),\\ v \times (t_1w_1 + t_2w_2) \sim t_1(v \times w_1) + t_2(v \times w_2),\\ v \times v \sim 0,\\ v \times w \sim - w \times v. \end{align}$$ Then (Exercise) we define $$\Lambda _2 (V) = \big( V \times V \big) / _\sim.$$ In that sense, $u \wedge v$ is simply the equivalence class of $u \times v$. Then, the above "rules" are indeed true identities.

The construction is similar for $\Lambda _k(V)$. We define its elements as formal linear combinations (over $R$) of: $$v_1 \wedge v_2 \wedge ... \wedge v_k,$$ where $v_i \in V$, subject to algebraic rules of linearity, cancellation, and anti-commutativity. Then we realize that we need to treat them as equivalences of elements in $V^k$:

- $(su + tw, v_2 ,..., v_k) \sim s(u,v_2 ,..., v_k) + t(w,v_2 ,..., v_k)$;

- $(v_1, v_2 ,..., v_k) \sim 0$ if for some pair of indices $i \ne j$, $v_i=v_j$;

- $(v_1, v_2 ,..., v_k) \sim -(u_1, u_2 ,..., u_k)$ if the latter is the same as the former with two entries interchanged.

Then (Exercise) we define $$\Lambda _k (V) = V^k / _\sim.$$

Again, $v_1 \wedge v_2 \wedge ... \wedge v_k$ stands for the equivalence class of $(v_1, v_2, ..., v_k) \in V^k$ and the equivalences can be rewritten as identities for the wedge product:

- $(su + tw)\wedge v_2 \wedge ...\wedge v_k = s(u \wedge v_2 \wedge ...\wedge v_k) + t(w \wedge v_2 \wedge... \wedge v_k)$;

- $v_1\wedge v_2 \wedge ...\wedge v_k = 0$ if for some pair of indices $i \ne j$, $v_i=v_j$;

- $v_1\wedge v_2 \wedge ...\wedge v_k = -u_1\wedge u_2 \wedge ...\wedge u_k$ if the latter is the same as the former with two entries interchanged.

Theorem. If $\dim V =n$, then $$\dim \Lambda _k = \binom {n}{k}.$$

Proof. Exercise $\blacksquare$

In particular, $$\dim \Lambda _n = 1,$$ so $$\Lambda _n \cong R.$$ Moreover, $$\Lambda _k = 0, \forall k>n,$$ since any wedge product of more than $n$ basis vectors must repeat one of them.
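The dimension count can be illustrated by listing the basis $k$-vectors $v_{i_1} \wedge ... \wedge v_{i_k}$ with $i_1 < ... < i_k$. This sketch assumes each basis element is represented by its increasing tuple of indices; `basis_k_vectors` is a hypothetical helper name.

```python
from itertools import combinations
from math import comb

def basis_k_vectors(n, k):
    """Index tuples (i_1 < ... < i_k) labelling a basis of Lambda_k when dim V = n."""
    return list(combinations(range(1, n + 1), k))

n = 4
for k in range(n + 2):
    basis = basis_k_vectors(n, k)
    # The number of increasing index tuples is the binomial coefficient.
    print(k, len(basis), comb(n, k))
```

The empty tuple for $k=0$ stands for the scalar $1$, and for $k>n$ the list is empty, matching $\Lambda _k = 0$.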

The most direct way to define this space is as a quotient over a subspace: $$\Lambda _k (V) = V^k / T_k,$$ where $T_k$ is defined as the subspace (Exercise) of $V^k$ made of the vectors that satisfy those properties. Since the linearity is ensured by the structure of $V^k$ itself and the cancellation follows from the anti-symmetry, the last is the only one that is necessary:

- $T_k =\{ (v_1, v_2 ,..., v_k) + (u_1, u_2 ,..., u_k):$ $(u_1, u_2 ,..., u_k)$ is the same as $(v_1,...,v_k)$ with two entries interchanged $\}$.

Summary

The wedge product operates as: $$\wedge :\Lambda _k \times \Lambda _m \rightarrow \Lambda _{k+m}.$$

Together these spaces form a graded vector space $$\Lambda = \bigoplus _k \Lambda _k$$ with the wedge product described above operating within $\Lambda$ as: $$\wedge :\Lambda \times \Lambda \rightarrow \Lambda .$$
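The graded structure can be sketched in code once a basis is fixed. The following is an illustrative implementation, not a definitive one: it assumes a multivector is stored as a dictionary mapping increasing index tuples (basis elements $v_{i_1} \wedge ... \wedge v_{i_k}$) to coefficients, and the names `sort_with_sign` and `wedge` are hypothetical.

```python
def sort_with_sign(indices):
    """Sort an index tuple by adjacent swaps.

    Returns (sign, sorted_tuple). The sign is 0 if an index repeats
    (cancellation rule); otherwise each swap flips it (anti-commutativity).
    """
    idx = list(indices)
    if len(set(idx)) < len(idx):
        return 0, ()
    sign = 1
    for i in range(len(idx)):
        for j in range(len(idx) - 1 - i):
            if idx[j] > idx[j + 1]:
                idx[j], idx[j + 1] = idx[j + 1], idx[j]
                sign = -sign
    return sign, tuple(idx)

def wedge(x, y):
    """Wedge product of two multivectors, extended bilinearly from basis elements."""
    out = {}
    for bx, cx in x.items():
        for by, cy in y.items():
            sign, blade = sort_with_sign(bx + by)
            if sign != 0:
                out[blade] = out.get(blade, 0) + sign * cx * cy
    # Drop terms whose coefficients cancelled.
    return {b: c for b, c in out.items() if c != 0}

e1, e2, e3 = {(1,): 1}, {(2,): 1}, {(3,): 1}
print(wedge(e1, e2))             # a 2-vector
print(wedge(e2, e1))             # the same 2-vector with the opposite sign
print(wedge(wedge(e1, e2), e3))  # a 1-vector times a 2-vector lands in degree 3
```

A key of length $k$ lives in $\Lambda _k$, so concatenating keys of lengths $k$ and $m$ reflects the map $\Lambda _k \times \Lambda _m \rightarrow \Lambda _{k+m}$; the empty key `()` plays the role of scalars in $\Lambda _0$.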