
Multilinear forms


Forms as multilinear functions

Given a vector space $V$, how does one compute the (algebraic) lengths, areas, volumes, etc., of the multivectors in $V$?

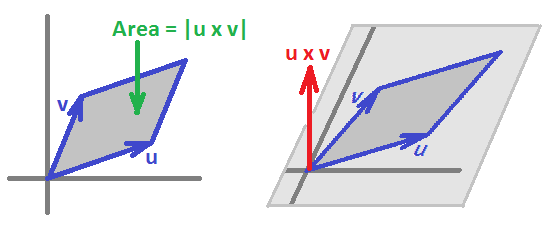

For example, in dimension $2$, the area of the parallelogram spanned by two vectors $v=<a,b>$ and $w=<c,d>$ is: $$Area = \det\begin{bmatrix} v & w\end{bmatrix} = \det\begin{bmatrix} a & c\\b & d \end{bmatrix} = ad - bc .$$
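As a small illustration of the formula above, here is a sketch in Python. The function name `signed_area` is ours, not part of the text; the point is only that swapping the two vectors flips the sign and that parallel vectors give zero.

```python
def signed_area(v, w):
    """Signed area of the parallelogram spanned by v = (a, b) and w = (c, d)."""
    a, b = v
    c, d = w
    return a * d - b * c

print(signed_area((1, 0), (0, 1)))  # 1
print(signed_area((0, 1), (1, 0)))  # -1, the order of the vectors matters
print(signed_area((2, 1), (4, 2)))  # 0, the vectors are parallel
```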

In general, we consider a function: $$\varphi =\varphi ^k: V^k \rightarrow {\bf R},$$ where the superscript is used to indicate the dimension of the geometric object and the "degree" or "order" of this function.

As we have seen, this function would be better defined on the multivectors instead of combinations of vectors. However, we achieve the same effect if we require the function to satisfy:

- $\varphi$ is linear with respect to each $V$;

- $\varphi$ is antisymmetric with respect to $V^k$.

Such a function is called a multilinear antisymmetric form of degree $k$ over $V$, or simply a $k$-form.

Note that $V$ may have no geometric structure. The inner product and, therefore, geometric lengths (distances) and angles aren't being considered, at this time. In this sense the discussion is purely algebraic.

The set of such $k$-forms over $V$ is denoted by $\Lambda ^k(V)$. It is a vector space.

For dimension $3$, $k$-forms are these functions: $$\varphi =\varphi ^k : ({\bf R}^3)^k \rightarrow {\bf R},$$ with the following properties with respect to each copy of ${\bf R}^3$ (written out here for $k=3$).

- Multilinearity:

- For $\varphi(\cdot,b,c)$ : $\varphi(\alpha u + \rho v,b,c) = \alpha \varphi(u,b,c) + \rho \varphi(v,b,c)$;

- For $\varphi(a,\cdot,c)$ : $\varphi(a,\alpha u + \rho v,c) = \alpha \varphi(a,u,c) + \rho \varphi(a,v,c)$

- For $\varphi(a,b,\cdot)$ : $\varphi(a,b,\alpha u + \rho v) = \alpha \varphi(a,b,u) + \rho \varphi(a,b,v)$

- Antisymmetry:

- $\varphi(x,y,c) = -\varphi(y,x,c)$

- $\varphi(a,y,z) = -\varphi(a,z,y)$

- $\varphi(x,b,z) = -\varphi(z,b,x)$
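The properties listed above can be spot-checked numerically. The sketch below assumes one particular $3$-form on ${\bf R}^3$, the determinant of the matrix whose columns are the three arguments; the helper names `det3` and `combo` are ours. Passing these checks on a few integer vectors is an illustration, not a proof.

```python
def det3(a, b, c):
    """Determinant of the 3x3 matrix with columns a, b, c."""
    return (a[0] * (b[1] * c[2] - b[2] * c[1])
            - a[1] * (b[0] * c[2] - b[2] * c[0])
            + a[2] * (b[0] * c[1] - b[1] * c[0]))

def combo(alpha, u, rho, v):
    """The linear combination alpha*u + rho*v, computed entry by entry."""
    return tuple(alpha * ui + rho * vi for ui, vi in zip(u, v))

u, v = (1, 2, 3), (0, 1, 4)
b, c = (2, 0, 1), (1, 1, 0)
alpha, rho = 3, -2

# Linearity in the first slot (the other two slots are checked the same way).
assert det3(combo(alpha, u, rho, v), b, c) == alpha * det3(u, b, c) + rho * det3(v, b, c)

# Antisymmetry: interchanging any two arguments flips the sign.
assert det3(u, v, c) == -det3(v, u, c)
assert det3(b, u, v) == -det3(b, v, u)
assert det3(u, b, v) == -det3(v, b, u)
```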

Let's consider the set $\Lambda^k$ of all $k$-forms. The algebra that we have considered gives it an extra structure.

It is a vector space under the usual addition and scalar multiplication of functions: $$+ \colon \Lambda^k \times \Lambda^k \rightarrow \Lambda^k,$$ $$\cdot \colon {\bf R} \times \Lambda^k \rightarrow \Lambda^k.$$ The only thing to be verified is the fact that the multilinearity and the antisymmetry are preserved under these operations (Exercise).

Another way to look at this space is the dual space of the space of multivectors: $$\Lambda^k = (\Lambda_k)^*.$$ The formula explains the reason for calling forms covectors.

The space of $1$-forms

Let's try to come up with a basis of this space $\Lambda^k$ with specific formulas for the elements of the basis.

It all starts when the domain $V={\bf R}^n$ is supplied with a coordinate system. Suppose $\{e_1,...,e_n\}$ is a basis. It is important to remember that this is an ordered basis, i.e., interchanging any two elements produces a new basis, which requires a change of variables, etc. This assumption makes the space "oriented". It also explains why $e_x \wedge e_y \ne e_y \wedge e_x$.

We choose these names: $$v \in V \Rightarrow v=<v_1,...,v_n>.$$ Then our variables are: $$v_1,...,v_n.$$

As these lists of variables are quite cumbersome, we'll stay in lower dimensions and use the fact that

- ${\bf R}^3$ has the standard basis $\{e_x, e_y, e_z\}$.

Then we use:

- for variables: $v_x,v_y,v_z$.

What about $1$-forms as functions?

To approach this, we start by associating to the basis elements $e_x$ and $e_y$ of ${\bf R}^2$ a set of basis elements of $\Lambda^1$. We define these forms by their values on the basis:

- $g_x(e_x)=1$;

- $g_x(e_y)=0$;

- $g_y(e_x)=0$;

- $g_y(e_y)=1$.

This is a standard construction in linear algebra called duality:

- $g_i(e_j)=\delta _{ij}$.

Just as for all forms, we have $$g_x,g_y:{\bf R}^2 \rightarrow {\bf R}.$$ Then their analytical representations are:

- $g_x(v_x,v_y)=v_x,$

- $g_y(v_x,v_y)=v_y.$

Now, what about the rest of $1$-forms?

We know that they are to be found all as $$\varphi^1=Ag_x+Bg_y,$$ where $A,B$ are just numbers. This representation still makes sense in light of our new understanding of the two basic forms. Indeed, we just evaluate this formula with the usual meanings of the algebraic operations: $$\varphi^1(v_x,v_y)=A \cdot v_x+B \cdot v_y.$$

The only question remains: does this function satisfy the definition?

For $1$-forms we just need to verify linearity with respect to $(v_x,v_y)$. (Exercise.)

Then, $\{g_x,g_y\}$ is a basis of $\Lambda^1({\bf R}^2)$! So, $$\Lambda^1=span\{g_x,g_y\}.$$

Exercise. Prove this formula.
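The basic $1$-forms and their combinations are easy to experiment with. In this sketch, vectors in ${\bf R}^2$ are Python tuples and forms are plain functions; the helper name `one_form` is ours. It shows that the coefficients $A,B$ can be read off by evaluating the form on $e_x$ and $e_y$, which is a hint for the exercise rather than a proof.

```python
def g_x(v):
    """The basic 1-form that returns the x-coordinate."""
    return v[0]

def g_y(v):
    """The basic 1-form that returns the y-coordinate."""
    return v[1]

def one_form(A, B):
    """The 1-form A*g_x + B*g_y, returned as a function of one vector."""
    return lambda v: A * g_x(v) + B * g_y(v)

e_x, e_y = (1, 0), (0, 1)

# Duality: g_i(e_j) is 1 when i = j and 0 otherwise.
print(g_x(e_x), g_x(e_y), g_y(e_x), g_y(e_y))  # 1 0 0 1

phi = one_form(3, -2)
print(phi((5, 7)))           # 3*5 - 2*7 = 1
print(phi(e_x), phi(e_y))    # 3 -2, the coefficients are recovered
```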

For $2$-forms we will need the wedge product of forms.

$2$-forms as combinations $g_x,g_y$

Recall that we use

- variables: $v_x,v_y,v_z$ and $v'_x,v'_y,v'_z$ etc.

We have defined the two "basic" $1$-forms: $$g_x(v_x,v_y)=v_x,$$ $$g_y(v_x,v_y)=v_y,$$ and the rest: $$\varphi^1=Ag_x+Bg_y.$$

Now $2$-forms.

They are $$\psi^2=Ag_x \wedge g_y,$$ or in $3$-space $$\psi^2=Ag_x\wedge g_y+Bg_y\wedge g_z+Cg_z\wedge g_x,$$ i.e., "linear combinations" of forms $g_x\wedge g_y,g_y\wedge g_z,g_z\wedge g_x$. Let's try to understand them.

The immediate idea is that $g_x \wedge g_y$ should be made of the basic $1$-forms we already understand, $g_x,g_y$. But how?

The first thought is that we just multiply them: $$g_x \wedge g_y=g_x \cdot g_y.$$ We can multiply two functions, so such a function makes sense.

Exercise. What kind of algebraic structure is $(\Lambda ^1, +, \cdot)$?

However, this isn't what we want!

The fact that antisymmetry fails, since $g_x \cdot g_y=g_y \cdot g_x$, springs to mind right away. But a bigger problem is that $g_x \cdot g_y$ is a function of a single vector, not of two, so it cannot be a $2$-form! Indeed, we have: $$(g_x \cdot g_y)(v_x,v_y) = g_x(v_x,v_y) \cdot g_y(v_x,v_y).$$

From this formula, it becomes clear that to produce a $2$-form from two $1$-forms, we shouldn't "recycle" the variables. We will need $$(g_x \wedge g_y)(v_x,v_y,v'_x,v'_y)=?$$

What should be the formula for the wedge product?

First, it has to be linear on $(v_x,v_y)$ and linear on $(v'_x,v'_y)$. The regular multiplication above works: $$g_x(v_x,v_y) \cdot g_y(v'_x,v'_y).$$ However, there is no antisymmetry! The answer is to flip the variables and then combine the results: $$(g_x \wedge g_y)(v_x,v_y,v'_x,v'_y) = g_x(v_x,v_y) \cdot g_y(v'_x,v'_y) - g_x(v'_x,v'_y) \cdot g_y(v_x,v_y).$$ This suggests that in any dimension, we have: $$(\varphi ^1 \wedge \psi ^1)(v,v')= \varphi ^1(v) \cdot \psi ^1(v') - \varphi ^1(v') \cdot \psi ^1(v).$$

So, the explicit formula of our new form is: $$(g_x \wedge g_y)(v_x,v_y,v'_x,v'_y) = v_xv'_y - v_yv'_x.$$ Of course, we recognize this as a determinant.
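The formula for the wedge product of two $1$-forms translates directly into code. The following is a minimal sketch, with forms represented as Python functions and the name `wedge` chosen by us; it covers only the case of two $1$-forms.

```python
def wedge(phi, psi):
    """Wedge product of two 1-forms, returned as a function of two vectors."""
    return lambda v, w: phi(v) * psi(w) - phi(w) * psi(v)

def g_x(v):
    return v[0]

def g_y(v):
    return v[1]

gx_gy = wedge(g_x, g_y)
v, w = (1, 2), (3, 4)

print(gx_gy(v, w))            # 1*4 - 2*3 = -2
print(gx_gy(w, v))            # 2, antisymmetry in the two vectors
print(wedge(g_x, g_x)(v, w))  # 0, a 1-form wedged with itself vanishes
```

Note that the two vectors are separate arguments, so the variables are not "recycled".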

We have found a way to construct the "familiar" $2$-form named $g_x \wedge g_y$ from the two "familiar" (and explicitly defined) $1$-forms named $g_x$ and $g_y$. Now we need to extend this construction to all forms.

Now, for the $2$-dimensional space we've got all we need. All $2$-forms are given as multiples: $$\psi^2=Ag_x \wedge g_y.$$

In $3$-space it's more complicated: $$\psi^2=Ag_x \wedge g_y+Bg_y \wedge g_z+Cg_x \wedge g_z,$$ where these three $2$-forms form a basis and can be constructed from the familiar $1$-forms $g_x,g_y,g_z$ via the wedge product.

The wedge product

We have defined the wedge product on $g_x,g_y$ (and $g_z$ in the $3$-space) and the last example suggests how one can apply it to all forms.

Generally, we use the fact that those two form a basis of $\Lambda^1({\bf R}^2)$ and we also require the wedge product, $$\wedge \colon \Lambda^1 \times \Lambda^1 \rightarrow \Lambda^{2},$$ to be linear in each of its two arguments. Then $$\varphi^1\wedge\psi^1=(Ag_x+Bg_y)\wedge(Cg_x+Dg_y)$$ $$=Ag_x\wedge Cg_x+Ag_x\wedge Dg_y+Bg_y\wedge Cg_x+Bg_y\wedge Dg_y.$$ Use the linearity of the wedge product again to pull out the coefficients: $$=ACg_x\wedge g_x+ADg_x\wedge g_y+BCg_y\wedge g_x+BDg_y\wedge g_y.$$ Use the antisymmetry, i.e., $g_x\wedge g_x=g_y\wedge g_y=0$ and $g_y\wedge g_x=-g_x\wedge g_y$: $$=AC\cdot 0+ADg_x\wedge g_y-BCg_x\wedge g_y+BD\cdot 0$$ $$=(AD-BC)g_x\wedge g_y.$$ It is interesting to notice that this coefficient is a determinant: $$AD-BC=\det \left( \begin{array}{cc} A & B \\ C & D \end{array} \right). $$ This number also represents the signed area of the parallelogram spanned by the coefficient vectors $u=<A,B>,v=<C,D>$ in the plane.

One can think of it as the cross product of these forms. This idea is also visible in dimension $3$: $$\varphi^1\wedge\psi^1=(Ag_x+Bg_y+Cg_z)\wedge(Dg_x+Eg_y+Fg_z).$$ We just quickly collect the similar terms here while dropping zeros: $$=(AE-BD)g_x \wedge g_y$$ $$+(BF-CE)g_y \wedge g_z$$ $$+(AF-CD)g_x \wedge g_z,$$ which is almost the same as $$u \times v = <A,B,C> \times <D,E,F> = \det \left( \begin{array}{ccc} i & j & k \\ A & B & C \\ D & E & F \end{array} \right). $$ The only difference is the sign of the middle term: the $j$-component of the cross product is $CD-AF$, which is the coefficient of $g_z \wedge g_x = -g_x \wedge g_z$.
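The comparison with the cross product can be checked on numbers. This sketch assumes that the coefficient of a basic $2$-form such as $g_x \wedge g_y$ is obtained by evaluating the product on the corresponding pair of basis vectors $(e_x,e_y)$; the helper names `one_form`, `wedge`, `coefficients` and `cross` are ours.

```python
def one_form(coeffs):
    """The 1-form with the given coefficients with respect to g_x, g_y, g_z."""
    return lambda v: sum(c * x for c, x in zip(coeffs, v))

def wedge(phi, psi):
    """Wedge product of two 1-forms as a function of two vectors."""
    return lambda v, w: phi(v) * psi(w) - phi(w) * psi(v)

E = {"x": (1, 0, 0), "y": (0, 1, 0), "z": (0, 0, 1)}

def coefficients(u, v):
    """Coefficients of the wedge product on g_x^g_y, g_y^g_z, g_x^g_z."""
    form = wedge(one_form(u), one_form(v))
    return {pair: form(E[pair[0]], E[pair[1]]) for pair in ("xy", "yz", "xz")}

def cross(u, v):
    """The usual cross product in R^3."""
    A, B, C = u
    D, E_, F = v
    return (B * F - C * E_, C * D - A * F, A * E_ - B * D)

u, v = (1, 2, 3), (4, 5, 6)
c = coefficients(u, v)
print(c)            # {'xy': -3, 'yz': -3, 'xz': -6}
print(cross(u, v))  # (-3, 6, -3)

# Same numbers up to the sign of the middle component.
assert (c["yz"], -c["xz"], c["xy"]) == cross(u, v)
```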

The idea is to study forms, such as $u$ and $v$, as well as "$2$-forms", such as $u \wedge v$, which is simply $u$ and $v$ paired up, and "$3$-forms" $u \wedge v \wedge w$, etc. The properties that we have seen are required from these forms. For example, the $2$-forms should be linear on either form and be anti-symmetric.

Then $k$-forms capture the oriented $k$-volumes:

- lengths are linear,

- areas are bi-linear,

- volumes are tri-linear, etc,

just as above. Warning: This is not about the arc-length, surface area, etc from calculus, which are independent of orientation.

With this insight, the combined direction variable of a $k$-form is a $k$-vector.

Back to the wedge product of forms.

We already have an idea that this operator behaves as follows: $$\wedge \colon \Lambda^k \times \Lambda^m \rightarrow \Lambda^{k+m},$$ that is, multiplication of lower dimensional forms yields higher dimensional forms.

We build this operator inductively.

We already understand the wedge product for the degree $1$ forms in all dimensions. For dimension $3$ the construction for all forms can be easily outlined. First we list the bases of the spaces of forms of each degree:

- $\Lambda ^0({\bf R}^3) : \{1\}$;

- $\Lambda ^1({\bf R}^3) : \{g_x,g_y,g_z\}$;

- $\Lambda ^2({\bf R}^3) : \{g_x \wedge g_y,g_y \wedge g_z,g_x \wedge g_z\}$;

- $\Lambda ^3({\bf R}^3) : \{g_x \wedge g_y \wedge g_z\}$;

- $\Lambda ^k({\bf R}^3) =0$ for $k>3$.

Next we define the wedge product as an operator that is linear in each factor, $$\wedge \colon \Lambda^k({\bf R}^3) \times \Lambda^m({\bf R}^3) \rightarrow \Lambda^{k+m}({\bf R}^3),$$ in the usual way. For each pair of the basis elements of the domain spaces we express their wedge product in terms of the basis of the target space. For example:

- $\wedge \colon \Lambda^0 \times \Lambda^1 \rightarrow \Lambda^{1}$

- $\wedge(1,g_x)=1 \wedge g_x =g_x, \wedge(1,g_y)=1 \wedge g_y =g_y, \wedge(1,g_z)=1 \wedge g_z =g_z$;

- $\wedge \colon \Lambda^1 \times \Lambda^1 \rightarrow \Lambda^{2}$

- $\wedge(g_x,g_y)=g_x \wedge g_y,\wedge(g_y,g_z)=g_y \wedge g_z ,\wedge(g_x,g_z)=g_x \wedge g_z$;

- $\wedge(g_y,g_x)=g_y \wedge g_x =-g_x \wedge g_y,\wedge(g_z,g_y)=g_z \wedge g_y =-g_y \wedge g_z,\wedge(g_z,g_x)=g_z \wedge g_x =-g_x \wedge g_z$;

- $\wedge(g_x,g_x)=g_x \wedge g_x =0,\wedge(g_y,g_y)=g_y \wedge g_y =0,\wedge(g_z,g_z)=g_z \wedge g_z =0$.

- etc

By linear algebra, we conclude that these conditions fully define $\wedge$ as a linear operator.
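The table of products of basic forms can be generated mechanically. In this sketch, which is our own encoding and not the text's, a basic form is a tuple of indices such as `("x", "y")` for $g_x \wedge g_y$, and the empty tuple stands for $1 \in \Lambda^0$. The product is found by concatenating the indices and sorting them with adjacent swaps, each of which flips the sign.

```python
def wedge_basic(a, b):
    """Wedge of two basic forms given as index tuples.

    Returns (sign, sorted_indices); sign 0 means the product vanishes.
    """
    idx = list(a + b)
    if len(set(idx)) < len(idx):
        return 0, ()          # a repeated factor, e.g. g_x ^ g_x = 0
    sign = 1
    # Bubble sort: every swap of adjacent factors contributes a factor of -1.
    for i in range(len(idx)):
        for j in range(len(idx) - 1 - i):
            if idx[j] > idx[j + 1]:
                idx[j], idx[j + 1] = idx[j + 1], idx[j]
                sign = -sign
    return sign, tuple(idx)

print(wedge_basic((), ("x",)))          # (1, ('x',))
print(wedge_basic(("x",), ("y",)))      # (1, ('x', 'y'))
print(wedge_basic(("y",), ("x",)))      # (-1, ('x', 'y'))
print(wedge_basic(("x",), ("x",)))      # (0, ())
print(wedge_basic(("z",), ("x", "y")))  # (1, ('x', 'y', 'z'))
```

Extending this to arbitrary forms by linearity amounts to storing a form as a dictionary from sorted index tuples to coefficients.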

Clearly, none of these spaces is closed under the wedge product, unlike the multiplications we are used to. To make it a "self-map", we use the graded vector space $\Lambda^* = \Lambda^0 \times \Lambda^1 \times \Lambda^2 \times \ldots$: $$\wedge \colon \Lambda^* \times \Lambda^* \rightarrow \Lambda^*.$$ Observe that this approach also allows adding any two forms, not just ones of the same degree.

We will refer to the forms $g_x,g_y,g_z,g_x \wedge g_y$, etc., and $g_{x^{i_1}} \wedge ... \wedge g_{x^{i_k}}$ as the basic forms.

Skew commutativity of the wedge product

Is the wedge product commutative?

No, because we know that $g_x \wedge g_y= -g_y \wedge g_x$.

Is the wedge product anti-commutative?

No, once we realize that $f^0 \wedge \varphi = f^0\varphi$ is simply multiplication.

Example. Consider the product $g_x \wedge g_y \wedge g_z$. By antisymmetry we have $$g_x \wedge g_y \wedge g_z = -g_y \wedge g_x \wedge g_z = g_y \wedge g_z \wedge g_x,$$ so it seems that $$g_x \wedge (g_y \wedge g_z) = (g_y \wedge g_z) \wedge g_x,$$ which suggests that multiplication of forms may be commutative. However, consider in ${\bf R}^4$ the product $$g_x \wedge g_y \wedge g_z \wedge g_u = -g_y \wedge g_x \wedge g_z \wedge g_u = g_y \wedge g_z \wedge g_x \wedge g_u = -g_y \wedge g_z \wedge g_u \wedge g_x.$$ Here $g_x \wedge (g_y \wedge g_z \wedge g_u) = -(g_y \wedge g_z \wedge g_u) \wedge g_x$. So we cannot say in general that multiplication of forms is a commutative operation.

So the answer is, it depends on the degrees of the forms.

It is clear now that we can rearrange the order of the multiples step by step and every time the only thing that changes is the sign. Then there must be a general rule here: $$\varphi \wedge \psi = (-1)^{?}\psi \wedge \varphi.$$ What is the sign? It depends only on the number of flips we need to do.

All we know is that, if $\varphi \in \Lambda^k$ and $\psi \in \Lambda^m$, then $\varphi \wedge \psi$ is a $(k+m)$-form.

Example. Let $\varphi = g_x \wedge g_y$ and $\psi = g_z \wedge g_u$. Then $$\varphi \wedge \psi = g_x \wedge g_y \wedge g_z \wedge g_u = g_z \wedge g_u \wedge g_x \wedge g_y.$$ To understand the sign, we observe that we simply count the number of times we move an item one step left (or right) and use the anti-symmetry property: $$(g_x \wedge g_y) \wedge (g_z \wedge g_u)=(-1)^?(g_z \wedge g_u) \wedge (g_x \wedge g_y).$$

This suggests that the property might look like this: $$\varphi \wedge \psi = (-1)^4 \psi \wedge \varphi .$$

Indeed, there is a more general result here.

Theorem: Suppose $\varphi \in \Lambda^k$ and $\psi \in \Lambda^m$. Then $$\varphi \wedge \psi = (-1)^{km}\psi \wedge \varphi.$$

Proof: We use linearity to reduce the problem to the one for $\varphi = g_{x_1} \wedge g_{x_2}\ldots \wedge g_{x_k}$ and $\psi = g_{y_1} \wedge g_{y_2} \wedge \ldots \wedge g_{y_m}$. Then our product is $$\varphi \wedge \psi=(g_{x_1} \wedge g_{x_2} \wedge \ldots \wedge g_{x_k}) \wedge (g_{y_1} \wedge g_{y_2} \wedge \ldots \wedge g_{y_m}).$$ To move $g_{y_1}$ to the left of $g_{x_1}$ requires precisely $k$ jumps -- and as many applications of the anti-symmetry property (of the wedge product of $1$-forms) as follows $$g_{x_1} \wedge g_{x_2} \wedge \ldots \wedge g_{x_k} \wedge (g_{y_1})\wedge g_{y_2}\wedge \ldots \wedge g_{y_m}$$ $$=(-1)g_{x_1} \wedge g_{x_2} \wedge \ldots \wedge (g_{y_1})\wedge g_{x_k} \wedge g_{y_2}\wedge \ldots \wedge g_{y_m}$$ $$...$$ $$=(-1)^{k-1}g_{x_1}\wedge (g_{y_1}) \wedge g_{x_2} \wedge \ldots \wedge g_{x_k} \wedge g_{y_2}\wedge \ldots \wedge g_{y_m}$$ $$=(-1)^k (g_{y_1})\wedge g_{x_1} \wedge g_{x_2} \wedge \ldots \wedge g_{x_k} \wedge g_{y_2}\wedge \ldots \wedge g_{y_m}.$$ So, we get a factor of $(-1)^k$ by moving $g_{y_1}$ all the way. Then, moving $g_{y_2}$ also requires precisely $k$ steps, so our factor becomes $(-1)^k(-1)^k=(-1)^{2k}$. We perform this jumping for each of the $m$ of the $g_{y_i}$ factors which yields the factor $(-1)^{km}$. $\blacksquare$

This property is called skew commutativity or graded commutativity.
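The sign rule in the theorem can be confirmed by brute force on basic forms. The sketch below assumes ${\bf R}^4$ with the basic $1$-forms numbered $1,\ldots,4$ (our encoding), and represents a basic $k$-form by an increasing tuple of $k$ indices. Since $\varphi \wedge \psi$ and $\psi \wedge \varphi$ contain the same factors, it is enough to compare the signs picked up when sorting the two concatenations.

```python
from itertools import combinations

def sort_sign(indices):
    """Sign picked up when sorting distinct indices by adjacent swaps.

    Returns 0 if an index is repeated, since the product then vanishes.
    """
    idx = list(indices)
    if len(set(idx)) < len(idx):
        return 0
    sign = 1
    for i in range(len(idx)):
        for j in range(len(idx) - 1 - i):
            if idx[j] > idx[j + 1]:
                idx[j], idx[j + 1] = idx[j + 1], idx[j]
                sign = -sign
    return sign

n = 4
# All basic forms of all degrees k = 0, ..., n.
basic = [c for k in range(n + 1) for c in combinations(range(1, n + 1), k)]

def check_graded_commutativity():
    """Check phi ^ psi = (-1)^(k*m) psi ^ phi on every pair of basic forms."""
    for a in basic:
        for b in basic:
            k, m = len(a), len(b)
            if sort_sign(a + b) != (-1) ** (k * m) * sort_sign(b + a):
                return False
    return True

print(check_graded_commutativity())  # True
print(sort_sign((3, 4, 1, 2)))       # 1, two 2-forms commute
print(sort_sign((2, 3, 4, 1)))       # -1, a 3-form and a 1-form
```

The general case follows from the basic one by linearity, as in the proof.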

Corollary: If $\varphi \in \Lambda^k$ and $k$ is odd, then $\varphi \wedge \varphi = 0$.

Proof. Exercise.

We have proven that each of these two properties implies the other:

Multilinear forms vs multivectors

We defined forms as functions on the power of $V$: $$\varphi =\varphi ^k: V^k \rightarrow {\bf R},$$ for simplicity's sake, even though the rationale was to define them on multivectors: $$\varphi =\varphi ^k: \Lambda _k (V) \rightarrow {\bf R}.$$

To connect the two we start with the definition of the space of multivectors: $$\Lambda _k (V) = V^k / Q_k(V),$$ where

- $Q_k = Q_k(V) =\{ (v_1, v_2 ,..., v_k) + (u_1, u_2 ,..., u_k):(u_1, ..., u_k)$ is the same as $(v_1,...,v_k)$ with two entries interchanged $\}$.

Now, the first definition requires that $\varphi$ is antisymmetric. But this requirement is equivalent to: $$\varphi (Q_k) = 0.$$ This condition, in turn, is equivalent to saying that $$\varphi :\Lambda _k =V^k / Q_k \rightarrow {\bf R}$$ is well defined.

The second definition is profound as it gives us a very important identity: $$\Lambda ^k = (\Lambda _k)^*.$$

Summary

Forms of order $k$ over a real vector space $V$ are multilinear antisymmetric functions $$\varphi =\varphi ^k: V^k \rightarrow {\bf R}$$ that give us oriented lengths, areas, volumes, etc. They constitute a vector space $\Lambda^k(V)$. Forms of all orders constitute a graded vector space: $$\Lambda ^* = \Lambda^0 \times \Lambda^1 \times \Lambda^2 \times \ldots.$$ This space has an extra operation, the wedge product: $$\wedge \colon \Lambda^k \times \Lambda^m \rightarrow \Lambda^{k+m},$$ which is linear in each factor, respects the grading, and is graded commutative.

The two operations $$+, \wedge \colon \Lambda ^* \times \Lambda ^* \rightarrow \Lambda ^*$$ make this space into a graded ring.

When the forms are continuously parametrized by location on a smooth manifold $M$: $$\varphi =\varphi ^k: M \times V^k \rightarrow {\bf R}$$ they are called differential forms.

See also tensor fields.

Exercises

1. Show that a form of even degree commutes with any other form.

2. $\dim \Lambda ^k (p) = ?$

3. Provide explicit formulas of the basic forms in $\Lambda ^2({\bf R}^3)$, i.e., $g_x \wedge g_y,g_y \wedge g_z,g_z \wedge g_x$ in terms of the definition. Suggest formulas for the general case of $\Lambda ^k({\bf R}^n)$.