
Linear operators: part 2

We already know that each matrix gives rise to a linear operator.

What about vice versa?

How to find a matrix for a linear operator

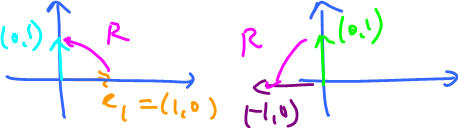

We start with Euclidean spaces. Recall that the rotation $R$ through $90^\circ$ counterclockwise is a linear operator $R \colon {\bf R}^2 \rightarrow {\bf R}^2$ and can be represented as multiplication by a matrix,

$$A_R=\left[ \begin{array}{} 0 & -1 \\ 1 & 0 \end{array} \right].$$

What does it mean exactly?

We treat points/vectors in ${\bf R}^2$ as $2 \times 1$ matrices

$$(x,y) \rightarrow \left[ \begin{array}{} x \\ y \end{array} \right].$$

Then the output of $(x,y)$ under $R$ is computed by multiplication of these two matrices:

$$A_R\left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} 0 & -1 \\ 1 & 0 \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right]$$

Verify that this is equal to:

$= \left[ \begin{array}{} -y \\ x \end{array} \right]$.

So $R(x,y)=(-y,x)$, if we go back to the function notation.

Observe that the two columns of $A_R$ are $\left[ \begin{array}{} 0 \\ 1 \end{array} \right]$ and $\left[ \begin{array}{} -1 \\ 0 \end{array} \right]$.

These happen to be the images of $e_1,e_2$ under $R$. Recall, to know a linear operator all you need to know is the images of the basis elements.

So, take $(0,1)$ and $(-1,0)$ and form a matrix, using them as columns. That's all.
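The recipe above can be sketched in code. This is a minimal illustration in plain Python, not part of the original notes; the helper names `mat_from_columns` and `mat_vec` are hypothetical. It builds $A_R$ from the images of $e_1$ and $e_2$ and applies it to a sample point.

```python
def mat_from_columns(columns):
    """Build a matrix (stored as a list of rows) whose columns are the given vectors."""
    n_rows = len(columns[0])
    return [[col[i] for col in columns] for i in range(n_rows)]

def mat_vec(A, v):
    """Multiply the matrix A (list of rows) by the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

# Images of e_1 = (1, 0) and e_2 = (0, 1) under the 90-degree rotation R.
R_e1 = [0, 1]
R_e2 = [-1, 0]

A_R = mat_from_columns([R_e1, R_e2])   # [[0, -1], [1, 0]]
image = mat_vec(A_R, [3, 5])           # R(3, 5) = (-5, 3)
```

The sample point $(3,5)$ is arbitrary; any $(x,y)$ is sent to $(-y,x)$.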

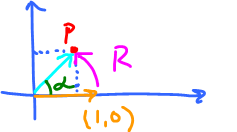

Further, what about rotation through $\alpha$ radians?

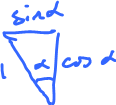

Find the coordinates of the point $P$.

It's $$P({\rm cos \hspace{3pt}}\alpha, {\rm sin \hspace{3pt}}\alpha),$$ that comes from this simple triangle:

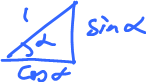

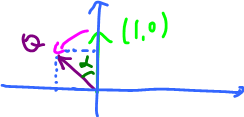

Next, find the coordinates of $Q$:

It's $$Q(-{\rm sin \hspace{3pt}} \alpha, {\rm cos \hspace{3pt}} \alpha)$$ that comes from this simple triangle:

Then $$A_R = \left[ \begin{array}{} {\rm cos \hspace{3pt} } \alpha & -{\rm sin \hspace{3pt} } \alpha \\ {\rm sin \hspace{3pt} } \alpha & {\rm cos \hspace{3pt} } \alpha \end{array} \right].$$

Note: ${\rm rank \hspace{3pt}} A_R=2$.
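As a sanity check, the rotation matrix can be evaluated numerically. The sketch below uses only Python's standard `math` module; the function name `rotation_matrix` is hypothetical. It assumes the columns are the images $P$ and $Q$ found above, and it checks the case $\alpha=\pi/2$ against the $90^\circ$ rotation.

```python
import math

def rotation_matrix(alpha):
    """Matrix of the counterclockwise rotation through alpha radians."""
    c, s = math.cos(alpha), math.sin(alpha)
    # Columns are the images of e_1 and e_2: P = (c, s) and Q = (-s, c).
    return [[c, -s],
            [s,  c]]

def mat_vec(A, v):
    """Multiply the matrix A (list of rows) by the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

A = rotation_matrix(math.pi / 2)
x, y = mat_vec(A, [1.0, 0.0])   # approximately (0, 1), up to rounding

# The determinant is cos^2 + sin^2 = 1, which is nonzero, so the rank is 2.
det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
```

Floating-point arithmetic makes the entries only approximately equal to $0$ and $\pm 1$, so comparisons should allow a small tolerance.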

A matrix corresponding to a linear operator in a vector space

Theorem: Suppose $T \colon {\bf R}^n \rightarrow {\bf R}^m$ is a linear operator. Then the value of $T$ at $v$ is computed by matrix multiplication:

$$T(v)=A_Tv,$$

where $A_T$ is the $m \times n$ matrix the $i^{\rm th}$ column of which is $T(e_i)$, and $\{e_i\}$ is the standard basis of ${\bf R}^n$.

Note: $e_i = (0,0,\ldots,1,0,\ldots,0) \in {\bf R}^n$ is a vector, $T(e_i) \in {\bf R}^m$ is a vector too.

To form the matrix $A_T$, transpose each $T(e_i)$ to turn a row into a column.

Recall that $v \mapsto A_Tv$ is a linear operator too.

To prove the theorem we only need to verify that the two operators coincide on the basis elements: $$T(e_i)=A_Te_i.$$

This suffices because we know these three things:

- 1. A matrix "is" a linear operator (given a basis).

- 2. Two linear operators that coincide on the basis elements are equal to each other. So, the theorem holds, $T(v)=A_Tv$ for all $v$, as soon as $T$ and $A_T$ agree on each $e_i$.

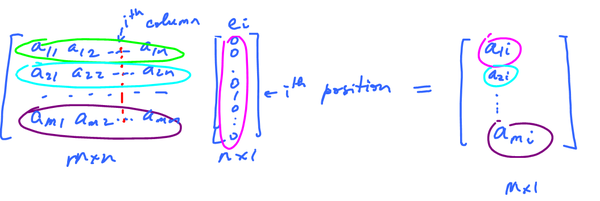

- 3. $Ae_i$ is the $i^{\rm th}$ column of $A$.

Proof:

$$\left[ \begin{array}{} a_{11} & a_{12} & \ldots & a_{1n} \\ a_{21} & a_{22} & \ldots & a_{2n} \\ \ldots & \ldots & \ldots & \ldots \\ a_{m1} & a_{m2} & \ldots & a_{mn} \end{array} \right] \left[ \begin{array}{} 0 \\ 0 \\ \vdots \\ 0 \\ 1 \\ 0 \\ \vdots \\ 0 \end{array} \right] = \left[ \begin{array}{} a_{1i} \\ a_{2i} \\ \vdots \\ a_{mi} \end{array} \right]$$

Here the second matrix has a $1$ in the $i^{\rm th}$ position and the final matrix is the $i^{\rm th}$ column vector of $A$. $\blacksquare$

This is how those three facts apply here:

- 3 $\rightarrow A_Te_i = i^{\rm th}$ column of $A_T$

- 1 $\rightarrow A_T$ is a linear operator

- 2 $\rightarrow T$ matches $A_T$ on the basis.
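The theorem can be tried out on a small example. In this sketch, in plain Python, the operator `T` and the helper names `standard_basis`, `matrix_of`, and `mat_vec` are hypothetical and chosen only for illustration. The matrix is assembled column by column from the values $T(e_i)$ and then compared with $T$ on a sample vector.

```python
def standard_basis(n):
    """The standard basis e_1, ..., e_n of R^n, as a list of lists."""
    return [[1 if j == i else 0 for j in range(n)] for i in range(n)]

def matrix_of(T, n):
    """Matrix whose i-th column is T(e_i), stored as a list of rows."""
    columns = [T(e) for e in standard_basis(n)]
    m = len(columns[0])
    return [[col[r] for col in columns] for r in range(m)]

def mat_vec(A, v):
    """Multiply the matrix A (list of rows) by the column vector v."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

# A hypothetical linear operator T: R^3 -> R^2, chosen only for illustration.
def T(v):
    x, y, z = v
    return [x + 2 * y, 3 * z]

A_T = matrix_of(T, 3)     # [[1, 2, 0], [0, 0, 3]], a 2 x 3 matrix
v = [4, -1, 2]
same = mat_vec(A_T, v) == T(v)   # both sides are [2, 6]
```

Agreement on one vector is only an illustration; the proof rests on agreement on the basis elements.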

To summarize: suppose $V$ is an $n$-dimensional vector space and $B = \{v_1,\ldots,v_n\}$ is its basis.

Correspondence between vectors and coordinate vectors, and between operators and matrices

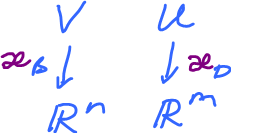

Suppose we have a vector space $V$ with basis $B$.

Every $v \in V$ corresponds to an element $v_B \in {\bf R}^n$ as a "coordinate vector":

- if $v = \displaystyle\sum_{i=1}^n a_iv_i$

- then $v_B = [a_1,\ldots,a_n]^T.$

(This is a column, $n \times 1$ matrix.)
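For instance, here is a small worked example; the space, the basis, and the vector are assumptions made only for illustration. Take $V={\bf R}^2$ with basis $B=\{v_1,v_2\}$, where $v_1=(1,1)$ and $v_2=(1,-1)$, and let $v=(3,1)$. Then

$$v=(3,1)=2\cdot(1,1)+1\cdot(1,-1)=2v_1+1v_2, \quad {\rm so} \quad v_B=[2,1]^T.$$

With respect to the standard basis $\{e_1,e_2\}$, the same vector is $v=3e_1+1e_2$, and its coordinate vector is $[3,1]^T$.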

One can define a function

- $\varkappa_B \colon V \rightarrow {\bf R}^n$ given by

- $\varkappa_B(v)=v_B$

It's different for different choices of $B$!

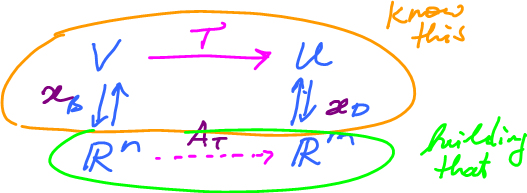

This is its diagram:

$$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} V \\ \da{\varkappa_B} \\ {\bf R}^n \end{array} $$

Properties of $\varkappa_B$:

- 1. one-to-one,

- 2. onto,

- 3. linear.

These three facts together show that $\varkappa_B$ is an isomorphism.

The theorem takes this further.

We can mimic vectors. What if we want to mimic functions too?

Suppose we have another vector space $U$ with basis $D$. Together:

$$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} V & U \\ \da{\varkappa_B} & \da{\varkappa_D} \\ {\bf R}^n & {\bf R}^m \end{array} $$

Suppose ${\rm dim \hspace{3pt}} U=m$ and the basis is $D=\{u_1,\ldots,u_m\}$.

So far, this is old.

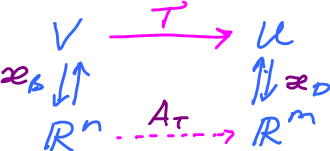

Now what if there is a linear operator $T \colon V \rightarrow U$?

$$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} V & \ra{T} & U \\ \da{}\ua{\varkappa_B} & & \ua{}\da{\varkappa_D} \\ {\bf R}^n & \ra{A_T} & {\bf R}^m \end{array} $$

This is what we have: $V$ and $U$ are "represented" by ${\bf R}^n$ and ${\bf R}^m$.

Question: How do we represent $T$?

Answer: By a matrix, $A_T$.

By the theorem, the columns of $A_T$ are the images of the $e_i$ (the basis elements of ${\bf R}^n$) under the operator ${\bf R}^n \rightarrow {\bf R}^m$ that represents $T$. How do we find them?

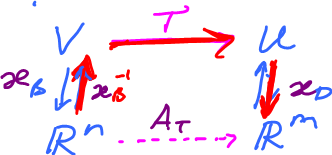

We need $A_T$. Use the diagram:

To build $A_T$, we have to go around the diagram, to follow the arrows that we know.

$$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} & V & \ra{T} & U \\ \varkappa_B & \da{}\ua{\varkappa_B^{-1}} & & \ua{}\da{\varkappa_D} \\ & {\bf R}^n & \ra{A_T} & {\bf R}^m \end{array} $$

Go: up, right, down.

Then algebraically, $A_T=\varkappa_DT\varkappa_B^{-1}$.

Specifically, $A_T$ is a matrix with respect to the standard bases of ${\bf R}^n$ and ${\bf R}^m$.

Let's find it based on $T$ only.

Theorem: Suppose $T \colon V \rightarrow U$ is a linear operator. Then the $i^{\rm th}$ column of $A_T$ is made of the coefficients of the linear combination that expresses $T(v_i)$ in terms of $u_1,\ldots,u_m$, the basis of $U$.

Compare: $$T \colon {\bf R}^n \rightarrow {\bf R}^m$$ and $$T(v)=A_Tv,$$ where $A_T$ is the $m \times n$ matrix the $i^{\rm th}$ column of which is $T(e_i)$, and $\{e_i\}$ is the standard basis of ${\bf R}^n$.
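Here is a sketch of the construction for spaces other than ${\bf R}^n$. The example is an assumption made only for illustration: $V$ is the space of polynomials of degree at most $2$ with basis $B=\{1,x,x^2\}$, $U$ is the space of polynomials of degree at most $1$ with basis $D=\{1,x\}$, and $T$ is differentiation. A polynomial is stored as its list of coefficients, and all function names are hypothetical. Column $i$ of the matrix is obtained by going around the diagram: up by $\varkappa_B^{-1}$, right by $T$, down by $\varkappa_D$.

```python
def kappa_B_inv(coords):
    """Coordinates w.r.t. B = {1, x, x^2} -> the polynomial a0 + a1*x + a2*x^2,
    stored as the coefficient list [a0, a1, a2]."""
    return list(coords)

def T(p):
    """Differentiate a polynomial given by its coefficient list."""
    return [k * p[k] for k in range(1, len(p))]

def kappa_D(q):
    """Polynomial of degree at most 1 -> its coordinates w.r.t. D = {1, x}."""
    return [q[0], q[1]]

def matrix_of(n):
    """Matrix whose i-th column is kappa_D(T(kappa_B_inv(e_i)))."""
    columns = []
    for i in range(n):
        e_i = [1 if j == i else 0 for j in range(n)]
        columns.append(kappa_D(T(kappa_B_inv(e_i))))
    m = len(columns[0])
    return [[col[r] for col in columns] for r in range(m)]

A_T = matrix_of(3)   # [[0, 1, 0], [0, 0, 2]]
```

The columns record that $T(1)=0$, $T(x)=1=1\cdot 1+0\cdot x$, and $T(x^2)=2x=0\cdot 1+2\cdot x$.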

Further, $V$ has basis $B$, $n$-dimensional. $U$ has basis $D$, $m$-dimensional.

Let $\mathcal{L}(V,U)$ be the set of all linear operators $T \colon V \rightarrow U$.

Recall $M(m,n)$ is the set of all $m \times n$ matrices. What's the relation?

This is what we have: $$\mathcal{L}(V,U) \stackrel{\wp_B}{\rightarrow} M(m,n)$$ This is a function: vector space $\longrightarrow$ vector space.

There is a correspondence here. What kind? It is

- 1. one-to-one

- 2. onto

- 3. linear

Therefore $\wp_B$ is an isomorphism of the two vector spaces, $\mathcal{L}(V,U)$ and $M(m,n)$.
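Linearity of the correspondence means, in particular, that the matrix of a sum of operators is the sum of their matrices. The sketch below checks this in plain Python for two hypothetical operators `S` and `T` on ${\bf R}^2$ with the standard basis; all names are chosen only for illustration.

```python
def matrix_of(T, n):
    """Matrix whose i-th column is T(e_i), stored as a list of rows."""
    columns = [T([1 if j == i else 0 for j in range(n)]) for i in range(n)]
    return [[col[r] for col in columns] for r in range(len(columns[0]))]

# Two hypothetical linear operators on R^2, chosen only for illustration.
def S(v):
    x, y = v
    return [2 * x, x + y]

def T(v):
    x, y = v
    return [-y, x]

def S_plus_T(v):
    """The operator S + T, defined pointwise."""
    return [s + t for s, t in zip(S(v), T(v))]

A_S, A_T = matrix_of(S, 2), matrix_of(T, 2)
A_sum = matrix_of(S_plus_T, 2)
entrywise_sum = [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A_S, A_T)]
```

Here `A_sum` and `entrywise_sum` are the same matrix; the same kind of check works for scalar multiples.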

Lesson: The algebra of a finite-dimensional vector space can be reduced to that of Euclidean spaces via matrices.

Homework: Let $\mathcal{R}$ be the set of all rotations of the plane. Prove that $\mathcal{R}$ is closed under composition:

- a) geometrically,

- b) algebraically.