
Internal structure of a vector space: part 1


How many dimensions?

Online comment: "Democrats, Republicans; this is so two-dimensional!"

What's wrong with this remark?

Answer: it should be one-dimensional!

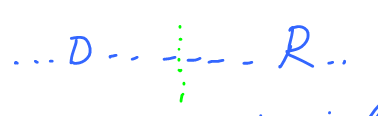

Supposedly, there is a spectrum between Democrats and Republicans, like this:

Dimension is, informally, the number of degrees of freedom.

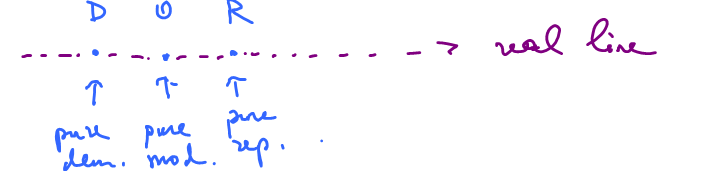

For example, if you are hiking in a field, then you have more degrees of freedom:

$N \longleftrightarrow S$ and $E \longleftrightarrow W$.

These are the two independent degrees of freedom.

More on politics...

Republicans and Democrats are opposites; algebraically, $$D = -R.$$

Further, a pure moderate is halfway between a Republican and a Democrat. Algebraically: $$M=\frac{1}{2}(R+D)=\frac{1}{2}(R-R)=0.$$

One can add more algebra: $2R$, $3D$, etc., whatever these mean. (Perhaps Nancy Pelosi is $3D$, or it might take a communist to reach $3 \times 1D$; it depends how far out $1D$ goes. But Poole & Rosenthal have actually attempted to quantify the "Democratishness" and "Republicanness" of various US representatives, senators, and judges, and principal components analysis suggests that over 85% of the disputes do lie along a single dimension.)

Back to vectors

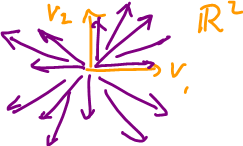

Let's consider all the directions in the field.

Let's give this set the structure of a vector space.

Given $E$ and $N$, we use the vector operations to get everything else:

- Multiples: $S=-N$, $W=-E$, etc.

- Sums: $NE=N+E$.

Also the "combinations": $NW=N+W=N-E$, $SW=-N-E$, etc.

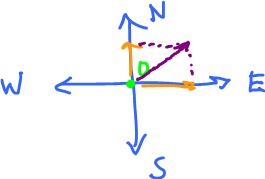

For example, $NNE=N+E+N=2N+E$.

Definition: Given a set of vectors $v_1,\ldots,v_n$ in a vector space $V$, $$u=a_1v_1+a_2v_2+\ldots+a_nv_n$$ is called a linear combination of $v_1,\ldots,v_n$, with coefficients $a_1,\ldots,a_n$.
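A minimal Python sketch of this definition, assuming vectors are stored as tuples of numbers of equal length (a representation chosen here for illustration; `linear_combination` is a hypothetical helper name). Taking $E=(1,0)$ and $N=(0,1)$, it reproduces the compass computations above.

```python
def linear_combination(coeffs, vectors):
    """Return a_1*v_1 + ... + a_n*v_n, where the v_i are tuples of equal length."""
    if not vectors or len(coeffs) != len(vectors):
        raise ValueError("need at least one vector and one coefficient per vector")
    dim = len(vectors[0])
    result = [0] * dim
    for a, v in zip(coeffs, vectors):
        if len(v) != dim:
            raise ValueError("all vectors must have the same length")
        for k in range(dim):
            result[k] += a * v[k]  # add a times the k-th coordinate of v
    return tuple(result)

# Compass directions as vectors in R^2 (assumed coordinates: E = (1,0), N = (0,1)).
E, N = (1, 0), (0, 1)
print(linear_combination([2, 1], [N, E]))   # NNE = 2N + E -> (1, 2)
print(linear_combination([1, -1], [N, E]))  # NW = N - E   -> (-1, 1)
```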

Example: Consider $V={\bf R}^2$, the plane, and $E=(1,0)$, $N=(0,1)$, the two main directions.

Fact: All other directions are linear combinations of these two.
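One way to see this Fact for every direction, not just the compass points: describe a direction by the unit vector at angle $\theta$ measured counterclockwise from $E$ (a convention assumed here; the notes don't fix one). Its coefficients are then $\cos\theta$ and $\sin\theta$. A small Python sketch, with `direction` a hypothetical helper:

```python
import math

def direction(theta):
    """Unit vector at angle theta (radians, counterclockwise from E),
    built as the linear combination cos(theta)*E + sin(theta)*N."""
    E, N = (1.0, 0.0), (0.0, 1.0)
    a1, a2 = math.cos(theta), math.sin(theta)  # the two coefficients
    return (a1 * E[0] + a2 * N[0], a1 * E[1] + a2 * N[1])

print(direction(0.0))          # E itself: (1.0, 0.0)
print(direction(math.pi / 4))  # NE scaled to length 1, both coordinates about 0.7071
```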

Algebra of linear combinations

Proposition. Let $i=(1,0)$, $j=(0,1)$. Then every vector in ${\bf R}^2$ can be represented as a linear combination of $i,j$.

Proof. We need to show that $u=(x,y)$ is a linear combination of $i$, $j$, i.e., that there are $a_1$, $a_2$ with $$u=(x,y)=a_1(1,0)+a_2(0,1).$$

We simply exhibit the combination by finding $a_1$, $a_2$: $$u = a_1(1,0)+a_2(0,1) = (a_1,0)+(0,a_2) = (a_1,a_2).$$ Choose $a_1=x$, $a_2=y$; i.e., the coefficients are the "components" of the vector. $\blacksquare$

Example: As we just saw, for $(1,0)$, $(0,1)$, the solution is easy. What if the vectors are $(1,1)$ and $(3,2)$? Let's represent $(-1,1)$ as a linear combination of them.

Again we write what we want: $$(-1,1) = a_1(1,1) + a_2(3,2).$$ Do the algebra: $$(-1,1)=(a_1,a_1)+(3a_2,2a_2)=(a_1+3a_2,a_1+2a_2).$$ These two vectors are equal, so their coordinates are equal:

- $-1=a_1+3a_2$ and

- $1=a_1+2a_2$.

What is this? A system of linear equations.

Solve it.

Subtract the second equation from the first, $E_1 - E_2$: this gives $-2=a_2$. Substitute into $E_1$:

$\begin{array}{} -1 &= a_1+3(-2) \\ -1 &= a_1-6 \\ 5 &= a_1 \end{array}$.

Now, verify: $$(-1,1)=5(1,1)-2(3,2).$$

Example: When is it not possible? It can fail if the two vectors are multiples of each other: $$(-1,1)=a_1(1,1)+a_2(5,5) = (a_1+5a_2,a_1+5a_2).$$ Rewritten coordinate-wise,

$\begin{array}{} -1 &= a_1+5a_2 \\ 1 &= a_1+5a_2 \end{array}$

This is a contradiction: the same number $a_1+5a_2$ cannot equal both $-1$ and $1$.
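Both examples can be checked mechanically. The sketch below solves $u = a_1v_1 + a_2v_2$ in ${\bf R}^2$ with Cramer's rule, a standard formula for $2\times 2$ systems swapped in here for the hand elimination, using exact fractions and assuming integer entries; `solve_2d` is a hypothetical name. It returns `None` when $v_1$, $v_2$ are multiples of each other (zero determinant); then there is either no solution or infinitely many, and the sketch does not decide which.

```python
from fractions import Fraction

def solve_2d(u, v1, v2):
    """Find (a1, a2) with a1*v1 + a2*v2 == u, via Cramer's rule.

    Returns None when the determinant is 0, i.e. when v1 and v2 are
    multiples of each other.
    """
    det = v1[0] * v2[1] - v2[0] * v1[1]
    if det == 0:
        return None
    a1 = Fraction(u[0] * v2[1] - v2[0] * u[1], det)  # replace column 1 by u
    a2 = Fraction(v1[0] * u[1] - u[0] * v1[1], det)  # replace column 2 by u
    return a1, a2

print(solve_2d((-1, 1), (1, 1), (3, 2)))  # (Fraction(5, 1), Fraction(-2, 1))
print(solve_2d((-1, 1), (1, 1), (5, 5)))  # None
```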

Observation: Solving a linear combination problem leads to a system of linear equations. Conversely, every system of linear equations is a linear combination problem!

Example: Given a system of linear equations,

$\left\{ \begin{array}{} x_1 &- 2x_2 &+ x_3 &= 5 \\ x_1 & &+ x_3 &= 1 \\ & x_2 &+ 3x_3 &= 0 \end{array} \right.$

Find $x_1$, $x_2$, $x_3$.

But this is a vector equation written coordinate-wise!

Indeed:

$\left[ \begin{array}{} x_1 &-2x_2 &+ x_3 \\ x_1 & &+ x_3 \\ & x_2 &+ 3x_3 \end{array} \right] = \left[ \begin{array}{} 5 \\ 1 \\ 0 \end{array} \right]$

This is a vector to be represented as a linear combination of what?

More algebra:

$\left[ \begin{array}{} x_1 \\ x_1 \\ 0 \end{array} \right] + \left[ \begin{array}{} -2x_2 \\ 0 \\ x_2 \end{array} \right] + \left[ \begin{array}{} x_3 \\ x_3 \\ 3x_3 \end{array} \right] = \left[ \begin{array}{} 5 \\ 1 \\ 0 \end{array} \right]$

Factor $x_1, x_2, x_3$ out:

$x_1 \left[ \begin{array}{} 1 \\ 1 \\ 0 \end{array} \right] + x_2 \left[ \begin{array}{} -2 \\ 0 \\ 1 \end{array} \right] + x_3 \left[ \begin{array}{} 1 \\ 1 \\ 3 \end{array} \right] = \left[ \begin{array}{} 5 \\ 1 \\ 0 \end{array} \right].$

Name the three vectors on the left $v_1$, $v_2$, $v_3$, and the vector on the right $u$.

Finally, $u$ is a linear combination of $v_1$, $v_2$, $v_3$ if and only if the system has a solution.
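Following the observation above, the question "is $u$ a combination of $v_1, v_2, v_3$?" can be answered by row-reducing the system. Here is a minimal Python sketch using Gauss-Jordan elimination with exact fractions, where $v_1, v_2, v_3$ are the three column vectors and $u=(5,1,0)$ is the right-hand side. It assumes the coefficients are unique (as they turn out to be here); the name `solve_combination` is hypothetical.

```python
from fractions import Fraction

def solve_combination(vectors, u):
    """Find x with x_1*v_1 + ... + x_n*v_n == u by Gauss-Jordan elimination
    on the augmented matrix [v_1 ... v_n | u]. Raises ValueError unless the
    solution exists and is unique."""
    n, m = len(vectors), len(u)
    # Row k of the augmented matrix: k-th coordinates of v_1..v_n, then u_k.
    rows = [[Fraction(v[k]) for v in vectors] + [Fraction(u[k])] for k in range(m)]
    for col in range(n):
        pivot = next((r for r in range(col, m) if rows[r][col] != 0), None)
        if pivot is None:
            raise ValueError("no unique solution")
        rows[col], rows[pivot] = rows[pivot], rows[col]
        p = rows[col][col]
        rows[col] = [entry / p for entry in rows[col]]  # make the pivot 1
        for r in range(m):  # clear this column in every other row
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    # Leftover rows (when m > n) must read 0 = 0, else there is no solution.
    if any(rows[r][n] != 0 for r in range(n, m)):
        raise ValueError("no solution")
    return [rows[k][n] for k in range(n)]

v1, v2, v3 = (1, 1, 0), (-2, 0, 1), (1, 1, 3)
u = (5, 1, 0)
x = solve_combination([v1, v2, v3], u)
print(x)  # [Fraction(1, 3), Fraction(-2, 1), Fraction(2, 3)]
```

So $u = \frac13 v_1 - 2v_2 + \frac23 v_3$, which can be confirmed by substituting $x_1=\frac13$, $x_2=-2$, $x_3=\frac23$ into the original system.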

Example: Consider the vector space of polynomials: $V={\bf P}$.

Problem: Represent $2x^3-x^2+x+4$ as a linear combination of $x^3$, $x^2$, $x$.

Question: Is it possible?

Match the two polynomials: $$2x^3-x^2+x+4 = a_1x^3 + a_2x^2 + a_3x.$$

Note: This is not a single equation in $x$, $a_1$, $a_2$, $a_3$! It must hold for every $x$, so there are infinitely many equations here, one for each value of $x$.

Answer: Impossible.

Why? Plug in $x=0$, then we have $4=0$!

How do we fix this?

What if we have four vectors: $\{x^3, x^2, x, 1\}$?

Then, $$2x^3-x^2+x+4=a_1x^3+a_2x^2+a_3x+a_4.$$

Plug in $x=0$, then $4=a_4$.

How do I find the rest?

Substitute $a_4=4$ and cancel the $4$ on both sides: $$2x^3 - x^2 + x = a_1x^3 + a_2x^2+a_3x.$$ Divide by $x$ (for $x \neq 0$): $$2x^2-x+1=a_1x^2+a_2x+a_3.$$ Both sides are polynomials, hence continuous, so this identity also holds at $x=0$. Plug in $x=0$: $$1=a_3.$$

Continue.

The pattern is clear. The result is: $$a_2=-1,a_1=2.$$

Conclusion: Two polynomials are equal to each other if and only if their corresponding coefficients are equal.

The vectors were the power functions $1,x,x^2$, etc.
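The notes recover the coefficients by repeatedly plugging in $x=0$. A different route, sketched here in Python, uses the remark that there is one equation for each value of $x$: plug in $x = 0, 1, \ldots, d$ to get $d+1$ linear equations in the unknown coefficients and solve them. This relies on a standard fact not proved in these notes, that a polynomial of degree at most $d$ is determined by its values at $d+1$ distinct points. The polynomial is treated as a black-box Python function, and the function names are hypothetical.

```python
from fractions import Fraction

def p(x):
    # The polynomial 2x^3 - x^2 + x + 4, treated as a black-box function of x.
    return 2 * x**3 - x**2 + x + 4

def coefficients_from_samples(f, degree):
    """Find a_1, ..., a_{d+1} with f(x) = a_1 x^d + ... + a_{d+1} for d = degree,
    assuming f is a polynomial of degree at most d. Plugging in x = 0, 1, ..., d
    gives one linear equation per sample point; solve them by Gauss-Jordan
    elimination over exact fractions."""
    n = degree + 1
    # Row for sample point x: [x^d, x^(d-1), ..., x^0 | f(x)]
    rows = [[Fraction(x) ** (degree - k) for k in range(n)] + [Fraction(f(x))]
            for x in range(n)]
    for col in range(n):
        # Distinct sample points make the system uniquely solvable, so a pivot exists.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pivot_value = rows[col][col]
        rows[col] = [entry / pivot_value for entry in rows[col]]
        for r in range(n):
            if r != col:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    return [row[n] for row in rows]

print(coefficients_from_samples(p, 3))
# [Fraction(2, 1), Fraction(-1, 1), Fraction(1, 1), Fraction(4, 1)]
```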

Exercise: Represent $x^3-x+2$ as a linear combination of $\{x, x^2+1, x^3-1, x+x^2 \}$.

One more example.

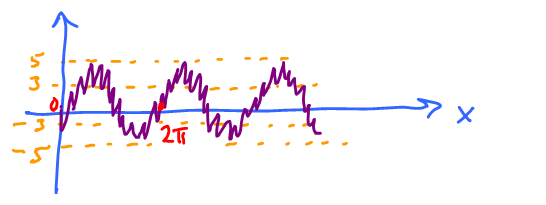

What function looks like this?

It looks like $\sin$, but with smaller oscillations on top of that.

These oscillations could also be represented by $\sin$, but with a smaller period!

Say, $10$ times smaller.

Then our function should look like this: $$\_ \sin x + \_ \sin 10x,$$ with some unknown coefficients.

An examination of the graph suggests that the amplitude of the large oscillation is $4$ and that of the small one is $1$. Hence, our function is $$4\sin x + 1\sin 10x.$$

This is a linear combination of $\sin x$ and $\sin 10x$. Commonly, these (two or more) functions are given and one has to find the coefficients.

This is important in signal processing, music, etc.

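Fact: Every sufficiently well-behaved (for example, continuously differentiable) $2\pi$-periodic function is a linear combination of $\sin nx$, $\cos nx$, $n=0,1,2,\ldots$, possibly with infinitely many terms. See Fourier series; this goes beyond linear algebra, though.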
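When the functions $\sin x$, $\sin 10x$ are given, the coefficients can be found without reading amplitudes off a graph. Below is a Python sketch of one such method, a technique these notes don't cover: sample the function at $N$ equally spaced points on $[0, 2\pi)$ and take a discrete inner product with $\sin nx$ (a discrete analogue of a Fourier coefficient). We assume the sampled function is $f(x) = 4\sin x + \sin 10x$ and take $N=64$; `sine_coefficient` is a hypothetical helper name.

```python
import math

def f(x):
    # The function read off the graph: 4 sin x + 1 sin 10x.
    return 4 * math.sin(x) + math.sin(10 * x)

def sine_coefficient(func, n, samples=64):
    """Estimate the coefficient of sin(n x) in a 2*pi-periodic func via the
    discrete inner product (2/N) * sum_k func(x_k) * sin(n x_k), x_k = 2*pi*k/N.
    Exact up to rounding for finite sums of sin(m x) with 0 < m < N/2."""
    total = 0.0
    for k in range(samples):
        x = 2 * math.pi * k / samples
        total += func(x) * math.sin(n * x)
    return 2 * total / samples

for n in (1, 2, 10):
    print(n, sine_coefficient(f, n))
```

The printed estimates should be $4$, $0$ and $1$ up to floating-point rounding; the coefficient of $\sin 2x$ is $0$ because that term is absent. The sample count $N=64$ is an arbitrary choice: the discrete inner product is exact for $\sin mx$ with $0 < m < N/2$.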

Sets of all linear combinations, spans

Recall, a linear combination of $v_1,\ldots,v_n$ with coefficients $a_1,\ldots,a_n$ is $$a_1v_1+\ldots+a_nv_n$$ or $$\displaystyle\sum_{i=1}^n a_iv_i.$$

So, here $v_1,\ldots,v_n$, $a_1,\ldots,a_n$ are fixed.

What if $v_1,\ldots,v_n$ are fixed but $a_1,\ldots,a_n$ are not?

Problem: Find all linear combinations of $v_1,\ldots,v_n$.

Observe:

- A linear combination of trigonometric functions is a trigonometric function.

- A linear combination of polynomials is a polynomial. (To get $\sin$ and other non-polynomials, we need infinitely many terms, as in Taylor series.)

Definition: The set of all linear combinations of vectors from a set $S$ (in a vector space $V$) is called the span of $S$.

We start with $S = \{v_1,\ldots,v_n\} \subset V$, a finite set.

Then $${\rm span \hspace{3pt}} S = \{a_1v_1 + \ldots + a_nv_n \colon a_1,\ldots,a_n \in {\bf R} \} \subset V.$$
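Since a linear combination problem is a system of linear equations, membership in ${\rm span}\, S$ for a finite $S \subset {\bf R}^m$ can be decided by row reduction. A minimal Python sketch, assuming integer or fractional coordinates; `in_span` is a hypothetical helper, and row reduction (Gaussian elimination) is a standard technique not developed in these notes.

```python
from fractions import Fraction

def in_span(S, u):
    """Return True if u is a linear combination of the vectors in S.

    Row-reduces the augmented matrix [v_1 ... v_n | u] over exact fractions
    and checks that no row reads 0 = (nonzero)."""
    m, n = len(u), len(S)
    rows = [[Fraction(v[k]) for v in S] + [Fraction(u[k])] for k in range(m)]
    r = 0  # index of the next pivot row
    for col in range(n):
        pivot = next((i for i in range(r, m) if rows[i][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column; move on
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, m):  # clear the column below the pivot
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    # Rows r..m-1 now have all-zero coefficient parts; u is in the span
    # iff their right-hand sides are zero too.
    return all(rows[i][n] == 0 for i in range(r, m))

S = [(1, 1), (2, 2)]
print(in_span(S, (3, 3)))  # True
print(in_span(S, (1, 2)))  # False
```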

Example: Given $$S = \{ (1,0,0), (0,1,0), (0,0,1) \} \subset V = {\bf R}^3,$$ find $${\rm span \hspace{3pt}} S = \{a_1(1,0,0) + a_2(0,1,0) + a_3(0,0,1) \colon a_1, a_2, a_3 \in {\bf R} \}$$ $$ = \{(a_1,a_2,a_3) \colon a_1, a_2, a_3 \in {\bf R} \}$$ $$ = {\bf R}^3,$$ because the $1^{\rm st}, 2^{\rm nd}, 3^{\rm rd}$ coordinates are arbitrary and independent of each other.

Prove it: Given $(x,y,z) \in {\bf R}^3$, represent it as an element of ${\rm span \hspace{3pt}} S$.

How? Choose: $a_1=x$, $a_2=y$, $a_3=z$.

Example: Let $S = \{(1,1),(2,2)\} \subset {\bf R}^2$. In other words, $v_1=(1,1)$ and $v_2=(2,2)$. Compute ${\rm span \hspace{3pt}} S\subset {\bf R}^2$:

$${\rm span \hspace{3pt}} S = \{a_1(1,1)+a_2(2,2) \colon a_1, a_2 \in {\bf R} \} $$

$$= \{(a_1,a_1)+(2a_2,2a_2) \colon a_1,a_2 \in {\bf R} \} $$

$$= \{(a_1+2a_2, a_1+2a_2) \colon a_1, a_2 \in {\bf R} \} $$

(the two components are equal!)

Answer: It's a line.

Let's prove that ${\rm span \hspace{3pt}} S = L$, the diagonal line through $0$.

Define: $$L=\{\lambda(1,1) \colon \lambda \in {\bf R}\}.$$ Show that ${\rm span \hspace{3pt}} S = L$.

($\subset$) ${\rm span \hspace{3pt} S} \subset L$.

Given $v \in {\rm span \hspace{3pt} S}$, show $v \in L$. $v \in {\rm span \hspace{3pt} S}$, so $v = (a_1+2a_2,a_1+2a_2)$ for some $a_1,a_2 \in {\bf R}$.

Why does $v$ have the form $\lambda(1,1)$, $\lambda \in {\bf R}$? Find $\lambda$.

Observe $v = (a_1+2a_2)(1,1)$. So choose $\lambda = a_1+2a_2$.

($\supset$) $L \subset {\rm span \hspace{3pt} S}$.

Given $u \in L$, show $u \in {\rm span \hspace{3pt} S}$. Since $u \in L$, we have $u = \lambda(1,1)$ for some $\lambda \in {\bf R}$.

Why does $u$ have the form $(a_1+2a_2, a_1+2a_2)$? Find $a_1,a_2$. Choose $a_1=\frac{1}{3} \lambda$, $a_2 = \frac{1}{3}\lambda$; then $a_1+2a_2=\lambda$, so $u = a_1(1,1)+a_2(2,2) \in {\rm span \hspace{3pt} S}$. $\blacksquare$
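The two inclusions can be spot-checked numerically. This is not a proof (it only tries a few values), just a sanity check of the formulas $\lambda = a_1+2a_2$ and $a_1=a_2=\frac13\lambda$, written in Python with exact fractions; the helper `combo` is hypothetical. The last loop also shows that the choice of $a_1, a_2$ is not unique: $a_1=\lambda$, $a_2=0$ works too.

```python
from fractions import Fraction

def combo(a1, a2):
    """The general element a1*(1,1) + a2*(2,2) of span S."""
    return (a1 + 2 * a2, a1 + 2 * a2)

# (subset) Every combination lies on L, with lambda = a1 + 2*a2.
for a1, a2 in [(1, 0), (0, 1), (Fraction(-3, 2), 5)]:
    lam = a1 + 2 * a2
    assert combo(a1, a2) == (lam, lam)

# (superset) Each point lambda*(1,1) of L is reached with a1 = a2 = lambda/3,
# and also with a1 = lambda, a2 = 0: the coefficients are not unique.
for lam in [Fraction(0), Fraction(1), Fraction(-7, 2), Fraction(9)]:
    assert combo(lam / 3, lam / 3) == (lam, lam)
    assert combo(lam, 0) == (lam, lam)

print("both inclusions hold for the sample values")
```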

Example: Compute:

$${\rm span \hspace{3pt} S} = {\rm span \hspace{3pt}} \{(1,0,0),(1,0,1),(2,0,1) \}$$

$$ = \{a_1(1,0,0) + a_2(1,0,1) + a_3(2,0,1) \colon a_1,a_2,a_3 \in {\bf R} \}$$

We call $u = (1,0,0)$, $v=(1,0,1)$, $w=(2,0,1)$.

Observation: The span can't be ${\bf R}^3$ because $2^{\rm nd}$ coordinate is zero.

Can this be a line? Only if all three vectors are multiples of a single nonzero vector, which is not the case here: $u$ and $v$ are not multiples of each other.

The span of two vectors $v_1,v_2$ is a plane through $0$, unless $v_1$, $v_2$ are multiples of each other; then it's a line (or just $\{0\}$ if both are zero).

Other options for the span of three vectors, in general: a plane through $0$, or all of ${\bf R}^3$.

Definition: A plane through $0$ in a vector space is a set of the form $$P = \{av+bu \colon a,b \in {\bf R}\}$$ for fixed $u$, $v$ that are not multiples of each other.

We can rewrite this as $P = {\rm span}\{u,v\}$.
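For the example $u, v, w$ above, which option occurs can be decided by row reduction, a technique these notes have not introduced yet: stack the vectors as rows, row-reduce (row operations don't change the span of the rows), and count the nonzero rows that remain. A minimal Python sketch for vectors in ${\bf R}^3$; `span_shape` is a hypothetical name.

```python
from fractions import Fraction

def span_shape(vectors):
    """Classify span(vectors) in R^3 as a point, line, plane or all of R^3
    by counting pivots after row-reducing the matrix whose rows are the vectors."""
    rows = [[Fraction(c) for c in v] for v in vectors]
    pivots = 0
    for col in range(3):
        pivot = next((i for i in range(pivots, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue  # this coordinate gives no new pivot
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        for i in range(pivots + 1, len(rows)):  # clear the column below the pivot
            factor = rows[i][col] / rows[pivots][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[pivots])]
        pivots += 1
    return ["the point {0}", "a line through 0", "a plane through 0", "all of R^3"][pivots]

u, v, w = (1, 0, 0), (1, 0, 1), (2, 0, 1)
print(span_shape([u, v, w]))  # a plane through 0
```

Here $w = u+v$, so the third vector adds nothing new to the span of $u$ and $v$.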

Question: When is ${\rm span}\{u,v,w\}$ a line? A plane? ${\bf R}^3$?