Determinants of linear operators

How to determine that a matrix is invertible?

Given a matrix or a linear operator $A$, it is either singular or non-singular: $${\rm ker \hspace{3pt}}A \neq 0 {\rm \hspace{3pt} or \hspace{3pt}} {\rm ker \hspace{3pt}}A=0$$

If the kernel is zero, then $A$ is invertible (as 1-1).

Recall, matrix $A$ is singular when its column vectors are linearly dependent ($i^{\rm th}$ column of $A = A(e_i)$).

The goal is to find a function that determines whether $A$ is singular. It is called the determinant.

Specifically, we want:

$${\rm det \hspace{3pt}}A = 0 {\rm \hspace{3pt} iff \hspace{3pt}} A {\rm \hspace{3pt} is \hspace{3pt} singular}.$$

Start with dimension $2$.

$A = \left[ \begin{array}{} a & b \\ c & d \end{array} \right]$ is singular when $\left[ \begin{array}{} a \\ c \end{array} \right]$ is a multiple of $\left[ \begin{array}{} b \\ d \end{array} \right]$

Let's consider that:

$$\left[ \begin{array}{} a \\ c \end{array} \right] = x\left[ \begin{array}{} b \\ d \end{array} \right] \rightarrow \begin{array}{} a = bx \\ c = dx \\ \end{array}$$

So $x$ has to exist.

Then, $$x=\frac {a}{b} = \frac{c}{d}.$$ That's the condition. (Is there a division by 0 here?)

Rewrite as: $ad-bc=0 \longleftrightarrow A$ is singular, and we want $\det A =0$.

This suggests that

$${\rm det \hspace{3pt}}A = ad-bc,$$

Let's make this the definition.

Verify:

Theorem: A $2\times 2$ matrix $A$ is singular iff $\det A=0$.

Proof. ($\Rightarrow$) Suppose $\left[ \begin{array}{} a & b \\ c & d \end{array} \right]$ is singular, then $\left[ \begin{array}{} a \\ c \end{array} \right] = x \left[ \begin{array}{} b \\ d \end{array} \right]$, then $a=xb, c=xd$, then substitute:

$${\rm det \hspace{3pt}}A = ad-bc = (xb)d - b (xd)=0.$$

($\Leftarrow$) Suppose $ad-bc=0$, then let's find $x$, the multiple.

- Case 1: assume $b \neq 0$, then choose $x = \frac{a}{b}$. Then

$$\begin{array}{} xb &= \frac{a}{b}b &= a \\ xd &= \frac{a}{b}d &= \frac{ad}{b} = \frac{bc}{b} = c. \end{array}$$

So $x \left[ \begin{array}{} b \\ d \end{array} \right] = \left[ \begin{array}{} a \\ c \end{array} \right].$

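- Case 2: assume $b = 0$, $d \neq 0$. Then $ad=bc=0$ implies $a=0$; choose $x = \frac{c}{d}$, so that $xb=0=a$ and $xd=c$.

- Case 3: assume $b=d=0$. Then the second column is zero, and the columns are linearly dependent.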
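The theorem can be spot-checked on a few matrices. This is only a minimal sketch: the names `det2` and `columns_dependent` are hypothetical, and the dependence test simply mirrors the search for the multiple $x$ in the proof (with a zero second column treated as dependent).

```python
from fractions import Fraction

def det2(a, b, c, d):
    """Determinant of [[a, b], [c, d]] by the formula ad - bc."""
    return a * d - b * c

def columns_dependent(a, b, c, d):
    """Test directly whether the columns (a, c) and (b, d) are dependent."""
    if b == 0 and d == 0:
        return True  # the second column is zero
    # Find the multiple x from a non-zero entry of the second column.
    x = Fraction(a, b) if b != 0 else Fraction(c, d)
    return x * b == a and x * d == c

# Entries are listed as (a, b, c, d).
for entries in [(1, 2, 3, 4), (2, 4, 1, 2), (0, 0, 5, 0), (0, 1, 0, 0)]:
    assert (det2(*entries) == 0) == columns_dependent(*entries)
```

Exact fractions are used instead of floating point so that the comparison with zero is reliable.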

Observations

We defined $ \det A$ with requirement that $ \det A = 0$ iff $A$ is singular.

But $ \det A$ could be $k(ad-bc)$, $k \neq 0$. Why $ad-bc$?

Because...

- Observation 1: $ \det I_2=1$ (normalization property)

Notice this similarity:

$$\det \left[ \begin{array}{} a & b \\ c & d \end{array} \right] = ad-bc,$$

i.e., "cross multiplication" (just like with differentiation: $(fg)'=f'g+g'f$).

So,

- Observation 2: Each entry of $A$ appears only once.

What if we interchange rows or columns?

$$ \det \left[ \begin{array}{} c & d \\ a & b \end{array} \right] = cb-ad = - \det \left[ \begin{array}{} a & b \\ c & d \end{array} \right].$$

Then the sign changes. So

- Observation 3: $ \det A=0$ is preserved under this elementary row operation.

Moreover, $ \det A$ is preserved up to a sign! (later)

- Observation 4: If $A$ has a zero row or column, then $ \det A=0$.

To be expected -- we are talking about linear independence!

- Observation 5: $ \det A^T= \det A$.

$$ \det \left[ \begin{array}{} a & c \\ b & d \end{array} \right] = ad-bc$$

- Observation 6: Signs of the terms alternate: $+ad-bc$.

- Observation 7: $ \det A \colon {\bf M}(2,2) \rightarrow {\bf R}$ is linear... NOT!

$$\begin{array}{} \det 3\left[ \begin{array}{} a & b \\ c & d \end{array} \right] &= \det \left[ \begin{array}{} 3a & 3b \\ 3c & 3d \end{array} \right] \\ &= 3a3d-3b3c \\ &= 9(ad-bc) \\ &= 9 \det \left[ \begin{array}{} a & b \\ c & d \end{array} \right] \end{array}$$

not linear, but quadratic. Well, not everything in linear algebra has to be linear...
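The observations above are easy to confirm on a concrete matrix. The sketch below uses a hypothetical helper `det2` and an arbitrarily chosen matrix; it illustrates the observations but does not prove them.

```python
def det2(m):
    """Determinant of a 2x2 matrix given as [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

A = [[2, 5], [1, 4]]
swapped = [A[1], A[0]]                                  # interchange the rows
transposed = [[A[0][0], A[1][0]], [A[0][1], A[1][1]]]   # reflect about the diagonal
tripled = [[3 * x for x in row] for row in A]           # the matrix 3A

assert det2([[1, 0], [0, 1]]) == 1       # Observation 1
assert det2(swapped) == -det2(A)         # Observation 3: only the sign changes
assert det2([[0, 0], [1, 4]]) == 0       # Observation 4
assert det2(transposed) == det2(A)       # Observation 5
assert det2(tripled) == 9 * det2(A)      # Observation 7: quadratic, not linear
```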

What about dimension $3$?

Let's try to step up from dimension 2 with this simple matrix:

$$B = \left[ \begin{array}{} e & 0 & 0 \\ 0 & a & b \\ 0 & c & d \end{array} \right]$$

We, again, want to define ${\rm det \hspace{3pt}}B$ so that ${\rm det \hspace{3pt}}B=0$ if and only if $B$ is singular, i.e., the columns are linearly dependent.

Question: What does the value of $e$ do to the linear dependence?

- Case 1, $e=0 \rightarrow B$ is singular. So, $e=0 \rightarrow {\rm det \hspace{3pt}}B=0$.

- Case 2, $e \neq 0 \rightarrow$ the vectors are:

$$\left[ \begin{array}{} e & 0 & 0 \\ 0 & a & b \\ 0 & c & d \end{array} \right]$$

and

$v_1=\left[ \begin{array}{} e \\ 0 \\ 0 \end{array} \right]$ is not a linear combination of the other two $v_2,v_3$.

So, we only need to consider the linear independence of those two, $v_2,v_3$!

Observe that $v_2,v_3$ are linearly independent if and only if ${\rm det \hspace{3pt}}A \neq 0$, where

$$A = \left[ \begin{array}{} a & b \\ c & d \end{array} \right]$$

Two cases together: $e=0$ or ${\rm det \hspace{3pt}}A=0 \longleftrightarrow B$ is singular.

So it makes sense to define

$${\rm det \hspace{3pt}}B = e \cdot {\rm det \hspace{3pt}}A$$

With that we have: ${\rm det \hspace{3pt}}B=0$ if and only if $B$ is singular.

Let's review the observations above...

(1) $\det I_3 = 1$.

(4), (5) still hold.

(7) still not linear: $\det (2B) = 8 \det B.$

It's cubic.

So far so good...

Now let's give the definition of ${\rm det}$ in dimension three.

Definition in dimension 3 via that in dimension 2

For $A = \left[ \begin{array}{} a & b & c \\ d & e & f \\ g & h & i \end{array} \right]$, we define $\det A$ via "expansion along the first row":

$$\det A = a \det \left[ \begin{array}{} e & f \\ h & i \end{array} \right] - b \det \left[ \begin{array}{} d & f \\ g & i \end{array} \right] + c \det \left[ \begin{array}{} d & e \\ g & h \end{array} \right]$$

Observe that the first term is familiar from before.

This is the beginning of induction: from dimension 2 to dimension 3!

Expand further using the formula for the $2 \times 2$ determinant:

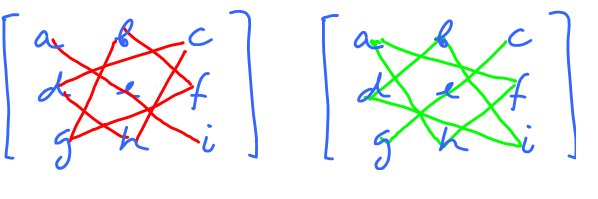

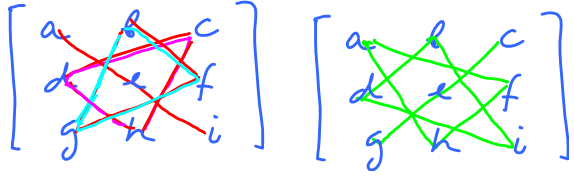

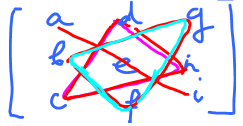

$$\begin{array}{} {\det} _1 A &= a(ei-fh) - b(di-fg)+c(dh-eg) \\ &= (aei+bfg+cdh)-(afh+bdi+ceg) \end{array}$$

Observe:

- each entry appears twice -- once with $+$, once with $-$.

Prior observation:

- in each term (in determinant) every column appears exactly once, as does every row.

The determinant helps us with problems from before.

Example: Given $(1,2,3),(-1,0,2),(2,-2,1)$. Are they linearly independent? We need just yes or no; no need to find the actual dependence.

Note: this is similar to discriminant that tells us how many solutions a quadratic equation has:

- $D<0 \rightarrow 0$ solutions,

- $D=0 \rightarrow 1$ solution,

- $D>0 \rightarrow 2$ solutions.

Consider: $$\begin{array}{} \det \left[ \begin{array}{} 1 & -1 & 2 \\ 2 & 0 & -2 \\ 3 & 2 & 1 \end{array} \right] &= 1 \cdot 0 \cdot 1 + (-1)(-2)3 + 2\cdot 2 \cdot 2 - 3 \cdot 0 \cdot 2 - 1(-2)2 - 2(-1)1 \\ &= 6 + 8 + 4 + 2 \\ &\neq 0 \end{array}$$

So, they are linearly independent.
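The computation in this example can be reproduced in code. This is a sketch with hypothetical function names; it evaluates the determinant of the $3\times 3$ matrix above both by expansion along the first row and by the six-term formula.

```python
def det2(a, b, c, d):
    """Determinant of [[a, b], [c, d]]."""
    return a * d - b * c

def det3_first_row(m):
    """Expand a 3x3 matrix along its first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * det2(e, f, h, i) - b * det2(d, f, g, i) + c * det2(d, e, g, h)

def det3_six_terms(m):
    """The fully expanded formula: three positive and three negative products."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return (a*e*i + b*f*g + c*d*h) - (a*f*h + b*d*i + c*e*g)

# The given vectors are the columns of M.
M = [[1, -1, 2],
     [2,  0, -2],
     [3,  2, 1]]

print(det3_first_row(M), det3_six_terms(M))  # both give 20
```

Since the value is non-zero, the columns are linearly independent.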

Back to matrices.

Recall the definition, for:

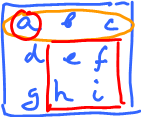

$A = \left[ \begin{array}{} a & b & c \\ d & e & f \\ g & h & i \end{array} \right]$ of expansion along the first row:

(*) $$\begin{array}{} {\det} _1A &= a \det \left[ \begin{array}{} e & f \\ h & i \end{array} \right] - b \det \left[ \begin{array}{} d & f \\ g & i \end{array} \right]+ c \det \left[ \begin{array}{} d & e \\ g & h \end{array} \right] \\ &=(aei+bfg+cdh)-(afh+bdi+ceg) \end{array}$$

Let's try the expansion along the second row.

(**) $$\begin{array}{} {\det} _2A &= d \det \left[ \begin{array}{} b & c \\ h & i \end{array} \right] - e \det \left[ \begin{array}{} a & c \\ g & i \end{array} \right] + f \det \left[ \begin{array}{} a & b \\ g & h \end{array} \right] \\ &=d(bi-ch) - e(ai-cg) + f(ah-bg) \\ &=dbi+\ldots \end{array}$$

Let's try to match $(*)$ and $(**)$: the term $bdi$ comes with $-$ in $(*)$ but with $+$ in $(**)$.

Wrong sign!

But it's wrong for all terms, fortunately!

To fix the formula, flip the signs,

$$\begin{array}{} {\det}_{2} A &= -d \det \left[ \begin{array}{} b & c \\ h & i \end{array} \right] + e \det \left[ \begin{array}{} a & c \\ g & i \end{array} \right] - f \det \left[ \begin{array}{} a & b \\ g & h \end{array} \right] \end{array}$$

Conclusion: the expansion formula depends on the row chosen.

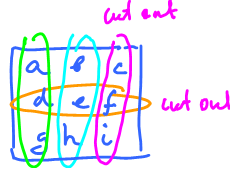

But it's the same procedure:

- $3$ determinants, $2 \times 2$,

- each obtained by cutting out the row and the column of its coefficient,

- signs alternate.

The difference is alternating signs: start with $-$ or $+$, depending on the row chosen.

Determinants of $n \times n$ matrices

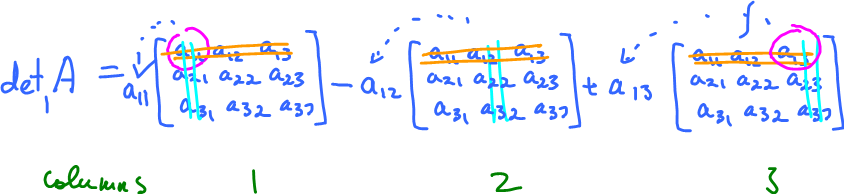

First, $3 \times 3$ but with proper notation:

$$A = \left[ \begin{array}{} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{array} \right].$$

Then

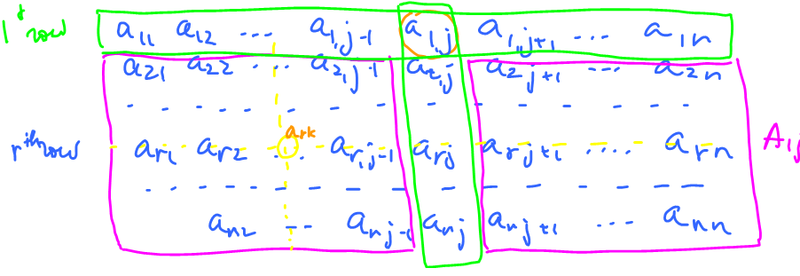

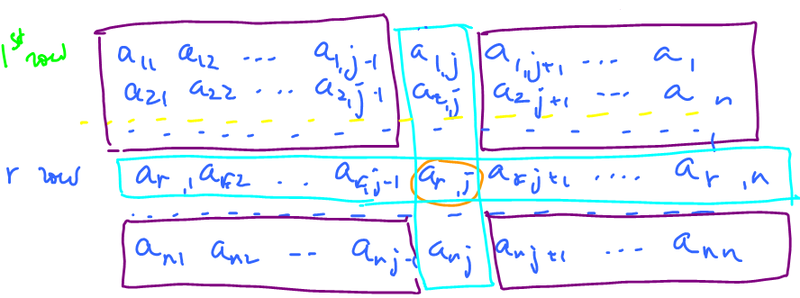

Given an $n \times n$ matrix $A$ and two numbers $r,k$ with $1 \leq r,k \leq n$, define $A_{rk}$ as the $(n-1) \times (n-1)$ matrix obtained by removing the $r^{\rm th}$ row and the $k^{\rm th}$ column from $A$.

So, take $a_{rk}$, the $(r,k)$-entry in $A$, and remove the row and the column that contain $a_{rk}$, that's $A_{rk}$.

Then, rewrite

$${\det} _1 A = a_{11}\det A_{11} - a_{12} \det A_{12}+a_{13} \det A_{13}.$$

Also,

$${\det} _2 A=-a_{21} \det A_{21}+a_{22} \det A_{22}-a_{23} \det A_{23}.$$

(Recall, ${\det} _1A= \det _2A$).

What about ${\det} _rA=?$

$${\det} _r A = \pm a_{r1} \det A_{r1} \mp a_{r2} \det A_{r2} \pm a_{r3} \det A_{r3}$$

Only the signs need to be determined:

$$=(-1)^?a_{r1} \det A_{r1}+(-1)^?a_{r2} \det A_{r2}+(-1)^?a_{r3} \det A_{r3}$$

The signs depend on

- the position, i.e., the column, and

- the row chosen.

$$=(-1)^{r+1}a_{r1} \det A_{r1}+(-1)^{r+2}a_{r2} \det A_{r2}+(-1)^{r+3}a_{r3} \det A_{r3}$$

Test $r=3$.

$${\det} _rA = \sum_{k=1}^3(-1)^{r+k}a_{rk} \det A_{rk}.$$

Fact: ${\rm det}A = {\det} _rA$ for all $r=1,2,3$.
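The sign rule $(-1)^{r+k}$ can be tested on a $3\times 3$ example. In this sketch the helper names `minor`, `det2`, `det3_row` are hypothetical; `minor(m, r, k)` plays the role of $A_{rk}$, with rows and columns numbered from 1 as in the text.

```python
def minor(m, r, k):
    """Remove row r and column k (both counted from 1), giving A_rk."""
    return [[x for col, x in enumerate(row, start=1) if col != k]
            for row_no, row in enumerate(m, start=1) if row_no != r]

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def det3_row(m, r):
    """Expansion of a 3x3 matrix along row r (counted from 1)."""
    return sum((-1) ** (r + k) * m[r - 1][k - 1] * det2(minor(m, r, k))
               for k in (1, 2, 3))

M = [[1, -1, 2],
     [2,  0, -2],
     [3,  2, 1]]

# All three rows give the same value.
assert det3_row(M, 1) == det3_row(M, 2) == det3_row(M, 3)
```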

What we get is this "inductive definition".

Definition: The determinant of an $n \times n$ matrix $A$ is defined as

$$ \det A = \sum_{k=1}^n(-1)^{r+k}a_{rk} \det A_{rk},$$

where $r$, $1 \leq r \leq n$, is a fixed row.

It is called the "expansion along $r^{\rm th}$ row".

Theorem: $ \det A$ is independent of $r$.

This means that it's well defined (later).

Base: $n=1$, ${\rm det}[a]=a$.

Also, for $n=2$, let $A = \left[ \begin{array}{} a_{11} & a_{12} \\ a_{21} & a_{22} \end{array} \right]$. Then, with $r=1$,

$$\begin{array}{} \det A &= \sum_{k=1}^2(-1)^{r+k}a_{rk} \det A_{rk} \\ &=(-1)^{1+1}a_{11} \det A_{11}+(-1)^{1+2}a_{12} \det A_{12} \\ &= a_{11} \det [a_{22}] - a_{12} \det [a_{21}] \\ &=a_{11}a_{22}-a_{12}a_{21}. \end{array}$$
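The inductive definition translates directly into a recursive function. The following is a minimal sketch with hypothetical names `minor` and `det`; it follows the definition literally and makes no attempt at efficiency (the number of products grows like $n!$).

```python
def minor(m, r, k):
    """A_rk: the matrix m without row r and column k (both counted from 1)."""
    return [[x for col, x in enumerate(row, start=1) if col != k]
            for row_no, row in enumerate(m, start=1) if row_no != r]

def det(m, r=1):
    """Determinant by expansion along row r; the base case is n = 1."""
    n = len(m)
    if n == 1:
        return m[0][0]
    # The smaller determinants are expanded along their first rows.
    return sum((-1) ** (r + k) * m[r - 1][k - 1] * det(minor(m, r, k))
               for k in range(1, n + 1))

print(det([[7]]))               # base case: 7
print(det([[1, 2], [3, 4]]))    # 1*4 - 2*3 = -2
```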

Properties of determinants

- 1. $ \det 0=0$. Proof: easy.

- 2. $A$ has a zero row, then $ \det A=0$. Proof: Expand along that row, then all the coefficients are zeros in the sum.

- 3. $ \det A^T= \det A$.

- 4. $A$ has two identical rows, then $ \det A=0$.

- 5. $ \det I_n=1$.

Proof: $$\begin{array}{} \det I_n &= 1 \cdot \det (I_n)_{11} + 0 \\ &= 1 \cdot 1 \cdot \det ((I_n)_{11})_{11} \end{array}$$

expand again, on and on.

This is proof by induction. Better: prove $ \det I_1=1$ then $ \det I_{n-1}=1$ implies $\det I_n=1$.

Homework: Define $V = \{(x_1,\ldots,x_n, \ldots) \colon x_n \rightarrow a$ for some $a \}$.

- 1. Define operations

$$\begin{array}{} x=(x_1,\ldots,x_n,\ldots) \\ y=(y_1,\ldots,y_n,\ldots) \end{array} \rightarrow x+y = (x_1+y_1,\ldots,x_n+y_n,\ldots)$$

Observation: without the $\ldots$, we would have $V={\bf R}^n$.

Property: $ \det A^T= \det A$.

Test it...

For $2 \times 2$: $\left[ \begin{array}{} a & b \\ c & d \end{array} \right]^T = \left[ \begin{array}{} a & c \\ b & d \end{array} \right]$.

$\det = ad-bc$, same!

Exercise: examine and confirm.

Property: ${\rm det \hspace{3pt}}A$ of $n \times n$ matrix has $n!$ terms.

Theorem: The determinant is independent of the expansion row:

$$ {\det} _1A = {\det} _rA = {\det} A.$$

Proof idea:

- Expand it along the $1^{\rm st}$ row, then each resulting determinant along the $r^{\rm th}$ row.

- Expand it along row $r$, then each resulting determinant along the $1^{\rm st}$ row.

- Show they are equal.

Plan: Prove by induction on $n$.

Observe: $ \det A$ is also defined by induction.

- 1. Assume $ \det _1A = \det _rA$ holds for every $(n-1)\times (n-1)$ matrix $A$.

- 2. Show that $ \det _1A = \det _rA$ for an $n \times n$ matrix $A$.

We define this via determinants of submatrices of dimension $(n-1) \times (n-1)$.

Examine $A$, given, $n \times n$, and its submatrices $A_{ij},(A_{ij})_{rk}$:

Consider $A_{ij}$.

Expansion along $1^{\rm st}$ row:

$${\det} _1A = \sum_{j=1}^n(-1)^{i+j}a_{ij} \det A_{ij}, \quad i=1;$$

apply the definition again.

Warning: the indices of the entries of $A_{ij}$ aren't the same as the ones of $A$:

- $a_{21}$ in $A$ is in second row, first column

- $a_{21}$ in $A_{ij}$ is in the first row, first column

- $a_{2(j+1)}$ in $A$ is second row, $(j+1)$ column

- $a_{2(j+1)}$ in $A_{ij}$ is in first row, $j^{\rm th}$ column.

We name the entries of $A_{ij}$: $A_{ij}=\{b_{rk}\}$. Express $b_{rk}$ in terms of the entries of $A$ (here $i=1$).

Question: What happens to the row numbers? Row $r$ of $A$ becomes row $r-1$ of $A_{ij}$:

$$b_{(r-1)*}=a_{r*}$$

Question: What happens to the column numbers? Column $k$ of $A$ stays in place if $k<j$ and shifts left by one if $k>j$:

$b_{*k} = a_{*k}$ if $k < j$

$b_{*(k-1)} = a_{*k}$ if $k > j$

So

$$a_{rk} = \left\{ \begin{array}{} b_{(r-1)k} &; k < j \\ b_{(r-1)(k-1)} &; k > j \end{array} \right.$$

Use it.

$${\det} _{r-1}A_{ij} \stackrel{ {\rm def} }{=} \sum_{k \neq j}(-1)^?a_{rk} \det (A_{ij})_{rk}.$$

Expand along row $r-1$ of $A_{ij}$, which is row $r$ of $A$.

Here, $a_{rk}$ comes with $ \det (A_{ij})_{rk}$. The only thing to find is the exponent of the sign: (row #) + (column #) of $a_{rk}$ in $A_{ij}$,

$$= \left\{ \begin{array}{} r-1+k &; k < j \\ r-1+k-1 &; k > j \end{array} \right.$$

So

$$ {\det} _{r-1}A_{ij} = \sum_{k=1}^{j-1}(-1)^{r+k-1}a_{rk} \det (A_{ij})_{rk} + \sum_{k=j+1}^n (-1)^{r+k-2}a_{rk} \det (A_{ij})_{rk}$$

Substitute

$$\begin{array}{} {\det} _1A &= \sum_{j=1}^n (-1)^{1+j}a_{1j}(\sum_{k=1}^{j-1}(-1)^{r+k-1}a_{rk}{\rm det}(A_{1j})_{rk} + \sum_{k=j+1}^n (-1)^{r+k-2}a_{rk}{\rm det}(A_{1j})_{rk}) \\ &= \sum_{k<j} (-1)^{1+j}a_{1j}(-1)^{r+k-1}a_{rk}{\rm det}(A_{1j})_{rk} + \sum_{k>j}(-1)^{1+j}a_{1j}(-1)^{r+k-2}a_{rk}{\rm det}(A_{1j})_{rk} \\ &= \sum_{k<j}(-1)^{r+k+j}a_{1j}a_{rk} \det (A_{1j})_{rk} + \sum_{k>j}(-1)^{r+k+j-1}a_{1j}a_{rk} \det (A_{1j})_{rk} \end{array}$$ (1)

Alternatively, expand $A$ along another row. Choose $r \neq 1$.

$$ {\det} _rA = \sum_{j=1}^n (-1)^{r+j}a_{rj} \det A_{rj}.$$

Expand these along first row.

We denote the entries of $A_{rj}$ by $\{c_{ik}\}$. Review: the entry $a_{ik}$ of $A$, with $i \neq r$ and $k \neq j$, appears in $A_{rj}$ as

$$a_{ik} = \left\{ \begin{array}{} c_{ik} &; i<r,k<j \\ c_{i,k-1} &; i<r, k>j \\ c_{i-1,k} &; i > r, k < j \\ c_{i-1,k-1} &; i>r, k>j \end{array} \right.$$

Again, $$ {\det} _1A_{rj} = \sum_{k \neq j} (-1)^{??} a_{1k} \det (A_{rj})_{1k}.$$

Find the sign,

$$=\sum_{k<j}(-1)^{1+k}a_{1k} \det (A_{rj})_{1k} + \sum_{k>j}(-1)^{1+k-1}a_{1k} \det (A_{rj})_{1k}$$

First term is first part of the row and second term is second part of row.

Substitute:

$$\begin{array}{} {\det} _rA &= \sum_{j=1}^n(-1)^{r+j}a_{rj}( \sum_{k<j}(-1)^{1+k}a_{1k}{\rm det}(A_{rj})_{1k} + \sum_{k>j}(-1)^{1+k-1}a_{1k}{\rm det}(A_{rj})_{1k} ) \\ &= \sum_{k<j}(-1)^{r+j}a_{rj}(-1)^{1+k}a_{1k}{\rm det}(A_{rj})_{1k} + \sum_{k>j}(-1)^{r+j}a_{rj}(-1)^ka_{1k}{\rm det}(A_{rj})_{1k} \\ &= \sum_{k<j}(-1)^{r+j+k+1}a_{rj}a_{1k}{\rm det}(A_{rj})_{1k} + \sum_{k>j}(-1)^{r+j+k}a_{rj}a_{1k}{\rm det}(A_{rj})_{1k} \end{array}$$

This is (2). Compare to (1) $$=\sum_{k<j}(-1)^{r+k+j}a_{1j}a_{rk}{\rm det}(A_{1j})_{rk} + \sum_{k>j}(-1)^{r+k+j-1}a_{1j}a_{rk}{\rm det}(A_{1j})_{rk}.$$

The submatrices are the same, since both are $A$ with rows $1,r$ and columns $j,k$ removed:

$$(A_{rj})_{1k} = (A_{1j})_{rk}$$

The rest doesn't match. To make them equal, flip $j,k$ in (2): the sum over $k<j$ in (2) becomes the sum over $k>j$ in (1), and vice versa, with matching signs. $\blacksquare$

So, ${\det}A$ is well defined: it can be computed by expanding along any row.
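The independence of the row can also be observed experimentally. The sketch below uses hypothetical names and randomly generated integer matrices (the seed and the entry range are arbitrary choices); it expands each matrix along every row and checks that the values agree. It is a test, not a proof.

```python
import random

def minor(m, r, k):
    """A_rk: the matrix m without row r and column k (both counted from 1)."""
    return [[x for col, x in enumerate(row, start=1) if col != k]
            for row_no, row in enumerate(m, start=1) if row_no != r]

def det(m, r=1):
    """Determinant by expansion along row r; submatrices use their first row."""
    n = len(m)
    if n == 1:
        return m[0][0]
    return sum((-1) ** (r + k) * m[r - 1][k - 1] * det(minor(m, r, k))
               for k in range(1, n + 1))

random.seed(0)  # arbitrary seed, only to make the run repeatable
for n in range(2, 6):
    A = [[random.randint(-5, 5) for _ in range(n)] for _ in range(n)]
    values = {det(A, r) for r in range(1, n + 1)}
    assert len(values) == 1  # every row gives the same determinant
```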

Compare to linear functions, they have the additive property:

$$L(x+y)=L(x)+L(y).$$

i.e., a linear function preserves addition.

Meanwhile, the determinant preserves multiplication.

Theorem: $ \det (AB) = \det A \cdot \det B$.

This is the multiplicative property.

Linear operators $L \colon {\bf R} \rightarrow {\bf R}$ do not have this property.

Example: $2(xy) \neq 2x \cdot 2y$ .

Example: $f \colon {\bf R} \rightarrow {\bf R}$ that preserves multiplication:

$$f(xy)=f(x) \cdot f(y).$$

- 1. Two constant functions, $0$ and $1$.

- 2. $(xy)^2 = x^2y^2$, all powers.

Sidenote: What about converting addition into multiplication and vice versa?

- logarithm: $f(xy)=f(x)+f(y)$.

- exponential: $f(x+y)=f(x)f(y)$.

Question: Given $AB$ with $A$ or $B$ not invertible, then $AB$ isn't invertible?

Correct.

This is confirmed by the formula: $$ \det (AB) = \det A \cdot \det B = 0.$$
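The multiplicative property can be checked on concrete matrices. In this sketch the names `minor`, `det`, `matmul` and the matrices `A`, `B`, `S` are hypothetical choices made for illustration; `S` is singular because its second row is twice the first.

```python
def minor(m, r, k):
    """The matrix m without row r and column k (both counted from 1)."""
    return [[x for col, x in enumerate(row, start=1) if col != k]
            for row_no, row in enumerate(m, start=1) if row_no != r]

def det(m):
    """Determinant by expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    return sum((-1) ** (1 + k) * m[0][k - 1] * det(minor(m, 1, k))
               for k in range(1, len(m) + 1))

def matmul(a, b):
    """Product of two square matrices of the same size."""
    n = len(a)
    return [[sum(a[i][t] * b[t][j] for t in range(n)) for j in range(n)]
            for i in range(n)]

A = [[1, 2, 0], [0, 1, 3], [4, 0, 1]]
B = [[2, 0, 1], [1, 1, 0], [0, 3, 1]]
S = [[1, 2, 3], [2, 4, 6], [0, 1, 1]]   # singular

assert det(matmul(A, B)) == det(A) * det(B)
assert det(S) == 0 and det(matmul(S, B)) == 0   # the product is singular too
```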

Properties:

- 1. One row of zeroes, then $ \det A=0$. (Just expand along this row.)

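- 2. Elementary row operations change $ \det A$ only by a non-zero factor: interchanging two rows changes the sign, multiplying a row by $c \neq 0$ multiplies $ \det A$ by $c$, and adding a multiple of one row to another preserves $ \det A$.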

- 3. Two identical rows, then $ \det A=0$.

- 4. $ \det A^T = \det A$

The transpose

If $A = \{a_{ij}\}$ and $A^T = \{b_{ij}\}$, then $b_{ij}=a_{ji}$.

This is the reflection of the matrix about the main diagonal.

$$x \longleftrightarrow y.$$

It "looks like" the inverse. But in general, $A^T \neq A^{-1}$.

If $A \in {\bf M}(n,m)$, then $A^T \in {\bf M}(m,n)$.

Or as linear operators: $$A \colon {\bf R}^m \rightarrow {\bf R}^n, \quad A^T \colon {\bf R}^n \rightarrow {\bf R}^m.$$

Example: $A = \left[ \begin{array}{} 1 & 1 \\ 1 & 1 \end{array} \right]$, $A^T = \left[ \begin{array}{} 1 & 1 \\ 1 & 1 \end{array} \right] \neq A^{-1}$.

Now, $ \det A=0$, so $A$ is singular, there is no $A^{-1}$.

Also, $AA^{-1}=I$. Apply the formula.

$$ \det (AA^{-1})= \det A \det A^{-1} = \det I=1.$$

So we have:

Corollary: $$\det A^{-1} = \frac{1}{ \det A}.$$

Corollary: $\det (P^{-1}AP) = \det A$.

To prove, compute:

$$\begin{array}{} \det (P^{-1}AP) &= \det(P^{-1}) \cdot \det A \cdot \det P \\ &= \frac{1}{ \det P} \cdot {\rm det \hspace{3pt}}A \cdot \det P \\ &= \det A. \end{array}$$ $\blacksquare$
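Both corollaries can be illustrated in dimension 2 with exact arithmetic. This is a sketch with hypothetical names; the explicit formula for the inverse of a $2\times 2$ matrix used in `inverse2` is an assumption brought in from outside these notes.

```python
from fractions import Fraction

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def matmul2(x, y):
    """Product of two 2x2 matrices."""
    return [[sum(x[i][t] * y[t][j] for t in range(2)) for j in range(2)]
            for i in range(2)]

def inverse2(m):
    """Inverse of an invertible 2x2 matrix (standard formula, assumed here)."""
    d = Fraction(det2(m))
    (a, b), (c, e) = m
    return [[e / d, -b / d], [-c / d, a / d]]

A = [[2, 1], [5, 3]]
P = [[1, 2], [3, 4]]      # det P = -2, so P is invertible
P_inv = inverse2(P)

assert matmul2(P, P_inv) == [[1, 0], [0, 1]]
assert det2(P_inv) == 1 / Fraction(det2(P))               # det of the inverse
assert det2(matmul2(matmul2(P_inv, A), P)) == det2(A)     # change of basis
```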

Why is this statement important? If $P$ is the change of basis matrix, then $P^{-1}AP$ is the matrix of $A$ in the new basis. So, $ \det A$ is independent of the basis.

We knew a small part of that: singularity vs. invertibility ($ \det =0$ vs $ \det \neq 0$)

Corollary: $ \det A$ can be computed by expanding along any column $k$ (apply the row expansion to $A^T$ and use $ \det A^T= \det A$): $$ \det A = \sum_{r=1}^n(-1)^{r+k}a_{rk} \det A_{rk}$$
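The column expansion can be compared with the row expansion on an example. This is a sketch with hypothetical names `minor`, `det_row`, `det_col`; the smaller determinants inside `det_col` are computed by first-row expansion.

```python
def minor(m, r, k):
    """A_rk: the matrix m without row r and column k (both counted from 1)."""
    return [[x for col, x in enumerate(row, start=1) if col != k]
            for row_no, row in enumerate(m, start=1) if row_no != r]

def det_row(m, r=1):
    """Determinant by expansion along row r."""
    n = len(m)
    if n == 1:
        return m[0][0]
    return sum((-1) ** (r + k) * m[r - 1][k - 1] * det_row(minor(m, r, k))
               for k in range(1, n + 1))

def det_col(m, k=1):
    """Determinant by expansion along column k."""
    n = len(m)
    if n == 1:
        return m[0][0]
    return sum((-1) ** (r + k) * m[r - 1][k - 1] * det_row(minor(m, r, k))
               for r in range(1, n + 1))

M = [[1, -1, 2],
     [2,  0, -2],
     [3,  2, 1]]

assert all(det_col(M, k) == det_row(M) for k in (1, 2, 3))
```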

Theorem: (Summary) $A$ is a linear operator: ${\bf R}^n \rightarrow {\bf R}^n$. Then the following conditions are equivalent:

- 1. $A$ is non-singular as a matrix;

- 2. $A$ is invertible as a function;

- 3. $ \det A \neq 0$;

- 4. ${\rm rank \hspace{3pt}} A = n$;

- 5. $A$ can be reduced to $I_n$ by elementary row operations;

- 6. For any $b \in {\bf R}^n$ there is $v \in {\bf R}^n$ such that $b = A(v)$, and such a $v$ is unique. ($v=A^{-1}(b)$)