
Calculus I, the discrete version

Contents

- 1 Discrete functions

- 2 The integral

- 3 Properties of the integral

- 4 The differential

- 5 Algebraic properties of the differential

- 6 Computations of differentials

- 7 The Fundamental Theorem of Calculus

- 8 The derivative

- 9 Algebraic properties of the derivative

- 10 Global maxima and minima

- 11 The Mean Value Theorem

- 12 Antiderivatives and the integral

- 13 Concavity and the second derivative

- 14 Newton's Laws

Discrete functions

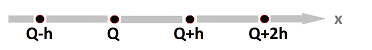

We divide the $x$-axis (i.e., the real line ${\bf R}$) into discrete pieces.

We start by dividing the $x$-axis into intervals of equal length $h>0$ starting from some location $Q$:

This gives two types of pieces:

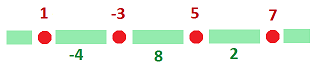

- the nodes $x=...,Q-2h,Q-h,Q,Q+h,Q+2h,Q+3h, ...$; and

- the edges $[x,x+h]=...,[Q-2h,Q-h],[Q-h,Q],[Q,Q+h],[Q+h,Q+2h],...$.

The members of the two types will not be intermixed in computations.

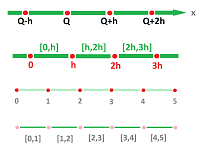

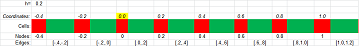

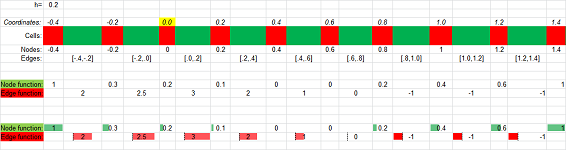

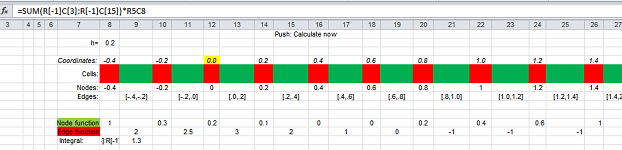

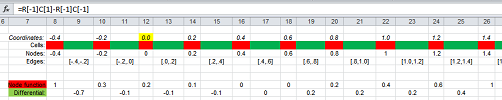

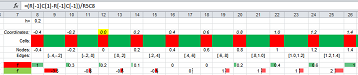

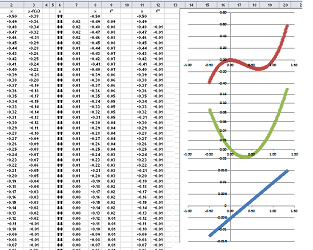

Specific representations can also be provided with a spreadsheet:

You can see how every other cell is square and every other is stretched horizontally to emphasize the different nature of these cells: nodes and edges.

For the sake of simplicity, below we illustrate mostly the case of $$h=1 \text{ and } Q=0.$$ In that case, we have

- nodes $A=...,-2,-1,0,1,2,3, ...$; and

- edges $[A,B]=...,[-2,-1],[-1,0],[0,1],[1,2],...$.
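The partition can be sketched in a few lines of Python. This is only an illustration: the helper names `nodes` and `edges` are not from the text, and an edge $[x,x+h]$ is stored as the pair `(x, x + h)`.

```python
def nodes(Q, h, lo, hi):
    """Nodes Q + i*h for i = lo, ..., hi (both ends included)."""
    return [Q + i * h for i in range(lo, hi + 1)]

def edges(node_list):
    """Edges [x, x+h], stored as pairs of consecutive nodes."""
    return list(zip(node_list, node_list[1:]))

ns = nodes(Q=0, h=1, lo=-2, hi=3)
es = edges(ns)
print(ns)  # [-2, -1, 0, 1, 2, 3]
print(es)  # [(-2, -1), (-1, 0), (0, 1), (1, 2), (2, 3)]
```

Keeping nodes as numbers and edges as pairs makes it hard to intermix the two types by accident.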

By interval we mean only one with nodes as its end-points.

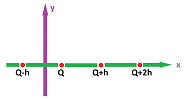

Meanwhile, the $y$-axis is just the reals:

Now, the main objects in our study will be these two types of discrete functions:

- node functions with nodes as inputs; and

- edge functions with edges as inputs.

The outputs are real numbers.

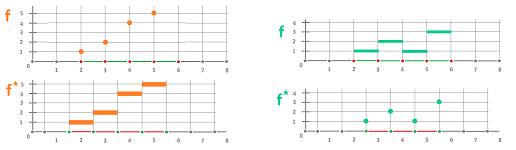

We use arrows to picture these functions as correspondences:

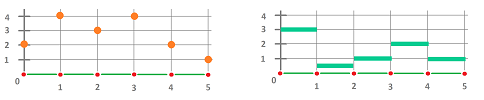

Here we have two:

- a node function $f: 0\mapsto 2,\ 1\mapsto 4,\ 2\mapsto 3,\ ...$; and

- an edge function $s: [0,1]\mapsto 3,\ [1,2]\mapsto .5,\ [2,3]\mapsto 1,\ ...$.

A more compact way to visualize is this:

We can also list the values of the two discrete functions:

- a node function $f$ with $f(0)=2,\ f(1)=4,\ f(2)=3,\ ...$; and

- an edge function $s$ with $s\Big([0,1] \Big)=3,\ s\Big([1,2] \Big)=.5,\ s\Big([2,3] \Big)=1,\ ...$.

We also use letters to label the pieces. Each is then assigned two symbols: one is its name (a letter) and the other is the value of the discrete function at that location (a number):

Here we list the values again:

- $f(A)=2,\ f(B)=4,\ f(C)=3,\ ...$;

- $s([A,B])=3,\ s([B,C])=.5,\ s([C,D])=1,\ ...$.
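One possible way to hold these two functions in code is as lookup tables. The sketch below assumes Python dictionaries, with an edge $[A,B]$ stored as the pair `(A, B)`; only the values listed above are included.

```python
# Node function f: nodes -> reals (values taken from the text).
f = {0: 2, 1: 4, 2: 3}

# Edge function s: edges -> reals; the edge [A, B] is the key (A, B).
s = {(0, 1): 3, (1, 2): 0.5, (2, 3): 1}

print(f[1])         # 4
print(s[(1, 2)])    # 0.5
print((0, 1) in f)  # False: an edge is not a valid input of a node function
```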

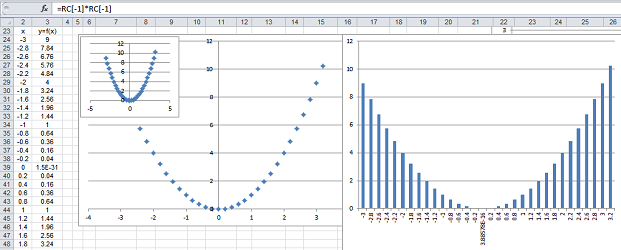

Discrete functions can be represented by tables (aka Spreadsheets):

Then the data can be visualized with colors, bars, icons, etc. However, the most common way to visualize functions is via their graphs. What is a graph? Given a function $f$, it is a collection of points on the $xy$-plane so that we always have $y=f(x)$.

For a node function, $x$ is a node, a number, and $y=f(x)$ is also a number. Together, they produce $(x,y)$, a point on the $xy$-plane (with the $x$-axis split into cells as shown above).

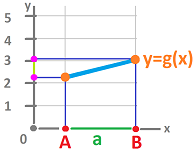

For an edge function, $[A,B]$ is an edge, an interval in the $x$-axis, and $y=g([A,B])$ is a number. Together, they produce a collection of points on the $xy$-plane, namely $(x,y)$ for every $x$ in $[A,B]$. The result is a horizontal segment.

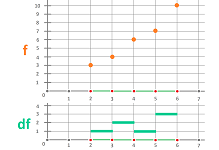

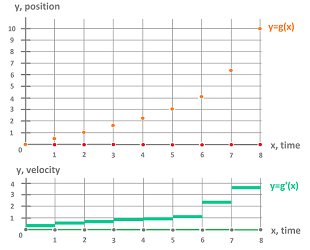

Spreadsheets have various ways to visualize functions. To underscore the different nature of these two kinds of functions, the graph of the node function is shown with dots and that of the edge function with vertical bars:

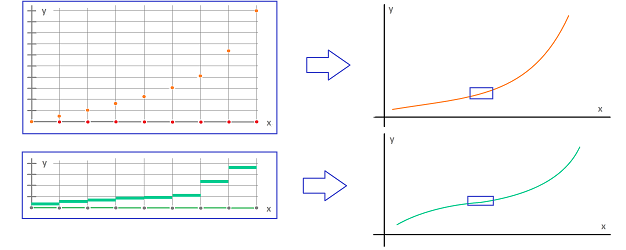

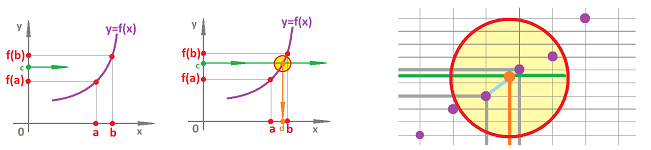

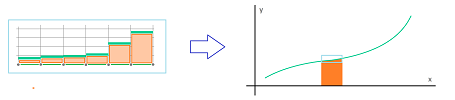

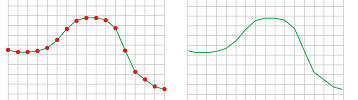

Even though these functions often consist of unrelated pieces, it is possible that we can see a continuous curve if we zoom out:

If we zoom in, we can fill in the gaps...

Theorem (Intermediate Value Theorem). Given a node function $f$, if a real number $c$ satisfies $$f(a) \le c \le f(b)$$ for some nodes $a,b$, then there is an edge $[A,A']$ located between the nodes $a,b$ such that $$f(A)\le c\le f(A'),$$ or $$f(A')\le c\le f(A).$$
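The theorem is constructive: walking along the edges from $a$ to $b$ finds such an edge. Below is a minimal sketch under the assumptions $a<b$ and $h=1$ by default; the function name `crossing_edge` and the sample node function are illustrative only.

```python
def crossing_edge(f, a, b, c, h=1):
    """Return an edge (A, A+h) between nodes a and b with c between f(A) and f(A+h).

    Assumes a < b, f is defined at a, a+h, ..., b, and f(a) <= c <= f(b).
    """
    x = a
    while x < b:
        lo, hi = sorted((f(x), f(x + h)))
        if lo <= c <= hi:
            return (x, x + h)
        x += h
    return None  # not reached when f(a) <= c <= f(b)

f = lambda n: n * n - 4 * n        # sample node function: 0, -3, -4, -3, 0, 5, 12
print(crossing_edge(f, 0, 6, 5))   # (4, 5)
```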

Both kinds of discrete function often come from real-valued functions given by formulas, such as $F(x)=x^2$. We simply use the same formula and only the domain is changed: nodes or edges. For example, the function $y=F(x)=x^2$ ($x$ is a real number) produces these two:

- the node function $f$ given by $f( x ):=x^2$ ($x$ is a node), and

- the edge function $g$ given by $g\big( [x,x+h] \big):=x^2$ ($x$ is a node).

The construction is called sampling. The sampled function reappears if we zoom out on the graph of either of these discrete functions.
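Sampling can be sketched as follows. The helper names `sample_node` and `sample_edge` are hypothetical, and the edge function uses the value at the left end-point, as in the formula for $g$ above.

```python
def sample_node(F):
    """Node function: f(x) = F(x) for a node x."""
    return lambda x: F(x)

def sample_edge(F, h):
    """Edge function: g([x, x+h]) = F(x), the value at the left end-point."""
    def g(edge):
        x, x_next = edge
        assert x_next == x + h, "not an edge of the partition"
        return F(x)
    return g

F = lambda x: x ** 2
f = sample_node(F)
g = sample_edge(F, h=1)
print(f(3))       # 9
print(g((3, 4)))  # 9
```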

Let's consider a special edge function.

Definition. The standard differential, denoted by $dx$, is the edge function equal to $h$ on every edge, i.e., for every node $x$ we have: $$dx\big( [x,x+h] \big)=h.$$

We can use the standard differential to construct an edge function from any node function by simple multiplication, as follows.

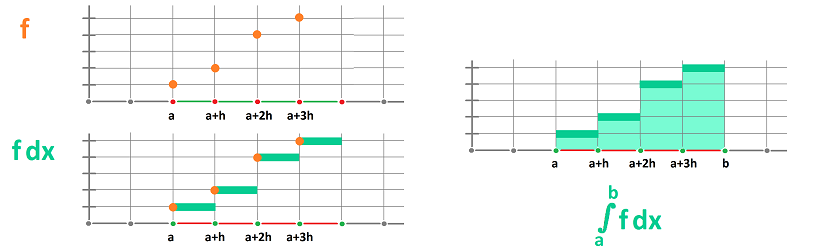

Proposition. Given a node function $f$, the following is an edge function: $$g\big( [x,x+h] \big)=f(x)\cdot dx\big( [x,x+h] \big)=f(x) \cdot h .$$

The abbreviated notation for this edge function is $$g=f\,dx.$$

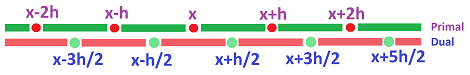

We can convert edge functions to node functions and vice versa by switching between nodes and edges. To set this up we use the following match between the cells.

Thus there is a new node for each edge in the domain and a new edge for each node. Together, these new nodes and edges form a new copy of the domain.

This is how the cells of the two are matched:

- an edge $a=[x,x+h]$ corresponds to the node $a^\star=x+h/2$; and

- a node $x$ corresponds to the edge $x^\star=[x-h/2,x+h/2]$.

Definition. The original domain is called primal and the new is called dual.

The relation can be reversed:

- a dual edge $a=[x-h/2,x+h/2]$ corresponds to the primal node $a^\star=x$; and

- a dual node $x+h/2$ corresponds to the primal edge $(x+h/2)^\star=[x,x+h]$.

Definition. For both primal and dual and for both nodes and edges, $a$ and $a^\star$ are called dual to each other.

Now we turn to the duality of functions.

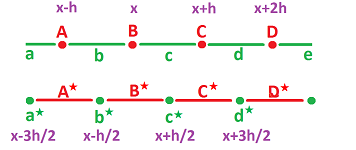

They can be seen to come in pairs, primal and dual:

- if $f$ is a primal node function, then $f^\star\big( [x-h/2,x+h/2] \big):=f(x)$ is a dual edge function;

- if $g$ is a primal edge function, then $g^\star(x+h/2):=g\big( [x,x+h] \big)$ is a dual node function.

The following is the general approach.

Proposition. Given a node/edge function $s$, the following is an edge/node function: $$s^\star (a):=s(a^\star).$$

Then, $s^\star$ is called the dual function of $s$.
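The matching of cells and the formula $s^\star(a)=s(a^\star)$ can be sketched as below. The names `star` and `dual` are illustrative; edges are stored as pairs, and exact fractions are used so that the half-steps $h/2$ carry no rounding error.

```python
from fractions import Fraction

def star(cell, h):
    """Dual cell: edge [x, x+h] -> node x + h/2; node x -> edge [x - h/2, x + h/2]."""
    if isinstance(cell, tuple):          # an edge, stored as (left, right)
        return cell[0] + h / 2
    return (cell - h / 2, cell + h / 2)  # a node

def dual(s, h):
    """Dual function: s*(a) = s(a*)."""
    return lambda a: s(star(a, h))

h = Fraction(1)
f = lambda x: x ** 2                     # primal node function
f_star = dual(f, h)                      # dual edge function
edge = (Fraction(3, 2), Fraction(5, 2))  # a dual edge
print(star(edge, h))                     # 2
print(f_star(edge))                      # 4
```

Applying `star` twice returns the original cell, which is the sense in which the relation can be reversed.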

Two dual pairs are illustrated below:

If we zoom out, the functions dual to each other will look identical!

The integral

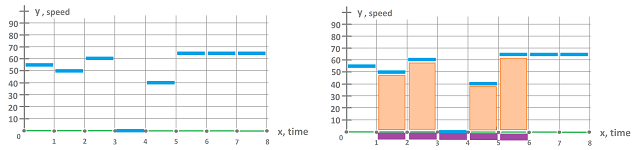

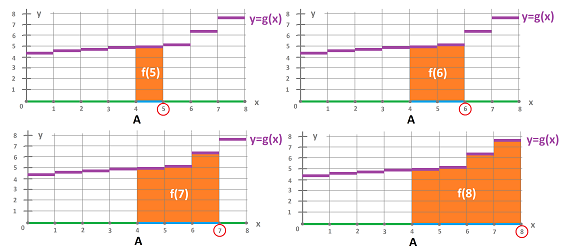

Let's consider a simple situation. A person drove for $8$ hours. What happened during each hour is known; he covered the following distance: $$\begin{array}{l|c|c|c|c|c|c|c|c} \text{hour} &1 &2 &3 &4 &5 &6 &7 &8\\ \hline \text{distance} &55&50&60&0 &40&65&65&65 \end{array}$$

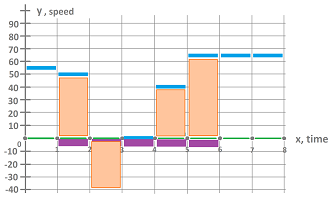

We represent the distances as an edge function $s$:

The values of this function $s$ are known for the single edges: $$s\Big( [0,1]\Big)=55,\ s\Big([1,2]\Big)=50,\ s\Big([2,3]\Big)=60,\ ...$$

Now, what is the distance covered by the driver during hours $2$ through $5$, i.e., between time $1$ and time $5$?

We represent this time interval as a “chain” of edges, $$a=[1,5]=[1,2]\cup [2,3]\cup [3,4]\cup [4,5].$$ Then the distance is simply the sum: $$\begin{array}{lllll} \text{distance}&=s\Big( [1,2] \Big)+s\Big( [2,3] \Big)+s\Big( [3,4] \Big)+s\Big( [4,5] \Big)\\ &=50+60+0+40\\ &=150. \end{array}$$ We think of the answer as the displacement of an object that moves with speed given by $s$ over a period of time represented by $a$.

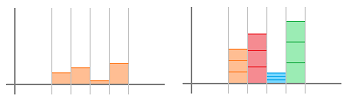

Definition. Given two nodes $A,B$ with $A<B$, the integral of an edge function $s$ over the interval $[A,B]$ is defined to be the sum of its values over the interval: $$\displaystyle\int_A^B s:= s\Big( [A,A_1] \Big)+...+s\Big( [A_n,B] \Big),$$ where $A_1,...,A_n$ are the consecutive nodes between $A$ and $B$.

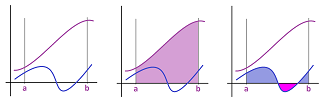

As we can see, when $h=1$, the integral of $s$ is the area under the graph of $s$ that lies over $a$. What if $h \ne 1$? We can still interpret integrals as areas: $$\int_a^bf\, dx =\text{area under the graph of } f.$$

In fact, zooming out suggests that we can compute the areas of more complex, curved regions -- even if they are still made of nothing but rectangles:

The operation of finding $\int_A^B s$ from $s$ is called integration.

Now, even if the person didn't spend any time driving, the displacement still makes sense. It's zero! We then extend the meaning of the integral to include intervals of zero length.

Definition. Given a node $A$, the integral of an edge function $s$ over the interval $[A,A]$ is defined to be equal to zero: $$\displaystyle\int_A^A s:= 0.$$

Furthermore, if the person drove for an hour in the opposite direction and covered $40$ miles, the data becomes: $$\begin{array}{l|c|c|c|c|c|c|c|c} \text{hour} &1 &2 &3 &4 &5 &6 &7 &8\\ \hline \text{distance} &55&-40&60&0 &40&65&65&65 \end{array}$$

Then, we still find the displacement by adding these values: $$\begin{array}{lllll} \text{displacement}&=s\Big( [1,2] \Big)+s\Big( [2,3] \Big)+s\Big( [3,4] \Big)+s\Big( [4,5] \Big)\\ &=(-40)+60+0+40\\ &=60. \end{array}$$

To capitalize on this idea even further, we extend the meaning of the integral to include “reversed” intervals.

Definition. Given two nodes $A,B$ with $A<B$, the integral of an edge function $s$ over the interval $[B,A]$ is defined to be the negative sum of its values over the interval: $$\displaystyle\int_B^A s:= -\Big( s\big( [A,A_1] \big)+...+s\big( [A_n,B] \big) \Big),$$ where $A_1,...,A_n$ are the consecutive nodes between $A$ and $B$.

In other words, we have: $$\int\limits_B^A s := -\int\limits_A^B s.$$ It is as if time goes backward, the direction of motion is the opposite, and all gains are reversed...
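The three definitions (forward, zero-length, and reversed intervals) fit into one short function. This is a sketch for illustration: the name `integral` and the list `distances` are not from the text, and the data is the first table of hourly distances, with hour $k$ stored as the edge $[k-1,k]$.

```python
def integral(s, A, B, h=1):
    """Integral of an edge function s from node A to node B.

    s maps an edge (x, x+h) to a number. Covers the three definitions:
    A < B (sum over the edges), A == B (zero), A > B (negated sum).
    """
    if A > B:
        return -integral(s, B, A, h)
    total, x = 0, A
    while x < B:
        total += s((x, x + h))
        x += h
    return total

# Hourly distances from the first table: hour k is the edge [k-1, k].
distances = [55, 50, 60, 0, 40, 65, 65, 65]
s = lambda edge: distances[edge[0]]

print(integral(s, 1, 5))  # 150
print(integral(s, 3, 3))  # 0
print(integral(s, 5, 1))  # -150
```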

There are no integrals of node functions. However, any node function $f$ can be integrated indirectly via $f\, dx$.

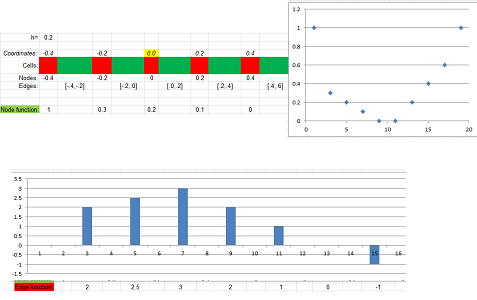

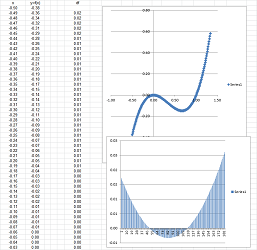

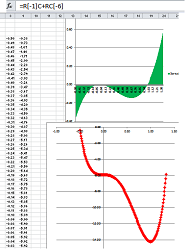

Finding an integral with a spreadsheet is shown below (see Spreadsheets):

Properties of the integral

The main property of the integral follows from the definition.

Theorem (Additivity). Suppose $s$ is an edge function. For any nodes $a,b,c$ with $a<c$, we have: $$\int\limits_a^b s +\int\limits_b^c s = \int\limits_a^c s.$$

Proof. Suppose first that $a<b<c$. Applying the definition to the intervals $[a,b]$ and $[b,c]$ and then to $[a,c]$, we have: $$\begin{array}{ll} \int\limits_{a}^{b} s +\int\limits_b^c s&= s\big([a,a+h]\big)+s\big([a+h,a+2h]\big)+...+s\big([b-h,b]\big) \\ &+s\big([b,b+h]\big)+s\big([b+h,b+2h]\big)+...+s\big([c-h,c]\big)\\ &= s\big([a,a+h]\big)+s\big([a+h,a+2h]\big)+...+s\big([c-h,c]\big)\\ &=\int\limits_{a}^{c} s. \end{array}$$ The remaining positions of $b$ reduce to this case by the definition of the integral over zero-length and reversed intervals. $\blacksquare$
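Additivity is easy to spot-check numerically. The sketch below assumes $h=1$ and stores an edge function as a list with `values[i]` standing for $s([i,i+1])$; the sample data reuses the hourly distances, and $b$ is allowed to fall outside $[a,c]$ because reversed intervals are integrated with a minus sign.

```python
# Edge function with h = 1 stored as a list: values[i] = s([i, i+1]).
values = [55, 50, 60, 0, 40, 65, 65, 65]

def integral(a, b):
    """Signed integral of s from node a to node b (slicing picks the edges)."""
    return sum(values[a:b]) if a <= b else -sum(values[b:a])

a, c = 1, 6
for b in range(0, 9):  # b may lie inside or outside [a, c]
    assert integral(a, b) + integral(b, c) == integral(a, c)

print(integral(1, 3), integral(3, 6), integral(1, 6))  # 110 105 215
```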

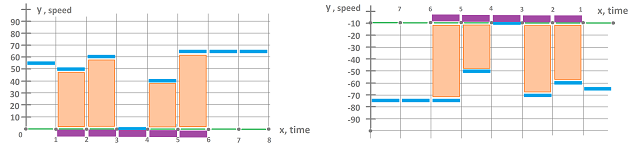

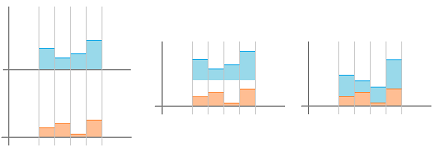

The area interpretation of additivity is illustrated below:

Here we have simply, $$\begin{array}{ccccc} \int_{a}^{b} s &+& \int_{b}^{c} s &=& \int_{a}^{c} s,\\ \text{orange}&+&\text{green}&=&\text{blue}. \end{array}$$

With the motion interpretation of integral, we have: $$\begin{array}{lll} &\text{distance covered during the 1st hour }\\ +&\text{distance covered during the 2nd hour }\\ =&\text{distance during the two hours}. \end{array}$$

Theorem (Comparison Rule). (1) Suppose $s$ is an edge function. For any nodes $a,b$ with $a<b$, if $s\big([x,x+h]\big) \geq 0$ on $[a,b]$ then $$ \int_{a}^{b} s \geq 0. $$ (2) Suppose $s$ and $t$ are edge functions. For any nodes $a,b$ with $a<b$, if $s\big([x,x+h]\big) \geq t\big([x,x+h]\big)$ on $[a,b]$ then $$\int_{a}^{b} s \geq \int_{a}^{b} t.$$

Proof. (2) Applying the definition to the function $s\big([x,x+h]\big)$ and then to $t\big([x,x+h]\big)$, we have: $$\begin{array}{ll} \int\limits_{a}^{b} s &= s\big([a,a+h]\big)+s\big([a+h,a+2h]\big)+...+s\big([b-h,b]\big)\\ &\ge t\big([a,a+h]\big)+t\big([a+h,a+2h]\big)+...+t\big([b-h,b]\big)\\ &=\int\limits_{a}^{b} t. \end{array}$$ Part (1) follows from part (2) with $t=0$. $\blacksquare$

The larger function contains a larger area under its graph:

In terms of motion: faster covers longer distance.

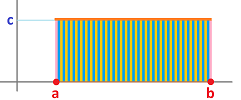

Theorem (Constant Function Rule). For any nodes $a,b$ and any real $c$, we have: $$\int\limits_{a}^{b} c\, dx = c ( b-a ). $$

Proof. Suppose there are $m$ edges between $a$ and $b$ and these are the nodes: $$a,a+h,...,b-h,b.$$ Then $$m=\frac{b-a}{h}.$$ Therefore, from the definition, we have: $$\int\limits_{a}^{b} c\,dx = \underbrace{ch+ch+...+ch}_{m\text{ times}}= chm=c ( b-a ). $$ $\blacksquare$
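The count $m=(b-a)/h$ in the proof can be checked with a step $h\ne 1$. The sketch below integrates the edge function $f\,dx$ directly; the name `integral_f_dx` and the sample numbers are assumptions made for the illustration, and exact fractions avoid rounding in the node positions.

```python
from fractions import Fraction

def integral_f_dx(f, a, b, h):
    """Integral of the edge function f dx from node a to node b (a <= b)."""
    total, x = 0, a
    while x < b:
        total += f(x) * h   # value of f dx on the edge [x, x+h]
        x += h
    return total

h = Fraction(1, 4)
c = 5
a, b = Fraction(1), Fraction(3)
print(integral_f_dx(lambda x: c, a, b, h))  # 10
```

Here there are $m=8$ edges, each contributing $ch=5/4$, for a total of $c(b-a)=10$.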

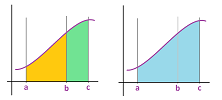

Once we zoom out, we recognize that the integral represents the area of the rectangle with width $b-a$ and height $c$:

What if we know only estimates of the function? Suppose the graph of an edge function $s$ lies between two horizontal lines $y=m$ and $y=M$, with $m<M$. Then what can we say about the integral of $s$? We would like to find an estimate for the area of the orange region in terms of $a$, $b$, $m$, $M$. Below, the yellow region on the left is smaller than the orange region. On the right, the green region is larger.

Furthermore, these two regions are rectangles and their areas are easy to compute. We use the following inequalities: $$\text{the area of the smaller rectangle}\leq \text{the area under the graph} \leq \text{the area of the larger rectangle}.$$ For the general case, we have the following.

Theorem (Estimate Rule). Suppose $s$ is an edge function. For any nodes $a,b$ with $a<b$, if $$m \leq s\big([x,x+h]\big) \leq M,$$ for all $x$ with $a\le x < x+h \le b$, then $$m(b-a)\leq \int_{a}^{b} s \leq M(b-a).$$

Proof. For either of the two inequalities, we apply the Constant Function Rule and the Comparison Rule (2). $\blacksquare$

Finally, these are the algebraic properties.

Theorem (Constant Multiple Rule). Suppose $s$ is an edge function. For any nodes $a,b$ and any real $c$, we have: $$ \int_{a}^{b} c\, s = c \, \int_{a}^{b} s.$$

Proof. Applying the definition to the function $s\big([x,x+h]\big)$, we have: $$\begin{array}{ll} \int\limits_{a}^{b} c \cdot s &= c \cdot s\big([a,a+h]\big)+c \cdot s\big([a+h,a+2h]\big)+...+c \cdot s\big([b-h,b]\big)\\ &=c \cdot \Big( s\big([a,a+h]\big)+s\big([a+h,a+2h]\big)+...+s\big([b-h,b]\big) \Big)\\ &=c \, \int_{a}^{b} s. \end{array}$$ $\blacksquare$

The picture below illustrates the proof:

Theorem (Sum Rule). Suppose $s$ and $t$ are edge functions. For any nodes $a,b$, we have: $$\int_{a}^{b} \left( s + t \right) = \int_{a}^{b} s + \int_{a}^{b} t. $$

Proof. Applying the definition to the function $s\big([x,x+h]\big)+t\big([x,x+h]\big)$, we have: $$\begin{array}{ll} \int\limits_{a}^{b} \left( s + t \right) &= \Big( s\big([a,a+h]\big) + t\big([a,a+h]\big) \Big)+\Big( s\big([a+h,a+2h]\big) + t\big([a+h,a+2h]\big) \Big)+...\\ &...+\Big( s\big([b-h,b]\big) + t\big([b-h,b]\big) \Big)\\ &=\Big( s\big([a,a+h]\big)+s\big([a+h,a+2h]\big)+...+s\big([b-h,b]\big) \Big)\\ & +\Big( t\big([a,a+h]\big)+t\big([a+h,a+2h]\big)+...+t\big([b-h,b]\big) \Big)\\ &=\int_{a}^{b} s + \int_{a}^{b} t. \end{array}$$ $\blacksquare$

The picture below illustrates the proof:

The differential

We next study the change of node functions.

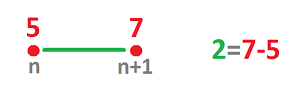

Let's consider an example of motion. Suppose a node function $p$ gives the position of a person and suppose

- at time $n$ hours we are at the $5$ mile mark: $p(n)=5$, and then

- at time $n+1$ hours we are at the $7$ mile mark: $p(n+1)=7$.

We don't know what exactly has happened during this hour but the simplest assumption would be that we have been walking at a constant speed of $2$ miles per hour.

Now, instead of our velocity function $v$ assigning this value to each instant of time during this period, it is assigned to the whole interval: $$v\Big( [n,n+1] \Big)=2.$$ This way, the elements of the domain of the velocity function are the edges and the resulting function is an edge function.

Node functions are defined on the nodes, such as: $$A=...,-1,0,1,2,3,4, ....$$ They change abruptly and, consequently, the change over every edge $[A,B]$ is simply the difference of values at the end-points, from right to left: $$f(B)-f(A).$$

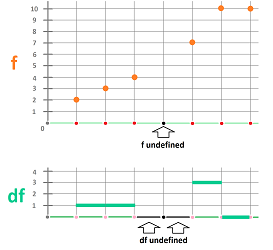

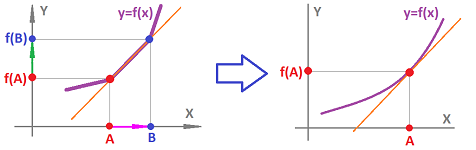

The output of this simple computation is assigned to the edge $[A,B]$: $$[A,B] \mapsto f(B)-f(A).$$ The relation between a node function and its change is illustrated below:

Definition. The differential of a node function $f$ at edge $[A,B]$ is defined to be the number $$df \big([A,B] \big):=f(B)-f(A).$$

Proposition. The differential of a node function $f$ at edge $[A,B]$ is defined if and only if $f$ is defined at both of its end-points $A,B$.

Thus, we have:

- $f$ is defined on the nodes, and

- $df$ is defined on the edges.

The latter is a new edge function!

Definition. The differential of a node function $f$ is an edge function denoted by $df$ given by its values on each edge: $$df \big([x,x+h] \big):=f(x+h)-f(x).$$

This is how the definition works:

Proposition. If a node function is undefined at node $c$, then its differential is undefined at either of the edges $[c-h,c]$ and $[c,c+h]$.

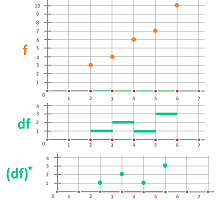

The differentials can be computed for node functions given by tables, see Spreadsheets. This is how a spreadsheet computes the whole differential:

Example. When the node functions are represented by formulas, the computations are straightforward ($h=1$): $$\begin{array}{llllll} (1)&f(n)=3n^2+1\ &\Longrightarrow\ &df\big( [n,n+1] \big)=(3(n+1)^2+1)-(3n^2+1)=6n+3;\\ (2)&g(n)=\frac{1}{n}\ &\Longrightarrow\ &dg\big( [n,n+1] \big)= \frac{1}{n+1}-\frac{1}{n}=-\frac{1}{n(n+1)} \text{ for } n\ne -1,0;\\ (3)&p(n)=2^n\ &\Longrightarrow\ &dp\big( [n,n+1] \big)= 2^{n+1}-2^{n}=2^n. \end{array}$$ $\square$
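These three computations can be verified mechanically. The sketch below assumes $h=1$ and represents an edge as a pair; the helper `d` is an illustrative name for the operation $f\mapsto df$, and exact fractions are used for $1/n$.

```python
from fractions import Fraction

def d(f):
    """Differential of a node function f: the edge function df([A, B]) = f(B) - f(A)."""
    return lambda edge: f(edge[1]) - f(edge[0])

f = lambda n: 3 * n**2 + 1
g = lambda n: Fraction(1, n)
p = lambda n: 2**n

for n in range(1, 6):
    edge = (n, n + 1)
    assert d(f)(edge) == 6 * n + 3
    assert d(g)(edge) == -Fraction(1, n * (n + 1))
    assert d(p)(edge) == 2**n

print(d(f)((2, 3)))  # 15
```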

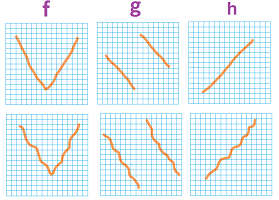

Definition. A node function $f$ is called increasing on interval $[a,b]$ if $$f(A)<f(B) \text{ for all nodes } A < B \text{ within } [a,b].$$ It is called decreasing on interval $[a,b]$ if $$f(A)>f(B) \text{ for all nodes } A < B \text{ within } [a,b].$$ The notation we use is $$f\nearrow \text{ on } [a,b],$$ and $$f\searrow \text{ on } [a,b],$$ respectively.

Of course, the function is increasing (or decreasing) on the interval when it is increasing (or decreasing) on each edge within the interval: $$f(x)<f(x+h)\quad (\text{or } f(x)>f(x+h)).$$

There are a few simple facts about the differential.

Theorem (Monotonicity). A differentiable node function is increasing (decreasing) on an interval if and only if its differential is positive (negative) on each edge in this interval; i.e., $$f\nearrow \text{ on } [a,b]\ \Longleftrightarrow\ df \big([x,x+h]\big) >0\text{ whenever } a \le x < x+h \le b;$$ and $$f\searrow \text{ on } [a,b]\ \Longleftrightarrow\ df \big([x,x+h]\big) <0\text{ whenever } a \le x < x+h \le b.$$

Proof. $$f(x+h)>f(x)\ \Longleftrightarrow\ f(x+h)-f(x)>0.$$ $\blacksquare$

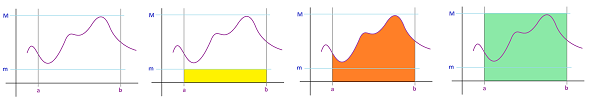

Even though the proof is obvious, zooming out shows the meaningfulness of the theorem:

By simply looking at the graph of the differential we discover how the function behaves. Meanwhile, when the function is given by a formula, we find its differential algebraically and then study the sign of this new function.

Example. We use the differentials computed above ($h=1$). $$\begin{array}{lllll} (1)&f(n)=3n^2+1\ &\Longrightarrow\ &df\big( [n,n+1] \big)=6n+3\ &\Longrightarrow\ \\ &df\big( [n,n+1] \big)<0 \text{ if } n \le -1 &\text{ and }& df\big( [n,n+1] \big)>0 \text{ if } n\ge 0 \ &\Longrightarrow\ \\ & f\searrow \text{ on } (-\infty,0] &\text{ and }& f\nearrow \text{ on } [0,\infty);\\ \\ (2)&g(n)=\frac{1}{n}\ &\Longrightarrow\ &dg\big( [n,n+1] \big)=-\frac{1}{n(n+1)}\ &\Longrightarrow\ \\ &dg\big( [n,n+1] \big)<0 \text{ if } n \le -2 &\text{ and }& dg\big( [n,n+1] \big) <0 \text{ if } n\ge 1 \ &\Longrightarrow\ \\ & g\searrow \text{ on } (-\infty,-1] &\text{ and }& g\searrow \text{ on } [1,\infty);\\ \\ (3)&p(n)=2^n\ &\Longrightarrow\ &dp\big( [n,n+1] \big)=2^n\ &\Longrightarrow \\ &dp\big( [n,n+1] \big)>0 \text{ for all } n \ &\Longrightarrow\ \\ & p\nearrow \text{ on } (-\infty,\infty). \end{array}$$ With just this data we can sketch very rough graphs of these three functions:

$\square$

Definition. A node function $f$ has a local maximum at node $c$ if there is an interval $[a,b]$ with $a<c<b$ such that $$f(A)<f(c) \text{ for any node } A\ne c \text{ within } [a,b].$$ It has a local minimum at node $c$ if there is an interval $[a,b]$ with $a<c<b$ such that $$f(A)>f(c) \text{ for any node } A\ne c \text{ within } [a,b].$$

Corollary (Extreme Values). A differentiable node function has a local maximum (minimum) at a point if and only if its differential is positive (negative) to the point's left and negative (positive) to its right; i.e., $$c \text{ is a local maximum of }f\ \Longleftrightarrow df \big([c-h,c]\big) >0 \text{ and } df \big([c,c+h]\big) <0;$$ and $$c \text{ is a local minimum of }f\ \Longleftrightarrow df \big([c-h,c]\big) <0 \text{ and } df \big([c,c+h]\big) >0.$$

In other words, $c$ is a local maximum of $f$ if and only if $f$ is increasing to the left of $c$ and decreasing to the right (and vice versa for the minimum).
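The sign test in the corollary translates directly into code. A minimal sketch, assuming $h=1$ and a node function given as a list with `values[i]` standing for $f(i)$; the function name `local_extrema` and the sample data are illustrative.

```python
def local_extrema(values):
    """Classify interior nodes of a node function given as a list (h = 1).

    values[i] = f(i). Node c is a local maximum when df([c-1, c]) > 0 and
    df([c, c+1]) < 0, and a local minimum when the signs are reversed.
    """
    df = [values[i + 1] - values[i] for i in range(len(values) - 1)]
    interior = range(1, len(values) - 1)
    maxima = [c for c in interior if df[c - 1] > 0 and df[c] < 0]
    minima = [c for c in interior if df[c - 1] < 0 and df[c] > 0]
    return maxima, minima

print(local_extrema([2, 4, 3, 1, 5, 5, 0]))  # ([1], [3])
```

Node $4$ is not reported as a maximum because the differential on $[4,5]$ is zero rather than negative.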

Both a node function and its differential are computed by a spreadsheet and shown below:

Edge functions don't have differentials. However, their dual functions do: $$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccc} \text{ node function }& \ra{\quad d\quad }& \text{ edge function } &\\ \ua{\star} & \ne & \da{\star} & \\ \text{ edge function }& \la{\quad d\quad } & \text{ node function }& \\ \end{array} $$ Note that we do not claim that making the full circle will give us the function we started with.

The standard differential $dx$ is indeed a differential.

Proposition. The standard differential $dx$ is the differential of the identity function: $$dx=d(x).$$

Proof. $$f(x)=x\ \Longrightarrow\ df\big( [x,x+h] \big)=f(x+h)-f(x)=x+h-x=h.$$ $\blacksquare$

Algebraic properties of the differential

What happens to the differential(s) as we perform algebraic operations with the function(s)?

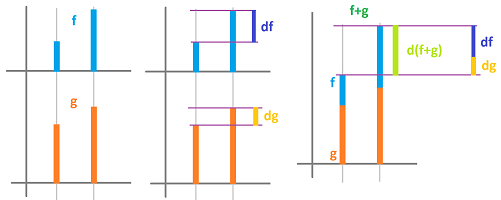

The first idea is illustrated below:

Here, the bars are stacked on top of each other, the heights are added to each other and so are the height differences.

Theorem (Sum Rule). The sum of two node functions defined on an interval is defined on the interval and its differential is equal to the sum of their differentials; i.e., for any two node functions $f,g$, we have: $$d(f + g)= df + dg.$$

Proof. Applying the definition to the function $f+g$, we have: $$\begin{array}{lll} d(f+g)\big([x,x+h]\big)&=(f+g)(x+h)-(f+g)(x)\\ &=f(x+h)+g(x+h)-f(x)-g(x)\\ &=\big( f(x+h)-f(x) \big) +\big(g(x+h)-g(x) \big)\\ &=df\big([x,x+h]\big)+dg\big([x,x+h]\big). \end{array}$$ $\blacksquare$

In terms of motion, if two runners are running away from each other starting from a common location, then the distance between them is the sum of the distances they have covered.

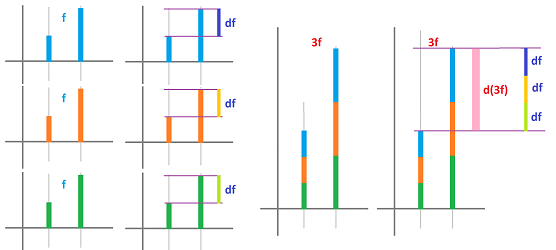

The second idea is illustrated below:

Here, if the heights triple then so do the height differences.

Theorem (Constant Multiple Rule). A multiple of a node function defined on an interval is defined on the interval and its differential is equal to the multiple of the function's differential; i.e., for any node function $f$ and any real $c$, we have: $$d(cf) = c\, df.$$

Proof. Applying the definition to the function $c\,f$, we have: $$\begin{array}{lll} d(c\cdot f)\big([x,x+h]\big)&=(c\cdot f)(x+h)-(c\cdot f)(x)\\ &=c\cdot f(x+h)-c\cdot f(x)\\ &=c\cdot \big( f(x+h)-f(x) \big)\\ &=c\cdot df\big([x,x+h]\big). \end{array}$$ $\blacksquare$

In terms of motion, if the distance is rescaled, such as from miles to kilometers, then so is the velocity -- at the same proportion.

Theorem (Constant Function Rule). A node function defined on an interval is constant on the interval if and only if its differential on each edge in this interval is zero; i.e., $$f \text{ is constant on } [a,b]\ \Longleftrightarrow df \big([x,x+h]\big) =0 \text{ whenever } a\le x < x+h\le b.$$

Proof. $$f(x)=C \text{ for all } x\ \Longleftrightarrow\ df \big([x,x+h]\big)=C-C=0.$$ $\blacksquare$

Corollary. Two node functions defined on an interval have the same differential on the interval if and only if they differ by a constant on that interval; i.e., $$df =dg \text{ on } [a,b]\ \Longleftrightarrow f=g+C \text{ for some real } C \text{ on } [a,b].$$

Proof. By the Sum Rule and the Constant Multiple Rule, we have $$df =dg \text{ on } [a,b]\ \Longleftrightarrow d(f-g)=0 \text{ on } [a,b].$$ Meanwhile, by the Constant Function Rule, we have $$d(f-g)=0 \text{ on } [a,b]\ \Longleftrightarrow f-g \text{ is constant on } [a,b].$$ $\blacksquare$

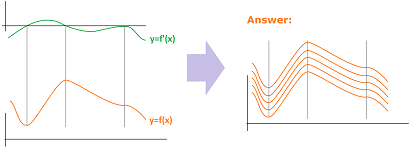

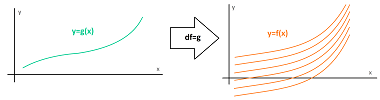

The graphs of functions that differ by a constant have exactly the same shape:

In terms of motion, two runners run while holding the two ends of a stick between them, still capable of changing their common speed.

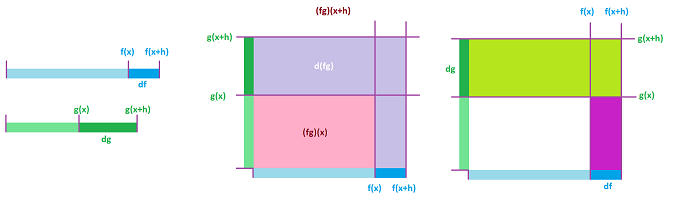

The next idea is illustrated below:

As the width and the depth are increasing, so is the area of the rectangle. But the increase of the area cannot be expressed entirely in terms of the increases of the width and depth! This increase is split into two parts corresponding to the two terms in the right-hand side of the formula below.

Theorem (Product Rule). The product of two node functions defined on an interval is defined on the interval and its differential is found as a combination of these functions and their differentials; specifically, given two node functions $f,g$, for any edge $[x,x+h]$, we have: $$d(f \cdot g)\big([x,x+h]\big) = f(x+h)dg\big([x,x+h]\big) + df\big([x,x+h] \big)g(x).$$

Proof. $$\begin{array}{lll} d(f \cdot g)\big([x,x+h]\big)&=(f \cdot g)(x+h)- (f \cdot g)(x)\\ &=f(x+h) \cdot g(x+h)- f(x) \cdot g(x)\\ &=f(x+h) \cdot g(x+h)- f(x+h) \cdot g(x) +f(x+h) \cdot g(x)- f(x) \cdot g(x)\\ &=f(x+h) \cdot (g(x+h)- g(x)) +(f(x+h) - f(x)) \cdot g(x)\\ &=f(x+h) \cdot dg\big([x,x+h]\big) + df\big([x,x+h] \big) \cdot g(x). \end{array}$$ $\blacksquare$

In terms of motion, it is as if two runners are unfurling a flag while running east and north respectively.

Theorem (Quotient Rule). The quotient of two node functions defined on an interval is defined on the interval wherever the denominator is non-zero, and its differential is found as a combination of these functions and their differentials; specifically, given two node functions $f,g$ and any edge $[x,x+h]$, we have: $$d(f/g)\big([x,x+h]\big) = \frac{df\big([x,x+h] \big)g(x) - f(x)dg\big([x,x+h] \big)}{g(x)g(x+h)},$$ provided $g(x),g(x+h) \ne 0$.
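Both rules can be spot-checked with exact arithmetic. The sketch below assumes $h=1$ and two sample node functions chosen for the illustration (with $g$ non-zero at every integer node). For the quotient it tests the identity $d(f/g)=\big(df\cdot g(x)-f(x)\cdot dg\big)/\big(g(x)g(x+h)\big)$, which follows from bringing $f(x+h)/g(x+h)-f(x)/g(x)$ to a common denominator.

```python
from fractions import Fraction

f = lambda n: n**2 + 1
g = lambda n: 2 * n + 3                   # odd, hence non-zero at every integer node
fg = lambda n: f(n) * g(n)
f_over_g = lambda n: Fraction(f(n), g(n))

def d(u, n):
    """Differential of the node function u on the edge [n, n+1]."""
    return u(n + 1) - u(n)

for n in range(-5, 6):
    # Product Rule: d(fg) = f(x+h) dg + df g(x)
    assert d(fg, n) == f(n + 1) * d(g, n) + d(f, n) * g(n)
    # Quotient Rule: d(f/g) = (df g(x) - f(x) dg) / (g(x) g(x+h))
    assert d(f_over_g, n) == Fraction(d(f, n) * g(n) - f(n) * d(g, n), g(n) * g(n + 1))

print(d(f_over_g, 0))  # 1/15
```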

The composition of two node functions is impossible because the argument of one and the value of the other won't match. Instead, we will consider the case when only one of the functions is a node function.

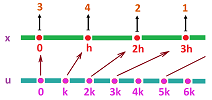

First, we assume that the node function is preceded by another function. What is that function? For the composition to make sense, it has to be defined on another domain and take the nodes of this domain to the nodes of the first. What happens is illustrated below for $Q=0$:

This is the general set-up:

- the $x$-axis has nodes $...,Q,Q+h,Q+2h,...$, and

- the $u$-axis has nodes $...,P,P+k,P+2k,...$.

Then

- $g$ takes the edges of the $u$-axis to the edges of the $x$-axis.

One can think of $g$ as a uniform re-scaling of the $u$-axis. The simplest example is a linear function. For example, consider $g(u)=mu+b,\ m\ne 0$. Then, $$g(P+nk)=m(P+nk)+b=(mP+b)+mnk.$$ Therefore, $$Q=mP+b \text{ and } mnk=nh.$$ Then, the new domain has these features: $$P=\frac{Q-b}{m}\text{ and } k=h/m.$$

Theorem (Chain Rule). The composition of a node function defined on an interval and a linear function is defined on a certain interval and its differential on each edge is equal to the node function's differential on the corresponding edge; i.e., if $f$ is a node function on the $x$-axis and $g$ is a linear function: $$g(u):=mu+b$$ on the $u$-axis that takes the edges of the $u$-axis to the edges of the $x$-axis, then the differential of the composition function, $$p(u):=(f\circ g)(u)=f(mu+b),$$ is found by $$dp\big( [u,u+k] \big)=df\big( [x,x+h] \big),$$ where $x=mu+b$.

Proof. We compute the differential, using $x=mu+b$ and $mk=h$: $$\begin{array}{lll} dp\big( [u,u+k] \big)&=p(u+k)-p(u)\\ &=f(m(u+k)+b)-f(mu+b)\\ &=f(mu+mk+b)-f(mu+b)\\ &=f(x+h)-f(x)\\ &=df\big( [x,x+h] \big). \end{array}$$ $\blacksquare$
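The key step of the proof is the relation $mk=h$. Here is a small numerical check under assumed sample values ($h=1$, $m=2$, $b=3$, $P=0$, hence $k=1/2$ and $Q=3$); none of these numbers come from the text.

```python
from fractions import Fraction

h = Fraction(1)              # step on the x-axis
m, b = Fraction(2), Fraction(3)
k = h / m                    # step on the u-axis, from mk = h

f = lambda x: x**2           # node function on the x-axis
g = lambda u: m * u + b      # linear change of variable
p = lambda u: f(g(u))        # composition, a node function on the u-axis

for i in range(-4, 5):
    u = i * k                # u-nodes with P = 0, so Q = b
    x = g(u)
    assert p(u + k) - p(u) == f(x + h) - f(x)

print(p(k) - p(0), f(b + h) - f(b))  # 7 7
```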

Function $g$ is also seen as a change of variable.

Second, we assume that the node function is followed by another function that is simply a function of a real argument and a real value. When this function is linear $$g(t):=mt+b,$$ the differential of the composition function (a node function on the $x$-axis), $$q(x):=(g\circ f)(x)=mf(x)+b,$$ is found by the Sum and the Constant Multiple Rules.

Computations of differentials

For simplicity, we carry out these computations for the case of $h=1$.

Theorem (Power Formula). For any positive integer $k$ and any edge $[n,n+1]$, we have: $$d (n^k)\big([n,n+1]\big)=(n+1)^k-n^k=(n+1)^{k-1}+(n+1)^{k-2}n+ ...+(n+1)n^{k-2}+n^{k-1}.$$

This is what we know about the case of an odd $k$: $$n+1>n \ \Rightarrow\ (n+1)^k > n^k \ \Rightarrow\ (n+1)^k-n^k>0 \ \Rightarrow\ d (n^k)>0,$$ for all $n$. Therefore, the function is increasing: $$n^k \nearrow.$$ When $k$ is even, this analysis applies to all $n\ge 0$. For $n<0$, it is the opposite: $$n+1>n \ \Rightarrow\ (n+1)^k < n^k \ \Rightarrow\ (n+1)^k-n^k<0 \ \Rightarrow\ d (n^k)<0.$$ Therefore, we have: $$n^k \searrow \text{ on } (-\infty, 0] \text{ and } n^k \nearrow \text{ on } [0,\infty),$$ with a minimum point at $0$.
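The expansion can be checked for small $k$ and $n$. The sketch below tests the form in which every term carries a plus sign, which is what the factorization $a^k-b^k=(a-b)(a^{k-1}+a^{k-2}b+...+b^{k-1})$ gives for $a=n+1$, $b=n$; the function name `power_differential` is illustrative.

```python
def power_differential(n, k):
    """Sum (n+1)^(k-1) + (n+1)^(k-2) n + ... + n^(k-1), all terms with a plus sign."""
    return sum((n + 1) ** (k - 1 - j) * n**j for j in range(k))

for k in range(1, 7):
    for n in range(-5, 6):
        assert (n + 1) ** k - n**k == power_differential(n, k)

print(power_differential(2, 3))  # 19
```

For $k=3$ and $n=2$ the sum is $9+6+4=19=27-8$.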

Theorem (Factorial Formula). For any positive integer $k$ and any edge $[n,n+1]$, we have: $$d (n^{\underline {k}})\big([n,n+1]\big)=kn^{\underline {k-1}},$$ where $$p^{\underline {q}} := p(p-1)(p-2)(p-3)...(p-q+1). $$

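Proof. From the definition, we have: $$\begin{array}{lll} d (n^{\underline {k}})\big([n,n+1]\big)&=d\big( n(n-1)(n-2)...(n-k+1) \big)\big([n,n+1]\big)\\ &=(n+1)n(n-1)...(n-k+2)-n(n-1)(n-2)...(n-k+2)(n-k+1) \\ &= n(n-1)...(n-k+2)\big(n+1-(n-k+1)\big) \\ &= kn^{\underline {k-1}} . \end{array}$$ $\blacksquare$

Therefore, the behavior of $n^{\underline {k}}$ is determined by the sign of $n^{\underline {k-1}}$. When $k$ is even, the function is decreasing on $(-\infty, 0]$, equal to zero at the nodes $0,1,...,k-1$, and increasing on $[k-1,\infty)$. When $k$ is odd, it is increasing on $(-\infty, 0]$ and on $[k-1,\infty)$.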
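The formula is easy to confirm by direct computation. A minimal sketch, with `falling` as an illustrative name for $p^{\underline{q}}$ and the empty product ($q=0$) taken to be $1$:

```python
def falling(p, q):
    """Falling power p(p-1)...(p-q+1); the empty product (q = 0) is 1."""
    result = 1
    for i in range(q):
        result *= p - i
    return result

for k in range(1, 6):
    for n in range(-5, 6):
        assert falling(n + 1, k) - falling(n, k) == k * falling(n, k - 1)

print(falling(5, 3), falling(6, 3) - falling(5, 3))  # 60 60
```

The second number equals $3\cdot 5^{\underline{2}}=3\cdot 20$, as the theorem predicts.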

Theorem (Exponent Formula). For any positive real $b$ and any edge $[n,n+1]$, we have: $$d(b^{tn})\big([n,n+1]\big)=(b^t-1)b^{tn}.$$

Therefore, the exponential function $b^n$ is increasing for $b>1$ and decreasing for $0<b<1$.

Exercise. Prove this theorem.

Theorem (Log Formula). For any positive real $b\ne 1$, any real $t>0$, and any edge $[n,n+1]$ with $n>0$, we have: $$d(\log_b (tn)) \big([n,n+1] \big)=\log_b \left( 1+\tfrac{1}{n} \right).$$

Therefore, the logarithm $\log_b n$ is increasing for $b>1$ and decreasing for $0<b<1$.

Exercise. Prove this theorem.

Theorem (Trig Formulas). For any real $t$ and any edge $[n,n+1]$, we have: $$\begin{array}{lllll} d(\sin (tn))\big([n,n+1]\big)=2\sin \big(\tfrac{t}{2}\big) \cos \big(t(n+\tfrac{1}{2})\big),\\ d(\cos (tn))\big([n,n+1]\big)=-2\sin \big(\tfrac{t}{2}\big) \sin \big(t(n+\tfrac{1}{2})\big). \end{array}$$

Proof. We use the formulas: $$\begin{array}{lllll} \sin u - \sin v = 2 \sin\big(\tfrac{1}{2}(u-v)\big) \cos\big(\tfrac{1}{2}(u+v)\big);\\ \cos u - \cos v = -2 \sin\big(\tfrac{1}{2}(u-v)\big) \sin\big(\tfrac{1}{2}(u+v)\big). \end{array}$$ Then $$d(\sin tn)\big([n,n+1]\big)=\sin \big(t(n+1)\big) - \sin (tn) = 2 \sin\big(\tfrac{1}{2}t\big) \cos\big(\tfrac{1}{2}t(2n+1)\big).$$ $\blacksquare$
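A floating-point check of both formulas, for an arbitrarily chosen $t=0.7$, is sketched below. It is a numerical sanity check up to rounding error, not a substitute for the proof.

```python
import math

t = 0.7  # sample value; any real t can be used
for n in range(-5, 6):
    d_sin = math.sin(t * (n + 1)) - math.sin(t * n)
    d_cos = math.cos(t * (n + 1)) - math.cos(t * n)
    # d(sin(tn)) = 2 sin(t/2) cos(t(n + 1/2))
    assert math.isclose(d_sin, 2 * math.sin(t / 2) * math.cos(t * (n + 0.5)), abs_tol=1e-12)
    # d(cos(tn)) = -2 sin(t/2) sin(t(n + 1/2))
    assert math.isclose(d_cos, -2 * math.sin(t / 2) * math.sin(t * (n + 0.5)), abs_tol=1e-12)

print("both formulas agree with the direct differences on the sampled edges")
```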

From the definition of $\sin$ and $\cos$ we know that they alternate -- periodically every $\pi /t $ -- between positive and negative values: $$\begin{array}{ccccccccccccccccccc} +&+& + &&&& + &+& + &&&& + &+& +\\ &&&- &-& - &&&& - &-& - &&&& - \\ \end{array}$$ Then, the theorem confirms that the two functions also alternate -- periodically every $\pi /t $ -- between increasing and decreasing behavior: $$\begin{array}{ccccccccccccccccccc} &\cdot &&&&&& \cdot &&&&&& \cdot \\ \nearrow && \searrow &&&& \nearrow && \searrow &&&& \nearrow && \searrow\\ &&&\searrow && \nearrow &&&& \searrow && \nearrow &&&& \searrow \\ &&&&\cdot &&&&&& \cdot &&&&&& \cdot \end{array}$$ Local extreme points also appear periodically.

Exercise. Finish the proof.
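The two Trig Formulas can also be compared with the definition of the differential numerically. This is a floating-point sketch for $h=1$ with a hypothetical helper `trig_formulas_hold`; agreement is only up to rounding error.

```python
import math


def d(f, n):
    """Differential of the node function f on the edge [n, n+1] (h = 1)."""
    return f(n + 1) - f(n)


def trig_formulas_hold(t, n, tol=1e-12):
    """Check both Trig Formulas on the edge [n, n+1] up to the tolerance tol."""
    sin_lhs = d(lambda m: math.sin(t * m), n)
    sin_rhs = 2 * math.sin(t / 2) * math.cos(t * (n + 0.5))
    cos_lhs = d(lambda m: math.cos(t * m), n)
    cos_rhs = -2 * math.sin(t / 2) * math.sin(t * (n + 0.5))
    return abs(sin_lhs - sin_rhs) < tol and abs(cos_lhs - cos_rhs) < tol
```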

The Fundamental Theorem of Calculus

We now consider the relation between the integral and the differential.

Given a node function $f$, let's take our definition of the differential $df$ at an edge, $$df\big([A,B] \big) :=f(B) - f(A),$$ and integrate this edge function over the same edge. Since there is only one interval here, the result is trivial: $$\displaystyle\int_A^B df = f(B) - f(A).$$

Now, what about integration over “longer” intervals? Let $A_0,A_1,...,A_k$ be a sequence of consecutive nodes. Let's consider the interval as a “chain” of edges: $$[A_0,A_k] := [A_0,A_1] \cup [A_1,A_2]\ \cup\ ...\ \cup\ [A_{k-1},A_k].$$ Then we have: $$\begin{array}{llll} \int_{A_0}^{A_k} df &= df\big([A_0,A_1]& \cup\ [A_1,A_2]\ \cup &...& \cup\ [A_{k-1},A_k] \big) \\ &= df\big([A_0,A_1] \big)&+df\big([A_1,A_2] \big)+&...&+df\big([A_{k-1},A_k] \big) \\ &= f(A_1)-f(A_0) &+ f(A_2) - f(A_1) +& ... &+ f(A_k) - f(A_{k-1}) \\ &= f(A_k) -f(A_0) . \end{array}$$ We simply applied the definition repeatedly. The result is of crucial importance.

Theorem (Fundamental Theorem of Calculus I). For any node function $f$ defined on interval $[A,B]$, we have $$\begin{array}{|c|} \hline \\ \quad \displaystyle\int_A^B df =f(B) -f(A). \quad \\ \\ \hline \end{array}$$

This is the theorem's “net change” form.
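The telescoping sum behind the theorem is easy to reproduce. The sketch below assumes $h=1$ and integer nodes; `differential` and `integral` are hypothetical helper names, and the node function is an arbitrary choice.

```python
def differential(f, nodes):
    """Edge function df: one value f(B) - f(A) per edge [A, B] of consecutive nodes."""
    return [f(B) - f(A) for A, B in zip(nodes, nodes[1:])]


def integral(edge_values):
    """Integral of an edge function over a chain of edges: the sum of its values."""
    return sum(edge_values)


nodes = list(range(-3, 5))           # consecutive nodes A_0, ..., A_k


def f(x):
    """An arbitrary node function used for the check."""
    return x**3 - 2 * x


# Net change: the integral of df equals f(A_k) - f(A_0).
assert integral(differential(f, nodes)) == f(nodes[-1]) - f(nodes[0])
```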

Corollary. Suppose $g$ is an edge function and $f$ a node function defined on interval $[a,b]$. Suppose also that $$df=g.$$ Then, for any node $x$ in $[a,b]$, we have $$\int_a^x g=f(x)-f(a).$$

Another form of the theorem uses the integral of an edge function $g$ with a variable upper limit to define a new function:

Theorem (Fundamental Theorem of Calculus II). Let $g$ be an edge function on interval $[a,b]$. Define a node function $f$ on $[a,b]$ by giving it a value for every node $x=a,a+h,...,b-h,b$, by $$f(x):=\int_a^x g.$$ Then $$df=g.$$

Proof. We use Additivity: $$\begin{array}{ll} df\big([x,x+h]\big)&=f(x+h)-f(x)\\ &=\int_a^{x+h} g - \int_a^x g\\ &=\int_x^{x+h} g\\ &=g\big([x,x+h]\big). \end{array}$$ $\blacksquare$
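In computational terms, the node function $f$ of the theorem is a running sum of the values of $g$. The following sketch stores an edge function as a plain list of its values, one per edge; the names `running_integral` and `differential` are hypothetical.

```python
from itertools import accumulate


def running_integral(g):
    """Node values f(x) = integral of g from a to x; f(a) = 0 is the empty sum."""
    return [0] + list(accumulate(g))


def differential(f):
    """Edge values df: differences of consecutive node values."""
    return [B - A for A, B in zip(f, f[1:])]


g = [2, -1, 4, 0, 3]          # values of an edge function on five consecutive edges
f = running_integral(g)       # six node values
assert differential(f) == g   # df = g
```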

This is how a spreadsheet computes and plots such a function:

Theorem (Fundamental Theorem of Calculus III). Suppose $g$ is an edge function and $f$ a node function defined on interval $[a,b]$. Suppose also that $$df=g.$$ Then, for any node $x$ in $[a,b]$, we have $$\int_a^x g=f(x)-f(a).$$

The derivative

The ability to measure distances in the domain allows us to study “the rate of change” of a node function instead of just “change”, which is the differential.

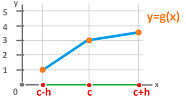

For a node function $y=g(x)$, treated as if it were linear on interval $[A,B]$, the rate of change is defined to be $$\frac{\text{change of } y}{ \text{change of } x}=\frac{g(B)-g(A)}{B-A}.$$ This ratio is also known as the slope of the line.

We can re-write the fraction as $$\frac{g(B)-g(A)}{B-A} = \frac{g(B)-g(A)}{|[A,B]|}= \frac{g(B)-g(A)}{h}.$$ The numerator is easily recognized as $dg(a)$, the differential $dg$ of the node function $g$ evaluated at the edge $a=[A,B]$. Now, to create a new function, we need to assign this new number, the output, to an input. Is it an edge or a node? We assign the value of the above ratio to the node dual to edge $a$, as follows.

Definition. The derivative of a differentiable node function $g$ is the node function given by its values at each node, $x$: $$g'(x):=\frac{1}{h}dg\big([x-h/2,x+h/2]\big).$$

Also useful is the explicit form: $$g'( x ):=\frac{g(x+h/2)-g(x-h/2)}{h}.$$

As a node function, the derivative can be further differentiated, as discussed in the next subsection.

The operation of finding $g'$ from $g$ is called differentiation.

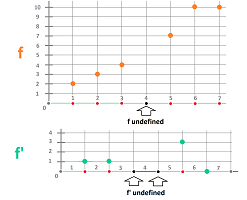

This is how the definition works ($h=1$):

Proposition. If a node function is undefined at node $c$, then its derivative is undefined at either of the (adjacent to $c$ dual) nodes $c-h/2$ and $c+h/2$.

When the node function is represented by a formula, the computation is often straightforward.

Example. (1) $$\begin{array}{lll} f(x)=3x^2+1\ &\Longrightarrow\ \\ f'(x)&=\frac{1}{h}\big((3(x+h/2)^2+1)-(3(x-h/2)^2+1)\big)\\ &=\frac{1}{h}\big((3x^2+3xh+3h^2/4+1)-(3x^2-3xh+3h^2/4+1) \big)\\ &=\frac{1}{h}6xh\\ &=6x. \end{array}$$ (2) $$\begin{array}{lll}g(x)=\frac{1}{x}\ &\Longrightarrow\ \\ g'(x)&= \frac{1}{h}\left( \frac{1}{x+h/2}-\frac{1}{x-h/2} \right)\\ &= \frac{1}{h}\left( \frac{x-h/2}{(x+h/2)(x-h/2)}-\frac{x+h/2}{(x-h/2)(x+h/2)} \right)\\ &=\frac{1}{h}\frac{-h}{(x-h/2)(x+h/2)}\\ &=-\frac{1}{(x-h/2)(x+h/2)} \text{ for } x\ne -h/2,h/2. \end{array}$$ (3) $$\begin{array}{lll} p(x)=2^x\ &\Longrightarrow\ \\ p'(x)&= \frac{1}{h}(2^{x+h/2}-2^{x-h/2})\\ &=\frac{1}{h}2^{x}(2^{h/2}-2^{-h/2})\\ &=\frac{2^h-1}{h}2^{-h/2}\,2^x . \end{array}$$ $\square$
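The three computations can be confirmed directly from the explicit form of the derivative. The sketch below uses exact rational arithmetic for (1) and (2) and floating point for (3); the helper name `derivative` and the particular values of $h$ and $x$ are arbitrary choices made for illustration.

```python
import math
from fractions import Fraction


def derivative(g, x, h):
    """g'(x) = (g(x + h/2) - g(x - h/2)) / h, evaluated at the dual node x."""
    half = Fraction(h) / 2
    return (g(x + half) - g(x - half)) / Fraction(h)


h = Fraction(1, 2)
x = Fraction(7, 4)            # a dual node: x - h/2 = 3/2 and x + h/2 = 2

# (1) f(x) = 3x^2 + 1 has derivative 6x.
assert derivative(lambda s: 3 * s**2 + 1, x, h) == 6 * x

# (2) g(x) = 1/x has derivative -1/((x - h/2)(x + h/2)).
assert derivative(lambda s: 1 / s, x, h) == -1 / ((x - h / 2) * (x + h / 2))

# (3) p(x) = 2^x, compared in floating point.
p_prime = derivative(lambda s: 2.0 ** float(s), x, h)
expected = (2.0**0.5 - 1) / 0.5 * 2.0 ** (-0.25) * 2.0**1.75
assert math.isclose(p_prime, expected)
```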

Proposition. If the differentials are equal then so are the derivatives, i.e., for any node functions $f$ and $g$, we have $$f'=g' \Longleftrightarrow df=dg.$$

Proposition. The sign of the differential and that of the derivative coincide; i.e., for any node function $f$, we have $$\operatorname{sign} df \big( [x-h/2,x+h/2] \big) = \operatorname{sign} f'(x).$$

These two facts allow us to restate the simple theorems about the differential as ones about the derivative.

Theorem (Monotonicity). A node function is increasing (decreasing) on an interval if and only if its derivative is positive (negative) at the node dual to each edge in this interval; i.e., $$f\nearrow \text{ on } [a,b]\ \Longleftrightarrow\ f' ( x ) >0\text{ whenever } a \le x-h/2 < x+h/2 \le b;$$ and $$f\searrow \text{ on } [a,b]\ \Longleftrightarrow\ f' ( x ) <0\text{ whenever } a \le x-h/2 < x+h/2 \le b.$$

Zooming out shows the meaning of the theorem:

Corollary (Extreme Values). A node function has a local maximum (minimum) at a point if and only if its derivative is positive (negative) to the point's left and negative (positive) to its right; i.e., $$c \text{ is a local maximum of }f\ \Longleftrightarrow f' ( c-h/2 ) >0 \text{ and } f' ( c+h/2 ) <0;$$ and $$c \text{ is a local minimum of }f\ \Longleftrightarrow f' ( c-h/2 ) <0 \text{ and } f' ( c+h/2 ) >0.$$

The derivative is computed with a spreadsheet:

Link to file: Spreadsheets.

The derivative also gives us the slope of the line that -- when zoomed out -- appears to touch the graph at the point.

Definition. Given a node function $f$, its tangent line at edge $[a,a+h]$ is the line in the $xy$-plane that connects the points $(a,f(a))$ and $(a+h,f(a+h))$ on the graph of $f$.

Proposition. The tangent line of a node function $f$ at edge $[a,a+h]$, on which $f$ is defined, is given by: $$y=f(a)+f'(a+h/2) (x-a).$$

If we keep only the segment of the tangent line that lies over the edge $[a,a+h]$ but do that for every edge, the result is a “continuous” curve:

There are no derivatives of edge functions.

We can re-write the definition of the derivative as follows: $$df\big( [x-h/2,x+h/2] \big)=f'(x)\cdot h.$$ In other words, we have the following important formula that connects the differential and the derivative of a node function by means of the standard differential.

Theorem. For any node function $f$ defined on an interval, we have on that interval $$df=f'dx.$$

We can now restate the familiar theorem for differentials as one for derivatives.

Theorem (Fundamental Theorem of Calculus I). For any node function $f$ defined on interval $[A,B]$, we have $$\int_A^B f'\, dx=f(B)-f(A).$$

Algebraic properties of the derivative

The formula that gives the derivative is simple. Then, the Sum Rule and the Constant Multiple Rule for the differential all transform automatically to their analogs for the derivative. Furthermore, the denominator is the only thing we need to watch for in order to derive the rest of algebraic properties of the derivative.

Theorem (Sum Rule). The sum of two node functions defined on an interval is defined on the interval and its derivative is equal to the sum of their derivatives; i.e., for any two node functions $f,g$, we have: $$(f + g)'= f' + g'.$$

Theorem (Constant Multiple Rule). A multiple of a node function defined on an interval is defined on the interval and its derivative is equal to the multiple of the function's derivative; i.e., for any node function $f$ and any real $c$, we have: $$(cf)' = c\, f'.$$

Theorem (Constant Function). A node function is constant on an interval if and only if its derivative on the dual of each edge in this interval is zero; i.e., $$f \text{ is constant on } [a,b]\ \Longleftrightarrow f'( x ) =0 \text{ whenever } a\le x-h/2 < x+h/2\le b.$$

Corollary. Two node functions defined on an interval have the same derivative on the interval if and only if they differ by a constant on that interval; i.e., $$f' =g' \text{ on } [a,b]\ \Longleftrightarrow f=g+C \text{ for some real } C \text{ on } [a,b].$$

Proof. By the Sum Rule and the Constant Multiple Rule, we have $$f' =g' \text{ on } [a,b]\ \Longleftrightarrow (f-g)'=0 \text{ on } [a,b].$$ Meanwhile, by the Constant Function Rule, we have $$(f-g)'=0 \text{ on } [a,b]\ \Longleftrightarrow f-g \text{ is constant on } [a,b].$$ $\blacksquare$

Theorem (Product Rule). The product of two node functions defined on an interval is defined on the interval and its derivative is found as a combination of these functions and their derivatives; specifically, given two node functions $f,g$, we have: $$(f \cdot g)'( x ) = f(x+h/2)g'( x ) + f'( x )g(x-h/2).$$

Proof. $$\begin{array}{lll} (f \cdot g)'( x )&=\frac{1}{h}\Big((f \cdot g)(x+h/2)- (f \cdot g)(x-h/2)\Big)\\ &=\frac{1}{h}\Big( f(x+h/2) \cdot g(x+h/2)- f(x-h/2) \cdot g(x-h/2) \Big)\\ &=\frac{1}{h}\Big( f(x+h/2) \cdot g(x+h/2)- f(x+h/2) \cdot g(x-h/2) \\ &+f(x+h/2) \cdot g(x-h/2)- f(x-h/2) \cdot g(x-h/2) \Big)\\ &=\frac{1}{h}\Big( f(x+h/2) \cdot g(x+h/2)- f(x+h/2) \cdot g(x-h/2) \Big)\\ &+\frac{1}{h}\Big( f(x+h/2) \cdot g(x-h/2)- f(x-h/2) \cdot g(x-h/2) \Big)\\ &=f(x+h/2) \frac{g(x+h/2)- g(x-h/2)}{h} +\frac{f(x+h/2) - f(x-h/2)}{h} g(x-h/2)\\ &=f(x+h/2)\cdot g'( x ) + f'( x )\cdot g(x-h/2). \end{array}$$ $\blacksquare$

Theorem (Quotient Rule). The quotient of two node functions defined on an edge is defined on the edge unless the denominator is equal to zero and its derivative is found as a combination of these functions and their derivatives; specifically, given two node functions $f,g$, we have: $$(f/g)'( x ) = \frac{f'( x )g(x+h/2) - f(x+h/2)g'( x )}{g(x-h/2)g(x+h/2)},$$ provided $g(x-h/2),g(x+h/2) \ne 0$.

Proof. We start with the case $f=1$. Then we have: $$\begin{array}{lll} (1 / g)'( x )&=\frac{1}{h}\left(\frac{1}{g(x+h/2)}- \frac{1}{g(x-h/2)}\right)\\ &=\frac{1}{h}\frac{g(x-h/2)- g(x+h/2)}{g(x+h/2)g(x-h/2)} \\ &=-\frac{g(x+h/2)- g(x-h/2)}{h}\frac{1}{g(x+h/2)g(x-h/2)} \\ &= -g'( x )\frac{1}{g(x-h/2) \cdot g(x+h/2)}. \end{array}$$ Now the general formula follows from the Product Rule. $\blacksquare$
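Both the Product Rule and the reciprocal formula from the proof above can be checked exactly on sample functions. This is a minimal sketch with rational arithmetic; the helper names and the sample functions are assumptions made only for the illustration.

```python
from fractions import Fraction


def derivative(g, x, h):
    """g'(x) = (g(x + h/2) - g(x - h/2)) / h, evaluated at the dual node x."""
    half = Fraction(h) / 2
    return (g(x + half) - g(x - half)) / Fraction(h)


def product_rule_sides(f, g, x, h):
    """Return both sides of (fg)'(x) = f(x+h/2) g'(x) + f'(x) g(x-h/2)."""
    half = Fraction(h) / 2
    lhs = derivative(lambda s: f(s) * g(s), x, h)
    rhs = f(x + half) * derivative(g, x, h) + derivative(f, x, h) * g(x - half)
    return lhs, rhs


def reciprocal_rule_sides(g, x, h):
    """Return both sides of (1/g)'(x) = -g'(x) / (g(x-h/2) g(x+h/2))."""
    half = Fraction(h) / 2
    lhs = derivative(lambda s: 1 / g(s), x, h)
    rhs = -derivative(g, x, h) / (g(x - half) * g(x + half))
    return lhs, rhs
```

For instance, with $f(x)=x^2+1$, $g(x)=3x-2$, $h=1$, and the dual node $x=5/2$, both sides of the Product Rule evaluate to $50$.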

A rule for compositions is available only for the special case below.

Theorem (Chain Rule). The composition of a node function defined on an interval and a linear function is defined on a certain interval and its derivative is equal to the product of the node function's derivative and the slope of the linear function; i.e., if $f$ is a node function on the $x$-axis and $g$ is a linear function: $$g(u):=mu+b$$ on the $u$-axis that takes the edges of the $u$-axis to the edges of the $x$-axis, then the derivative of the composition function, $$p(u):=(f\circ g)(u)=f(mu+b),$$ is found by $$p'(u)=f'(mu+b)m.$$

Proof. Let $k=h/m$ be the length of the edges of the $u$-axis. From the Chain Rule for differentials, we know that $$dp\big( [u-k/2,u+k/2] \big)=df\big( [x-h/2,x+h/2] \big),$$ where $x=mu+b$. We divide both sides by $k$: $$\frac{1}{k}\, dp\big( [u-k/2,u+k/2] \big)=\frac{1}{k}\, df\big( [x-h/2,x+h/2] \big),$$ then we have $$p'(u)=\frac{1}{h/m}\, df\big( [x-h/2,x+h/2] \big)=mf'(x).$$ $\blacksquare$
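The rescaling of the edge length in the proof can be traced on a concrete case. In the sketch below the $u$-axis has edges of length $h/m$ (an assumption consistent with the proof, with $m>0$); the function name `chain_rule_sides` is hypothetical.

```python
from fractions import Fraction


def derivative(g, x, h):
    """g'(x) = (g(x + h/2) - g(x - h/2)) / h, evaluated at the dual node x."""
    half = Fraction(h) / 2
    return (g(x + half) - g(x - half)) / Fraction(h)


def chain_rule_sides(f, m, b, u, h):
    """Return p'(u) and m f'(mu + b), where p(u) = f(mu + b).

    The edges of the u-axis have length h/m, so that the linear map
    u -> mu + b takes them to the edges of length h on the x-axis.
    """
    k = Fraction(h) / m

    def p(s):
        return f(m * s + b)

    return derivative(p, u, k), m * derivative(f, m * u + b, h)
```

With $f(x)=x^3$, $m=2$, $b=1$, $h=1$, and $u=3/4$, both values equal $38$.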

Theorem (Power Formula). For any positive integer $k$, we have: $$(x^k)'=(x+h/2)^{k-1}+(x+h/2)^{k-2}(x-h/2)+ ...+(x+h/2)(x-h/2)^{k-2}+(x-h/2)^{k-1}.$$

Theorem (Exponent Formula). For any positive real $b$, we have: $$(b^x)'=\frac{b^h-1}{h}b^{-h/2}\, b^x.$$

Exercise. Prove this theorem.

Theorem (Log Formula). For any positive reals $b\ne 1$ and $t$, we have: $$(\log_b (tx))'=\log_b \left( 1+\frac{h}{x-h/2}\right)^{1/h} \text{ for }x>h/2.$$

Proof. $$\begin{array}{lllll} (\log_b (tx))'&=\frac{1}{h} \Big(\log_b \big( t (x+h/2) \big)-\log_b \big( t (x-h/2) \big)\Big)\\ &=\frac{1}{h}\log_b \frac{t (x+h/2)}{ t (x-h/2) }\\ &=\frac{1}{h}\log_b \frac{x+h/2}{ x-h/2 }\\ &=\frac{1}{h}\log_b \left( 1+ \frac{h}{ x-h/2 } \right). \end{array}$$ $\blacksquare$

Theorem (Trig Formulas). For any real $t$, we have: $$\begin{array}{lllll} (\sin (tx))'= \frac{\sin (t\,h/2)}{h/2} \cos (tx),\\ (\cos (tx))'=-\frac{\sin (t\,h/2)}{h/2} \sin (tx). \end{array}$$

Proof. We use the formulas: $$\begin{array}{lllll} \sin u - \sin v = 2 \sin\big(\tfrac{1}{2}(u-v)\big) \cos\big(\tfrac{1}{2}(u+v)\big);\\ \cos u - \cos v = -2 \sin\big(\tfrac{1}{2}(u-v)\big) \sin\big(\tfrac{1}{2}(u+v)\big). \end{array}$$ Then $$\begin{array}{lllll} (\sin (tx))'( x )&=\frac{1}{h}\Big(\sin \big(t(x+h/2)\big) - \sin \big(t(x-h/2)\big) \Big)\\ &= \frac{1}{h} 2 \sin\big(\tfrac{1}{2}t\,h\big) \cos\big(tx\big). \end{array}$$ $\blacksquare$

Exercise. Finish the proof.

Global maxima and minima

The Mean Value Theorem

Antiderivatives and the integral

Definition. Given a node function $f$, a node function $F$ is called an antiderivative, or an indefinite integral, of $f$ if $F'=f$.

The operation of finding $F$ from $f$ is called integration.

We restate the familiar theorems about the differentials as ones about derivatives.

Theorem (Fundamental Theorem of Calculus I). For any node function $f$ defined on interval $[A,B]$, we have $$\displaystyle\int_A^B f' \, dx =f(B) -f(A). $$

Theorem (Fundamental Theorem of Calculus II). Suppose $f$ is a node function defined on interval $[a,b]$. Define a node function $F$ by giving it a value for every node $x$ within $[a,b]$, $$F(x):=\int_a^x f\,dx.$$ Then $F'=f$, i.e., $F$ is an antiderivative of $f$, on $[a,b]$.

Proof. We apply the Fundamental Theorem of Calculus II for differentials to the edge function $g=f\,dx$. Then $dF=g$. We restate the identity as $F'\,dx=f\,dx$ and arrive at our conclusion. $\blacksquare$
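The construction of $F$ amounts to a running sum of the values of $f$ multiplied by $h$. The sketch below stores $f$ as a list of its values at consecutive dual nodes and $F$ as a list of its values at the nodes $a,a+h,...$; this list representation and the helper names are assumptions made for the illustration.

```python
from fractions import Fraction
from itertools import accumulate


def antiderivative(f_values, h):
    """Values of F at the nodes a, a+h, ...: running sums of f * h, with F(a) = 0."""
    return [Fraction(0)] + list(accumulate(v * h for v in f_values))


def derivative(F_values, h):
    """Values of F' at the dual nodes: differences of consecutive node values over h."""
    return [(B - A) / h for A, B in zip(F_values, F_values[1:])]


h = Fraction(1, 4)
f_values = [Fraction(3), Fraction(-1), Fraction(2), Fraction(0)]
F_values = antiderivative(f_values, h)
assert derivative(F_values, h) == f_values    # F' = f
```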

Notation. Antiderivatives of a node function $f$ are denoted by $$\int f\, dx.$$

By the corollary in the last subsection, any two antiderivatives of $g$ differ by a constant on any interval in their domain. Therefore, each of them can be represented as the definite integral above plus a constant.

That's why it suffices to provide just one of them, say $$\int g(x)\, dx =f(x).$$ However, how do we present the whole, infinite collection of antiderivatives? Every antiderivative is given by the formula: $$f(x)+C,$$ where $C$ is any real number. The collection will contain at least these: $$\begin{array}{ll} ...\\ f(x)-1,\\ f(x),\\ f(x)+1,\\ f(x)+2,\\ ... \end{array}$$ Then the common, abbreviated way to present the collection is: $$\int g(x)\, dx =f(x)+C.$$

However, the formula is only valid on an interval within the domain of $g$. In general, when the domain of $g$ doesn't contain some of the nodes, the domain splits into disconnected intervals. Then, there will be one constant for each such interval.

Example. Suppose $c$ is a real number. Let's find all the antiderivatives of the node function $f(x):=c$. From the previous experience with differentiation we recall that $$(cx)'=c.$$ Therefore, the node function $$g(x)=cx$$ is an antiderivative of $f$. However, to present the whole collection of antiderivatives we need to give more: $$\int c\, dx =cx+C.$$ $\square$

The operations of differentiation and integration “cancel” each other as follows.

Theorem. For any node function $F$, we have $$\int F'\, dx=F(x)+C;$$ and for any node function $f$, we have $$\left( \int f\, dx \right)' = f(x).$$

Therefore, formulas about the derivatives can be turned into ones about antiderivatives. Below we reverse some of the computations in the last subsection.

Example. (1) $$\begin{array}{lll} F(x)=3x^2+1 & \Longrightarrow\ F'( x )=6x ;\\ f(x)=6x &\Longrightarrow\ \int f\, dx =3x^2+1. \end{array}$$ The whole collection of antiderivatives is given by $$\int 6x\, dx =3x^2 +1+C,$$ where $C$ is any real number. Since $1$ is a constant, it can be “absorbed” into $C$, as follows: $$\int 6x\, dx =3x^2 +C.$$

(2) $$\begin{array}{lll} G(x)=\frac{1}{x} &\Longrightarrow\ G'( x )=-\frac{1}{(x-h/2)(x+h/2)} \text{ for } x\ne -h/2,h/2;\\ g(x)=-\frac{1}{(x-h/2)(x+h/2)}&\Longrightarrow\ \int g\, dx =\frac{1}{x} \text{ on } (-\infty,-h] \text{ and on } [h,\infty). \end{array}$$ The whole collection of antiderivatives is given by $$\int \frac{1}{(x-h/2)(x+h/2)} \, dx= \begin{cases} -\frac{1}{x} +C_1 \text{ for } x<0;\\ -\frac{1}{x} +C_2 \text{ for } x>0. \end{cases}$$

(3) $$\begin{array}{lll} P(x)=2^x\ \Longrightarrow\ P'( x )=\frac{2^h-1}{h}2^{-h/2}\, 2^{x};\\ p(x)=\frac{2^h-1}{h}2^{-h/2}\, 2^{x} \Longrightarrow\ \int p\, dx =2^x. \end{array}$$ The whole collection of antiderivatives is given by $$\int 2^x \, dx=\frac{h}{2^h-1}2^{h/2}\, 2^x +C.$$ $\square$

Example (2) above shows that antidifferentiation is carried out independently on each disconnected interval in the domain of the function:

Some of the formulas of the derivatives in the last subsection can be easily reversed.

Theorem (Sum Rule for Integrals). For any interval $[a,b]$ and any two node functions $f,g$ defined on $[a,b]$, we have: $$\int (f + g)\, dx= \int f\, dx + \int g\, dx.$$

Theorem (Constant Multiple Rule for Integrals). For any interval $[a,b]$, any node function $f$ defined on $[a,b]$, and any real $c$, we have: $$\int cf\, dx = c \int f\, dx.$$

A rule for compositions is available only for the limited case below.

Theorem (Integration by Substitution). If $f$ is a node function on interval $[a,b]$ in the $x$-axis and $g$ is a linear function (a change of variable): $$g(u):=mu+b$$ on the $u$-axis that takes the edges of the $u$-axis to the edges of the $x$-axis, then $$\int f(mu+b)\, du=\frac{1}{m}\int f\,dx,$$ where $x=mu+b$.

Proof. We know from the Chain Rule that $$G(u):=F(mu+b)+C\ \Longleftrightarrow \ G'(u)=F'(x)m.$$ Then we arrive at our conclusion by reversing the last identity. $\blacksquare$

We don't need the $+C$ term above because it is implicitly present in both left- and right-hand sides of the above identities.

Theorem (Factorial Formula for Integrals). For any non-negative integer $k$, we have: $$ \int x^{\underline {k}} \, dx = \frac{1}{k+1} x^{\underline {k+1}} +C.$$

Theorem (Exponent Formula for Integrals). For any positive real $b\ne 1$, we have: $$\int b^x\, dx =\frac{h}{b^h-1}b^{h/2}\, b^x+C.$$

Theorem (Trig Formulas for Integrals). For any real $t$ with $\sin (t\,h/2)\ne 0$, we have: $$\begin{array}{lllll} \int \sin (tx)\, dx = -\frac{h/2}{\sin (t\,h/2)} \cos ( tx)+C,\\ \int \cos (tx)\, dx =\frac{h/2}{\sin (t\,h/2)} \sin ( tx)+C. \end{array}$$

Concavity and the second derivative

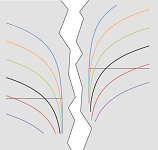

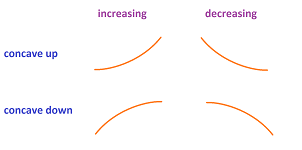

The picture below informally suggests what upward and downward concavity mean:

We can think of these as graphs of node functions zoomed out.

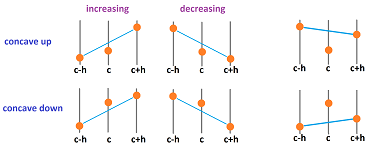

Now, let's zoom in.

The pattern is clear: it is concave up when the middle point lies below the line connecting the other two and concave down when it is above.

Definition. A node function $f$ is called concave up on interval $[a,b]$ if $$f(c)<\frac{f(c-h)+f(c+h)}{2},$$ for all adjacent edges $[c-h,c],[c,c+h]$ within the interval $[a,b]$. A node function $f$ is called concave down on interval $[a,b]$ if $$f(c)>\frac{f(c-h)+f(c+h)}{2},$$ for all adjacent edges $[c-h,c],[c,c+h]$ within the interval $[a,b]$.

We will use the notation:

- $\smile$ for concave up, and

- $\frown$ for concave down.

The sign of the differential tells us the difference between increasing and decreasing behavior. We need a similar tool to evaluate the concavity, a concept that captures the shape of the graph of a function in calculus as well as the acceleration in physics. The concavity is determined by the sign of the following expression: $$\big(f(c-h)+f(c+h)\big)-2f(c).$$ Let's rearrange the terms: $$=\big(f(c+h)-f(c)\big)-\big(f(c)-f(c-h)\big).$$ This is the difference of two differentials of $f$ evaluated at the two adjacent edges $[c,c+h],[c-h,c]$. To see the derivatives, let's divide this by $h^2$: $$\frac{1}{h^2}\Big(\big(f(c-h)+f(c+h)\big)-2f(c)\Big) = \frac{\frac{f(c+h)-f(c)}{h}-\frac{f(c)-f(c-h)}{h}}{h}.$$

Let's take a closer look at this formula. The two fractions in the numerator are recognized as the derivative of the node function $f$ evaluated at the two adjacent nodes $c-h/2,c+h/2$.

$$= \frac{f'(c+h/2)-f'(c-h/2)}{h}.$$

Then the whole expression is the rate of change of the derivative. It is the derivative of the derivative!

Definition. Given a node function its second derivative is the derivative of its derivative: $$f' '=(f')'.$$

Theorem (Concavity). Suppose a node function $f$ is defined on interval $[a-h,b+h]$ with $b>a+h$. Then $f$ is

- (1) concave up on interval $[a,b]$ if and only if $f' '>0$ on $[a,b]$.

- (2) concave down on interval $[a,b]$ if and only if $f' '<0$ on $[a,b]$.

Proposition. The second derivative of a node function $g$ is a node function given by its values at each node: $$g' '(B)=\frac{1}{h^2}( dg(b) - dg(a)),$$ where $a,b$ are the edges adjacent to $B$ on the left and right respectively.

Alternatively, we have $$g' '(x)=\frac{1}{h^2}\Big( dg\big([x,x+h]\big) - dg\big([x-h,x]\big) \Big).$$

The duality diagram below shows the whole computation: $$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{lcccccc} g' '\ ..\ g\ & \ra{d}& dg &\\ \ua{\star} & \ne & \da{\star} & \\ d(g')& \la{d} & g'& \\ \end{array} $$ Note that making the full circle won't give us the same function.

This is how the formula works ($h=1$):

Proposition. If a node function is undefined at node $c$, then its second derivative is undefined at the nodes $c-h,c,c+h$.

In the pursuit of closed solutions, we will use the first derivatives computed previously via this formula: $$f' '(x)=\frac{f'(x+h/2)-f'(x-h/2)}{h}.$$

Example. (1) $$\begin{array}{lll} f(x)&=3x^2+1\ \Longrightarrow\ \\ f'( x )&=6x\ \Longrightarrow\\ f' '(x)&=\frac{1}{h}\big( 6(x+h/2)-6(x-h/2) \big)\\ &=6.\\ f' '(x)>0\ &\Longrightarrow\ f \smile. \end{array}$$

(2) $$\begin{array}{lll}g(x)&=\frac{1}{x}\ \Longrightarrow\ \\ g'( x )&= -\frac{1}{(x-h/2)(x+h/2)} \text{ for } x\ne -h/2,h/2\ \Longrightarrow\\ g' '(x)&=\frac{1}{h}\left( -\frac{1}{x(x+h)}+\frac{1}{(x-h)x} \right)\\ &=\frac{1}{h}\frac{1}{x}\left( -\frac{1}{x+h}+\frac{1}{x-h} \right)\\ &=\frac{1}{h}\frac{1}{x} \frac{(h-x)+(x+h)}{(x-h)(x+h)}\\ &=\frac{2}{(x-h)x(x+h)}.\\ g' '(x)<0 \text{ for } x\le -2h &\Longrightarrow\ g \frown \text{ on } (-\infty,-2h];\\ g' '(x)>0 \text{ for } x\ge 2h &\Longrightarrow\ g \smile \text{ on } [2h,\infty). \end{array}$$

(3) $$\begin{array}{lll} p(x)&=2^x\ \Longrightarrow\ \\ p'( x )&= \frac{2^h-1}{h}2^{-h/2}\, 2^x \ \Longrightarrow\ \\ p' '(x)&=\frac{1}{h}\left( \frac{2^h-1}{h}2^{x} - \frac{2^h-1}{h}2^{x-h} \right)\\ &=\frac{1}{h} \frac{2^h-1}{h} 2^{x} ( 1 - 2^{-h})\\ &=\left( \frac{2^h-1}{h} \right)^2 2^{-h} 2^{x}. \\ p' '(x)>0\ &\Longrightarrow\ p \smile. \end{array}$$

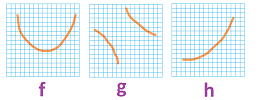

Using this data combined with the increasing/decreasing behavior established earlier, we can sketch the graphs of these three functions:

$\square$
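The closed forms in examples (1) and (2) can be confirmed by applying the derivative twice. The sketch below is exact (rational arithmetic); the helper names and the sample values of $h$ and $x$ are arbitrary choices for illustration.

```python
from fractions import Fraction


def derivative(g, x, h):
    """g'(x) = (g(x + h/2) - g(x - h/2)) / h."""
    half = Fraction(h) / 2
    return (g(x + half) - g(x - half)) / Fraction(h)


def second_derivative(g, x, h):
    """g''(x) computed as the derivative of the derivative at the node x."""
    return derivative(lambda s: derivative(g, s, h), x, h)


h = Fraction(1, 2)
x = Fraction(3)

# (1) f(x) = 3x^2 + 1 has second derivative 6.
assert second_derivative(lambda s: 3 * s**2 + 1, x, h) == 6

# (2) g(x) = 1/x has second derivative 2/((x - h) x (x + h)).
assert second_derivative(lambda s: 1 / s, x, h) == 2 / ((x - h) * x * (x + h))
```

Since the two half-steps combine into full steps, `second_derivative` agrees with the three-point expression $\big(g(x+h)-2g(x)+g(x-h)\big)/h^2$.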

Example. Let's use the Trig Formulas: $$\begin{array}{lllll} (\sin (tx))'= \frac{\sin (t\,h/2)}{h/2} \cos ( tx),\\ (\cos (tx))'=-\frac{\sin (t\,h/2)}{h/2} \sin ( tx). \end{array}$$ Then, we have $$\begin{array}{lllll} (\sin (tx))' '= -\left( \frac{\sin (t\, h/2)}{h/2} \right)^2 \sin ( tx),\\ (\cos (tx))' '=-\left( \frac{\sin (t\, h/2)}{h/2} \right)^2 \cos ( tx). \end{array}$$ As we know, the two functions alternate -- periodically every $\pi /t $ -- between positive and negative values: $$\begin{array}{ccccccccccccccccccc} +&+& + &&&& + &+& + &&&& + &+& +\\ &&&- &-& - &&&& - &-& - &&&& - \\ \end{array}$$ Therefore the two functions alternate -- periodically every $\pi /t $ -- between concave up and concave down behavior: $$\begin{array}{ccccccccccccccccccc} &\cdot &&&&&& \cdot &&&&&& \cdot \\ \nearrow &\frown& \searrow &&&& \nearrow &\frown& \searrow &&&& \nearrow &\frown& \searrow\\ &&&\searrow &\smile& \nearrow &&&& \searrow &\smile& \nearrow &&&& \searrow \\ &&&&\cdot &&&&&& \cdot &&&&&& \cdot \end{array}$$ $\square$

Example. Let's use the Exponent Formula: $$(b^x)'=\frac{b^h-1}{h}b^{-h/2}\, b^x.$$ Then $$(b^x)' '=\left( \frac{b^h-1}{h} \right)^2 b^{-h}\, b^x \quad >0.$$ Therefore, the function is concave up for every positive $b\ne 1$. $\square$

This is how the second derivative is computed with a spreadsheet:

Link to file: Spreadsheets.

Newton's Laws

The concepts introduced above allow us to state some elementary facts about the discrete Newtonian physics, in dimension $1$.

First, the time is given by the standard domain, the discrete representation of ${\bf R}$. Second, the space is given by ${\bf R}$, at the simplest.

The main quantities we are to study are:

- the location $r$ is a node function;

- the displacement $dr$ is an edge function;

- the velocity $v=r'$ is a node function;

- the momentum $p=mv$, where $m$ is a constant mass, is also a node function;

- the impulse $J=dp$ is an edge function;

- the acceleration $a=r' '$ is a node function.

Some of these discrete functions are seen in the following duality diagram: $$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{lcccc} a\ ..\ r\ & \ra{d}& dr &\\ \ua{\star} & \ne & \da{\star} & \\ J/m& \la{d} & v& \\ \end{array} $$ Note that making the full circle won't bring us back to $r$.

For example, the upward concavity of the discrete function below is obvious, which indicates a positive acceleration:

Newton's First Law: If the net force is zero, then the velocity $v$ of the object is constant: $$F=0 \Longrightarrow v=const.$$

The law can be restated without invoking the geometry of time:

- If the net force is zero, then the displacement $dr$ of the object is constant:

$$F=0 \Longrightarrow dr=const.$$ The law shows that the only possible type of motion in this force-less and distance-less space-time is uniform; i.e., it is a repeated addition: $$r(t+1)=r(t)+c.$$

Newton's Second Law: The net force on an object is equal to the derivative of its momentum $p$: $$F=p'.$$

As we have seen, the second derivative is meaningless without specifying geometry, the geometry of time.

Newton's Third Law: If one object exerts a force $F_1$ on another object, the latter simultaneously exerts a force $F_2$ on the former, and the two forces are exactly opposite: $$F_1 = -F_2.$$

Law of Conservation of Momentum: In a system of objects that is “closed”; i.e.,

- there is no exchange of matter with its surroundings, and

- there are no external forces;

the total momentum is constant: $$p=const.$$

In other words, $$J=dp=0.$$ To prove this, consider two particles interacting with each other. By the third law, the forces between them are exactly opposite: $$F_1=-F_2.$$ Due to the second law, we conclude that $$p_1'=-p_2',$$ or $$(p_1+p_2)'=0.$$
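The argument can be replayed step by step for two particles. The sketch below is a toy illustration under stated assumptions: time is divided into edges of length $h$, the force on the first particle over each edge is supplied by a hypothetical function `force_on_1`, and the momenta are updated through $dp=F\,h$.

```python
from fractions import Fraction


def total_momentum_history(p1, p2, force_on_1, steps, h):
    """Advance two momenta over `steps` edges of time; record p1 + p2 at each node.

    Second law on each edge: dp = F * h.  Third law: the force on
    particle 2 is the opposite of the force on particle 1.
    """
    totals = [p1 + p2]
    for i in range(steps):
        F1 = force_on_1(i)      # force on particle 1 over the i-th edge
        F2 = -F1                # third law
        p1 = p1 + F1 * h
        p2 = p2 + F2 * h
        totals.append(p1 + p2)
    return totals
```

Whatever the interaction force is, every entry of the returned list equals the initial total momentum.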

Exercise. State the equation of motion for a variable-mass system (such as a rocket). Hint: apply the second law to the entire, constant-mass system.

Exercise. Create a spreadsheet for all of these quantities.

To study physics in dimension $3$, one simply combines three discrete functions for each of the above quantities: $$f_i:{\mathbb R}\to {\bf R},\ i=1,2,3,$$ into one: $$f=(f_1,f_2,f_3):{\mathbb R}\to {\bf R}^3.$$

We have

$$ \Delta X = -\frac{\mu}{r^2}u,$$

where $u$ is a unit vector such that $X = ru$. It follows about the angular momentum $H$ that

$$H = X \times dX = ru \times d(ru) = ru \times (rdu+dru) = r^2(u \times du) + rdr(u \times u) = r^2u \times du.$$

Now consider

$$\Delta X \times H = -\frac{\mu }{r^2}u \times (r^2u \times du) = -\mu u \times (u \times du) = -\mu[(u\cdot du)u-(u\cdot u)du].$$

(see Vector triple product). Notice that $u\cdot u = |u|^2 = 1$.

$$u\cdot du = \frac{1}{2}(u\cdot du + du\cdot u) = \frac{1}{2}\frac{d}{dt}(u\cdot u) = 0 $$

Substituting this into the previous equation, one gets:

$$\Delta X\times H=\mu du -\mu(u\cdot du)u.$$

Integrating both sides:

$$dX\times H=\mu u + c \sum -\mu (u\cdot du)u,$$

where $c$ is a constant vector. Dotting this with $X$ yields:

$$ X\cdot (dX\times H)=X\cdot(\mu u + c) = \mu X\cdot u + X\cdot c = \mu r(u\cdot u)+rc\cos(\theta)+ =r(\mu + c\cos(\theta)+ ),$$

where $\theta$ is the angle between $X$ and $c$. Solving for $r$:

$$r = \frac{X\cdot(dX\times H)}{\mu + c\cos(\theta)+} = \frac{(X\times dX)\cdot H}{\mu + c\cos(\theta)+} = \frac{||H||^2}{\mu + c\cos(\theta)+}$$

Making the substitutions

$$p=\frac{||H||^2}{\mu}\text{ and }e=\frac{c}{\mu},$$

we arrive at the equation

$$r = \frac{p}{1 + e \cdot \cos \theta}.$$