Matrices as functions

Vector functions

Recall from Calculus 3...

Consider $u=f(x,y)=2x-3y$, a function of two variables. It's also a scalar function, because $u$ is a number $\in {\bf R}$.

Notation for such a function is:

- $f \colon {\bf R}^2 \rightarrow {\bf R}$ , meaning

- $f \colon (x,y) \rightarrow u$.

Another: $v=g(x,y)=x+5y$ is also a scalar function of two variables:

- $g \colon {\bf R}^2 \rightarrow {\bf R}$ , meaning

- $g \colon (x,y) \rightarrow v$.

Let's combine them, so that we have one function

- $F \colon {\bf R}^2 \rightarrow {\bf R}^2$, meaning

- $F \colon (x,y) \rightarrow (u,v)$.

Note: these are vector spaces, ${\bf R}^2$. We just combined $u$ and $v$ in one vector $(u,v)$.

Then this is the formula for $F$: $$F(x,y) = (u,v) = (2x-3y,x+5y).$$ Here $(x,y)$ is the input and $(u,v)$ is the output. Both are vectors!

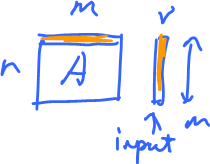

That's what's behind the formula:

$$\stackrel{ {\rm vector} } {\rightarrow} F \stackrel{ {\rm vector} }{\rightarrow}.$$

It's a vector function!

But the formula $F(x,y)=(2x-3y,x+5y)$ depends on $x$ and $y$, not $(x,y)$.

This is better:

$$F(x,y) = \left[ \begin{array}{} 2 & -3 \\ 1 & 5 \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] = AX.$$

It turns out this is a matrix product! It is called a matrix representation of the function $F$.
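As a quick numerical sanity check, the component formula and the matrix product can be compared on a few inputs. This is only a sketch in plain Python; the helper names `F` and `mat_vec` are made up for illustration and are not part of these notes.

```python
# Hypothetical helpers for illustration only.

def F(x, y):
    """The vector function given by the component formulas u = 2x - 3y, v = x + 5y."""
    return (2 * x - 3 * y, x + 5 * y)

def mat_vec(A, X):
    """Multiply a matrix (a list of rows) by a column vector (a list of entries)."""
    return tuple(sum(a * x for a, x in zip(row, X)) for row in A)

A = [[2, -3],
     [1, 5]]

# Compare the two descriptions of F on a few sample inputs.
samples = [(0, 0), (1, 0), (0, 1), (2, -1), (-3, 4)]
for x, y in samples:
    assert F(x, y) == mat_vec(A, [x, y])

print(mat_vec(A, [2, -1]))  # (7, -3)
```

Agreement on a handful of points is not a proof, of course; the algebra above is what shows the two formulas coincide for every $(x,y)$.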

This works out but note that $f$ and $g$ happen to be linear.

Does it work when the function isn't linear?

What if $F(x,y)=(e^x,\frac{1}{y})$? Try.

But if we do have a matrix, we can always understand it as a function. For example:

$$\left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} ax+by \\ cx+dy \end{array} \right]=(ax+by,cx+dy),$$

for some $a,b,c,d$ fixed.

A function that can be represented this way is called linear, in dimension $2$.

What about dimension $1$?

Very familiar: $f(x)=ax$ is a linear function.

Note: In calculus, $ax+b$ is called "linear". Here it no longer is, unless $b=0$: it can't be written as $cx$ because it sends $0$ to $b$. "Affine" is the more precise term.

Matrix as a linear function

Compare $A$ and $AX$.

While $AX$ is a formula of the function ($X$ is the variable),

$$A(X)=AX=\left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} ax+by \\ cx+dy \end{array} \right]=(ax+by,cx+dy).$$ $A$ is just a table. It's the table, however, that contains all the information about the function.

So one can think of $A$ as an abbreviation of $AX$.

Example: Consider examples in dimension $2$:

$$A \colon {\bf R}^2 \rightarrow {\bf R}^2$$

Note: we use the same letter for the function and the matrix.

Then $A$ is a transformation of the plane. It takes one point ($=$ vector) $(x,y)$ to another point ($=$ vector).

Points are vectors and vice versa.

Examples of linear functions in ${\bf R}^2$:

What about "abstract" vector spaces?

Consider $A \colon {\bf R}^2 \rightarrow {\bf R}^2$, as vector spaces. Elements in the first ${\bf R}^2$ are considered to be $\left[ \begin{array}{} x \\ y \end{array} \right]$ and in the second, $\left[ \begin{array}{} u \\ v \end{array} \right]$.

Now, consider the algebraic operations of these vector spaces. What happens to them under $A$?

We know:

$$A\left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} u \\ v \end{array} \right], A\left[ \begin{array}{} x' \\ y' \end{array} \right] = \left[ \begin{array}{} u' \\ v' \end{array} \right],$$

now what is

$A \left( \left[ \begin{array}{} x \\ y \end{array} \right] + \left[ \begin{array}{} x' \\ y' \end{array} \right] \right) = ?$

Let's recall some operations that behave well under addition of functions. In Calculus 1:

- derivative,

- integral, and

- limits in general.

Example:

$$\begin{array}{} (f+g)' &= f'+g' & ({\rm sum \hspace{3pt} rule}) \\ (cf)' &= cf' & ({\rm constant \hspace{3pt} multiple \hspace{3pt} rule}) \end{array}$$

These are, in fact, the two operations of a vector space.

So, differentiation respects

- addition,

- scalar multiplication.

They are the algebraic operations of $C^1({\bf R})$.

These two properties taken together are called linearity of differentiation.

This example suggests what "should" happen to a sum of vectors: $$A \left( \left[ \begin{array}{} x \\ y \end{array} \right] + \left[ \begin{array}{} x' \\ y' \end{array} \right] \right) = A \left[ \begin{array}{} x \\ y \end{array} \right] + A\left[ \begin{array}{} x' \\ y' \end{array} \right]$$

Let's verify:

$$\begin{array}{} LHS &= \left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left( \left[ \begin{array}{} x \\ y \end{array} \right] + \left[ \begin{array}{} x' \\ y' \end{array} \right] \right) \\ &= \left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left[ \begin{array}{} x + x' \\ y + y' \end{array} \right] \\ &= \left[ \begin{array}{} a(x+x')+b(y+y') \\ c(x+x')+d(y+y') \end{array} \right] \in {\bf R}^2 \end{array}$$

$$\begin{array}{} RHS &= \left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] + \left[ \begin{array}{} a & b \\ c & d \end{array} \right] \left[ \begin{array}{} x' \\ y' \end{array} \right] \\ &= \left[ \begin{array}{} ax+by \\ cx+dy \end{array} \right] + \left[ \begin{array}{} ax'+by' \\ cx'+dy' \end{array} \right] \\ &=\left[ \begin{array}{} ax+by+ax'+by' \\ cx + dy + cx' + dy' \end{array} \right] \end{array}$$

They are the same, after factoring.

Same property holds for all dimensions.

Next, matrix multiplication also respects scalar multiplication:

$$A \left( r\left[ \begin{array}{} x \\ y \end{array} \right] \right) = rA \left[ \begin{array}{} x \\ y \end{array} \right]$$

It's easy to verify for these spaces.
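Both properties can also be spot-checked numerically. The sketch below uses plain Python lists as vectors; the helper names and the sample values of $a,b,c,d$, the two vectors, and $r$ are arbitrary choices made for illustration.

```python
# Illustrative helpers; the names are not from the notes.

def mat_vec(A, X):
    """Multiply a matrix (a list of rows) by a column vector."""
    return [sum(a * x for a, x in zip(row, X)) for row in A]

def add(X, Y):
    """Componentwise sum of two vectors."""
    return [x + y for x, y in zip(X, Y)]

def scale(r, X):
    """Scalar multiple of a vector."""
    return [r * x for x in X]

A = [[2, -3],
     [1, 5]]   # arbitrary sample entries a, b, c, d
X = [4, -1]
Y = [-2, 7]
r = 3

# Additivity: A(X + Y) = AX + AY
assert mat_vec(A, add(X, Y)) == add(mat_vec(A, X), mat_vec(A, Y))

# Scalar multiplication: A(rX) = r(AX)
assert mat_vec(A, scale(r, X)) == scale(r, mat_vec(A, X))
```

A check on one pair of vectors only illustrates the identities; the computation with general $x,y,x',y'$ above is what proves them.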

Now, this is the general...

Definition: A function on vector spaces that satisfies these properties is called linear: $$\begin{array}{} A(rv) &= r(Av), \\ A(u+v) &= A(u)+A(v). \end{array}$$

Once again, this is supposed to remind you of:

$$\begin{array}{} (f+g)' &= f'+g', \\ (cf)' &= cf', \end{array}$$

or

$$\begin{array}{} \frac{d}{dx}(f+g) &= \frac{d}{dx}f + \frac{d}{dx} g ,\\ \frac{d}{dx}(cf) &= c \frac{d}{dx}f. \end{array}$$

Examples:

- 1. If $A \in {\bf M}(n,m)$, then $A$ is a function, $A \colon {\bf R}^m \rightarrow {\bf R}^n$.

- 2. $\frac{d}{dx} \colon C^1({\bf R}) \rightarrow ?$, (from the differentiable functions to what?)

Also, we can narrow this down to polynomials: $$\begin{array}{} \frac{d}{dx} \colon & {\bf P} \rightarrow {\bf P} \\ & {\bf P}^n \rightarrow {\bf P}^n \\ & {\bf P}^n \rightarrow {\bf P}^{n-1} \end{array}$$

As in: $\frac{d}{dx}(x^2+x)=2x+1$

- 3. $\displaystyle\int \colon C({\bf R}) \rightarrow C({\bf R})$

This makes sense because of the Sum Rule and the Constant Multiple Rule, and because antiderivatives are continuous.

Problem: $f \rightarrow \displaystyle\int f + C$ gives many answers, one for each constant $C$.

It's an "ill-defined" function. How to fix this?

Let $V = \{f \in C({\bf R}) \colon f(0)=0 \}$ and choose the antiderivative that lies in $V$. Alternatively, use an equivalence relation ($f \sim g$ if $f'=g'$).

Exercise: Turn definite integration into a linear function.

Homework: Let $V$ be the set of convergent sequences: $V = \{ (x_1,\ldots,x_n,\ldots) \colon x_n \in {\bf R}, x_n \rightarrow a {\rm \hspace{3pt} for \hspace{3pt} some \hspace{3pt}} a \in {\bf R} \}$.

- 1. Define operations on $V$ that make it a vector space. Prove the axioms.

- 2. Let $S$ be the set of all sequences of $0$'s with one $1$:

$$S = \{(1,0,0,\ldots), (0,1,0,\ldots),(0,0,1,0,\ldots), \ldots \}.$$ Is $S$ linearly independent, spanning, a basis of $V$?

- 3. What is the dimension of $V$?

Linear functions on vector spaces, linear operators

Recall...

Definition: Given two vector spaces $V$ and $U$, a function $A \colon V \rightarrow U$ is called a linear operator if it "respects the operations" of these spaces:

$$\begin{array}{} A(x+y) &= A(x) + A(y) \\ A(rx) &= rA(x) \end{array}$$

where $x,y \in V$ and $r \in {\bf R}$.

Note: on the left $+, \cdot$ are in $V$, on the right, $+, \cdot$ are in $U$.

Properties...

Property 1: Linear operators take zero to zero: $A(0)=0$ (note: or $A(0_V)=0_U$, two different zeros!)

Proof: For any $r \in {\bf R}$, $A(0)=A(r \cdot 0) = rA(0)$. Now take $r=0$: then $rA(0)=0$, so $A(0)=0$.

Property 2: Linear operators take inverse to inverse: $A(-x)=-A(x)$ for all $x \in V$.

Proof: Same trick:

$$A(-x)=A((-1)x) = (-1)A(x) = -A(x)$$

Property 3: Linear operators take linear combinations to linear combinations with the same coefficients.

$$A(r_1x_1+r_2x_2+\ldots+r_nx_n) = r_1A(x_1)+r_2A(x_2)+\ldots+r_nA(x_n).$$

Proof: Apply the two properties in the definition and use induction on $n$.

Example: What if $U=V$? Then $A \colon V \rightarrow V$ is a linear operator, a "self-function". In particular, if we choose $A(x)=x$ for all $x$, it is called the identity operator. Prove it's linear.

Notation: $Id$, $Id_V$, ${\bf 1}_V$.

Example: For any pair of spaces, we can define a linear operator $A \colon V \rightarrow U$ by $A(x)=0$ for every $x \in V$. This is the zero operator.

Theorem (Uniqueness): Suppose $V$ and $U$ are vector spaces, $S = \{v_1,\ldots,v_n\} \subset V$, and $A, B \colon V \rightarrow U$ are linear operators. Suppose $S$ is a basis of $V$ and the values of $A$ and $B$ coincide on $S$:

$$A(v_i) = B(v_i)$$

for all $i=1,\ldots,n$.

Then

$$A=B.$$

Note: (illustrate in dimension $1$) Given $f(x)=ax$, $g(x)=bx$, compare $f$ and $g$ at $x=1$. So, if $f(1)=g(1)$, then $f=g$.

Conclusion: we can't drop the condition ${\rm span \hspace{3pt}} S = V$ from the theorem. We can drop linear independence of $S$ (exercise).

Proof: Recall $A=B$ means $A(x)=B(x)$ for all $x \in V$. Pick an arbitrary $x$ and prove that.

Since $S$ spans $V$, there are $a_1,\ldots,a_n \in {\bf R}$ such that $$x = a_1v_1+\ldots+a_nv_n.$$ Then $$\begin{array}{} A(x) &= A(a_1v_1+\ldots+a_nv_n) \\ &= a_1A(v_1) + \ldots + a_nA(v_n) \\ &= a_1B(v_1) + \ldots + a_nB(v_n) \\ &= B(a_1v_1+\ldots+a_nv_n) \\ &= B(x). \end{array}$$

Above, we use Property 3 (linearity), then the theorem's assumption, and then Property 3 again. $\blacksquare$
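The theorem says that a linear operator is pinned down by its values on a basis. A small sketch of that idea in ${\bf R}^2$ follows; the matrix, the non-standard basis $v_1=(1,1)$, $v_2=(1,-1)$, and all function names are assumptions chosen for illustration. The operator `B` is built using only the values $A(v_1)$ and $A(v_2)$, and it agrees with $A$ on every vector tested.

```python
from fractions import Fraction

def mat_vec(A, X):
    """Multiply a matrix (a list of rows) by a column vector."""
    return [sum(a * x for a, x in zip(row, X)) for row in A]

A = [[2, -3],
     [1, 5]]   # sample operator, given by a matrix

# A basis of R^2 that is not the standard one (chosen for illustration).
v1, v2 = [1, 1], [1, -1]

# The only data B is allowed to use: the values of A on the basis.
Av1, Av2 = mat_vec(A, v1), mat_vec(A, v2)

def B(X):
    """Extend linearly: write X = a1*v1 + a2*v2, then return a1*A(v1) + a2*A(v2)."""
    x, y = X
    a1 = Fraction(x + y, 2)   # coordinates of X with respect to {v1, v2}
    a2 = Fraction(x - y, 2)
    return [a1 * p + a2 * q for p, q in zip(Av1, Av2)]

# B was built from two values of A only, yet it matches A everywhere we look.
for X in ([0, 0], [1, 0], [3, -2], [-5, 8]):
    assert B(X) == mat_vec(A, X)
```

Exact fractions are used for the coordinates so that the comparison is not affected by rounding.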

Examples of linear operators

Fact: Matrices are linear operators on ${\bf R}^n$.

That is, once a basis is given; otherwise the standard basis is assumed.

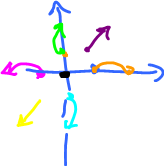

Rotation through $90^\circ$

$$\begin{array}{} R \colon & (1,0) \rightarrow (0,1) \\ & (0,1) \rightarrow (-1,0) \end{array}$$

Turns out, we only have to look at the basis!

Claim: $R$ can be represented by a matrix made of these vectors:

$R = \left[ \begin{array}{} 0 & -1 \\ 1 & 0 \end{array} \right]$, i.e. we combine these vectors as columns.

Verify: $R(1,1) = \left[ \begin{array}{} 0 & -1 \\ 1 & 0 \end{array} \right] \left[ \begin{array}{} 1 \\ 1 \end{array} \right] = \left[ \begin{array}{} -1 \\ 1 \end{array} \right]$.
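The recipe "combine the images of the basis vectors as columns" can be written out directly. This is a minimal sketch; `matrix_from_columns` and `mat_vec` are hypothetical helper names introduced here.

```python
def matrix_from_columns(columns):
    """Build a matrix (a list of rows) whose columns are the given vectors."""
    return [list(row) for row in zip(*columns)]

def mat_vec(A, X):
    """Multiply a matrix (a list of rows) by a column vector."""
    return [sum(a * x for a, x in zip(row, X)) for row in A]

# Images of the standard basis vectors under the 90-degree rotation R.
R_e1 = (0, 1)    # R(1,0)
R_e2 = (-1, 0)   # R(0,1)

R = matrix_from_columns([R_e1, R_e2])
assert R == [[0, -1],
             [1, 0]]

# The same check as above: R(1,1) = (-1,1).
assert mat_vec(R, [1, 1]) == [-1, 1]
```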

Projection $P$ on the $x$-axis: $$(x,y) \rightarrow (x,0).$$

$$\left[ \begin{array}{} ? & ? \\ ? & ? \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} x \\ 0 \end{array} \right]$$

What do we put here so that it works? We can guess:

$$\left[ \begin{array}{} 1 & 0 \\ 0 & 0 \end{array} \right] \left[ \begin{array}{} x \\ y \end{array} \right] = \left[ \begin{array}{} x \\ 0 \end{array} \right]$$
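Instead of guessing, one can use the same recipe as for the rotation and look only at the basis: the columns of the matrix are the images of $(1,0)$ and $(0,1)$.

$$\begin{array}{} P \colon & (1,0) \rightarrow (1,0) \\ & (0,1) \rightarrow (0,0) \end{array} \quad \Longrightarrow \quad P = \left[ \begin{array}{} 1 & 0 \\ 0 & 0 \end{array} \right]$$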

Differentiation on ${\bf P}^n$, ${\rm dim \hspace{3pt}} {\bf P}^n = n+1$:

$$\frac{d}{dx}(a_nx^n+\ldots+a_1x+a_0) = na_nx^{n-1}+(n-1)a_{n-1}x^{n-2}+\ldots+a_1$$

$D \colon {\bf P}^n \rightarrow {\bf P}^n$

$$D \colon (a_0,a_1,\ldots,a_n) \rightarrow (a_1,2a_2,\ldots,(n-1)a_{n-1},na_n,0)$$

$$\left[ \begin{array}{} 0 & 1 & 0 & 0 & \ldots & 0 \\ 0 & 0 & 2 & 0 & \ldots & 0 \\ \vdots \\ 0 & 0 & 0 & 0 & \ldots & n \\ 0 & 0 & 0 & 0 & \ldots & 0 \end{array} \right] \left[ \begin{array}{} a_0 \\ a_1 \\ a_2 \\ \vdots \\ a_{n-1} \\ a_n \end{array} \right] = \left[ \begin{array}{} a_1 \\ 2a_2 \\ \vdots \\ na_n \\ 0 \end{array} \right]$$

All zeros except right above the diagonal.
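For a concrete case, here is a sketch with $n=3$ that works on coefficient lists $(a_0,a_1,\ldots,a_n)$. The function names are made up for illustration. The matrix is assembled column by column from the images of the basis $1,x,x^2,x^3$, and then compared with the coefficient formula on the earlier example $x^2+x$.

```python
def differentiate(coeffs):
    """Map (a_0, a_1, ..., a_n) to (a_1, 2*a_2, ..., n*a_n, 0)."""
    n = len(coeffs) - 1
    return [k * coeffs[k] for k in range(1, n + 1)] + [0]

def mat_vec(A, X):
    """Multiply a matrix (a list of rows) by a column vector."""
    return [sum(a * x for a, x in zip(row, X)) for row in A]

n = 3
# Standard basis of P^n in coefficient form: 1, x, x^2, ..., x^n.
basis = [[1 if i == j else 0 for i in range(n + 1)] for j in range(n + 1)]

# Columns of the matrix are the images of the basis vectors.
columns = [differentiate(e) for e in basis]
D = [list(row) for row in zip(*columns)]

assert D == [[0, 1, 0, 0],
             [0, 0, 2, 0],
             [0, 0, 0, 3],
             [0, 0, 0, 0]]

p = [0, 1, 1, 0]   # x + x^2
assert mat_vec(D, p) == differentiate(p) == [1, 2, 0, 0]   # 1 + 2x
```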

Exercise: If $D=\{a_{ij}\}$, write a formula for $a_{ij}$.

The derivative $f'(a)$ of $f \colon {\bf R} \rightarrow {\bf R}$ at a fixed point $a$ is a linear operator. Suppose $f'(a)=3$, or $\frac{dy}{dx}=3$, or $dy=3dx$ (differential form).

Think of $dx$ and $dy$ as variables; then $f'(a)$ is multiplication by $3$.

So $f'(a) \colon {\bf R} \rightarrow {\bf R}$ is linear. The same applies to ${\rm grad \hspace{3pt}} f(a)$, for $f \colon {\bf R}^n \rightarrow {\bf R}$, and to other generalizations of the derivative.

What is this? $A \colon V \rightarrow V$, for a given $r \in {\bf R}$, define $A(x)=rx$.

What is it in ${\bf R}^2$, say for $r=2$?

$$\left[ \begin{array}{} x \\ y \end{array} \right] \stackrel{A}{\rightarrow} \left[ \begin{array}{} 2x \\ 2y \end{array} \right] = 2\left[ \begin{array}{} x \\ y \end{array} \right]$$

It's...

Radial stretch $2X$:

Geometrically:

Its matrix: $\left[ \begin{array}{} 2 & 0 \\ 0 & 2 \end{array} \right]$.

What about $\left[ \begin{array}{} 2 & 0 \\ 0 & 3 \end{array} \right]$? It's...

Stretch, non-uniform:

Exercise.