Chain maps

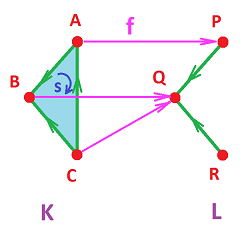

Cubical maps

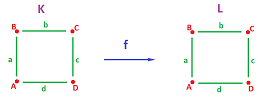

Let's consider maps of the “cubical circle” to itself $$f: X = {\bf S}^1 \to Y = {\bf S}^1 .$$ We represent $X$ and $Y$ as two identical cubical complexes $K$ and $L$ and then find an appropriate representation $g:K\to L$ for each $f$ in terms of their cells. More precisely, we are after $$g=f_{\Delta}:C(K)\to C(L).$$

We will try to find several possible functions $g$ under the following condition:

- (A) $g$ maps each cell in $K$ to a cell in $L$ of the same dimension, otherwise it's $0$. $\\$

The condition corresponds to the clone/collapse condition for a simplicial map.

We make up a few simple examples.

Example (identity). $$\begin{array}{lllllll} g(A) = A, &g(B) = B, &g(C) = C, &g(D) = D, \\ g(a) = a, &g(b) = b, &g(c) = c, &g(d) = d. \end{array}$$ $\square$

Example (constant). $$\begin{array}{lllllll} g(A) = A, &g(B) = A, &g(C) = A, &g(D) = A, \\ g(a) = 0, &g(b) = 0, &g(c) = 0, &g(d) = 0. \end{array}$$ All $1$-cells collapse. Unlike the case of a general constant map, there are only $4$ such maps for these cubical complexes. $\square$

Example (flip). $$\begin{array}{lllllll} g(A) = D, &g(B) = C, &g(C) = B, &g(D) = A, \\ g(a) = c, &g(b) = b, &g(c) = a, &g(d) = d. \end{array}$$ This is a vertical flip; there are also the horizontal and diagonal flips, a total of $4$. Only these four axes allow condition (A) to be satisfied. $\square$

Example (rotation). $$\begin{array}{llllll} &\partial (f_1(AB)) &=\partial (0) &=0;\\ \hspace{.14in}&f_0( \partial (AB))&= f_0( A+B)=f_0(A)+f_0(B)=X+X &=0.&&\hspace{.14in}\square \end{array}$$

Exercise. Complete the example.

Next, let's try these values for our vertices: $$g(A) = A, \ g(B) = C,\ ...$$ This is trouble. Even though we can find a cell for $g(a)$, it can't be $AC$ because it's not in $L$. Therefore, $g(a)$ won't be aligned with its endpoints. As a result, $g$ breaks apart. To prevent this from happening, we need to require that the endpoints of the image in $L$ of any edge in $K$ are the images of the endpoints of the edge.

Furthermore, we want to ensure the cells of all dimensions remain attached after $g$ is applied and we require:

- (B) $g$ takes boundary to boundary. $\\$

We arrive at the familiar algebraic continuity condition: $$\partial g = g \partial.$$

Exercise. Verify this condition for the examples above.

Observe now that $g$ is defined on complex $K$ but its values aren't technically all in $L$. There are also $0$s. They aren't cells, but rather chains. Recall that, even though $g$ is defined on cells of $K$ only, it can be extended to all chains, by linearity: $$g(A + B) = g(A) + g(B), ...$$

Thus, condition (A) simply means that $g$ maps $k$-chains to $k$-chains. More precisely, $g$ is a collection of functions (a chain map): $$g_k : C_k(K) \to C_k(L),\ k = 0, 1, 2, ....$$ For brevity we use the following notation: $$g : C(K) \to C(L).$$
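The extension by linearity and condition (B) can be checked mechanically. Below is a minimal sketch over ${\bf Z}_2$, where a chain is modeled as a set of cells and addition is the symmetric difference. The edge labels $a=AB$, $b=BC$, $c=CD$, $d=DA$ and the helper names (`extend`, `is_chain_map`, `broken`) are assumptions made for illustration only.

```python
# Chains over Z_2 are modeled as frozensets of cell names.
# Assumption: the edges of the cubical circle are a=AB, b=BC, c=CD, d=DA.
ZERO = frozenset()
BOUNDARY = {
    "A": ZERO, "B": ZERO, "C": ZERO, "D": ZERO,
    "a": frozenset("AB"), "b": frozenset("BC"),
    "c": frozenset("CD"), "d": frozenset("DA"),
}

def cell(name):
    """The chain that consists of a single cell."""
    return frozenset([name])

def extend(values):
    """Extend a function given on cells to all chains by linearity over Z_2."""
    def g(chain):
        result = ZERO
        for c in chain:
            result = result ^ values[c]  # addition mod 2 is symmetric difference
        return result
    return g

boundary = extend(BOUNDARY)

def is_chain_map(values):
    """Check that the boundary of g equals g of the boundary on every cell."""
    g = extend(values)
    return all(boundary(g(cell(c))) == g(boundary(cell(c))) for c in BOUNDARY)

# The vertical flip from the examples above.
flip = {"A": cell("D"), "B": cell("C"), "C": cell("B"), "D": cell("A"),
        "a": cell("c"), "b": cell("b"), "c": cell("a"), "d": cell("d")}
# The constant map: every vertex goes to A, every edge goes to 0.
constant = {**{v: cell("A") for v in "ABCD"}, **{e: ZERO for e in "abcd"}}
# Hypothetical misaligned map: same as the flip except that g(a) = a.
broken = dict(flip, a=cell("a"))

print(is_chain_map(flip), is_chain_map(constant), is_chain_map(broken))
```

The third map fails because the endpoints of $g(a)$ are $A$ and $B$ while the images of the endpoints of $a$ are $D$ and $C$.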

Example (projection). $$\begin{array}{llllll} g(A) = A, &g(B) = A, &g(C) = D, &g(D) = D, \\ g(a) = 0, &g(b) = d, &g(c) = 0, &g(d) = d. \end{array}$$ Let's verify condition (B): $$\begin{array}{lllllll} \partial g(A) = \partial A = 0, \\ g \partial (A) = g(0) = 0. \end{array}$$ Same for the rest of $0$-cells. $$\begin{array}{llllllll} \partial g(a) = \partial (0) = 0,\\ g \partial (a) = g(A + B) = g(A) + g(B) = A + A = 0. \end{array}$$ Same for $c$. $$\begin{array}{lllllll} \partial g(b) = \partial (d) = A + D, \\ g \partial (b) = g(B + C) = g(B) + g(C) = A + D. \end{array}$$ Same for $d$. $\square$

Exercise. Try the “diagonal fold”: $A$ goes to $C$, while $C, B$ and $D$ stay.

In each of these examples, an idea of a map $f$ of the circle/square was present first, then $f$ was realized as a chain map $g$.

Notation: The chain map of $f$ is denoted by $$g = f_{\Delta} : C(K) \to C(L).$$

Let's make sure that this idea makes sense by reversing this construction. This time, we suppose instead that we already have a chain map $$g : C(K) \to C(L).$$ What is a possible “realization” of $g$, $$f : |K| \to |L|?$$ The idea is simple: if we know where each vertex goes under $f$, we can construct the rest of $f$ using linearity, i.e., interpolation.

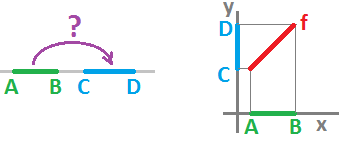

Example (interpolation). A simple example first. Suppose $$K = \{A, B, a :\ \partial a = B-A \},\ L = \{C, D, b:\ \partial b = D-C \}$$ are two complexes representing the closed intervals. Define a chain map: $$g(A) = C,\ g(B) = D,\ g(a) = b.$$ If the first two identities are all we know, we can still construct a continuous function $f : |K| \to |L|$ such that $f_{\Delta} = g$. The third identity will be taken care of by condition (B).

If we include $|K|$ and $|L|$ as subsets of the $x$-axis and the $y$-axis respectively, the solution becomes obvious: $$f(x) := C + \tfrac{D-C}{B-A} \cdot (x-A).$$ This approach allows us to give a single formula for realizations of all chain operators: $$f(x) := g(A) + \tfrac{g(B)-g(A)}{B-A} \cdot (x-A).$$ For example, suppose we have a constant map: $$g(A) = C,\ g(B) = C,\ g(a) = 0.$$ Then $$\hspace{.33in}f(x) = C + \tfrac{C-C}{B-A} \cdot (x-A) = C.\hspace{.33in}\square$$
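The interpolation formula above translates directly into code. This is a small sketch; the function name `realize` and the sample endpoint values are hypothetical.

```python
def realize(A, B, gA, gB):
    """Return the function f with f(A) = gA and f(B) = gB, linear in between."""
    def f(x):
        return gA + (gB - gA) / (B - A) * (x - A)
    return f

# Hypothetical coordinates: K = [0, 1] on the x-axis, L = [2, 5] on the y-axis.
f = realize(0.0, 1.0, 2.0, 5.0)
print(f(0.0), f(0.5), f(1.0))

# The constant chain map g(A) = g(B) = C is realized by a constant function.
const = realize(0.0, 1.0, 2.0, 2.0)
print(const(0.3))
```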

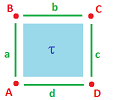

Example. Let's consider a chain map $g$ of the complex $K$ representing the solid square.

Knowing the values of $g$ on the $0$-cells of $K$ gives us the values of $f=|g|$ at those points. How do we extend it to the rest of $K$?

An arbitrary point $u$ in $|K|$ is represented as a convex combination of $A, B, C, D$: $$u = sA + tB + pC + qD, \text{ with } s + t + p + q = 1.$$ Then we define $f(u)$ to be $$f(u) := sf(A) + tf(B) + pf(C) + qf(D).$$ Accordingly, $f(u)$ is a convex combination of $f(A), f(B), f(C), f(D)$. But all of these are vertices of $|L|$, hence $f(u) \in |L|$. $\square$

Example (projection). Let's consider the projection: $$\begin{array}{llllll} g(A) = A, &g(B) = A, &g(C) = D, &g(D) = D, \\ g(a) = 0, &g(b) = d, &g(c) = 0, &g(d) = d, \\ g(\tau) = 0. \end{array}$$ Then, $$\begin{array}{lllllllllll} f(u) &= sf(A) + tf(B) + pf(C) + qf(D) \\ &= sA + tA + pD + qD \\ &= (s+t)A + (p+q)D. \end{array}$$ Due to $(s+t) + (p+q) = 1$, we conclude that $f(u)$ belongs to the interval $AD$. $\square$
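The convex-combination computation can be tried numerically. The sketch below follows the displayed computation, in which $A, B$ go to $A$ and $C, D$ go to $D$. The coordinates of the square and the helper names are assumptions.

```python
# Assumed coordinates for the vertices of the solid square.
A, B, C, D = (0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)

def combine(weights, points):
    """The combination of the points with the given weights."""
    x = sum(w * p[0] for w, p in zip(weights, points))
    y = sum(w * p[1] for w, p in zip(weights, points))
    return (x, y)

def f(weights):
    """Realization of the projection: f(u) = sA + tA + pD + qD."""
    return combine(weights, [A, A, D, D])

weights = (0.25, 0.25, 0.25, 0.25)    # s, t, p, q with s + t + p + q = 1
u = combine(weights, [A, B, C, D])    # a point of the square
print(u, f(weights))                  # f(u) has x = 0, so it lies on the edge AD
```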

Commutative diagrams

This very fruitful approach will be used throughout.

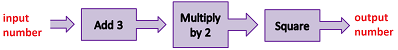

Compositions of functions can be visualized as flowcharts:

In general, we represent a function $f : X \to Y$ diagrammatically as a black box that takes an input and releases an output (same $y$ for same $x$): $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccccc} \text{input} & & \text{function} & & \text{output} \\ x & \ra{} & \begin{array}{|c|}\hline\quad f \quad \\ \hline\end{array} & \ra{} & y \end{array} $$ or, simply, $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} X & \ra{f} & Y. \end{array} $$ Suppose now that we have another function $g : Y \to Z$; how do we represent their composition $gf=g \circ f$?

To compute it, we “wire” their diagrams together consecutively: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccccc} x & \ra{} & \begin{array}{|c|}\hline\quad f \quad \\ \hline\end{array} & \ra{} & y & \ra{} & \begin{array}{|c|}\hline\quad g \quad \\ \hline\end{array} & \ra{} & z \end{array}$$ The standard notation is the following: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} X & \ra{f} & Y & \ra{g} & Z. \end{array} $$ Or, alternatively, we may want to emphasize the resulting composition: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{rclcc} X & \ra{f} & Y \\ &_{gf} \searrow\quad & \da{g} \\ & & Z \end{array} $$ We say that the new function “completes the diagram”.

The point illustrated by the diagram is that, starting with $x\in X$, you can

- go right then down, or

- go diagonally; $\\$

and either way, you get the same result: $$g(f(x))=(gf)(x).$$

In the diagram, this is how the values of the functions are related: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{rrrlll} &x\in& X &\quad\quad \ra{f}\ & Y &\ni f(x) \\ &&& _{gf} \searrow \quad& \da{g} \\ &&& (gf)(x)\in & Z &\ni g(f(x)) \end{array} $$

Example. As an example, we can use this idea to represent the inverse function $f^{-1}$ of $f$. It is the function that completes the diagram with the identity function on $X$: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{llllllllllll} & X & \quad\ra{f} & Y \\ & & _{{\rm Id}_X} \searrow\quad & \da{f^{-1}} \\ \qquad\qquad\qquad\qquad& & & X&\qquad\qquad\qquad\qquad\square \end{array}$$

Exercise. Plot the other diagram for this example.

Example. The restriction of $f:X\to Y$ to subset $A\subset X$ completes this diagram with the inclusion of $A$ into $X$: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{lccllllllll} & A &\quad \hookrightarrow & X \\ & & _{f|_A} \searrow\quad & \da{f} \\ \qquad\qquad\qquad\qquad& & & Y&\qquad\qquad\qquad\qquad\square \end{array}$$

Diagrams like these are used to represent compositions of all kinds of functions: continuous functions, graph functions, homomorphisms, linear operators, and many more.

Exercise. Complete the diagram: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cclccc} \ker f & \hookrightarrow & X \\ & _{?} \searrow & \da{f}\\ & & Y& \end{array} $$

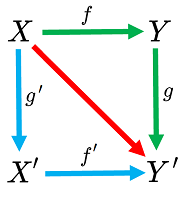

A commutative diagram may be of any shape. For example, consider this square: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{lclccccccc} X & \ra{f} & Y \\ \da{g'} & \searrow & \da{g} \\ X' & \ra{f'} & Y' \end{array} $$ As before, go right then down, or go down then right, with the same result: $$gf = f'g'.$$ Both give you the function of the diagonal arrow!

This identity is the reason why it makes sense to call such a diagram “commutative”.

The illustration above explains how the blue and green threads are tied together in the beginning -- as we start with the same $x$ in the left upper corner -- and at the end -- where the output of these compositions in the right bottom corner turns out to be the same. It is as if the commutativity turns this combination of loose threads into a piece of fabric!

The algebraic continuity condition in the last subsection $$\partial _Jf_1(e)=f_0( \partial _G e)$$ is also represented as a commutative diagram: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccccccccc} C_1(G) & \ra{f_1} & C_1(J) \\ \da{\partial _G} & \searrow & \da{\partial _J} \\ C_0(G) & \ra{f_0} & C_0(J). \end{array} $$

Exercise. Draw a commutative diagram for the composition of two chain maps.

Simplicial maps

This is how we defined cell maps for the $1$-dimensional case. What we require from the edge function $f_E$ is the following:

- for each edge $e$, $f_N$ takes its endpoints to the endpoints of $f_E(e)$. $\\$

Or, in a more compact form, $$f(A)=X, \ f(B)=Y \Longleftrightarrow f(AB)=XY.$$ We say that, in this case, the edge is cloned.

But what about the collapsed edges? It's easy; we just require:

- for each edge $e$, if $e$ is taken to node $X$, then so are its endpoints, and vice versa. $\\$

Or, in a more compact form, $$f(A)=f(B)=X \Longleftrightarrow f_E(AB)=X .$$

Definition. A function of graphs $f:G\to J$, $$f=\Big\{f_N:\ N_G\to N_J,\ f_E:E_G \to E_J \cup N_J \Big\},$$ is called a graph map if $$f_E(AB)= \begin{cases} f_N(A)f_N(B) & \text{ if } f_N(A) \ne f_N(B),\\ f_N(A) & \text{ if } f_N(A) = f_N(B). \end{cases}$$

In the most abbreviated form, this discrete continuity condition is: $$f(AB)=f(A)f(B),$$ with the understanding that $XX=X$.

With this property assumed, we only need to provide a map of nodes, and the map of edges will be derived.
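Deriving the map of edges from a map of nodes is a short computation. The sketch below assumes two small graphs, $G$ with edges $AB, BC$ and $J$ with edges $XY, YZ$, and uses a hypothetical helper `derive_edge_map`, which returns `None` when the discrete continuity condition fails.

```python
def derive_edge_map(f_N, edges_G, edges_J):
    """Derive f_E from f_N; return None if some edge has no valid image."""
    f_E = {}
    for (A, B) in edges_G:
        X, Y = f_N[A], f_N[B]
        if X == Y:
            f_E[(A, B)] = X            # the edge collapses to a node
        elif (X, Y) in edges_J or (Y, X) in edges_J:
            f_E[(A, B)] = (X, Y)       # the edge is cloned
        else:
            return None                # no such edge in J: a discontinuity
    return f_E

# Assumed graphs for illustration.
edges_G = [("A", "B"), ("B", "C")]
edges_J = [("X", "Y"), ("Y", "Z")]

clone = derive_edge_map({"A": "X", "B": "Y", "C": "Z"}, edges_G, edges_J)
collapse = derive_edge_map({"A": "X", "B": "Y", "C": "Y"}, edges_G, edges_J)
torn = derive_edge_map({"A": "X", "B": "Z", "C": "Z"}, edges_G, edges_J)
print(clone, collapse, torn)
```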

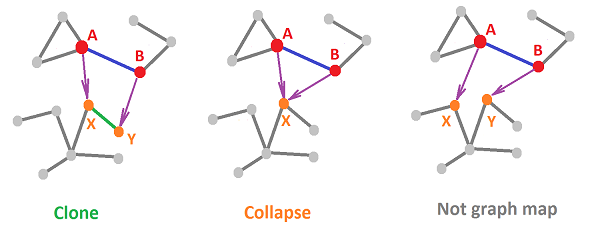

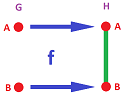

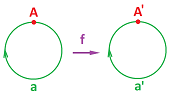

Below we illustrate these ideas for general graphs. Here are two continuous possibilities for a discrete function and one discontinuous:

Following this idea, we introduce the following notation: we allow repetition of vertices in the list that defines a simplex. A list of vertices $$s=A_0 ... A_n$$ is an $m$-simplex if there are exactly $m+1$ distinct vertices on the list.

Now, to understand what a simplicial map $f:K\to L$ between two (so far unoriented) simplicial complexes is, we first assume that $f$ is defined on vertices only: if $A$ is a vertex in $K$ then $f(A)$ is a vertex in $L$. This function can be, so far, arbitrary but not every such function can be extended to the whole complex $K$!

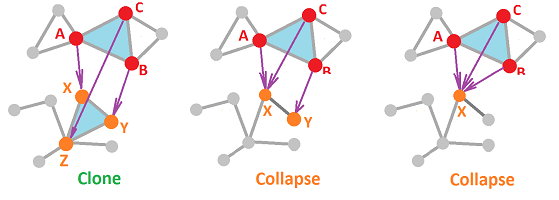

Suppose now we have a $2$-simplex: $$s=A_0A_1A_2 \in K.$$ Let's define $f(s)\in L$. We already know what happens under $f$ to the vertices and to the faces of $s$: $$f(A_iA_j)=f(A_i)f(A_j).$$ This new simplex $f(s)$ will have to be determined by the values of $f$ at these vertices -- the values are already known as vertices in $L$ -- as follows: $$f(s)=f(A_0 A_1 A_2):=f(A_0) f(A_1) f(A_2).$$ The latter is an $m$-simplex ($m \le 2$), which is required to belong to $L$. With the three possibilities for $m$, there are three possibilities for this new simplex. We see that, unlike a $1$-simplex, a $2$-simplex can collapse in two ways:

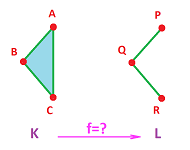

Example. Let's consider possible maps between these two complexes:

- $K=\{A, B, C, AB, BC, CA, ABC\}$,

- $L=\{P, Q, R, PQ, QR\}$.

We start with: $$f(A)=P,\ f(B)=Q,\ f(C)=R.$$ Then, by the above formula, we have $$f(AB)=PQ \in L,\ f(BC)=QR \in L,\ f(CA)=RP \not\in L.$$ Such a simplicial map is impossible!

Let's try another value for $C$: $$f(A)=P,\ f(B)=Q,\ f(C)=Q.$$ Then two edges clone and one collapses: $$f(AB)=PQ \in L,\ f(BC)=Q \in L,\ f(CA)=PQ \in L;$$ and the triangle collapses too: $$f(ABC)=PQ \in L.$$ That works! The result is below:

$\square$

Exercise. Consider three more possibilities.

Definition. A function $f:K\to L$ between two (unoriented) simplicial complexes is called a simplicial map if it satisfies the following: $$A_0 ... A_n \in K \Longrightarrow f(A_0) ... f(A_n) \in L;$$ then, the value of $f$ on a simplex of $K$ is a simplex of $L$ given by $$f(A_0 ... A_n):=f(A_0) ... f(A_n).$$ A bijective simplicial map is called a simplicial isomorphism.

The above condition is a kind of discrete continuity.
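The condition in the definition can be tested directly for the two vertex functions considered in the example above. This is a minimal sketch in which an unoriented simplex is modeled as a set of vertices, so that repeated vertices are merged automatically; the helper names are assumptions.

```python
def simplex(vertices):
    """An unoriented simplex as a set of its vertices, e.g. simplex("AB")."""
    return frozenset(vertices)

K = {simplex(s) for s in ["A", "B", "C", "AB", "BC", "CA", "ABC"]}
L = {simplex(s) for s in ["P", "Q", "R", "PQ", "QR"]}

def image(f, s):
    """The list f(A_0) ... f(A_n) with the repetitions removed."""
    return frozenset(f[v] for v in s)

def is_simplicial_map(f, K, L):
    """Every simplex of K has to be taken to a simplex of L."""
    return all(image(f, s) in L for s in K)

first = {"A": "P", "B": "Q", "C": "R"}    # fails: RP is not in L
second = {"A": "P", "B": "Q", "C": "Q"}   # works: the triangle collapses to PQ
print(is_simplicial_map(first, K, L), is_simplicial_map(second, K, L))
```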

Theorem. A function $f:K\to L$ is a simplicial isomorphism if and only if it is bijective on vertices.

Theorem. A constant function $C:K\to L$ (i.e., a map defined by $C(s)=V$ for some fixed vertex $V\in L$) is a simplicial map.

Theorem. The composition $gf:K\to M$ of two simplicial maps $f:K\to L$ and $g:L\to M$ is a simplicial map.

Exercise. Prove these theorems.

Exercise. Provide a simplicial map that “represents” the following map $f:{\bf S}^1\to {\bf T}^2$:

Exercise. Give the graph of a simplicial map a structure of a simplicial complex.

Exercise. Define cubical maps.

Chain maps of simplicial maps

We can now move on to algebra.

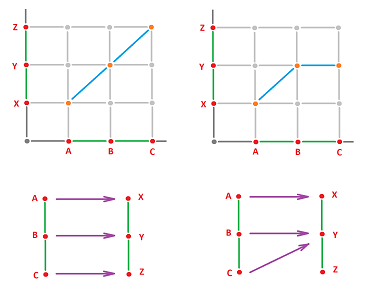

Example. We consider the maps of the two two-edge graphs given above: $$\begin{array}{lll} N_G=\{A,B,C\},& E_G=\{AB,BC\},\\ N_J=\{X,Y,Z\},& E_J=\{XY,YZ\}; \end{array}$$ and the two graph maps.

The key idea is to think of graph maps as functions on the generators of the chain groups: $$\begin{array}{lll} C_0(G)=< A,B,C >,& C_1(G)=< AB,BC >,\\ C_0(J)=< X,Y,Z >,& C_1(J)=< XY,YZ >. \end{array}$$ Let's express the values of the generators in $G$ under $f$ in terms of the generators in $J$.

The first function is given by $$\begin{array}{lllll} &f_N(A)=X,&f_N(B)=Y,&f_N(C)=Z,\\ &f_E(AB)=XY,&f_E(BC)=YZ.& \end{array}$$ It is then written coordinate-wise as follows: $$\begin{array}{lllll} &f_0\Big ([1,0,0]^T\Big)=[1,0,0]^T,&f_0\Big ([0,1,0]^T\Big)=[0,1,0]^T,&f_0\Big ([0,0,1]^T\Big)=[0,0,1]^T,\\ &f_1\Big ([1,0]^T\Big)=[1,0]^T,&f_1\Big ([0,1]^T\Big)=[0,1]^T. \end{array}$$ The second is given by $$\begin{array}{lllll} &f_N(A)=X, &f_N(B)=Y,&f_N(C)=Y,\\ &f_E(AB)=XY,&f_E(BC)=Y. \end{array}$$ It is written coordinate-wise as follows: $$\begin{array}{lllll} &f_0\Big([1,0,0]^T\Big)=[1,0,0]^T, &f_0\Big([0,1,0]^T\Big)=[0,1,0]^T, &f_0\Big([0,0,1]^T\Big)=[0,1,0]^T,\\ &f_1\Big([1,0]^T\Big)=[1,0]^T, &f_1\Big([0,1]^T\Big)=0. \end{array}$$ The very last item requires special attention: the collapsing of an edge in $G$ does not produce a corresponding edge in $J$. This is why we make an algebraic decision to assign it the zero value.

As always, it suffices to know the values of a function on the generators to recover the whole homomorphism. These two functions $f_N$ and $f_E$ generate two homomorphisms: $$f_0:C_0(G) \to C_0(J),\ f_1:C_1(G) \to C_1(J).$$ It follows that the first homomorphism is the identity and the second can be thought of as a projection. $\square$

Exercise. Prove the last statement.

Exercise. Find the matrices of $f_0,f_1$ in the last example.

Exercise. Find the matrices of $f_0,f_1$ for $f:G\to J$ given by $f_E(AB)=XY, \ f_E(BC)=XY$.

Suppose a simplicial map $$f:K \to L$$ between two oriented simplicial complexes is given. The map records where every simplex goes, but what about chains? We want now to define the corresponding linear operators: $$f_k:C_k(K) \to C_k(L),\ k=0,1, ....$$

Since the $k$-simplices of $K$ form a basis of the $k$-chain group $C_k(K)$, we only need to know the values of $f_k$ for these simplices. And we do, from $f$.

The only problem is that, while $s$ is a $k$-simplex in $K$, its image $f(s)$ might be a simplex in $L$ of a lower dimension. Therefore, if $s$ collapses, then $f(s) \not\in C_k(L)$, so that $f(s)$ appears to be undefined... To resolve this conundrum, even though $f(s)$ is not a $k$-simplex, can we think of it as a $k$-chain? There is only one legitimate choice for such a chain: it's $0$!

So, this is how we define the chain maps $f_0, ...,f_k, ...$ for $f$.

Definition. The $n$th chain map $f_n$ of a simplicial map $f$ is defined by its values on each $n$-simplex in $K$, $$s = A_0A_1 ... A_n,$$ where $A_0, A_1, ..., A_n$ are its vertices, as follows: $$f_n(s):=\begin{cases} f(A_0) ... f(A_n), & \text{ if } f(A_i) \ne f(A_j)\ \forall i\ne j; \\ 0, & \text{ otherwise.} \end{cases}$$

Thus, a simplex is cloned or it is taken to $0$.

Example. Let's consider these two complexes from the last subsection but first orient them, arbitrarily:

- $K=\{A, B, C, AB, CB, CA, ACB\}$,

- $L=\{P, Q, R, PQ, RQ\}$. $\\$

This is the simplicial map we considered above: $$f(A)=P,\ f(B)=Q,\ f(C)=Q.$$ These three identities immediately give us the three columns of the matrix of the linear operator $f_0:C_0(K)\to C_0(L)$, or $f_0:{\bf R}^3\to {\bf R}^3$: $$f_0=\left[ \begin{array}{cccccccccc} 1 &0 &0 \\ 0 &1 &1 \\ 0 &0 &0 \end{array} \right].$$ Further, the simplicial identities $$f(AB)=PQ,\ f(BC)=Q,\ f(CA)=PQ,$$ give us these chain identities: $$f_1(AB)=PQ,\ f_1(BC)=0,\ f_1(CA)=QP.$$ These three give us the three columns of the matrix of the linear map $f_1:C_1(K)\to C_1(L)$, or $f_1:{\bf R}^3\to {\bf R}^2$: $$f_1=\left[ \begin{array}{cccccccccc} 1 &0 &-1 \\ 0 &0 &0 \end{array} \right].$$ Finally, the simplicial identity $$f(ABC)=PQ$$ gives us the chain identity: $$f_2(ACB)=0.$$ Therefore, $$\hspace{.45in} f_2=0. \hspace{.45in}\square$$
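The matrices in this example can be produced from the vertex function alone. The following sketch writes an oriented simplex as a string of its vertices in the chosen order. The helpers `sign_of` and `chain_map_matrix` are hypothetical names, and the code assumes $f$ is a simplicial map, so that every non-collapsed image appears, up to orientation, in the basis of $L$.

```python
def sign_of(image, basis_simplex):
    """+1 or -1: the parity of the permutation taking basis_simplex to image."""
    positions = [basis_simplex.index(v) for v in image]
    inversions = sum(1 for i in range(len(positions))
                     for j in range(i + 1, len(positions))
                     if positions[i] > positions[j])
    return -1 if inversions % 2 else 1

def chain_map_matrix(f, basis_K, basis_L):
    """Matrix of f_n; columns follow basis_K, rows follow basis_L."""
    matrix = [[0] * len(basis_K) for _ in basis_L]
    for col, s in enumerate(basis_K):
        image = tuple(f[v] for v in s)
        if len(set(image)) < len(image):
            continue                   # the simplex collapses: its image is 0
        for row, t in enumerate(basis_L):
            if set(t) == set(image):
                matrix[row][col] = sign_of(image, t)
    return matrix

f = {"A": "P", "B": "Q", "C": "Q"}
f0 = chain_map_matrix(f, ["A", "B", "C"], ["P", "Q", "R"])
f1 = chain_map_matrix(f, ["AB", "CB", "CA"], ["PQ", "RQ"])
print(f0)
print(f1)
```

The entry $-1$ in the last column of `f1` comes from $f_1(CA)=QP=-PQ$.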

Exercise. Add a $2$-simplex to either of these two simplicial complexes, orient the new complexes, and then find the matrices of the chain maps of these two simplicial maps:

The definition of $f_n$ is simple enough, but we can make it even more compact if we observe that

- case 1: $f_n(s)=f(A_0) ... f(A_n)$, if $f(A_i) \ne f(A_j)$, for all $i\ne j$; $\\$

implies

- case 2: $f_n(s)=0$, if $f(A_i) = f(A_j)$, for some $i\ne j$. $\\$

The reason is that having two coinciding vertices in a simplex -- understood as a chain -- makes it equal to $0$. Indeed, when we flip them, the sign has to flip too; but it's the same simplex! $$\begin{array}{lll} B_i=B_j \Longrightarrow\\ B_0 ... B_i ... B_j ... B_n &= -B_0 ... B_j ... B_i ... B_n \\ &=-B_0 ... B_i ... B_j ... B_n. \end{array}$$ It must be zero then.

We amend the convention from the last subsection.

Notation: We allow repetition of vertices in the list that defines a simplex. But, a list of vertices $$s=A_0 ... A_n$$ is the $0$ chain unless there are exactly $n+1$ distinct vertices on the list.

Definition. The $n$-chain map $f_n:C_n(K) \to C_n(L)$ induced by a simplicial map $f:K \to L$ is defined by its values on the simplices, as follows: $$f_n(A_0 ... A_n):=f(A_0) ... f(A_n).$$

Theorem. The chain map induced by a simplicial isomorphism is an isomorphism. Moreover, the chain map induced by the identity simplicial map is the identity.

Theorem. The chain map induced by a simplicial constant map $C:K\to L$ with $K,L$ edge-connected is acyclic: $C_0=\operatorname{Id},\ C_k=0,\ \forall k>0$.

Theorem. The chain map induced by the composition $gf:K\to M$ of two simplicial maps $f:K\to L$ and $g:L\to M$ is the composition of the chain maps induced by these simplicial maps: $$(gf)_k=g_kf_k.$$

Exercise. Prove these theorems.

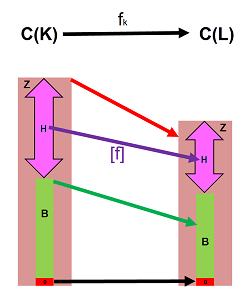

Since we have two simplicial complexes, there are two chain complexes: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \begin{array}{ccccccccccccc} ...& \to & C_{k+1}(K)& \ra{\partial_{k+1}} & C_{k}(K)& \ra{\partial_{k}} & C_{k-1}(K)& \to&...& \to & 0,\\ ...& \to & C_{k+1}(L)& \ra{\partial_{k+1}} & C_{k}(L)& \ra{\partial_{k}} & C_{k-1}(L)& \to&...& \to & 0. \end{array} $$ The chain maps connect these two chain complexes item by item, “vertically”, like this: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccc} ...& \to & C_{k+1}(K)& \ra{\partial_{k+1}} & C_{k}(K)& \ra{\partial_{k}} & C_{k-1}(K)& \to&...& \to & 0\\ ...& & \da{f_{k+1}}& & \da{f_{k}}& & \da{f_{k-1}}& &...& & \\ ...& \to & C_{k+1}(L)& \ra{\partial_{k+1}} & C_{k}(L)& \ra{\partial_{k}} & C_{k-1}(L)& \to&...& \to & 0. \end{array} $$ The arrows here are maps and paths of arrows are their compositions. The question is then, are the results “path-independent”? In other words, is the diagram commutative?

Exercise. Produce such a diagram for the map in the last example.

How chain maps interact with the boundary operators

We need to understand how the chain map of a simplicial map fits into the chain complexes.

Let's take another look at the “discrete continuity conditions”. No matter how compact these conditions are, one is forced to explain that the collapsed case appears to be an exception to the general case. To get around this inconvenience, let's find out what happens under $f$ to the boundary operator of the graph.

Example. We have two here: $$\begin{array}{lll} \partial _G: &C_1(G) \to C_0(G)\\ \partial _J: &C_1(J) \to C_0(J), \end{array}$$ one for each graph.

Now, we just use the fact that $$\partial (AB) = A + B,$$ and the continuity condition takes this form: $$\partial (f_1(AB))=f_0( \partial (AB)).$$ It applies, without change, to the case of a collapsed edge. Indeed, if $AB$ collapses to $X$, both sides are $0$: $$\begin{array}{llllll} &\partial (f_1(AB)) &=\partial (0) &=0;\\ &f_0( \partial (AB))&= f_0( A+B)=f_0(A)+f_0(B)=X+X &=0.&&\square \end{array}$$

There are two boundary operators to deal with for a given simplicial map $$f:K\to L,$$ for either of the complexes: $$\partial ^K_k: C_k(K) \to C_{k-1}(K)$$ and $$\partial ^L_k: C_k(L) \to C_{k-1}(L).$$ (Both the subscripts and the superscripts may be omitted.) Along with the chain maps of $f$, the boundary operators can be combined in a single diagram: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccccccccc} C_k(K) & \ra{f_k} & C_k(L) \\ \da{\partial ^K_k} & \searrow & \da{\partial ^L_k} \\ C_{k-1}(K) & \ra{f_{k-1}} & C_{k-1}(L) \end{array} $$ As we shall see, the diagram is commutative. The familiar and wonderfully compact way to present this condition is below: $$\begin{array}{|c|} \hline \\ \quad \partial f=f \partial \quad \\ \\ \hline \end{array} $$

Definition. A chain map is any collection of homomorphisms between the elements of any two chain complexes that satisfies the above condition.

This behavior is expected from simplicial maps. After all, when a simplex is cloned, so is its boundary, face by face:

It is the case of collapse that needs special attention. We will prove the general case.

For the proof we will need the following two facts. Both boundary operators are defined by the same formula for $k=0,1, ...$: $$\partial_k( A_0 ... A_k ) := \displaystyle\sum_{i=0}^k(-1)^i A_0 ... [A_i] ... A_k. \quad\quad\text{(1)}$$ We will also need the definition of the chain map of a simplicial map: $$f_{k} (A_0 ... A_k):= f(A_0) ... f(A_i) ... f(A_k).\quad\quad \text{(2)}$$

Theorem (Algebraic Continuity Condition). If $f:K\to L$ is a simplicial map, then its chain maps satisfy for $k=1,2, ...$: $$\partial^L_k f_k = f_{k-1} \partial^K_k.$$

Proof. The proof is by examination. To prove the identity, we evaluate its left-hand side and its right-hand side for $$s = A_0A_1 ... A_k \in C_k(K).$$

First, the right-hand side. We use (1), $$\begin{array}{lll} f_{k-1}( \partial_k(s)) &= f_{k-1} \Big( \displaystyle\sum_{i=0}^k (-1)^i A_0 ... [A_i] ... A_k \Big), &\text{ then by linearity} \\ &= \displaystyle\sum_{i=0}^k (-1)^i f_{k-1} (A_0 ... [A_i] ... A_k), &\text{ then by (2)} \\ &= \displaystyle\sum_{i=0}^k (-1)^i f(A_0) ... [f(A_i)] ... f(A_k). \end{array}$$

Next, the left-hand side. We use (2), $$\begin{array}{lll} \partial_k(f_k(s)) &= \partial_k(f(A_0) ... f(A_k)), &\text{ then by (1) } \\ &= \displaystyle\sum_{i=0}^k (-1)^i f(A_0) ... [f(A_i)] ... f(A_k). \end{array}$$

Now, as we have proven the identity for all basis elements (the simplices) of the vector space $C_k(K)$, the two linear operators coincide. $\blacksquare$
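As a sanity check, the identity $\partial^L_1 f_1 = f_0 \partial^K_1$ can be verified with matrices for the oriented complexes $K=\{A, B, C, AB, CB, CA, ACB\}$ and $L=\{P, Q, R, PQ, RQ\}$ and the map $f(A)=P,\ f(B)=Q,\ f(C)=Q$ considered earlier. The boundary matrices below are written out by hand from $\partial(XY)=Y-X$; the helper `matmul` is a hypothetical name.

```python
def matmul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

# Boundary operators; the boundary of XY is Y - X.
d_K = [[-1, 0, 1],     # rows: A, B, C; columns: AB, CB, CA
       [1, 1, 0],
       [0, -1, -1]]
d_L = [[-1, 0],        # rows: P, Q, R; columns: PQ, RQ
       [1, 1],
       [0, -1]]

# Chain maps of f(A) = P, f(B) = Q, f(C) = Q.
f0 = [[1, 0, 0], [0, 1, 1], [0, 0, 0]]
f1 = [[1, 0, -1], [0, 0, 0]]

left = matmul(d_L, f1)     # boundary after f
right = matmul(f0, d_K)    # f after boundary
print(left == right)
```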

Corollary. The theorem holds for chains over ${\bf Z}_2$.

Exercise. Prove the corollary (a) by rewriting the above proof, (b) directly from the theorem. Hint: think of an appropriate function ${\bf Z}\to {\bf Z}_2$.

Below are the main theorems of this theory.

Theorem. Transitioning to chain maps preserves the identity: $$(\operatorname{Id}_G)_0={\rm Id}_{C_0(G)},\ ({\rm Id}_G)_1={\rm Id}_{C_1(G)}.$$

Theorem. Transitioning to chain maps preserves the compositions: $$(fg)_0=f_0g_0,\ (fg)_1=f_1g_1.$$

Theorem. Transitioning to chain maps preserves the inverses: $$(f^{-1})_0=f_0^{-1},\ (f^{-1})_1=f_1^{-1}.$$

Exercise. Prove these three theorems.

We will use the algebraic continuity condition: $\partial f = f \partial$. In particular, both squares of this diagram are commutative: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccc} C_{k+1}(K)& \ra{\partial_{k+1}} & C_{k}(K)& \ra{\partial_{k}} & C_{k-1}(K)\\ \da{f_{k+1}}& & \da{f_{k}}& & \da{f_{k-1}}\\ C_{k+1}(L)& \ra{\partial_{k+1}} & C_{k}(L)& \ra{\partial_{k}} & C_{k-1}(L) \end{array} $$

Corollary. Chain maps take cycles to cycles: $$f_k(Z_k(K) ) \subset Z_k(L).$$

Proof. Suppose $x \in Z_k(K)$, then $\partial_k(x) = 0$. Then we trace $x$ in the right square of the diagram: $$\begin{array}{ll} \partial_k f_k(x) &= f_{k-1} \partial_k (x) \\ &= f_{k-1}(0) \\ &= 0. \end{array}$$ Hence $f_k(x)$ is a cycle too. $\blacksquare$

Corollary. Chain maps take boundaries to boundaries: $$f_k( B_k(K) ) \subset B_k(L).$$

Proof. Suppose $x \in B_k(K),$ then $x = \partial_{k+1}(u)$ for some $u \in C_{k+1}(K)$. Then we trace $x$ back in the left square of the diagram: $$\begin{array}{llll} f_k(x) &= f_k \partial_{k+1}(u) \\ &= \partial_{k+1}f_{k+1}(u). \end{array}$$ Hence $f_k(x)$ is a boundary too. $\blacksquare$

The two results are summarized below:

Thus, we are able to restrict $f_k$ to cycles: $$f_k : Z_k(K) \to Z_k(L),$$ or to boundaries: $$f_k : B_k(K) \to B_k(L).$$

Computing chain maps

Even with the most trivial examples we should pretend that we don't know the answer...

Example (segment). We start with these simplicial complexes (graphs): $$G=\{A,B\},\ H=\{A,B,AB\}.$$ Let's consider the inclusion of $G$ into $H$: $$f(A)=A,\ f(B)=B.$$

Then on the chain level we have: $$\begin{array}{llllll} C_0(G)=< A,B >,& C_0(H)= < A,B >,\\ f_0(A)=A,& f_0(B)=B & \Longrightarrow f_0=\operatorname{Id};\\ C_1(G)=0,& C_1(H)= < AB >\\ &&\Longrightarrow f_1=0. \end{array} $$ Meanwhile, $\partial _1=0$ for $G$ (as $C_1(G)=0$), and for $H$ we have: $$\partial _1=[-1, 1]^T.$$ $\square$

Exercise. Modify the computation for the case when there is no $AB$.

Example (two segments). Given these two two-edge simplicial complexes:

- 1. $K=\{A,B,C,AB,BC\}$,

- 2. $L=\{X,Y,Z,XY,YZ\}$. $\\$

Consider these simplicial maps:

- 1. $f(A)=X,\ f(B)=Y,\ f(C)=Z,\ f(AB)=XY,\ f(BC)=YZ$;

- 2. $f(A)=X,\ f(B)=Y,\ f(C)=Y,\ f(AB)=XY,\ f(BC)=Y$. $\\$

They are given below:

We think of these identities as functions evaluated on the generators of the two chain groups:

- 1. $C_0(K)=< A,B,C >,\ C_1(K)=< AB,BC >$;

- 2. $C_0(L)=< X,Y,Z >,\ C_1(L)=< XY,YZ >$. $\\$

These functions induce these two linear operators: $$f_0:C_0(K) \to C_0(L),\ f_1:C_1(K) \to C_1(L),$$ as follows.

The first function gives these values:

- $f_0(A)=X,\ f_0(B)=Y,\ f_0(C)=Z,$

- $f_1(AB)=XY,\ f_1(BC)=YZ$. $\\$

Those, written coordinate-wise, produce the columns of its matrices: $$f_0=\left[\begin{array}{ccccc} 1&0&0\\ 0&1&0\\ 0&0&1 \end{array} \right] =\operatorname{Id},\ f_1=\left[\begin{array}{ccccc} 1&0\\ 0&1 \end{array} \right] =\operatorname{Id}.$$ The second produces:

- $f_0(A)=X,\ f_0(B)=Y,\ f_0(C)=Y,$

- $f_1(AB)=XY,\ f_1(BC)=0$, $\\$

which leads to: $$f_0=\left[\begin{array}{ccccc} 1&0&0\\ 0&1&1\\ 0&0&0 \end{array} \right],\ f_1=\left[\begin{array}{ccccc} 1&0\\ 0&0 \end{array} \right].$$

The first linear operator is the identity and the second can be thought of as a projection. $\square$

Exercise. Modify the computation for the case of an increasing and then decreasing function.

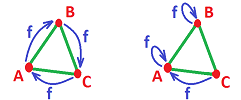

Example (hollow triangle). Suppose: $$G=H:=\{A,B,C,AB,BC,CA\},$$ and $$f(A)=B,\ f(B)=C,\ f(C)=A.$$

Here is a rotated triangle:

The homology maps are computed as follows: $$Z_1(G)=Z_1(H)=< AB+BC+CA >.$$ Now, $$f_1(AB+BC+CA)=f_1(AB)+f_1(BC)+f_1(CA)=BC+CA+AB=AB+BC+CA.$$ Therefore, $f_1:Z_1(G)\to Z_1(H)$ is the identity and so is the homology map $[f_1]:H_1(G)\to H_1(H)$. Conclusion: the hole is preserved.

Alternatively, we collapse the triangle onto one of its edges: $$f(A)=A,\ f(B)=B,\ f(C)=A.$$ Then $$f_1(AB+BC+CA)=f_1(AB)+f_1(BC)+f_1(CA)=AB+BA+0=0.$$ So, the homology map $[f_1]:H_1(G)\to H_1(H)$ is zero: the hole is not preserved. $\square$
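The two computations above can be reproduced from the vertex maps alone. This is only a sketch under stated assumptions: the names `BASIS`, `edge_image` and `image_of_cycle` are hypothetical, and an edge written in the reversed order counts as the negative of the basis edge ($BA=-AB$), as in the computation above.

```python
BASIS = [("A", "B"), ("B", "C"), ("C", "A")]  # oriented edges AB, BC, CA

def edge_image(edge, vertex_map):
    """Image of an oriented edge as a coefficient vector over BASIS."""
    u, v = vertex_map[edge[0]], vertex_map[edge[1]]
    coeffs = [0] * len(BASIS)
    if u == v:                      # the edge is collapsed to a vertex
        return coeffs
    if (u, v) in BASIS:             # cloned with the same orientation
        coeffs[BASIS.index((u, v))] = 1
    else:                           # cloned with the opposite orientation
        coeffs[BASIS.index((v, u))] = -1
    return coeffs

def image_of_cycle(vertex_map):
    """Apply f_1 to the cycle AB + BC + CA and add up the results."""
    images = [edge_image(e, vertex_map) for e in BASIS]
    return [sum(column) for column in zip(*images)]

rotation = {"A": "B", "B": "C", "C": "A"}
collapse = {"A": "A", "B": "B", "C": "A"}
print(image_of_cycle(rotation))  # coefficients of AB, BC, CA
print(image_of_cycle(collapse))
```

The rotation returns the generating cycle itself, while the collapse returns the zero chain.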

Exercise. Modify the computation for the case (a) a reflection and (b) a collapse to a vertex.

Exercise. Suppose a map collapses all edges. Find its chain map.

Exercise. Find the matrix of the chain map of a graph map that shifts by one edge a graph of $n$ edges arranged in (a) a string, (b) a circle.

Exercise. Find the matrix of the chain map of a graph map that folds in half a graph of $2n$ edges arranged in (a) a string, (b) a circle, (c) a figure eight; also (d) for a figure eight with $4n$ edges -- the other fold.

Exercise. Find the matrix of the chain map of a graph map that flips a graph of $2n$ edges arranged in (a) a string, (b) a circle, (c) a figure eight; also (d) for a figure eight with $4n$ edges -- the other flip.

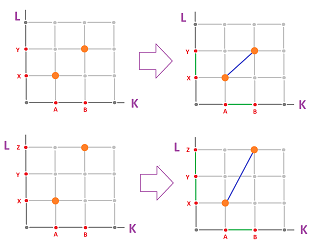

The definition

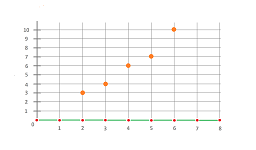

A discrete function is also any function $$f:{\bf Z} \to {\bf Z}.$$

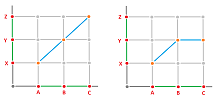

In other words, this is a map of nodes on ${\mathbb R}$. Furthermore, the edges in the $x$-axis may be taken to chains of edges in the $y$-axis:

Here $K$ and $L$ are subcomplexes of ${\mathbb R}_x$ and ${\mathbb R}_y$ respectively. Algebraically, we have:

- $f(AB)=XY$, or

- $f(AB)=XY+YZ$.

The former gives us a cell map $f:K\to L$ and the latter a chain map $f:C(K)\to C(L)$ that doesn't come from a cell map.
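To see why the second assignment is still compatible with the boundaries, assume (the values on the nodes are not stated above) that $f_0(A)=X$ and $f_0(B)=Z$, and that edges are oriented so that $\partial(AB)=B-A$. Then the boundary of the image chain is the image of the boundary: $$\partial f_1(AB)=\partial(XY+YZ)=(Y-X)+(Z-Y)=Z-X=f_0(B)-f_0(A)=f_0\partial(AB).$$ The intermediate node $Y$ cancels, which is exactly what lets an edge be stretched over a chain of edges.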

For a simplicial map, we have defined its chain maps as the homomorphisms between the chain groups of the complexes. At this point, we can ignore the origin of these new maps and study chain maps in a purely algebraic manner.

First, we suppose that we have two chain complexes, i.e., combinations of modules and homomorphisms between these modules, called the boundary operators: $$\begin{array}{llll} M:=&\{M_i,\partial^M_i:& i=0,1,2, ...\},\\ N:=&\{N_i,\partial^N_i:& i=0,1,2, ...\}, \end{array}$$ with $$M_{-1}=N_{-1}=0.$$ As chain complexes, they are to satisfy the “double boundary identity”: $$\begin{array}{llll} \partial^M_i \partial^M_{i+1} =0,& i=0,1,2, ...;\\ \partial^N_i \partial^N_{i+1} =0,& i=0,1,2, .... \end{array}$$ The compact form of this condition is, for both: $$\partial\partial =0.$$
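As a quick illustration of the double boundary identity, here is a minimal sketch in plain Python. The complex is a hypothetical one chosen for the purpose, a filled triangle with vertices $A,B,C$, edges $AB,BC,CA$, and a single face whose boundary is $AB+BC+CA$; the helper `matmul` and the names `d1`, `d2` are also assumptions.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

# Columns of d1 are the boundaries of AB, BC, CA (assumed d(AB) = B - A).
d1 = [[-1, 0, 1],    # row of A
      [1, -1, 0],    # row of B
      [0, 1, -1]]    # row of C

# The single face has boundary AB + BC + CA.
d2 = [[1],
      [1],
      [1]]

dd = matmul(d1, d2)  # the composition d1 * d2 should vanish
print(dd)
```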

Definition. A chain map $f:M\to N$ is a combination of homomorphisms between the corresponding items of the two chain complexes: $$f=\{f_i:\ M_i\to N_i:\ i=0,1, ...\},$$ that satisfies the “algebraic continuity condition”: $$\partial^N_i f_i = f_{i-1} \partial^M_i,\ i=0,1, ....$$

The compact form of this condition is: $$f\partial =\partial f.$$ In other words, the diagram commutes: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{ccccccccccccccccccc} M_{i+1}& \ra{\partial^M_{i+1}} & M_i\\ \da{f_{i+1}}& \searrow & \da{f_i}\\ N_{i+1}& \ra{\partial^N_{i+1}} & N_i \end{array} $$

This combination of modules and homomorphisms forms a diagram with the two chain complexes occupying the two rows and the chain map connecting them by the vertical arrows, item by item: $$ \newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccccccccccccccccccc} ...& \to & M_{k+1}& \ra{\partial^M_{k+1}} & M_{k}& \ra{\partial^M_{k}} & M_{k-1}& \to &...& \to & 0\\ ...& & \da{f_{k+1}}& & \da{f_{k}}& & \da{f_{k-1}}& &...& & \\ ...& \to & N_{k+1}& \ra{\partial^N_{k+1}} & N_{k}& \ra{\partial^N_{k}} & N_{k-1}& \to &...& \to & 0 \end{array} $$ Each square commutes.
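When the modules are finitely generated and free, each square can be tested by comparing two matrix products. The following is a minimal sketch, not a definitive implementation: `matmul` and `is_chain_map` are hypothetical helpers, the complexes are stored as dictionaries from degree to matrix, and the sample data is the rotated hollow triangle from earlier with bases $(A,B,C)$ and $(AB,BC,CA)$.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

def is_chain_map(f, dM, dN):
    """Check dN[i] * f[i] == f[i-1] * dM[i] for every degree i listed in dM.

    Only degrees i >= 1 are expected in dM and dN; the square at degree 0
    maps into the zero module and commutes automatically.
    """
    return all(matmul(dN[i], f[i]) == matmul(f[i - 1], dM[i]) for i in dM)

# Hollow triangle: columns of d[1] are the boundaries of AB, BC, CA.
d = {1: [[-1, 0, 1], [1, -1, 0], [0, 1, -1]]}
shift = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]      # A->B, B->C, C->A; same pattern on edges
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]

rotation_ok = is_chain_map({0: shift, 1: shift}, d, d)
mismatch_ok = is_chain_map({0: identity, 1: shift}, d, d)
print(rotation_ok, mismatch_ok)
```

The second call pairs the identity on vertices with the shift on edges. That combination does not commute with the boundary, so it is not a chain map even though each component is a homomorphism.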

Example. One of the simplest examples of a chain map is multiplication by an arbitrary $r\in R$ when the chain complexes are identical: $$\newcommand{\ra}[1]{\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} \newcommand{\la}[1]{\!\!\!\!\!\xleftarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\ua}[1]{\left\uparrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{cccccccccccccccccccc} ...& \to & M_{k+1}& \ra{\partial^M_{k+1}} & M_{k}& \ra{\partial^M_{k}} & M_{k-1}& \to &...& \to & 0\\ ...& & \da{\times r}& & \da{\times r}& & \da{\times r}& &...& & \\ ...& \to & M_{k+1}& \ra{\partial^M_{k+1}} & M_{k}& \ra{\partial^M_{k}} & M_{k-1}& \to &...& \to & 0 \end{array} $$ $\square$

Examples of chain maps

Definition. Given a map of cells $f: |K| \to |L|$, the $k$th chain map generated by map $f$, $$f_k: C_k(K) \to C_k(L),$$ is defined on the generators as follows. For each $k$-cell $s$ in $K$,

- 1. if $s$ is cloned by $f$, define $f_k(s) := \pm f(s)$, with the sign determined by the orientation of the cell $f(s)$ in $L$ induced by $f$;

- 2. if $s$ is collapsed by $f$, define $f_k(s) := 0$. $\\$

Also, $$f_{\Delta} = \{f_i:i=0,1, ... \}:C(K)\to C(L)$$ is the (total) chain map generated by $f$.

In items 1 and 2 of the definition, the left-hand sides are the values of $s$ under $f_k$, while the right-hand sides are the images of $s$ under $f$.
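The definition translates directly into a recipe for the matrix of $f_k$: one column per $k$-cell of $K$, with a single entry $\pm 1$ if the cell is cloned and a zero column if it is collapsed. Here is a minimal sketch, assuming the cell map is supplied as a table. The function `chain_matrix` and its input format are hypothetical, and the data is the second map of the two-segment example.

```python
def chain_matrix(domain, codomain, images):
    """Matrix of f_k: one column per domain cell, one row per codomain cell.

    images[s] is a pair (sign, cell) if s is cloned, or None if s is collapsed.
    """
    matrix = [[0] * len(domain) for _ in codomain]
    for j, s in enumerate(domain):
        if images[s] is not None:
            sign, target = images[s]
            matrix[codomain.index(target)][j] = sign
    return matrix

# Vertices: every 0-cell is cloned (a vertex can only go to a vertex).
f0 = chain_matrix(["A", "B", "C"], ["X", "Y", "Z"],
                  {"A": (1, "X"), "B": (1, "Y"), "C": (1, "Y")})

# Edges: AB is cloned onto XY, while BC is collapsed onto the vertex Y.
f1 = chain_matrix(["AB", "BC"], ["XY", "YZ"],
                  {"AB": (1, "XY"), "BC": None})
print(f0)
print(f1)
```

The output should agree with the matrices found for that map in the two-segment example.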

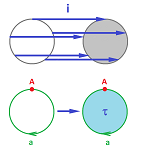

Example (inclusion). As a quick example, consider the inclusion $f$ of the circle into the disk as its boundary:

Here we have: $$f: K = {\bf S}^1 \to L = {\bf B}^2 .$$ After representing the spaces by their cell complexes, we examine to what cell in $L$ each cell in $K$ is taken by $f$:

- $f(A) = A,$

- $f(a) = a.$ $\\$

Further, we compute the chain maps $$f_i: C_i(K) \to C_i(L),\ i=0,1,$$ as linear operators by defining them on the generators of these vector spaces:

- $f_0(A) = A,$

- $f_1(a) = a.$ $\\$

$\square$

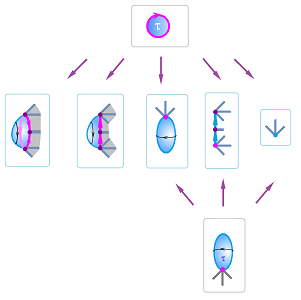

We take the lead from simplicial maps: every $n$-cell $s$ is either cloned, $f(s) \approx s$, or collapsed, $f(s)$ is a $k$-cell with $k < n$.

For general cells:

The illustration of this construction for dimension $2$ also shows that, in contrast to the simplicial case, a collapsed cell may be stretched over several lower dimensional cells:

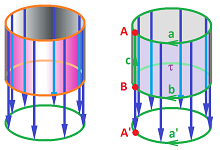

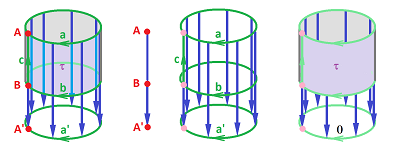

Example (projection of the cylinder on the circle). Let's consider the projection of the cylinder on the circle:

We have:

- $f(A) = f(B) = A'$, cloned;

- $f(a) = f(b) = a'$, cloned;

- $f(c) = A'$, collapsed;

- $f(\tau) = a'$, collapsed.

From the images of the cells under $f$ listed above, we conclude:

- $f_0(A) = f_0(B) = A';$

- $f_1(a) = f_1(b) = a';\ f_1(c) = 0;$

- $f_2(\tau) = 0.$

Now we need to work out the operators:

- $f_0:\ C_0(K) = < A, B > \to C_0(L) = < A' >;$

- $f_1:\ C_1(K) = < a, b, c >\to C_1(L) = < a' >;$

- $f_2:\ C_2(K) = < \tau > \to C_2(L) = 0.$

From the values of the operators on the basis elements, we conclude:

- $f_0 = [1, 1];$

- $f_1 = [1, 1, 0];$

- $f_2 = 0.$

Next, the diagram has to commute: $$ \newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{c|cccccccccccccc} & 0 & \ra{\partial_3} & C_2 & \ra{\partial_2} & C_1 & \ra{\partial_1} & C_0 & \ra{\partial_0} &0 \\ \hline C(K): & 0 & \ra{0} & {\bf R} & \ra{\partial_2} & {\bf R}^3 & \ra{\partial_1} & {\bf R}^2 & \ra{0} &0 \\ f_{\Delta}: &\ \ \da{0} & &\ \ \ \da{f_2} & & \ \da{f_1} & & \ \da{f_0} & & \ \da{0}\\ C(L): & 0 & \ra{0} & 0 & \ra{\partial_2} & {\bf R} & \ra{\partial_1} & {\bf R} & \ra{0} &0 \\ \end{array} $$ Let's verify that the identity is satisfied in each square above. First we list the boundary operators for the two chain complexes: $$\begin{array}{llllllllll} \partial^K_2(\tau)=a-b, &\partial^K_1(a)=\partial^K_1(b)=0, \partial^K_1(c)=B-A, &\partial^K_0(A)=\partial^K_0(B)=0;\\ \partial^L_2=0, &\partial^L_1(a')=0, &\partial^L_0(A')=0. \end{array}$$ Now we go through the diagram from left to right. $$\begin{array}{l|l|l|l} \partial_2f_2(\tau) = f_1\partial_2(\tau)&{\partial}_1f_1(a) = f_0{\partial}_1(a)&\partial_1f_1(b) = f_0\partial_1(b)&{\partial}_1f_1(c) = f_0{\partial}_1(c)\\ \partial_2(0) = f_1(a - b) &{\partial}_1(a') = f_0(0)&\partial_1(a') = f_0(0)&{\partial}_1(0) = f_0(B - A) = A' - A'\\ 0 = a' - a' = 0, {\rm \hspace{3pt} OK}&0 = 0, {\rm \hspace{3pt} OK}&0 = 0, {\rm \hspace{3pt} OK}&0 = 0, {\rm \hspace{3pt} OK} \end{array}$$ So, $f_{\Delta}$ is indeed a chain map.

The full diagram is: $$\newcommand{\ra}[1]{\!\!\!\!\!\!\!\xrightarrow{\quad#1\quad}\!\!\!\!\!} \newcommand{\da}[1]{\left\downarrow{\scriptstyle#1}\vphantom{\displaystyle\int_0^1}\right.} % \begin{array}{c|cccccccccc} & 0 & \ra{\partial_3} & C_2 & \ra{\partial_2} & C_1 & \ra{\partial_1} & C_0 & \ra{\partial_0} &0 \\ \hline M: & 0 & \ra{0} & < \tau > & \ra{[1,-1,0]^T} & < a, b, c > & \ra{{\tiny \left[\begin{array}{ccc}0&0&-1\\0&0&1\end{array}\right]}} & < A,B > & \ra{0} &0 \\ f_{\Delta}: &\ \da{0} & &\ \ \da{0} & &\quad\quad \da{[1,1,0]} & &\ \quad\da{[1,1]} & &\ \ \da{0}\\ N: & 0 & \ra{0} & 0 & \ra{0} & < a' > & \ra{0} & < A' > & \ra{0} & 0 \end{array} $$ $\square$
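The same verification can be carried out with the matrices read off the full diagram. This is a minimal sketch in plain Python. The helper `matmul` and the variable names are hypothetical, and the bases are taken in the order $(\tau)$, $(a,b,c)$, $(A,B)$ for the cylinder and $(a')$, $(A')$ for the circle.

```python
def matmul(P, Q):
    """Multiply two matrices given as lists of rows."""
    return [[sum(P[i][k] * Q[k][j] for k in range(len(Q)))
             for j in range(len(Q[0]))] for i in range(len(P))]

# Boundary operators of the cylinder and of the circle, as in the diagram.
d2_K = [[1], [-1], [0]]
d1_K = [[0, 0, -1], [0, 0, 1]]
d1_L = [[0]]

# Chain map components; f2 is zero because C_2 of the circle is trivial.
f1 = [[1, 1, 0]]
f0 = [[1, 1]]

# Square at C_2: with C_2(L) = 0 the condition reduces to f1 * d2_K = 0.
left_square = matmul(f1, d2_K)

# Square at C_1: d1_L * f1 must equal f0 * d1_K.
right_square = (matmul(d1_L, f1), matmul(f0, d1_K))
print(left_square, right_square)
```

Both squares reduce to zero matrices, in agreement with the cell-by-cell check.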

Exercise. Represent the cylinder as a complex with two $2$-cells, find an appropriate cell complex for the circle and an appropriate cell map for the projection, compute the chain map, and confirm that the diagram commutes.

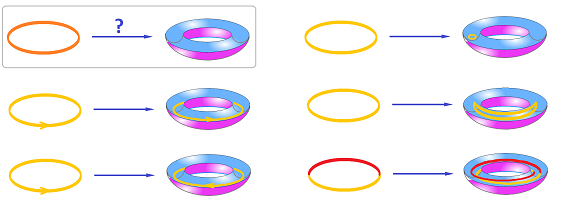

Let's consider maps of the circle to itself. Such a map can be thought of as a circular rope, $X$, being fitted into a circular groove, $Y$. You can carefully transport the rope to the groove without disturbing its shape to get the identity map, or you can compress it into a tight knot to get the constant map, etc.:

To build chain map representations for these maps, we use the following cells for the circles:

Example (constant). If $f: X \to Y$ is a constant map, all $k$-chains of $X$ with $k>0$ collapse or, algebraically speaking, are mapped to $0$ by $f_{\Delta}$. Meanwhile, all $0$-cells of $X$ are mapped to the same $0$-cell of $Y$. $\square$

Example (identity). If $f$ is the identity map, we have $$\begin{array}{llllll} &f_{\Delta} (A) = A', \\ \hspace{.39in}&f_{\Delta} (a) = a'.&\hspace{.39in}\square \end{array}$$

Example (flip). Suppose $f$ is a flip (a reflection about the $y$-axis) of the circle. Then $$\hspace{.42in} f_1(a) = -a'. \hspace{.42in}\square$$

Example (turn). You can also turn (or rotate) the rope before placing it into the groove. The resulting map is very similar to the identity regardless of the degree of the turn. Indeed, we have: $$f_*(a) = a'.$$ Even though the map is simple, it is in general not a map of cells (the vertex $A$ need not land on $A'$), so the definition above does not directly generate a chain map for it! $\square$

Example (wrap). If you wind the rope twice before placing it into the groove, you get: $$f_*(a) = 2a'.$$ This isn't a map of cells but it is a chain map! $\square$
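The claim that the wrap gives a chain map can be checked in one line. Assume, as in the identity example, that each circle is built from one vertex and one edge, so that $\partial_1(a)=A-A=0$ and $\partial_1(a')=A'-A'=0$, and write $f_0(A)=A'$, $f_1(a)=2a'$ for the components in degrees $0$ and $1$. Then $$\partial_1 f_1(a)=\partial_1(2a')=2\cdot 0=0=f_0(0)=f_0\partial_1(a).$$ Under these assumptions the same computation goes through with $2$ replaced by any integer $n$.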

Example (inclusion). In all the examples above we have $$f_*(A) = A'.$$ Let's consider an example that illustrates what else can happen to $0$-classes. Consider the inclusion of the two endpoints of a segment into the segment. Then $f : X = \{A, B\} \to Y = [A,B]$ is given by $f(A) = A,\ f(B) = B$. Now, even though $A$ and $B$ aren't homologous in $X$, their images under $f$ are, in $Y$. So, $f(A) \sim f(B)$. In other words, $$f_*([A]) = f_*([B]) = [A] = [B].$$ Algebraically, $[A] - [B]$ is mapped to $0$. $\square$
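On the chain level, the last statement can be spelled out as follows. Assume the segment $Y$ is represented by a single edge $AB$ oriented so that $\partial_1(AB)=B-A$. Then $$f_0(A-B)=A-B=-\partial_1(AB),$$ so the image of $A-B$ is a boundary in $Y$ and its homology class is $0$. In $X$ there are no edges, so $A-B$ is not a boundary there.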

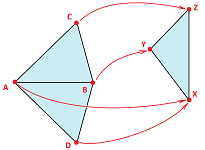

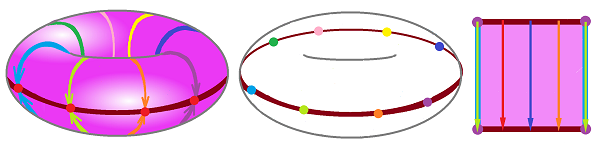

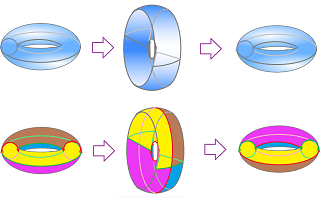

Example (collapse). A more advanced example of collapse is that of the torus to a circle, $f: X = {\bf T}^2 \to Y = {\bf S}^1$.

We choose one longitude $L$ (in red) and then move every point of the torus to its nearest point on $L$. $\square$

Example (inversion). And here's turning the torus inside out:

$\square$

Exercise. Consider the following self-maps of the torus ${\bf T}^2$:

- (a) collapsing it to a meridian,

- (b) collapsing it to the equator (above),

- (c) collapsing a meridian to a point,

- (d) gluing the outer equator to the inner equator,

- (e) turning it inside out. $\\$

For each of those, describe the cell structure and compute the chain maps of this map.

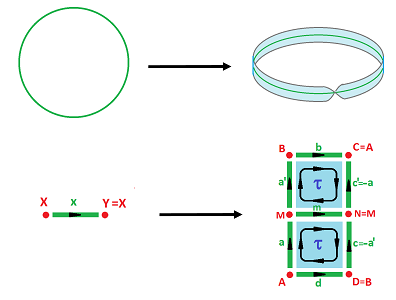

Example (embedding). We consider maps from the circle to the Möbius band: $$f:{\bf S}^1 \to {\bf M}^2.$$ First, we map the circle to the median circle of the band:

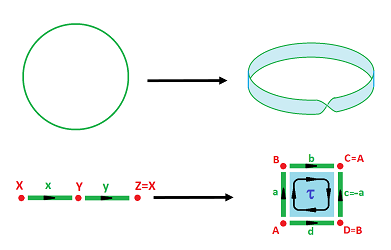

As you can see, we are forced to subdivide the band's square into two to turn this map into a cell map. Then we have: $$f(X)=f(Y)=M \text{ and } f(x)=m.$$ Second, we map the circle to the edge of the band:

As you can see, we have to subdivide the circle's edge into two edges to turn this map into a map of cells. Then we have: $$f(X)=B,\ f(Y)=A \text{ and } f(x)=b,\ f(y)=d.$$ $\square$

Exercise. (a) Provide details of the computations in the last example. (b) What if there was no subdivision?

Exercise. Consider a few possible maps for each of these and compute their chain maps:

- embeddings of the circle into the torus;

- self-maps of the figure eight;

- embeddings of the circle into the sphere.